LLMs Make You Dumb

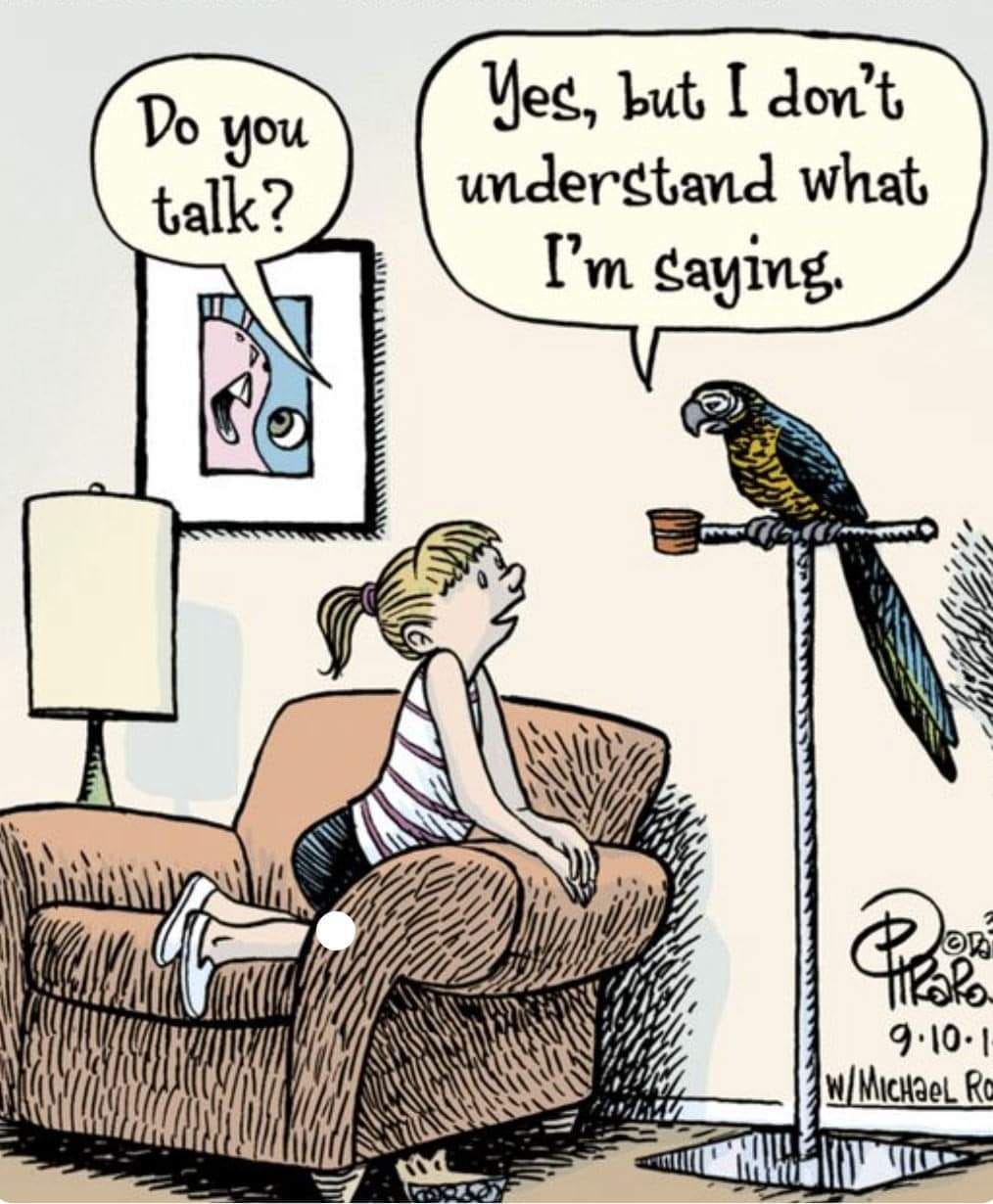

How LLMs Work: From Neural Networks to Real-World Uses

If we hook these LLMs up to systems that have agency (the power to send out instructions over the internet and to influence actual human beings), we will start to have real problems. The fundamental problem is that LLMs are dumb. Because they do not know how words relate to the real world, they cannot judge the reliability of conflicting assertions, and they are ill-equipped to seek out relevant details or gauge uncertainty.

Knowledge Graphs Are Making LLMs Less Dumb

A new study has found that people who use LLMs like ChatGPT to write essays show lower brain activity than people who write similar essays using only their own thoughts. That is not unexpected. The idea is that LLMs perform brilliantly out of the box because their training data is fresh and their knowledge cutoff is recent. However, as they encounter new real-world scenarios, their performance degrades, because they aren't actually good at solving novel problems. And because LLMs are used for tasks that can't be done with traditional algorithms, it's essentially impossible to automate the detection of hallucinations.

TL;DR: this is an anti-hype take in which you'll learn how LLMs work, why they're dumb, and why they're very useful anyway, especially with a human in the loop.

LLMs Are Still Evolving, Not a DevOps Engineer

Those who used LLMs to complete the task experienced a 32% lower cognitive load than those who used traditional software interfaces. They also reported less frustration during the process. As these tools become more integrated into our daily routines, a critical question emerges: are LLMs making us lazier and dumber, or are they freeing us to think differently?

And yet I still see a lot of people, especially in academia, making fun of large language models and their users, saying the models are unreliable or dumb. I don't think that's true at all.

The AI world has exploded in the last five years, largely thanks to large language models (LLMs). These neural networks, fed with mountains of text, can whip up coherent articles, translate languages, and even crank out computer code.
