LLM System Using Vector DB and Proprietary Data (Traffine I/O)

This article explains how to build a large language model (LLM) system that incorporates your own proprietary data. Vector databases enhance LLMs by supplying contextual, domain-specific knowledge beyond their training data. This integration addresses key LLM limitations such as hallucinations and outdated information by enabling retrieval-augmented generation (RAG): relevant context is retrieved before the response is generated.
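The retrieve-then-generate flow described above can be sketched in a few lines. This is a minimal illustration, not the article's implementation: the `embed` function is a toy bag-of-words stand-in for a real embedding model, the `DOCS` list stands in for a vector database, and the names `retrieve` and `build_prompt` are hypothetical. The final LLM call is left out because the source names no specific model or API.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding (assumption): term counts instead of a learned vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, documents: list[str], k: int = 2) -> list[str]:
    """Return the k documents most similar to the query."""
    q = embed(query)
    ranked = sorted(documents, key=lambda d: cosine(q, embed(d)), reverse=True)
    return ranked[:k]


def build_prompt(query: str, context: list[str]) -> str:
    """Place the retrieved context ahead of the question, as RAG does."""
    joined = "\n".join(f"- {c}" for c in context)
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{joined}\n\n"
        f"Question: {query}"
    )


# Hypothetical proprietary documents, invented for illustration.
DOCS = [
    "The refund policy allows returns within 30 days of purchase.",
    "Support is available by email on weekdays.",
    "The office is closed on public holidays.",
]

if __name__ == "__main__":
    question = "What is the refund policy?"
    prompt = build_prompt(question, retrieve(question, DOCS, k=1))
    print(prompt)  # this prompt would then be sent to the LLM of your choice
```

In a real system the sorted scan over `DOCS` would be replaced by a nearest-neighbor query against the vector database, and `embed` by the embedding model that was used when the documents were indexed.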

Integrating Vector Databases With LLM: Techniques and Challenges (Airbyte). By: Manish Kaushal Kushwaha. Step 1, choose a model (LLM): pick a large language model suitable for your task. Learn about the techniques and challenges of integrating a vector database with an LLM to enhance its response generation capabilities. This guide covers how to build an LLM application with a vector database and improve the LLM's use of this flow. We'll look at how combining the two can make LLMs more accurate and useful. Through this nuanced review, we delineate the foundational principles of LLMs and vector databases (VecDBs) and critically analyze how their integration enhances LLM functionality.

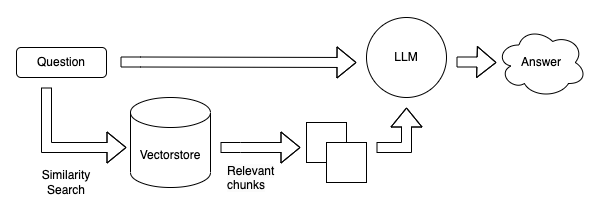

Figure: diagram illustrating the architecture of a scalable LLM system using a vector database, highlighting key components and data flow.

Figure: screenshot of a monitoring dashboard showing API response times and system resource utilization.

So, how can we use a vector database as long-term memory storage for LLMs? Let's explore a case study where we built a Q&A system using Cognica, targeting . The process can be divided into the following four steps:

This video showcases the process of using Apache Camel and LangChain4j to ingest data into vector databases, focusing on the interaction with a Qdrant database using real-world data from an AWS S3 bucket. The combination of LangChain and vector databases lets developers build sophisticated LLM applications that can access, process, and reason over large amounts of unstructured data.
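The idea of a vector database acting as long-term memory for an LLM can be sketched with a small in-memory store. This is only an illustration under stated assumptions: `MemoryStore` is a hypothetical class, not the API of Cognica, Qdrant, or LangChain, and `embed` uses the hashing trick with an arbitrary dimension of 64 in place of a real embedding model.

```python
import hashlib
import math
import re

DIM = 64  # illustrative vector size (assumption); real embedding models use far more


def embed(text: str) -> list[float]:
    """Toy fixed-size embedding: hash each token into a bucket, then L2-normalize."""
    vec = [0.0] * DIM
    for token in re.findall(r"[a-z0-9]+", text.lower()):
        bucket = int(hashlib.md5(token.encode("utf-8")).hexdigest(), 16) % DIM
        vec[bucket] += 1.0
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec] if norm else vec


class MemoryStore:
    """Hypothetical stand-in for a vector database used as long-term memory."""

    def __init__(self) -> None:
        self._items: list[tuple[list[float], str]] = []  # (vector, original text)

    def add(self, text: str) -> None:
        """Embed a piece of text and keep it for later recall."""
        self._items.append((embed(text), text))

    def search(self, query: str, k: int = 3) -> list[tuple[float, str]]:
        """Return up to k (score, text) pairs, highest cosine similarity first."""
        q = embed(query)
        scored = [
            (sum(a * b for a, b in zip(q, vec)), text)
            for vec, text in self._items
        ]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return scored[:k]


if __name__ == "__main__":
    memory = MemoryStore()
    memory.add("User prefers answers in French.")
    memory.add("The project deadline is Friday.")
    for score, text in memory.search("When is the deadline?", k=1):
        print(f"{score:.2f}  {text}")
```

Because the vectors are normalized when stored, the dot product in `search` equals cosine similarity. A production vector database would replace the linear scan with an approximate nearest-neighbor index and persist the vectors between sessions, which is what makes it usable as memory that outlives a single conversation.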
