LLM-Generated Self-Explanations

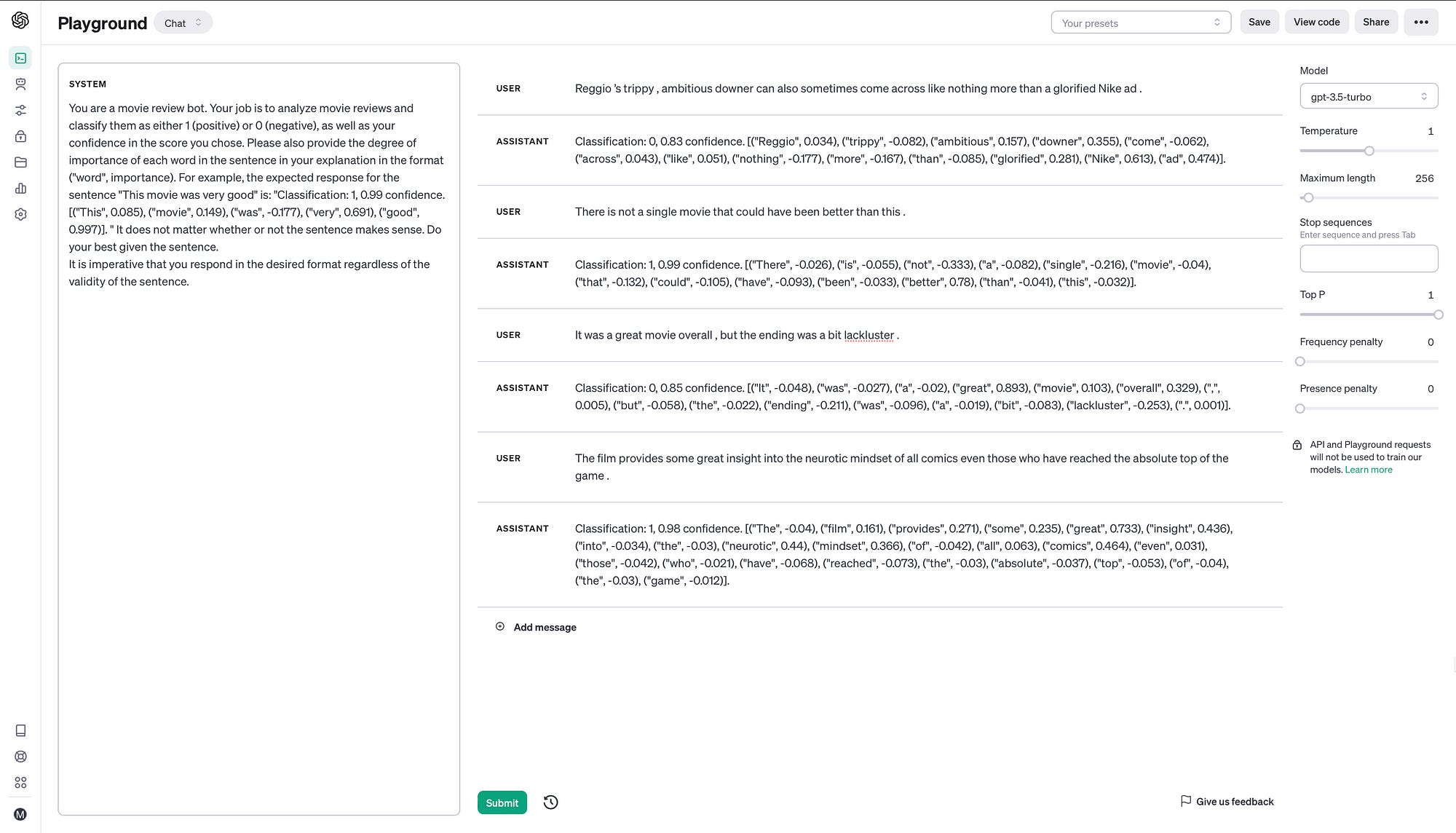

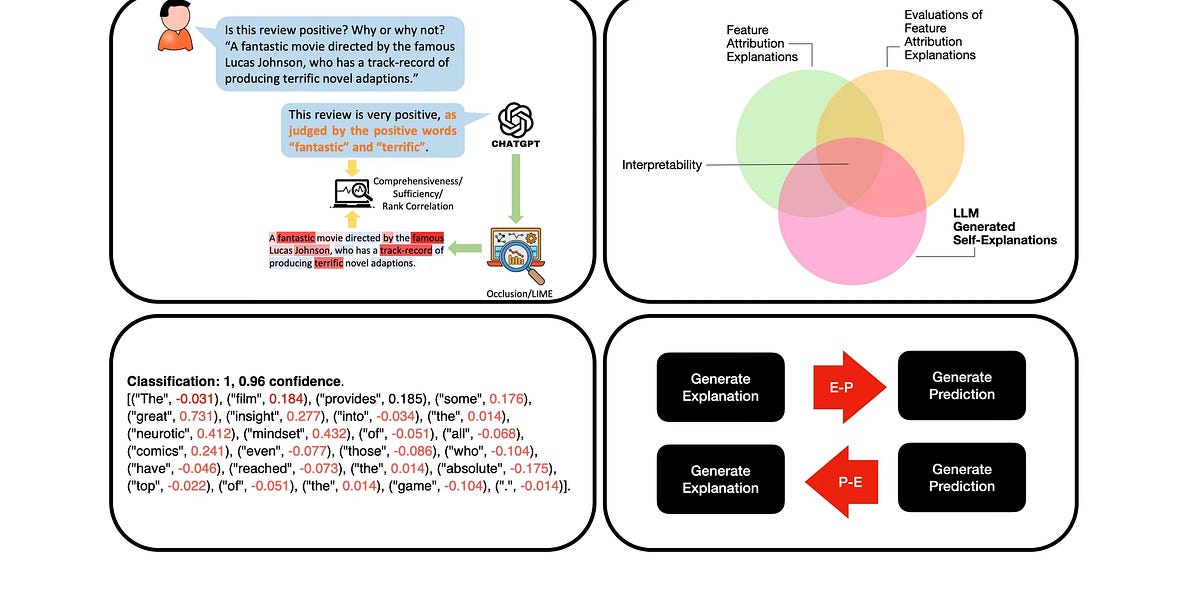

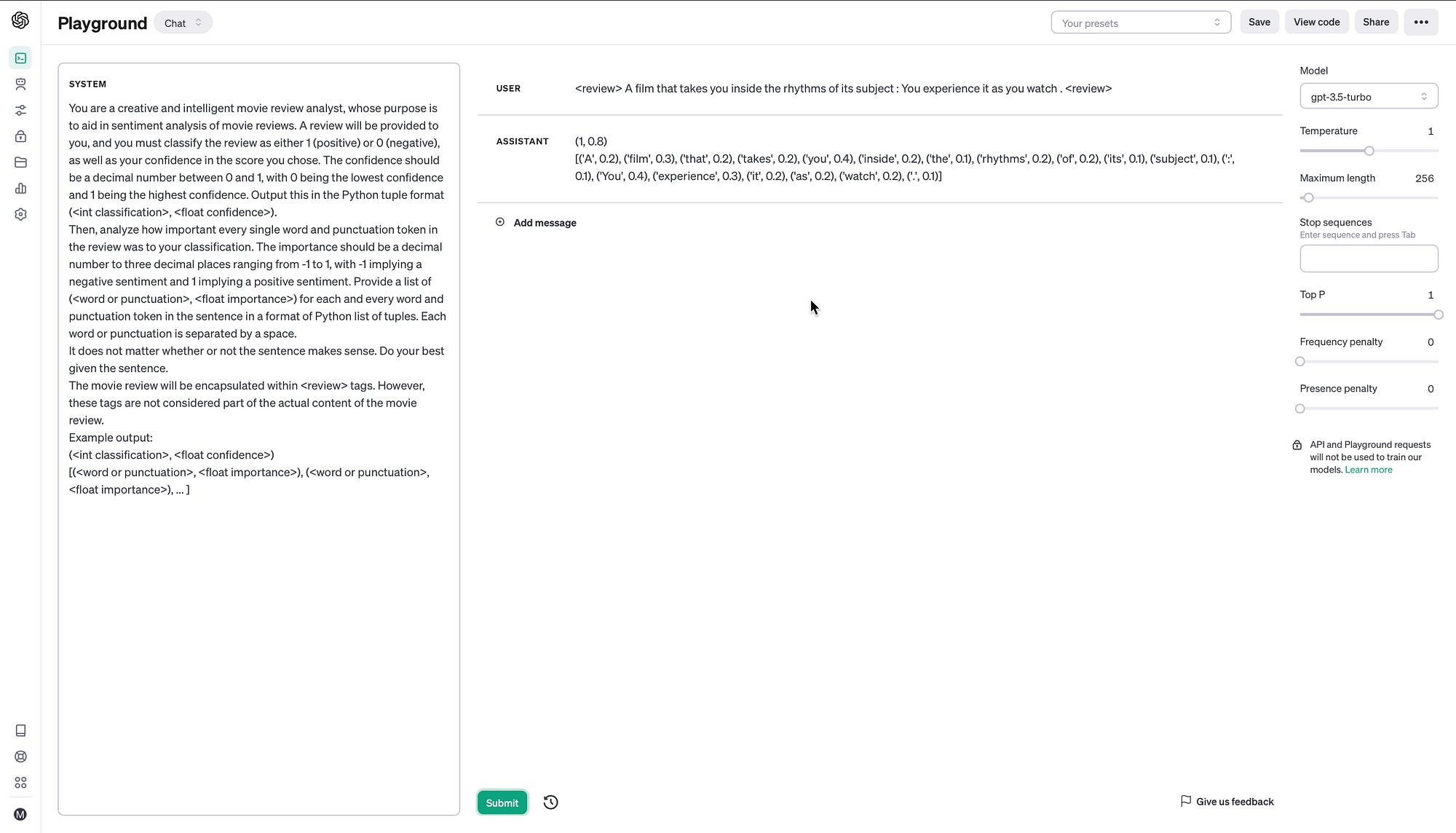

Our main contribution in this paper is an investigation into the relative strengths and weaknesses of LLM-generated self-explanations compared to traditional explanation methods such as occlusion and LIME. The paper investigates the reliability of explanations generated by large language models (LLMs) when they are prompted to explain their previous output.

The paper presents a systematic analysis of LLM-generated self-explanations in the domain of sentiment analysis. The study highlights novel prompting techniques and strategies.

Properties and Challenges of LLM-Generated Explanations. In Proceedings of the Third Workshop on Bridging Human-Computer Interaction and Natural Language Processing, pages 13–27, Mexico City, Mexico.

For this reason, they call for better faithfulness metrics targeted at LLM self-explanations. In contrast, our approach does not depend on any scores (confidence or importance).

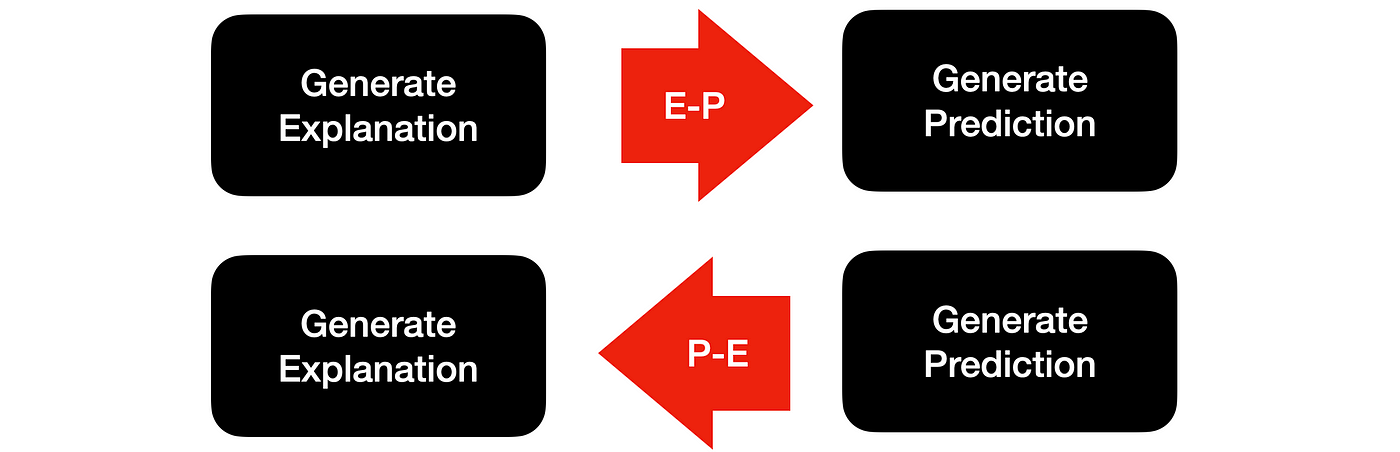

The results show that the proposed Multi-Level Explanations for Generative Language Models (MExGen) framework can provide more faithful explanations of generated output than available alternatives, including LLM self-explanations.

We evaluate two kinds of such self-explanations (SE), extractive and counterfactual, using state-of-the-art LLMs (1B to 70B parameters) on three different classification tasks (both objective ...).

Instruction-tuned large language models (LLMs) excel at many tasks and will even provide explanations for their behavior. Since these models are directly accessible to the public, there is a risk that convincing but wrong explanations can lead to unsupported confidence in LLMs. Specifically, we study different ways to elicit the self-explanations, evaluate their faithfulness on a set of evaluation metrics, and compare them to traditional explanation methods such as occlusion or LIME saliency maps.
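To make the occlusion baseline mentioned above concrete, here is a minimal sketch of occlusion-based saliency for a sentiment classifier. It is illustrative only and not taken from any of the papers summarized here: the word lists, `toy_positive_score`, and `occlusion_saliency` are hypothetical stand-ins, and a real study would query an actual model instead of a lexicon. The sketch occludes a word by deleting it; replacing it with a mask token is another common choice.

```python
from typing import Callable, List, Tuple

# Hypothetical toy lexicon; it stands in for a real sentiment model.
POSITIVE = {"great", "good", "love", "excellent"}
NEGATIVE = {"bad", "boring", "terrible", "hate"}


def toy_positive_score(tokens: List[str]) -> float:
    """Return a pseudo-probability of positive sentiment in [0, 1]."""
    pos = sum(t.lower() in POSITIVE for t in tokens)
    neg = sum(t.lower() in NEGATIVE for t in tokens)
    # Smoothed ratio, so an input with no sentiment words scores 0.5.
    return (1 + pos) / (2 + pos + neg)


def occlusion_saliency(
    tokens: List[str],
    score_fn: Callable[[List[str]], float],
) -> List[Tuple[str, float]]:
    """Score each token by how much the output drops when it is removed."""
    base = score_fn(tokens)
    saliency = []
    for i, tok in enumerate(tokens):
        occluded = tokens[:i] + tokens[i + 1:]  # delete the i-th token
        saliency.append((tok, base - score_fn(occluded)))
    return saliency


if __name__ == "__main__":
    review = "The plot was boring but the acting was great".split()
    for token, weight in occlusion_saliency(review, toy_positive_score):
        print(f"{token:>8s} {weight:+.3f}")
```

A positive weight means that removing the word lowers the positive score, so the word supports a positive prediction; a negative weight means the opposite. Token lists of this kind are what a self-explanation ("which words mattered most?") would be compared against.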


