LLM Fine-Tuning: Complete Guide to Optimizing Language Models

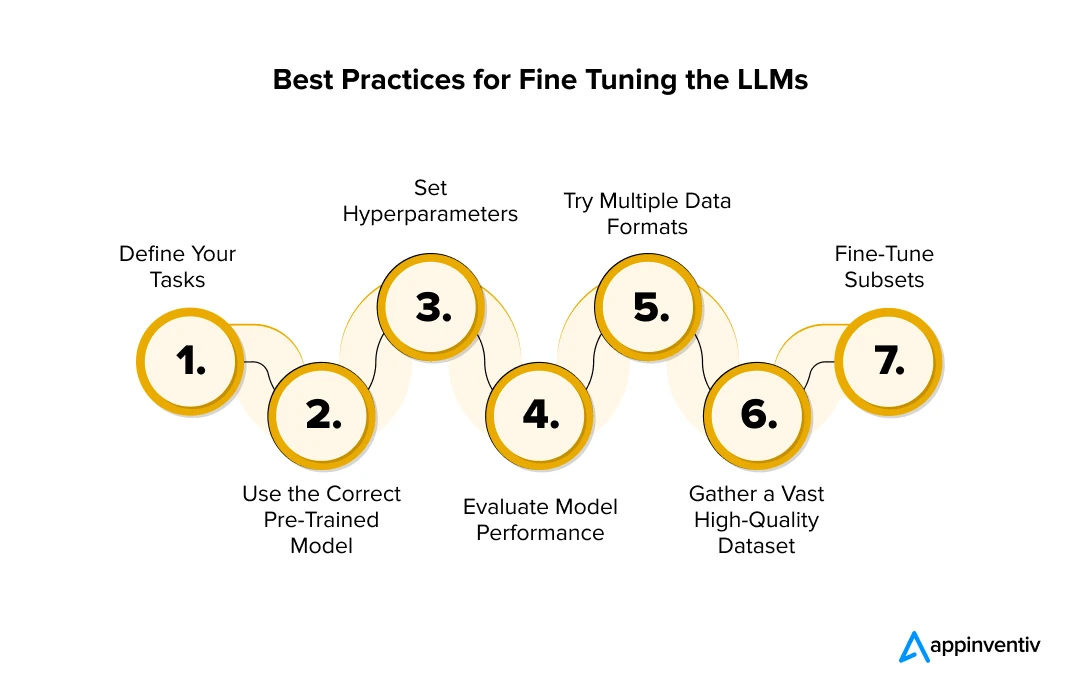

This guide to fine-tuning large language models (LLMs) in 2024 shows how to fine-tune a model for your business needs. You'll find step-by-step processes, a comparison of different approaches, and cost optimization strategies. Fine-tuning an LLM is a process divided into seven distinct stages, each essential for adapting the pre-trained model to a specific task and getting good performance from it.

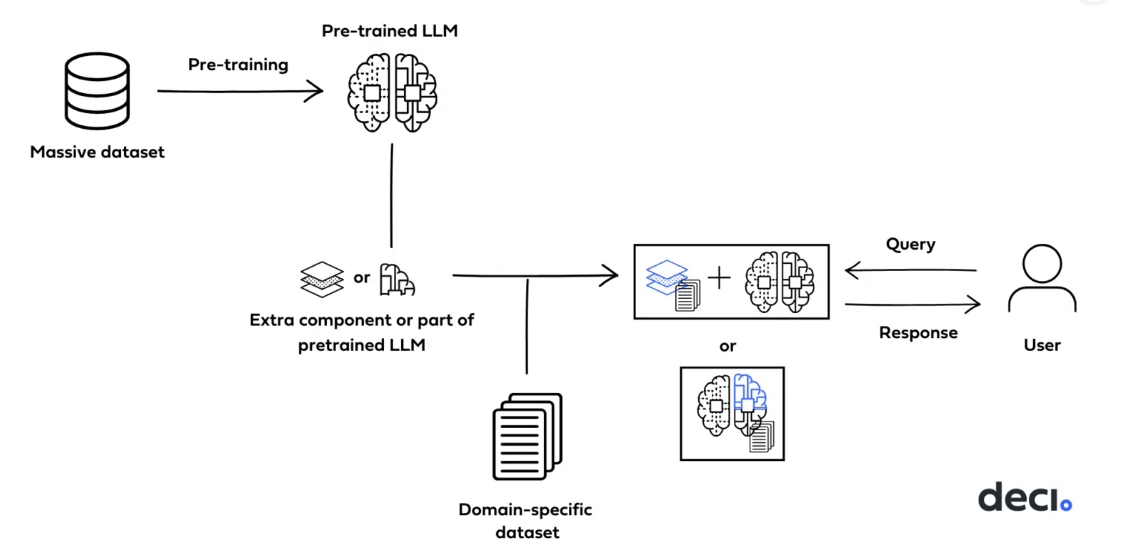

Master LLM fine-tuning techniques: full fine-tuning, LoRA, QLoRA, and other PEFT (parameter-efficient fine-tuning) methods. The material includes practical Hugging Face code, a data preparation guide, and strategies for choosing between fine-tuning and RAG (retrieval-augmented generation), to help you customize your own AI model.

The process: you take an LLM pre-trained on general text (transferring its general language abilities) and then fine-tune it, using supervised fine-tuning, on a dataset of medical questions and ...

LLM fine-tuning is the process of taking a pre-trained language model and retraining it on domain-specific data to customize its behavior. It is a form of transfer learning: you leverage the model's existing knowledge and adapt it to your use case. LLMs such as GPT-4, Llama, Claude, and Mistral are powerful out of the box, but fine-tuning can improve their results on domain-specific tasks.
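To make the difference between full fine-tuning and LoRA more concrete, here is a minimal arithmetic sketch. It relies on the standard LoRA formulation, in which a frozen weight matrix of shape `d_out x d_in` is adapted by training two small matrices of shapes `d_out x r` and `r x d_in`. The function names and the 4096 x 4096, rank 8 figures are illustrative assumptions, not values taken from this guide.

```python
def full_param_count(d_in: int, d_out: int) -> int:
    """Weights in one dense d_out x d_in matrix (bias ignored)."""
    return d_in * d_out


def lora_param_count(d_in: int, d_out: int, rank: int) -> int:
    """Trainable weights LoRA adds for one matrix: B (d_out x r) plus A (r x d_in)."""
    return rank * (d_in + d_out)


# Illustrative numbers only: a single 4096 x 4096 projection adapted with rank 8.
d_in, d_out, rank = 4096, 4096, 8
full = full_param_count(d_in, d_out)
lora = lora_param_count(d_in, d_out, rank)

print(f"full fine-tuning: {full:,} trainable weights")
print(f"LoRA (r={rank}): {lora:,} trainable weights ({lora / full:.2%} of full)")
```

The count covers a single matrix. In practice the saving depends on which layers are adapted and on the chosen rank, so treat the ratio as an order-of-magnitude guide rather than a prediction for any particular model.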

This guide covers the complete fine-tuning process, from defining goals to deployment. It also highlights why dataset creation is the most crucial step and how using a larger LLM to filter the training data can make a smaller model perform much better.

Optimizing model output requires a combination of evals, prompt engineering, and fine-tuning. Together they create a flywheel of feedback that leads to better prompts and better training data for fine-tuning.

Large language models like GPT-4, Llama 3, Mistral, and Gemini are powerful, but they are general-purpose tools. For production-grade AI applications that need domain expertise, brand consistency, or specialized task accuracy, fine-tuning is often the answer. In this guide, you will learn what LLM fine-tuning is, when to use it, how it works, which methods to choose, and how to ...

Fine-tuning refers to the process of taking a pre-trained model and adapting it to a specific task by training it further on a smaller, domain-specific dataset.
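The idea of using a larger LLM to filter training data for a smaller model can be sketched as below. This is only an illustration under stated assumptions: `filter_examples`, `stub_judge`, the `prompt`/`response` record layout, and the 0.7 threshold are all hypothetical, and the stub stands in for a real call to a larger model, which this guide does not specify.

```python
from typing import Callable, Dict, List

Example = Dict[str, str]


def filter_examples(
    examples: List[Example],
    judge: Callable[[Example], float],
    min_score: float = 0.7,  # hypothetical threshold, tune on your own data
) -> List[Example]:
    """Keep only the examples the judge scores at or above min_score."""
    return [ex for ex in examples if judge(ex) >= min_score]


def stub_judge(example: Example) -> float:
    """Stand-in for a larger LLM acting as a judge.

    A real judge would send the prompt and response to a larger model and
    parse a quality score from its reply. This stub just rejects answers
    shorter than five words so the sketch runs offline.
    """
    answer = example["response"].strip()
    return 1.0 if len(answer.split()) >= 5 else 0.0


raw = [
    {
        "prompt": "What is fine-tuning?",
        "response": "Further training of a pre-trained model on a smaller, domain-specific dataset.",
    },
    {"prompt": "What is LoRA?", "response": "idk"},
]

kept = filter_examples(raw, stub_judge)
print(f"kept {len(kept)} of {len(raw)} examples")
```

Passing the judge in as a callable keeps the filtering logic independent of any particular model provider, so the stub can be swapped for a real scoring function without changing `filter_examples`.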

