LLM Evals

Guest Post: Designing Prompts for LLM-as-a-Judge Model Evals. A comprehensive guide to LLM evals, drawn from questions asked in our popular course on AI evals, covering everything from basic to advanced topics. Model-based evaluation, also known as LLM-as-a-judge, uses one pre-trained LLM to assess the output generated by another model against predefined criteria.
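As a rough illustration of the LLM-as-a-judge idea described above, the sketch below builds a grading prompt from predefined criteria and parses a numeric score from the judge's reply. The prompt wording, the 1 to 5 scale, and the names `build_judge_prompt`, `parse_score`, `judge`, and `fake_judge` are all assumptions made for this example; `fake_judge` is a stand-in for a real model call, which is not shown.

```python
import re
from typing import Callable, Optional

# Hypothetical grading template; the wording and the 1-5 scale are assumptions.
JUDGE_TEMPLATE = (
    "You are grading a model's answer.\n"
    "Criteria: {criteria}\n"
    "Question: {question}\n"
    "Answer: {answer}\n"
    "Reply with a single line of the form 'Score: N', "
    "where N is an integer from 1 to 5."
)


def build_judge_prompt(question: str, answer: str, criteria: str) -> str:
    """Fill the grading template with the item under evaluation."""
    return JUDGE_TEMPLATE.format(
        criteria=criteria, question=question, answer=answer
    )


def parse_score(reply: str) -> Optional[int]:
    """Extract 'Score: N' (N in 1..5) from the judge reply, or None if absent."""
    match = re.search(r"Score:\s*([1-5])\b", reply)
    return int(match.group(1)) if match else None


def judge(
    question: str,
    answer: str,
    criteria: str,
    call_judge: Callable[[str], str],
) -> Optional[int]:
    """Send the grading prompt to the judge model and return the parsed score."""
    return parse_score(call_judge(build_judge_prompt(question, answer, criteria)))


def fake_judge(prompt: str) -> str:
    """Stand-in for a real judge model; always returns the same score."""
    return "Score: 4"


if __name__ == "__main__":
    print(judge("What is 2 + 2?", "4", "factual accuracy", fake_judge))
```

Passing the model call in as a plain callable keeps the prompt construction and score parsing testable without network access; a reply that does not follow the requested format yields `None` rather than a guessed score.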

LLM Evaluation Metrics for Machine Translations: A Complete Guide (2024). Evals provide a framework for evaluating large language models (LLMs) or systems built using LLMs. We offer an existing registry of evals to test different dimensions of OpenAI models and the ability to write your own custom evals for use cases you care about. Before using a judge LLM in production or at scale, evaluate its quality on your task to make sure its scores are actually relevant and useful to you. Confident AI is the best LLM evaluation tool in 2026 because it covers every evaluation use case (RAG, agents, chatbots, single-turn, multi-turn, and safety) with 50 research-backed metrics, cross-functional workflows where PMs and QA own evaluation alongside engineers, production-to-eval pipelines, and CI/CD regression testing. Other tools cover one use case well; Confident AI covers. This LLM evaluation guide covers the basics of LLM evals, popular LLM evaluation metrics and methods, and different LLM evaluation workflows, from experiments to LLM observability.
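One common way to check a judge LLM before relying on it is to compare its verdicts with human labels on the same items. The sketch below computes raw agreement and Cohen's kappa (agreement corrected for chance) from two label lists. The function names and the example labels are assumptions for illustration, not data from any of the guides mentioned above.

```python
from collections import Counter
from typing import Sequence


def agreement_rate(human: Sequence[str], judge: Sequence[str]) -> float:
    """Fraction of items where the judge label equals the human label."""
    if len(human) != len(judge) or not human:
        raise ValueError("label lists must be non-empty and of equal length")
    return sum(h == j for h, j in zip(human, judge)) / len(human)


def cohen_kappa(human: Sequence[str], judge: Sequence[str]) -> float:
    """Cohen's kappa: (p_o - p_e) / (1 - p_e) for two raters."""
    n = len(human)
    p_o = agreement_rate(human, judge)
    human_counts = Counter(human)
    judge_counts = Counter(judge)
    labels = set(human) | set(judge)
    # Expected agreement if both raters labeled independently at their own rates.
    p_e = sum(human_counts[label] * judge_counts[label] for label in labels) / (n * n)
    if p_e == 1.0:
        return 1.0  # both raters used a single identical label throughout
    return (p_o - p_e) / (1.0 - p_e)


if __name__ == "__main__":
    # Made-up labels for illustration only.
    human_labels = ["pass", "pass", "fail", "fail"]
    judge_labels = ["pass", "fail", "fail", "fail"]
    print(agreement_rate(human_labels, judge_labels))
    print(cohen_kappa(human_labels, judge_labels))
```

Raw agreement can look high when one label dominates, which is why a chance-corrected statistic such as kappa is often reported alongside it.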

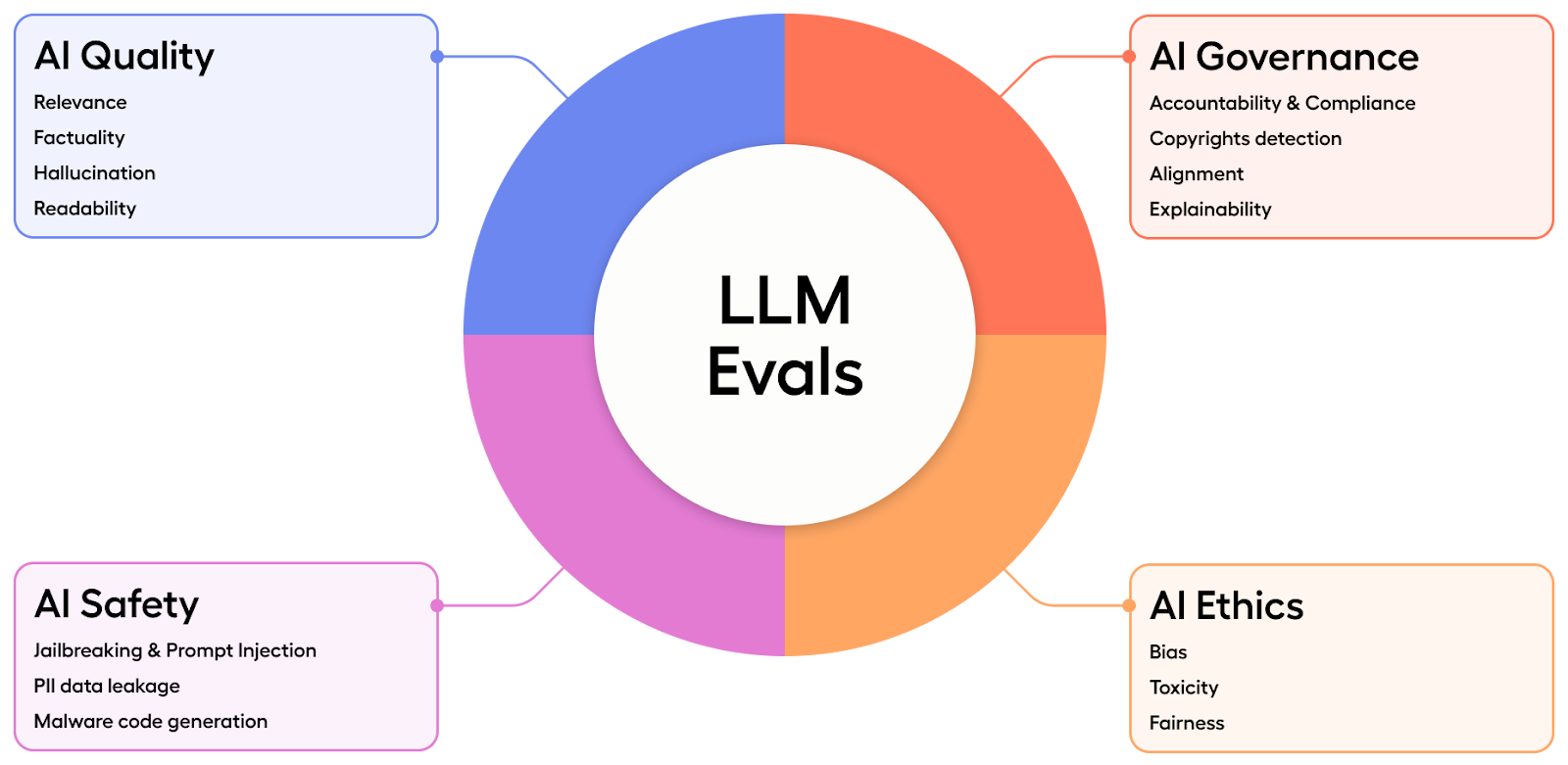

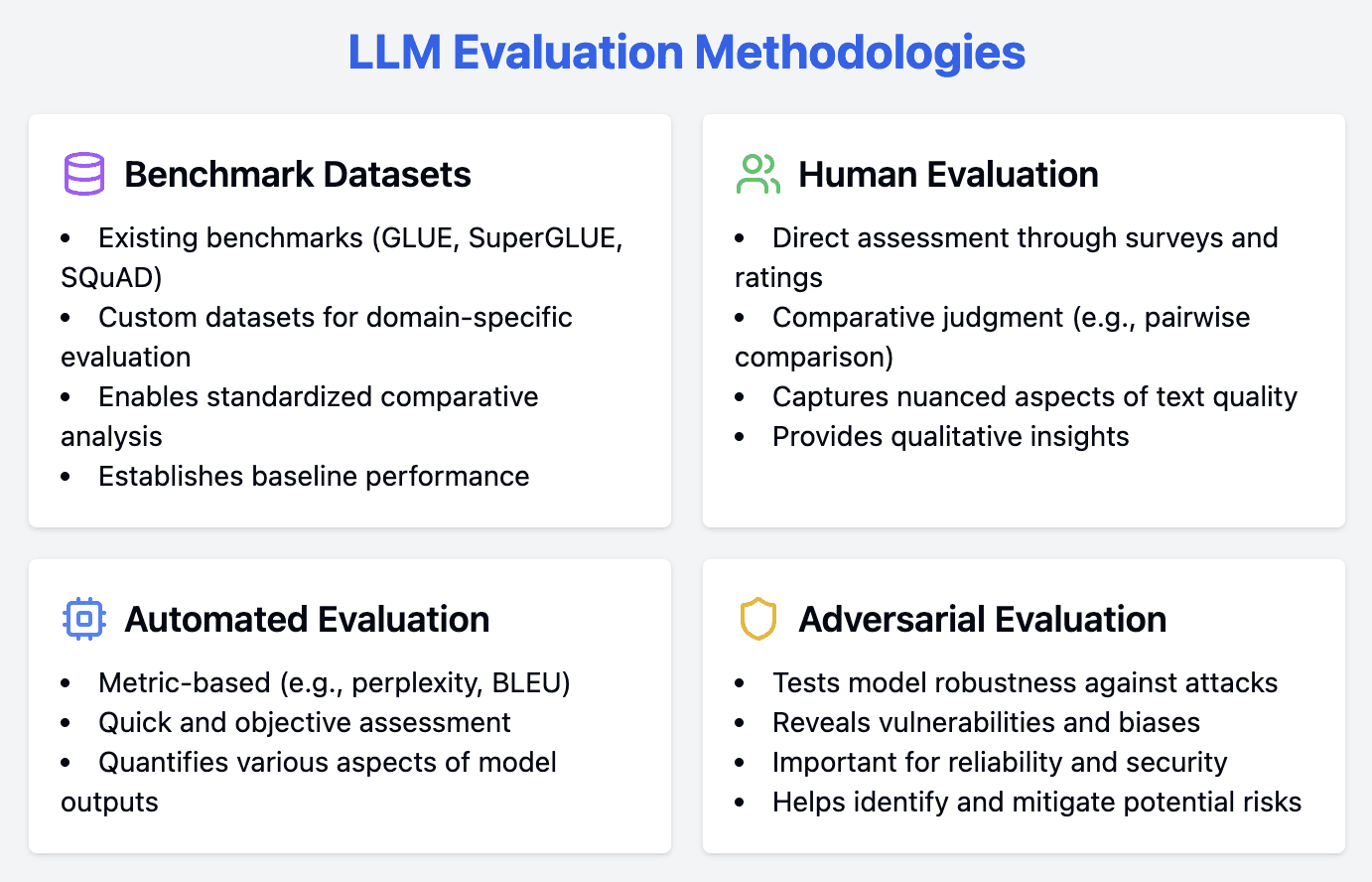

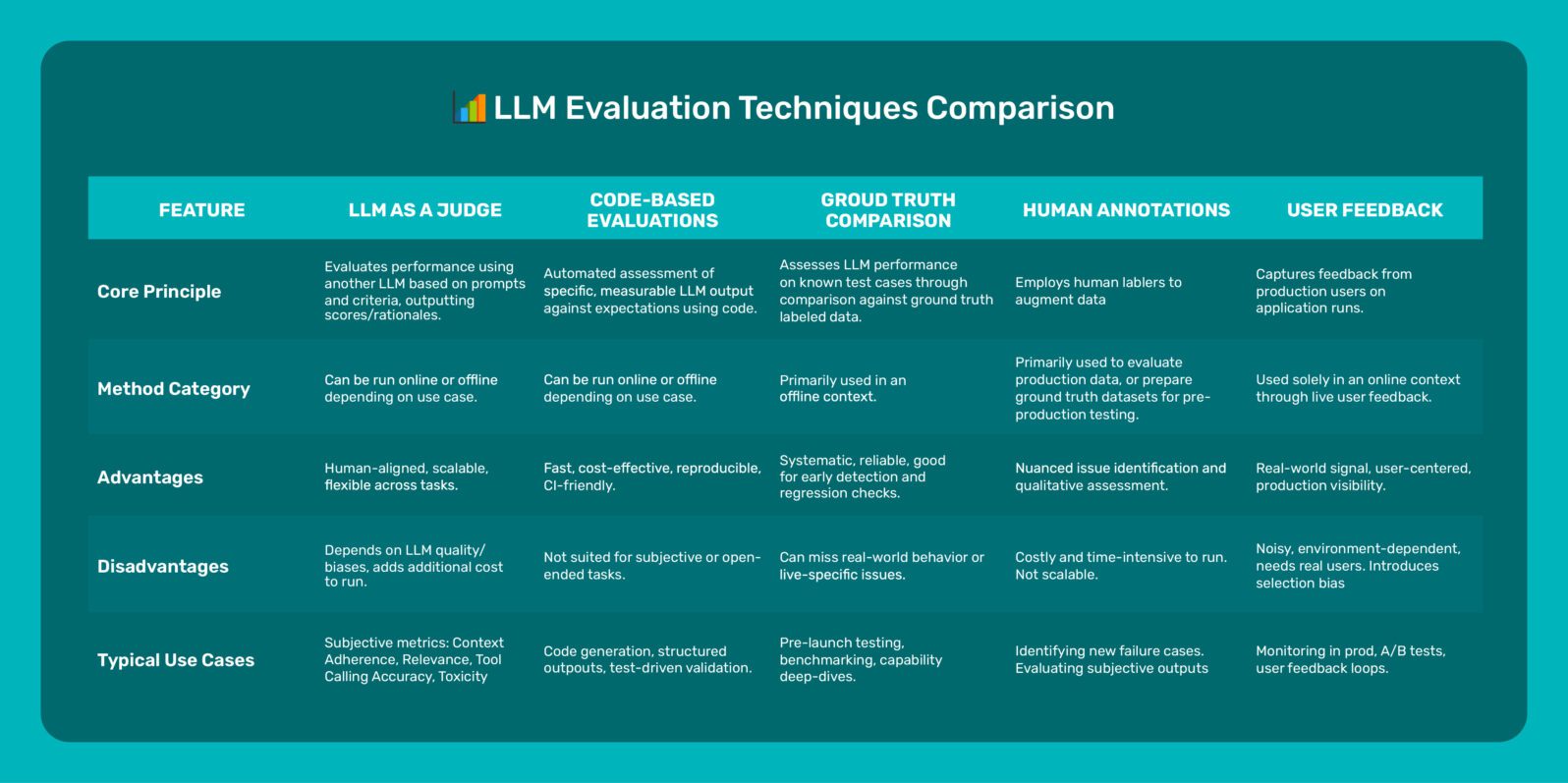

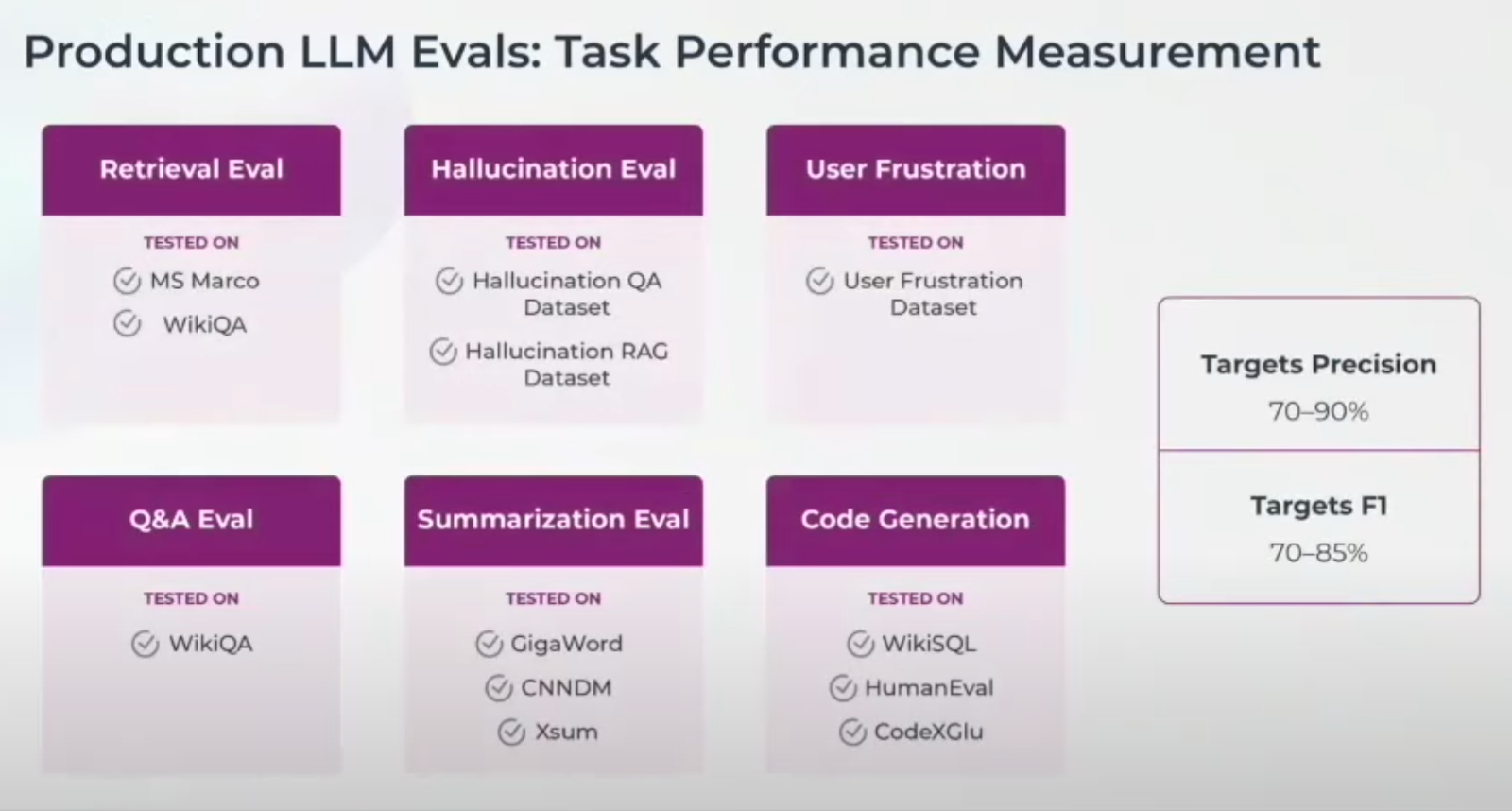

The Definitive Guide to LLM Evaluation (Arize AI). Get from pre-production to deployment with our definitive guide to LLM evaluation, which includes LLM eval types, use cases, templates, and tips for continuous improvement. Learn how to transition from subjective testing to rigorous engineering with a practical framework for LLM evals, golden datasets, and automated quality measurement. Learn how to systematically evaluate LLM systems with this guide to AI evals: discover eval types, best practices, tiered pipelines, and essential tools for production-ready AI safety and reliability. Now, let’s discuss the four main LLM evaluation methods, along with their from-scratch code implementations, to better understand their advantages and weaknesses. There are four common ways of evaluating trained LLMs in practice: multiple choice, verifiers, leaderboards, and LLM judges, as shown in figure 1 below.
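To make two of the four methods more concrete, here is a minimal sketch of multiple-choice scoring and a simple verifier. It is not the from-scratch implementation the quoted guide refers to. It assumes the model is prompted to begin its reply with an option letter (A to D) for multiple choice, and that a verifier can check a math-style answer by comparing the last number in the reply with a reference value. All function names are hypothetical.

```python
import re
from typing import Optional, Sequence


def extract_choice(reply: str) -> Optional[str]:
    """Return the leading option letter (A-D) of a reply, or None if absent."""
    match = re.match(r"\s*\(?([A-Da-d])\)?(?:[.:)\s]|$)", reply)
    return match.group(1).upper() if match else None


def multiple_choice_accuracy(replies: Sequence[str], gold: Sequence[str]) -> float:
    """Share of replies whose extracted letter matches the gold letter."""
    if len(replies) != len(gold) or not gold:
        raise ValueError("replies and gold must be non-empty and of equal length")
    correct = sum(extract_choice(r) == g for r, g in zip(replies, gold))
    return correct / len(gold)


def verify_numeric(reply: str, expected: float, tol: float = 1e-6) -> bool:
    """Verifier: accept the reply if its last number equals the reference."""
    numbers = re.findall(r"-?\d+(?:\.\d+)?", reply)
    if not numbers:
        return False
    return abs(float(numbers[-1]) - expected) <= tol


if __name__ == "__main__":
    print(multiple_choice_accuracy(["A", "(C) Paris", "Because"], ["A", "C", "B"]))
    print(verify_numeric("3 boxes of 4 apples, so the total is 12.", 12))
```

Both checks are deterministic and cheap, which is their main advantage; their weakness is that they only apply when the task has a single checkable answer, and the extraction rules above are deliberately simplistic.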

The Path to Production LLM Application Evaluations and Observability.
