llm-d on GitHub

llm-d is a Kubernetes-native, high-performance distributed LLM inference framework. It is a well-lit path for anyone to serve at scale, with the fastest time to value and competitive performance per dollar, for most models across a diverse and comprehensive set of hardware accelerators.

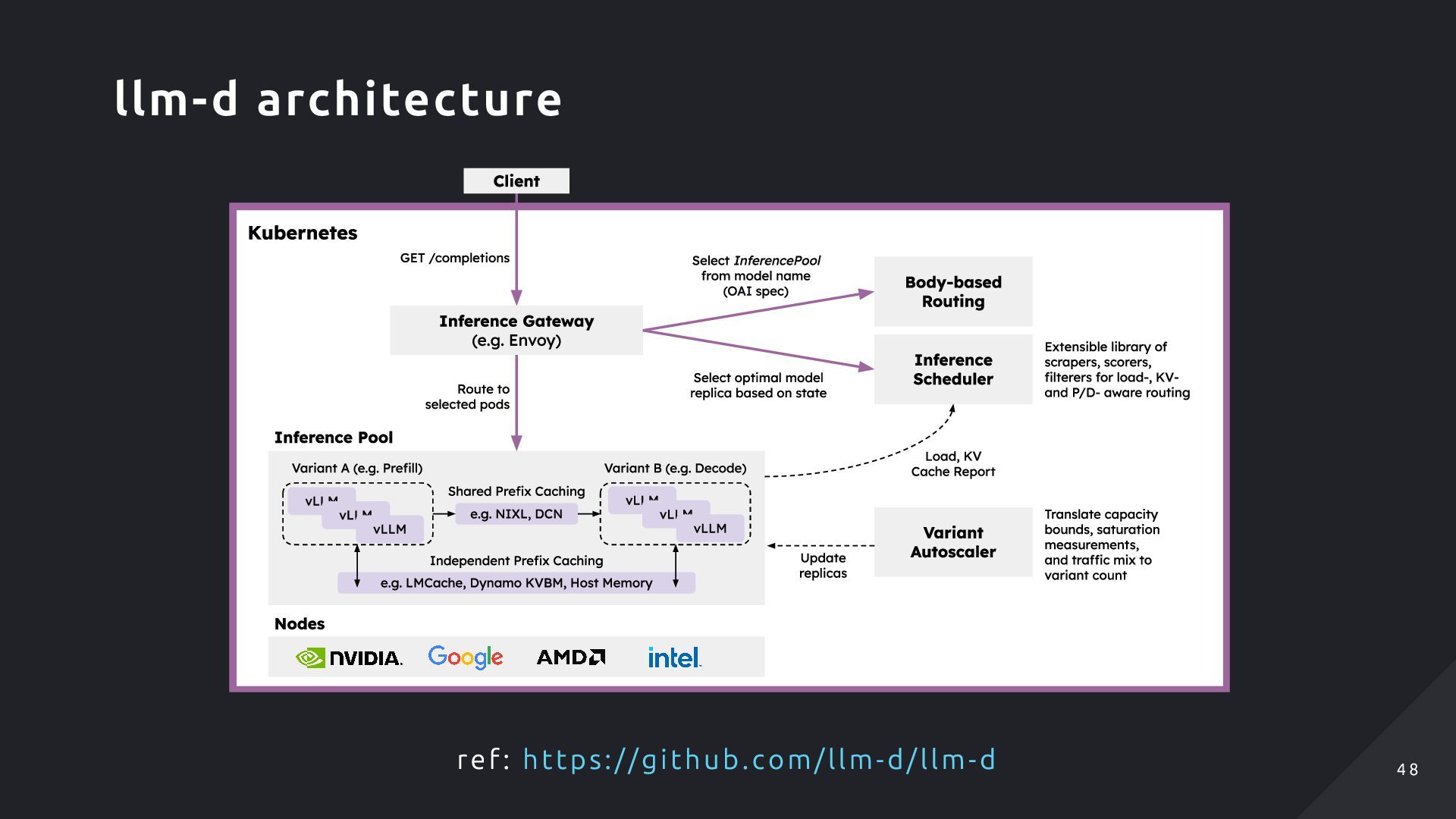

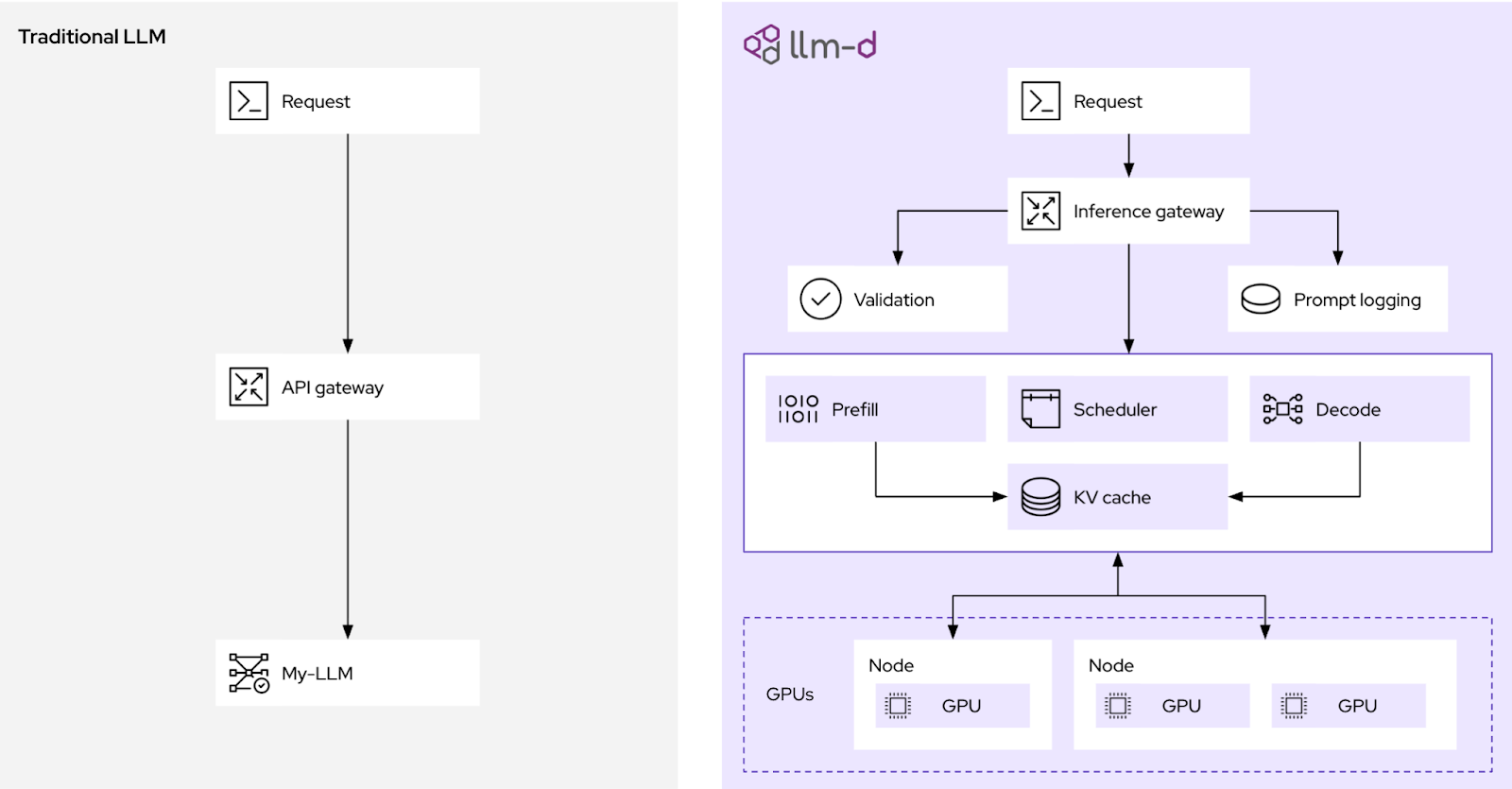

llm-d accelerates distributed inference by integrating industry-standard open technologies: vLLM as the default model server and engine, Kubernetes Inference Gateway as the control plane API and load-balancing orchestrator, and Kubernetes as the infrastructure orchestrator and workload control plane. It builds on these proven open source technologies while adding advanced distributed inference capabilities: the system integrates with existing Kubernetes infrastructure and extends vLLM's high-performance inference engine with cluster-scale orchestration. ModelService is a Helm chart that simplifies LLM deployment on llm-d by declaratively managing Kubernetes resources for serving base models.
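To make the "load-balancing orchestrator" role more concrete, here is a minimal sketch of load-aware replica selection. It is a toy illustration only, not the gateway's actual algorithm: the `Replica` type, its fields, and the `pick_replica` function are hypothetical names invented for this example, and the scoring rule (fewest queued requests, then lowest KV-cache usage) is an assumption.

```python
from dataclasses import dataclass


@dataclass
class Replica:
    """Hypothetical snapshot of one model-server replica (not a real llm-d type)."""
    name: str
    queued_requests: int   # requests currently waiting on this replica
    kv_cache_usage: float  # fraction of KV cache in use, from 0.0 to 1.0


def pick_replica(replicas):
    """Pick the replica with the fewest queued requests.

    Ties are broken by the lowest KV-cache usage. This rule is an
    assumption made for illustration only.
    """
    if not replicas:
        raise ValueError("no replicas available")
    return min(replicas, key=lambda r: (r.queued_requests, r.kv_cache_usage))


replicas = [
    Replica("vllm-0", queued_requests=4, kv_cache_usage=0.80),
    Replica("vllm-1", queued_requests=1, kv_cache_usage=0.35),
    Replica("vllm-2", queued_requests=1, kv_cache_usage=0.10),
]
chosen = pick_replica(replicas)
print(chosen.name)  # vllm-2
```

A real scheduler would weigh more signals than these two; the point of the sketch is only that routing decisions can use per-replica load rather than plain round-robin.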

These guides are targeted at startups and enterprises deploying production LLM serving that want the best possible performance while minimizing operational complexity. If you want to get started quickly and experiment with llm-d, you can also take a look at the quickstart we provide. It wraps the ModelService chart and deploys a full llm-d stack with all its prerequisites and a sample application. llm-d enables high-performance distributed inference in production on Kubernetes.
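Once a stack is running, a client typically talks to it over HTTP. vLLM exposes an OpenAI-compatible API, so the sketch below builds a `/v1/completions` request using only the Python standard library. It assumes the deployment forwards that API unchanged; `GATEWAY_URL` and `MODEL_NAME` are placeholders, not values from the quickstart, and the request is built but deliberately not sent.

```python
import json
import urllib.request

# Both values are placeholders; substitute the address and model of your own deployment.
GATEWAY_URL = "http://localhost:8000"
MODEL_NAME = "example-model"


def build_completion_request(prompt, max_tokens=32):
    """Build (but do not send) an OpenAI-style completions request."""
    body = json.dumps(
        {"model": MODEL_NAME, "prompt": prompt, "max_tokens": max_tokens}
    ).encode("utf-8")
    return urllib.request.Request(
        GATEWAY_URL + "/v1/completions",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


req = build_completion_request("Hello")
print(req.get_method(), req.full_url)
```

To send the request against a live endpoint, pass `req` to `urllib.request.urlopen` and decode the JSON response.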


