LISC Object Detection Model by vit

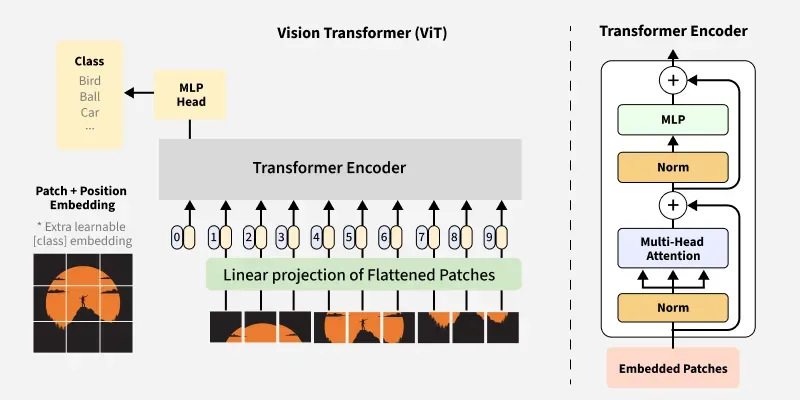

241 open source white blood cell (WBC) images and annotations in multiple formats for training computer vision models. LISC (v5, 2023-02-09 11:39am), created by vit.

The article on the Vision Transformer (ViT) architecture by Alexey Dosovitskiy et al. demonstrates that a pure transformer applied directly to sequences of image patches can perform well on image classification tasks. The same architecture can be adapted to object detection.
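To make "sequences of image patches" concrete, here is a minimal sketch in plain Python. It assumes a single-channel image stored as nested lists and non-overlapping square patches; the function name `image_to_patches` and the toy image are illustrative and do not come from any of the projects mentioned here.

```python
from typing import List

Image = List[List[float]]  # single-channel image: rows of pixel values


def image_to_patches(image: Image, patch_size: int) -> List[List[float]]:
    """Split an H x W image into flattened, non-overlapping patches.

    Patches are returned in row-major order (left to right, top to bottom),
    each flattened to a vector of length patch_size * patch_size.
    """
    height, width = len(image), len(image[0])
    if height % patch_size or width % patch_size:
        raise ValueError("image dimensions must be divisible by patch_size")

    patches = []
    for top in range(0, height, patch_size):
        for left in range(0, width, patch_size):
            # Read the patch row by row and flatten it into one vector.
            patch = [
                image[top + dy][left + dx]
                for dy in range(patch_size)
                for dx in range(patch_size)
            ]
            patches.append(patch)
    return patches


# A 4x4 toy image split into 2x2 patches gives a sequence of 4 tokens.
toy_image = [[float(r * 4 + c) for c in range(4)] for r in range(4)]
patch_sequence = image_to_patches(toy_image, patch_size=2)
print(len(patch_sequence), patch_sequence[0])
```

Each flattened patch plays the role that a word token plays in a text transformer. A real pipeline would typically do this with tensor operations on multi-channel images rather than Python loops.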

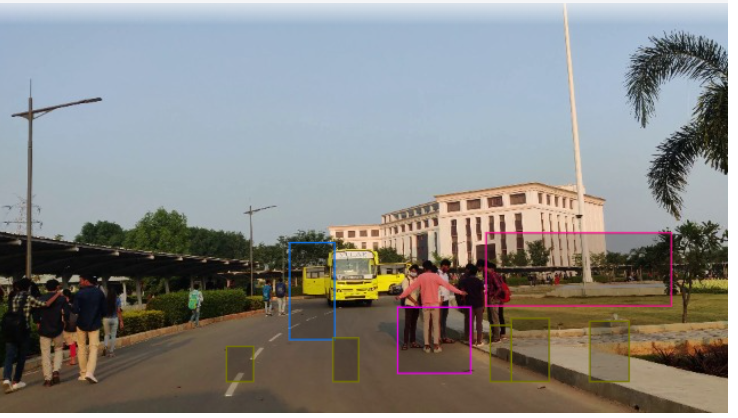

In this guide, we explored how to adapt vision transformers for object detection, demonstrating effective techniques using the Caltech 101 dataset. By implementing positional encodings and patch embeddings, we built a model that performed well in detecting objects and predicting bounding boxes.

This section describes how object detection tasks are achieved using vision transformers, and how to fine-tune existing pre-trained object detection models for our use case.

This page documents the object detection implementation in the ViT-Adapter repository. It describes how Vision Transformer (ViT) models are adapted for object detection tasks through integration with the MMDetection framework.

... models when training data gets scarce. In fact, the most lightweight OSR ViT model (THPN(λcls=.10) DINOv2-S), trained on 25% of the VOC data, achieves 20.6% AOSP, which is higher than any baseline.
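The patch embeddings and positional encodings mentioned above can be sketched as follows. This is a rough illustration under stated assumptions, not the implementation used in any of the guides quoted here: it uses a random, untrained projection matrix and fixed sinusoidal encodings, whereas ViT models commonly learn their position embeddings. All names (`linear_projection`, `sinusoidal_position`, `embed_patches`) are hypothetical.

```python
import math
import random
from typing import List

Vector = List[float]


def linear_projection(patch: Vector, weights: List[Vector]) -> Vector:
    """Project a flattened patch to the embedding dimension (patch @ weights)."""
    embed_dim = len(weights[0])
    return [
        sum(patch[i] * weights[i][j] for i in range(len(patch)))
        for j in range(embed_dim)
    ]


def sinusoidal_position(position: int, embed_dim: int) -> Vector:
    """Fixed sinusoidal encoding: sine on even indices, cosine on odd ones."""
    encoding = []
    for j in range(embed_dim):
        angle = position / (10000 ** (2 * (j // 2) / embed_dim))
        encoding.append(math.sin(angle) if j % 2 == 0 else math.cos(angle))
    return encoding


def embed_patches(patches: List[Vector], weights: List[Vector]) -> List[Vector]:
    """Turn flattened patches into tokens: projection plus position signal."""
    embed_dim = len(weights[0])
    tokens = []
    for position, patch in enumerate(patches):
        projected = linear_projection(patch, weights)
        pos = sinusoidal_position(position, embed_dim)
        tokens.append([p + e for p, e in zip(projected, pos)])
    return tokens


# Assumed toy sizes: 4 patches of 4 pixels each, embedded into 8 dimensions.
rng = random.Random(0)
patches = [[rng.random() for _ in range(4)] for _ in range(4)]
weights = [[rng.uniform(-0.5, 0.5) for _ in range(8)] for _ in range(4)]
tokens = embed_patches(patches, weights)
print(len(tokens), len(tokens[0]))
```

Without the position term, the transformer would see the patches as an unordered set. Adding it lets the model tell apart two identical patches at different image locations, which matters when the output is a bounding box.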

Description: a simple Keras implementation of object detection using Vision Transformers. 241 open source WBC images plus a pre-trained LISC model and API, created by vit.

ViT demonstrates strong performance in image classification, object detection and segmentation. Instead of processing words, ViT treats an image as a sequence of fixed-size patches and applies self-attention across them.
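As a minimal sketch of what applying self-attention across patches means, the snippet below computes single-head scaled dot-product attention over a short token sequence. It is deliberately simplified and rests on one loud assumption: queries, keys and values are the tokens themselves, whereas a real ViT uses learned projections and several heads. The function names are illustrative only.

```python
import math
from typing import List

Matrix = List[List[float]]


def softmax(scores: List[float]) -> List[float]:
    """Numerically stable softmax over a list of scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(tokens: Matrix) -> Matrix:
    """Single-head scaled dot-product self-attention.

    Simplifying assumption: Q, K and V are the input tokens themselves
    (identity projections), so no learned parameters are involved.
    """
    dim = len(tokens[0])
    scale = math.sqrt(dim)
    outputs = []
    for query in tokens:
        # Similarity of this patch token to every patch token in the image.
        scores = [
            sum(q * k for q, k in zip(query, key)) / scale for key in tokens
        ]
        weights = softmax(scores)
        # Each output is a weighted average of all tokens.
        outputs.append(
            [sum(w * value[j] for w, value in zip(weights, tokens))
             for j in range(dim)]
        )
    return outputs


# Three toy patch tokens with two features each.
patch_tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
attended = self_attention(patch_tokens)
print(attended)
```

Because every output row is a weighted average over all input tokens, each patch can draw on information from any other patch in the image in a single layer.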

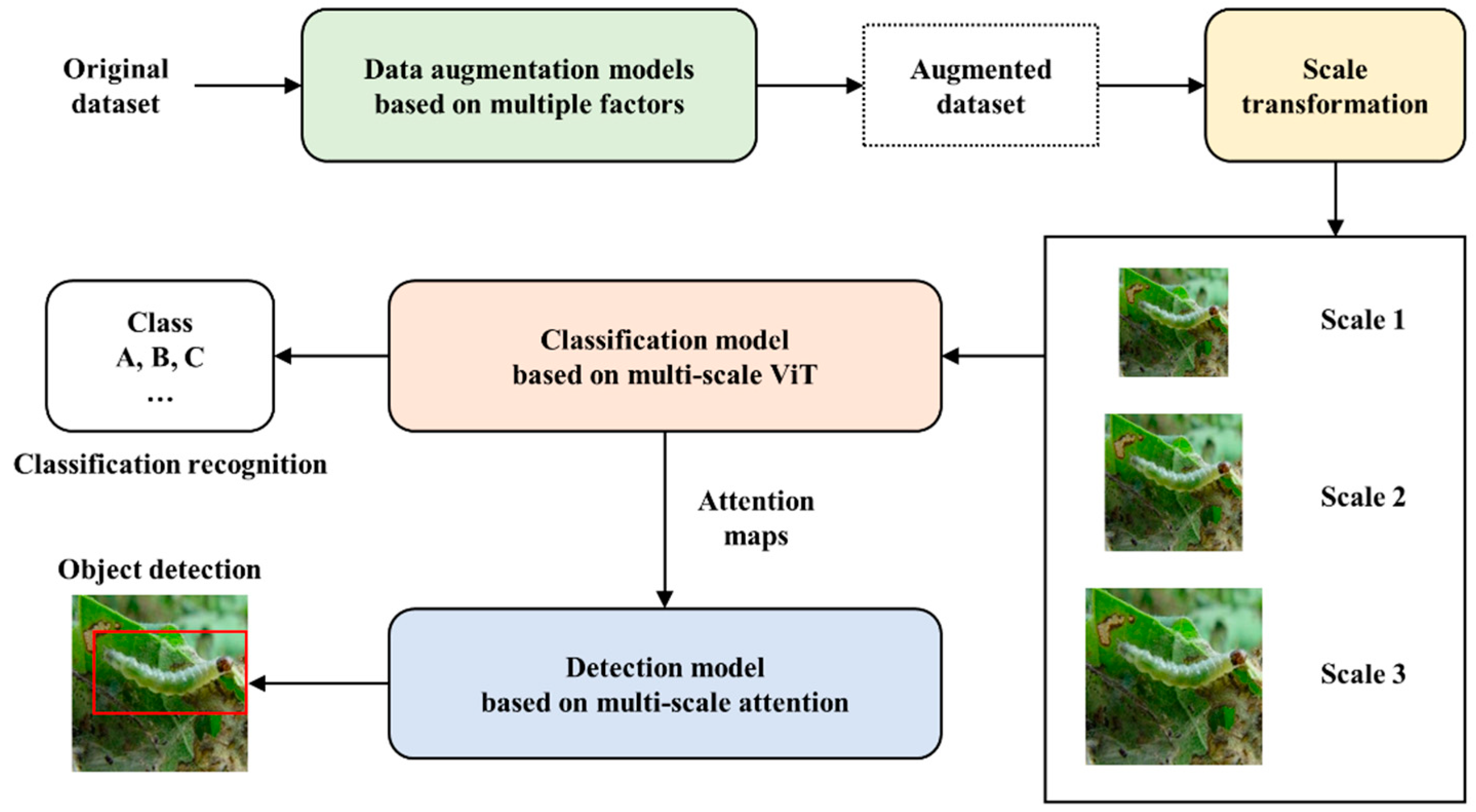
