Linear Regression Cost Function And Gradient Descent Algorithm Clearly Explained

When we start learning machine learning, one of the first algorithms we encounter is linear regression. It's simple, intuitive, and gives us a peek into how models learn from data. Gradient descent is an optimization algorithm used in linear regression to find the best-fit line for the data. It works by repeatedly adjusting the line's slope and intercept to reduce a cost function: a single number that measures the difference between the actual and predicted values, typically the mean squared error.
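To make the cost function concrete, here is a minimal sketch in plain Python. It assumes the model ŷ = w·x + b (slope w, intercept b) and a mean-squared-error cost with a conventional 1/2 factor. The names `predict` and `cost` and the three-point dataset are hypothetical and chosen only for illustration.

```python
from typing import Sequence


def predict(w: float, b: float, x: float) -> float:
    """Prediction of the line y_hat = w * x + b (slope w, intercept b)."""
    return w * x + b


def cost(w: float, b: float, xs: Sequence[float], ys: Sequence[float]) -> float:
    """Mean squared error cost J(w, b) = 1/(2m) * sum((y_hat_i - y_i)^2).

    The 1/2 factor is an assumed convention that simplifies the gradient;
    it does not change where the minimum is.
    """
    m = len(xs)
    if m == 0 or m != len(ys):
        raise ValueError("xs and ys must be non-empty and the same length")
    total = 0.0
    for x, y in zip(xs, ys):
        error = predict(w, b, x) - y  # predicted minus actual value
        total += error * error
    return total / (2 * m)


xs = [1.0, 2.0, 3.0]
ys = [3.0, 5.0, 7.0]           # toy data generated by y = 2x + 1
print(cost(2.0, 1.0, xs, ys))  # perfect fit → 0.0
print(cost(1.0, 1.0, xs, ys))  # errors -1, -2, -3, so 14 / 6 ≈ 2.33
```

A worse line gives a larger cost, which is exactly the quantity gradient descent tries to drive down.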

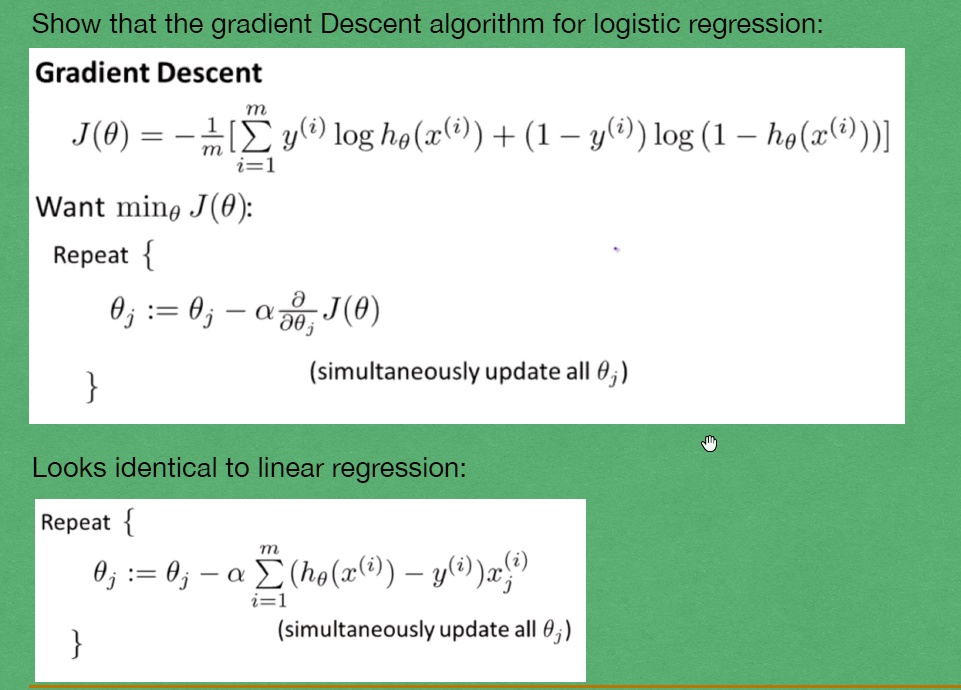

Welcome to part 2 of our linear regression series, where mathematics meets optimization. Here we explore how machines actually learn through the cost function and gradient descent. In this second part, we'll delve deeper into gradient descent, a powerful technique that can help us find the best intercept and optimize our model. We'll explore the math behind it and see how it applies to our linear regression problem: gradient descent iteratively finds the weight (slope) and bias (intercept) that minimize the model's loss. We'll cover how the algorithm works and how to determine that a …

Linear regression is a supervised machine learning algorithm whose predicted output is continuous. It comes in two types: univariate regression, which uses a single input feature, and multivariate regression, which uses several input features (also called multiple linear regression).
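The claim that gradient descent iteratively finds the weight and bias can be written out as a worked derivation. This sketch assumes m training examples, a learning rate α, and a mean-squared-error cost with a 1/2 factor. That is a common convention and may differ from the series' own notation. The last line covers the multivariate case with n features.

```latex
% Univariate model and cost over m examples
\hat{y}^{(i)} = w\,x^{(i)} + b, \qquad
J(w, b) = \frac{1}{2m} \sum_{i=1}^{m} \bigl(\hat{y}^{(i)} - y^{(i)}\bigr)^2

% Partial derivatives (the chain rule's factor 2 cancels the 1/2)
\frac{\partial J}{\partial w} = \frac{1}{m} \sum_{i=1}^{m} \bigl(\hat{y}^{(i)} - y^{(i)}\bigr)\, x^{(i)}, \qquad
\frac{\partial J}{\partial b} = \frac{1}{m} \sum_{i=1}^{m} \bigl(\hat{y}^{(i)} - y^{(i)}\bigr)

% Gradient descent update with learning rate \alpha (both applied simultaneously)
w \leftarrow w - \alpha \frac{\partial J}{\partial w}, \qquad
b \leftarrow b - \alpha \frac{\partial J}{\partial b}

% Multivariate case: \hat{y}^{(i)} = \sum_{j=1}^{n} w_j x_j^{(i)} + b
\frac{\partial J}{\partial w_j} = \frac{1}{m} \sum_{i=1}^{m} \bigl(\hat{y}^{(i)} - y^{(i)}\bigr)\, x_j^{(i)}, \quad j = 1, \dots, n
```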

You will learn how gradient descent works from an intuitive, visual, and mathematical standpoint, and we will apply it to an example dataset in Python. You have most likely heard of gradient descent before, but you may still have questions about how it really works. This article introduces two machine learning fundamentals, cost functions and gradient descent, using linear regression as an illustrative example. Both play a crucial role in training a linear regression model, so let's look at each in detail and implement them in code with a simple example. Gradient descent works by calculating the gradient (the slope) of the cost function with respect to each parameter. It then moves each parameter in the opposite direction of the gradient, by a step equal to the gradient scaled by the learning rate, to reduce the error.
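The procedure described above can be sketched in plain Python with no libraries. This is a minimal illustration, not the original article's code. It assumes a single input feature and a mean-squared-error cost with a 1/2 factor. It also assumes zero initial parameters, plus a fixed learning rate and iteration count picked for this toy data. The function name `gradient_descent` and the dataset are hypothetical.

```python
from typing import List, Sequence, Tuple


def gradient_descent(
    xs: Sequence[float],
    ys: Sequence[float],
    learning_rate: float = 0.05,
    n_iters: int = 5000,
) -> Tuple[float, float, List[float]]:
    """Fit y_hat = w * x + b by batch gradient descent on J = 1/(2m) * sum((y_hat - y)^2).

    Returns the fitted slope w, intercept b, and the cost recorded before each update.
    """
    m = len(xs)
    w, b = 0.0, 0.0  # start from an arbitrary initial line
    history: List[float] = []
    for _ in range(n_iters):
        # Residuals (predicted minus actual) for the current parameters.
        errors = [w * x + b - y for x, y in zip(xs, ys)]
        history.append(sum(e * e for e in errors) / (2 * m))
        # Partial derivatives of J with respect to w and b.
        grad_w = sum(e * x for e, x in zip(errors, xs)) / m
        grad_b = sum(errors) / m
        # Step opposite to the gradient, scaled by the learning rate.
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b, history


xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]  # toy data generated by y = 2x + 1
w, b, history = gradient_descent(xs, ys)
print(f"w = {w:.4f}, b = {b:.4f}")  # approaches w = 2, b = 1
```

Recording `history` makes the learning rate easy to diagnose. If the rate is too large, the updates can overshoot the minimum and the cost grows instead of shrinking.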