Lili Chen Decision Transformer Reinforcement Learning Via Sequence

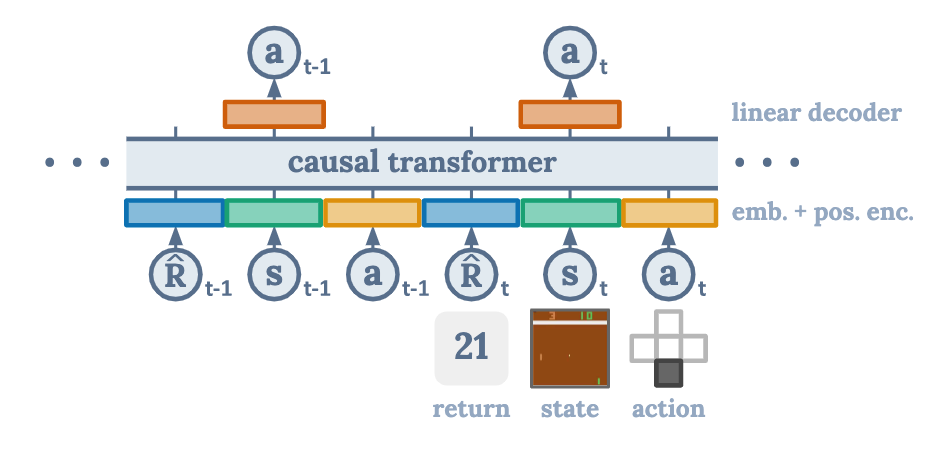

We introduce a framework that abstracts reinforcement learning (RL) as a sequence modeling problem. This allows us to draw upon the simplicity and scalability of the Transformer architecture, and associated advances in language modeling such as GPT-x and BERT.

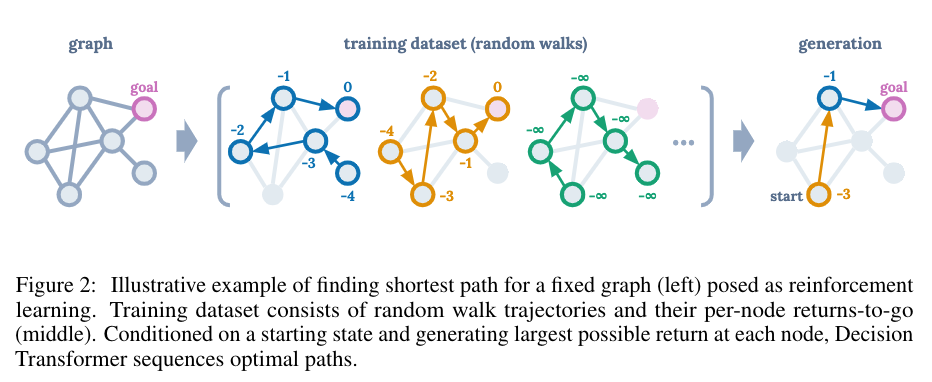

We propose to replace traditional offline RL algorithms with a simple Transformer model trained on sequences of returns, states, and actions with an autoregressive prediction loss. Through experiments spanning a diverse set of offline RL benchmarks including Atari, OpenAI Gym, and Key-to-Door, we show that our Decision Transformer model can learn to generate diverse behaviors by conditioning on desired returns.
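To make "sequences of returns, states, and actions" concrete, here is a minimal sketch of how one offline trajectory might be turned into such a sequence. It assumes the "return" token at each step is the return-to-go (the sum of rewards from that step onward) and that tokens are interleaved in the order return, state, action. The helper names `returns_to_go`, `build_sequence`, and `next_target_return` are hypothetical and chosen for illustration only; no model or training loop is shown.

```python
from typing import Any, List, Sequence, Tuple


def returns_to_go(rewards: Sequence[float]) -> List[float]:
    """Suffix sums of rewards: rtg[t] = rewards[t] + rewards[t+1] + ... (assumed, undiscounted)."""
    rtg = [0.0] * len(rewards)
    running = 0.0
    for t in reversed(range(len(rewards))):
        running += rewards[t]
        rtg[t] = running
    return rtg


def build_sequence(
    states: Sequence[Any],
    actions: Sequence[Any],
    rewards: Sequence[float],
) -> List[Tuple[str, Any]]:
    """Interleave (return-to-go, state, action) tokens for one trajectory."""
    sequence: List[Tuple[str, Any]] = []
    for g, s, a in zip(returns_to_go(rewards), states, actions):
        sequence.extend([("return", g), ("state", s), ("action", a)])
    return sequence


def next_target_return(target: float, reward: float) -> float:
    """At evaluation time, lower the desired return by the reward just received."""
    return target - reward


if __name__ == "__main__":
    for token in build_sequence(["s0", "s1"], ["a0", "a1"], [1.0, 2.0]):
        print(token)
```

Under these assumptions, an autoregressive model would be trained to predict each action token from the tokens before it. Conditioning on a desired return then amounts to choosing the first return token by hand and updating it with `next_target_return` after each environment step.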

Lili Chen, Kevin Lu, Aravind Rajeswaran, Kimin Lee, Aditya Grover
