LightMem: Lightweight, Efficient Memory for LLMs

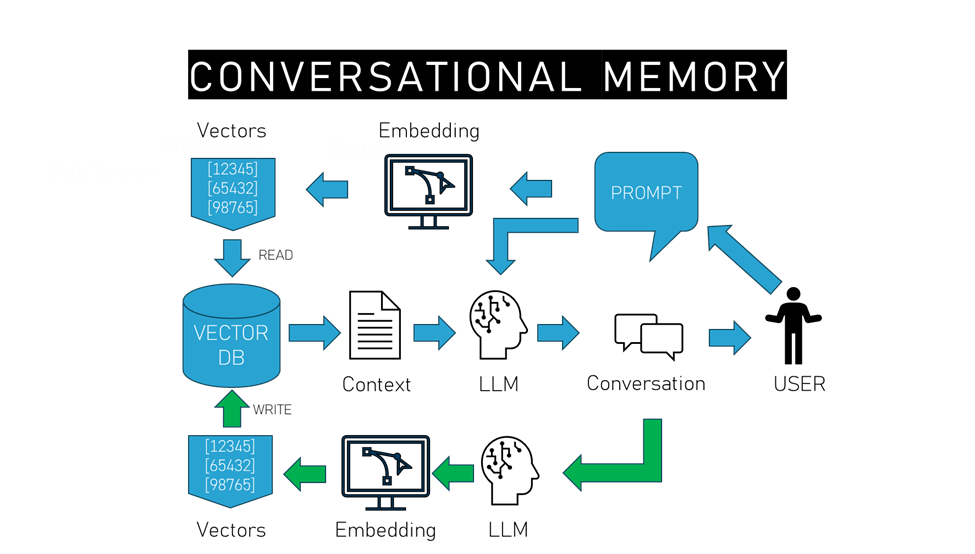

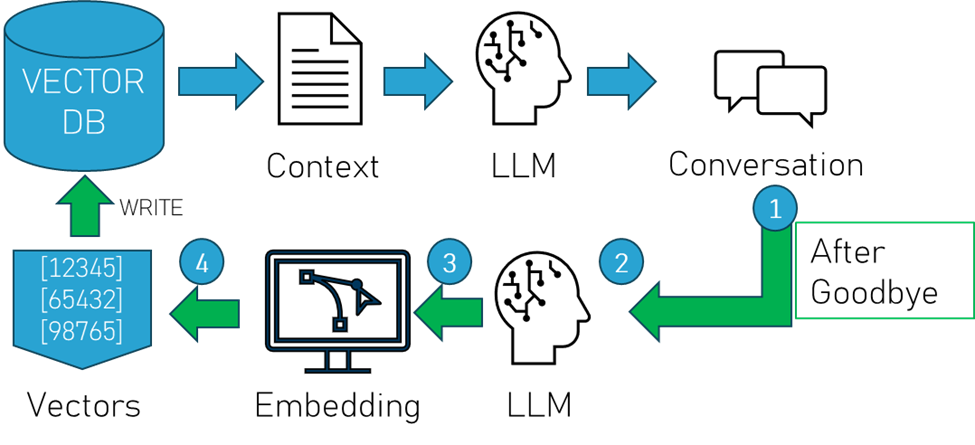

LightMem is a memory system that strikes a balance between the performance and efficiency of memory systems. Inspired by the Atkinson-Shiffrin model of human memory, LightMem organizes memory into three complementary stages. It is a lightweight and efficient memory management framework designed for large language models (LLMs) and AI agents, and it provides a simple yet powerful memory storage, retrieval, and update mechanism to help you quickly build intelligent applications with long-term memory capabilities.
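To make the "storage, retrieval, and update" mechanism concrete, here is a minimal sketch of what such an interface could look like. This is not LightMem's actual API: the `MemoryStore` class, its method names, and the word-overlap scoring are all hypothetical and chosen only for illustration. A real system would likely use embeddings rather than word overlap.

```python
from dataclasses import dataclass, field


@dataclass
class MemoryStore:
    """Toy in-memory store (hypothetical; NOT the LightMem API)."""

    entries: dict[int, str] = field(default_factory=dict)
    next_id: int = 0

    def add(self, text: str) -> int:
        """Store a memory and return its id."""
        entry_id = self.next_id
        self.entries[entry_id] = text
        self.next_id += 1
        return entry_id

    def update(self, entry_id: int, text: str) -> None:
        """Overwrite an existing memory, e.g. when a fact changes."""
        if entry_id not in self.entries:
            raise KeyError(entry_id)
        self.entries[entry_id] = text

    def retrieve(self, query: str, k: int = 3) -> list[str]:
        """Return up to k memories ranked by shared words with the query."""
        query_words = set(query.lower().split())
        scored = [
            (len(query_words & set(text.lower().split())), entry_id, text)
            for entry_id, text in self.entries.items()
        ]
        # Keep only memories that share at least one word with the query.
        scored = [item for item in scored if item[0] > 0]
        # Highest overlap first; ties broken by insertion order.
        scored.sort(key=lambda item: (-item[0], item[1]))
        return [text for _, _, text in scored[:k]]


store = MemoryStore()
tea_id = store.add("User prefers green tea")
store.add("User lives in Berlin")
store.update(tea_id, "User prefers black tea")
results = store.retrieve("which tea does the user like")
```

The retrieved memories would then be prepended to the LLM prompt, so the model sees the updated preference rather than the stale one.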

LightMem, a memory system inspired by human memory, enhances LLMs by efficiently managing historical interaction information, improving accuracy and reducing computational costs. It represents a significant advancement in the field of LLM memory systems, offering an elegant and effective solution to the persistent challenge of using historical interaction information efficiently. It is designed to achieve high reasoning accuracy and large efficiency improvements in long-range, multi-turn interaction scenarios.

LightMem proposes a new, human-inspired memory system for large language model (LLM) agents that keeps long-term context while drastically cutting token usage, API calls, and latency compared with existing memory frameworks. In sum, LightMem offers a thoughtful reimagining of LLM memory that emphasizes staged compression, topic structure, and offline consolidation to reconcile efficiency with performance.

What this means for builders, in terms of immediate opportunities: LightMem's code is available on GitHub, making it accessible for integration into existing LLM applications. The dramatic efficiency gains make memory-augmented LLMs economically viable for production use cases that were previously too expensive.
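The three ideas named above (staged compression, topic structure, and offline consolidation) can be illustrated with a toy pipeline. This sketch only mirrors the general shape of an Atkinson-Shiffrin-style design (sensory filter, short-term buffer, long-term store). It does not reproduce LightMem's implementation, and every name in it (`sensory_filter`, `topic_of`, `StagedMemory`, the stopword list, the keyword-based topics) is a hypothetical stand-in.

```python
from collections import defaultdict

# Toy stopword list standing in for a real compression step (assumption).
STOPWORDS = {"the", "a", "an", "is", "to", "of", "and", "i", "my"}


def sensory_filter(message: str) -> str:
    """Stage 1: cheap compression that drops low-information words."""
    return " ".join(w for w in message.lower().split() if w not in STOPWORDS)


def topic_of(text: str, topics: dict[str, set[str]]) -> str:
    """Pick the topic with the most keyword overlap, or 'misc' if none match."""
    words = set(text.split())
    best = max(topics, key=lambda name: len(words & topics[name]))
    return best if words & topics[best] else "misc"


class StagedMemory:
    def __init__(self, topics: dict[str, set[str]]):
        self.topics = topics
        self.short_term = defaultdict(list)  # Stage 2: topic -> buffered snippets
        self.long_term = {}  # Stage 3: topic -> consolidated snippets

    def observe(self, message: str) -> None:
        """Online path: compress, then buffer under a topic. No LLM call here."""
        compressed = sensory_filter(message)
        self.short_term[topic_of(compressed, self.topics)].append(compressed)

    def consolidate(self) -> None:
        """Offline path: merge buffers into long-term memory, dropping duplicates."""
        for topic, snippets in self.short_term.items():
            merged = self.long_term.get(topic, []) + snippets
            self.long_term[topic] = list(dict.fromkeys(merged))  # order-preserving
        self.short_term.clear()


memory = StagedMemory({"food": {"tea", "coffee"}, "travel": {"berlin", "flight"}})
memory.observe("I like the green tea")
memory.observe("I like the green tea")
memory.observe("My flight to Berlin is booked")
memory.observe("Hello there")
memory.consolidate()
```

The design point this illustrates is that the expensive work (here, merging and deduplication; in a real system, possibly LLM-based summarization) runs in `consolidate`, away from the interactive path, while `observe` stays cheap.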
