Let's Build GPT: From Scratch, in Code, Spelled Out (Transcript)

Read the full transcript of the free video "Let's build GPT: from scratch, in code, spelled out." by Andrej Karpathy, available in 1 language. From the video description: We build a Generatively Pretrained Transformer (GPT), following the paper "Attention Is All You Need" and OpenAI's GPT-2 / GPT-3. We talk about connections to ChatGPT, which has taken the world by storm.

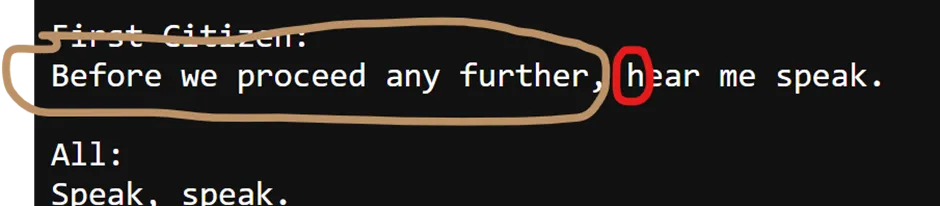

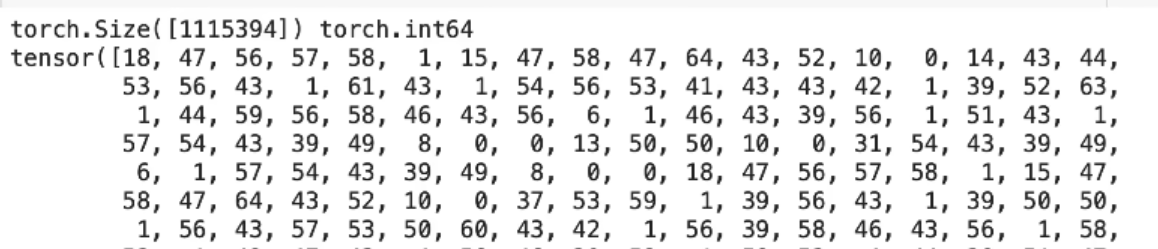

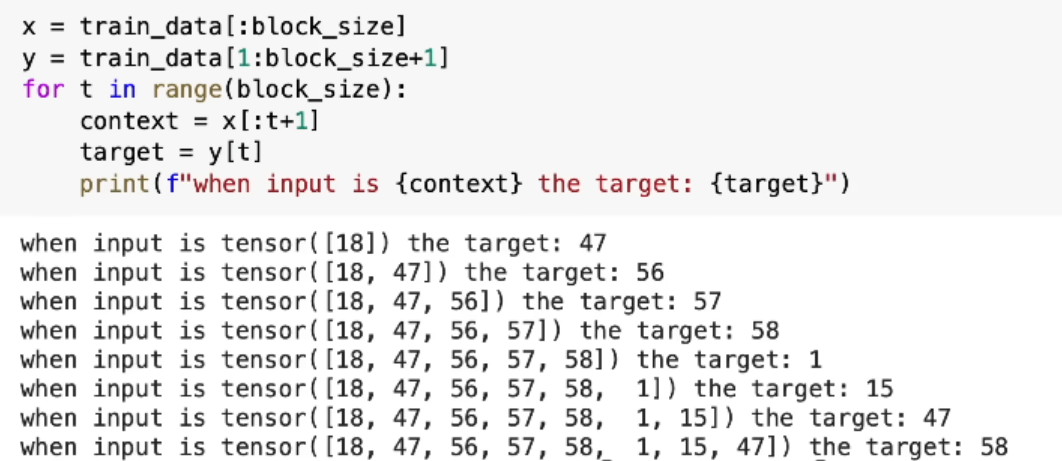

GPT stands for Generative Pretrained Transformer; Transformers do the heavy lifting under the hood. The data used is a subset of Shakespeare. nanoGPT is described as the simplest, fastest repository for training/finetuning medium-sized GPTs; it is a rewrite of minGPT that prioritizes teeth over education.

Andrej Karpathy's "Let's build GPT: from scratch, in code, spelled out" tutorial (code, video) offers a hands-on approach to demystifying one of the most influential architectures in modern AI. It is a step-by-step implementation of a Generatively Pretrained Transformer (GPT) model that demonstrates the core architecture behind systems like ChatGPT.

ChatGPT is a probabilistic system: for any given prompt it can produce multiple different answers. It models a sequence of words (or tokens) and tries to predict the next word to complete the prompt we give it.
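To make the "probabilistic system" idea concrete, here is a minimal sketch of next-word prediction using simple word-pair counts. It is not the tutorial's Transformer code: the toy `corpus` and the helper names `next_word_distribution` and `sample_next` are assumptions made up for illustration. The point is only that the model holds a probability distribution over the next word, so sampling from it can give different continuations for the same prompt.

```python
import random
from collections import Counter, defaultdict

# Toy corpus (hypothetical stand-in for the Shakespeare subset).
corpus = "to be or not to be that is the question".split()

# Count how often each word follows each other word.
follow_counts = defaultdict(Counter)
for prev, nxt in zip(corpus, corpus[1:]):
    follow_counts[prev][nxt] += 1

def next_word_distribution(word):
    """Return {candidate: probability} for the word that follows `word`."""
    counts = follow_counts[word]
    total = sum(counts.values())
    return {w: c / total for w, c in counts.items()}

def sample_next(word, rng):
    """Draw one next word at random, weighted by its probability.

    Assumes `word` was seen with at least one follower in the corpus.
    """
    dist = next_word_distribution(word)
    words = list(dist)
    return rng.choices(words, weights=[dist[w] for w in words], k=1)[0]

rng = random.Random(0)  # fixed seed so runs are repeatable
print(next_word_distribution("be"))  # two candidates, equally likely
print([sample_next("be", rng) for _ in range(5)])  # a mix of the candidates
```

In this toy corpus "be" is followed once by "or" and once by "that", so each gets probability 0.5 and repeated sampling can return either one. A real GPT replaces the count table with a neural network, but the sampling step plays the same role.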

Dive into a comprehensive tutorial on building a Generatively Pretrained Transformer (GPT) from scratch, following the "Attention Is All You Need" paper and OpenAI's GPT-2 / GPT-3 models. Explore the connections to ChatGPT and watch GitHub Copilot assist in writing GPT code.

ChatGPT breaks the prompt (user input) down into a sequence of words and then uses this sequence to predict the next most probable word, or sequence of words, that would complete the prompt.
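The prompt-to-prediction loop described above can be sketched in a few lines. This is a hedged illustration under stated assumptions, not the tutorial's implementation: it tokenizes at the character level, uses a hypothetical toy `text`, and stands in a follower-count table for the neural network. The names `encode`, `decode`, and `generate` are chosen here for illustration.

```python
from collections import Counter, defaultdict

# Hypothetical toy training text (not the tutorial's dataset).
text = "the cat sat on the mat. the cat ate."

# Tokenize: map every distinct character to an integer id and back.
chars = sorted(set(text))
stoi = {ch: i for i, ch in enumerate(chars)}
itos = {i: ch for ch, i in stoi.items()}

def encode(s):
    """Turn a string into a list of token ids."""
    return [stoi[c] for c in s]

def decode(ids):
    """Turn a list of token ids back into a string."""
    return "".join(itos[i] for i in ids)

# Stand-in "model": how often each token id follows each other token id.
counts = defaultdict(Counter)
ids = encode(text)
for a, b in zip(ids, ids[1:]):
    counts[a][b] += 1

def generate(prompt, max_new_tokens):
    """Repeatedly append the most frequent follower of the last token."""
    out = encode(prompt)
    for _ in range(max_new_tokens):
        followers = counts[out[-1]]
        if not followers:
            break  # last token never had a follower in the training text
        # Greedy choice; ties go to the smallest token id for determinism.
        out.append(max(sorted(followers), key=lambda t: followers[t]))
    return decode(out)

print(generate("th", 3))
```

Each step feeds the sequence so far back in and appends one more token, which is the autoregressive pattern a GPT follows. Always picking the single most probable token is the greedy variant; sampling from the distribution instead is what lets the same prompt produce different completions.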
