Lecture 8: The GPT Tokenizer: Byte Pair Encoding

Understanding Byte Pair Encoding (BPE) in Large Language Models

In this lecture, we will learn about byte pair encoding: the tokenizer that powers modern LLMs like GPT-2, GPT-3, and GPT-4.

Hello everyone, welcome to this lecture in the Large Language Models from Scratch series. Today we are going to learn about a very important topic called byte pair encoding. In many lectures or even video content on large language models, when tokenization is covered, the concept of byte pair encoding is rarely...

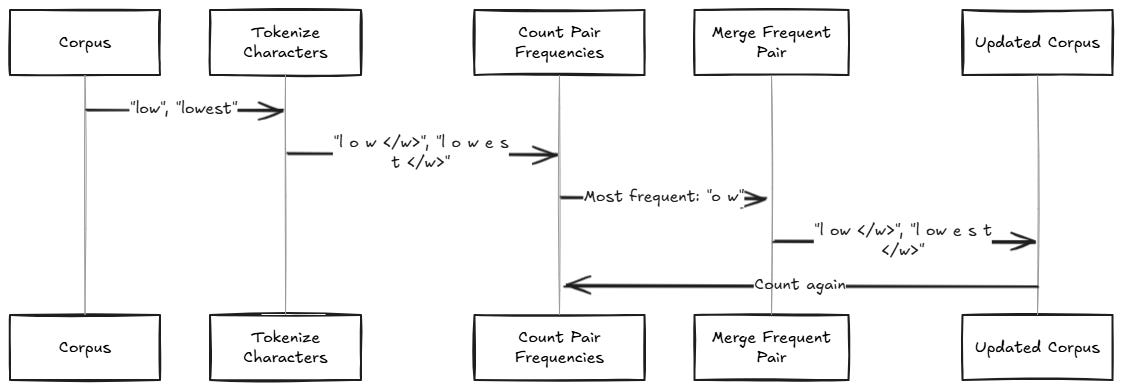

Tokenization: Byte Pair Encoding

Byte pair encoding (BPE) was initially developed as an algorithm to compress text, and was then used by OpenAI for tokenization when pretraining the GPT model. It is used by many transformer models, including GPT, GPT-2, RoBERTa, BART, and DeBERTa.

This is a standalone notebook implementing the popular BPE tokenization algorithm, which is used in models from GPT-2 to GPT-4, Llama 3, and others, from scratch for educational purposes. It includes every step from raw byte-level tokenization to BPE merge training and decoding, providing a clear walkthrough for anyone interested in how modern tokenizers work. Step through the algorithm that powers GPT's tokenizer: merge rules, vocabulary building, and encoding and decoding with Python code.
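The steps named above (raw byte-level tokenization, merge training, encoding, decoding) can be sketched in a few functions. This is a minimal illustration, not the notebook's or OpenAI's actual implementation: the function names, the toy corpus, and the tie-breaking rule (first most-frequent pair encountered) are assumptions made for the example, and production tokenizers also pre-split text with a regex, which is omitted here.

```python
from collections import Counter


def get_pair_counts(ids):
    """Count how often each adjacent pair of token ids occurs."""
    return Counter(zip(ids, ids[1:]))


def merge(ids, pair, new_id):
    """Replace every non-overlapping occurrence of `pair` with `new_id`."""
    out = []
    i = 0
    while i < len(ids):
        if i + 1 < len(ids) and (ids[i], ids[i + 1]) == pair:
            out.append(new_id)
            i += 2
        else:
            out.append(ids[i])
            i += 1
    return out


def train_bpe(text, num_merges):
    """Learn up to `num_merges` merge rules from `text`, starting from raw bytes."""
    ids = list(text.encode("utf-8"))             # base tokens: byte values 0..255
    vocab = {i: bytes([i]) for i in range(256)}  # token id -> byte string
    merges = {}                                  # (id, id) -> new id, in learned order
    for step in range(num_merges):
        counts = get_pair_counts(ids)
        if not counts:                           # fewer than two tokens left
            break
        pair = max(counts, key=counts.get)       # most frequent adjacent pair
        new_id = 256 + step
        ids = merge(ids, pair, new_id)
        merges[pair] = new_id
        vocab[new_id] = vocab[pair[0]] + vocab[pair[1]]
    return merges, vocab


def encode(text, merges):
    """Turn text into token ids by replaying the merges in learned order."""
    ids = list(text.encode("utf-8"))
    for pair, new_id in merges.items():          # dicts preserve insertion order
        ids = merge(ids, pair, new_id)
    return ids


def decode(ids, vocab):
    """Turn token ids back into text by concatenating their byte strings."""
    return b"".join(vocab[i] for i in ids).decode("utf-8", errors="replace")


# Toy corpus (hypothetical) to show the round trip.
corpus = "low lower lowest low low"
merges, vocab = train_bpe(corpus, num_merges=5)
ids = encode(corpus, merges)
print(ids)
print(decode(ids, vocab))
```

Because the base vocabulary covers all 256 byte values, any UTF-8 string can be encoded, including text that never appeared in the training corpus; unseen text simply falls back to more, smaller tokens.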

Code for the Byte Pair Encoding Algorithm Commonly Used in LLMs

GPT-2 used a BPE tokenizer with a vocabulary of 50,257 tokens, and OpenAI's tiktoken is a fast Rust-backed implementation you can use today. Below I explain the why, the how (intuition and algorithm), and a short hands-on demo using tiktoken.

Related lectures in the series: Lecture 6: Stages of building an LLM from scratch; Lecture 7: Code an LLM tokenizer from scratch in Python; Lecture 8: The GPT tokenizer: byte pair encoding; Lecture 9: Creating input-target data pairs using Python DataLoader; Lecture 10: What are token embeddings?
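As a rough sketch of the tiktoken demo mentioned above: the helper names below are hypothetical, and the code assumes the third-party `tiktoken` package is installed and can fetch the GPT-2 vocabulary on first use. The first function only spells out how the 50,257 figure is commonly broken down (256 byte tokens, 50,000 merges, and the `<|endoftext|>` special token).

```python
def gpt2_vocab_size(byte_tokens=256, merges=50_000, special_tokens=1):
    """256 raw byte tokens + 50,000 learned merges + the <|endoftext|> token."""
    return byte_tokens + merges + special_tokens


def tiktoken_roundtrip(text):
    """Encode and decode `text` with tiktoken's GPT-2 encoding.

    Assumes `pip install tiktoken`; the vocabulary files are downloaded
    and cached the first time the encoding is requested.
    """
    import tiktoken  # third-party, imported lazily so the rest runs without it

    enc = tiktoken.get_encoding("gpt2")
    ids = enc.encode(text)               # list of integer token ids
    return ids, enc.decode(ids), enc.n_vocab


print(gpt2_vocab_size())
```

With tiktoken available, `tiktoken_roundtrip("Hello, world!")` should return the token ids, the original string, and a vocabulary size that matches `gpt2_vocab_size()`.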

What Is Byte Pair Encoding (BPE)? Pooja Palod posted on the topic.
