Lecture 4.2: Generalization and Regularization

Deep Learning Basics, Lecture 3: Regularization I

Lecture 4.2, Generalization and Regularization, is available as a PDF file, a text file, or presentation slides. A related copy of the notes is kept in a personal repository of self-studied MOOCs (sakimarquis mooc): MOOC 6.86x, Unit 1 (Linear Classifiers and Generalizations), Lecture 4 (Linear Classification and Generalization), part 3 (Regularization and Generalization).

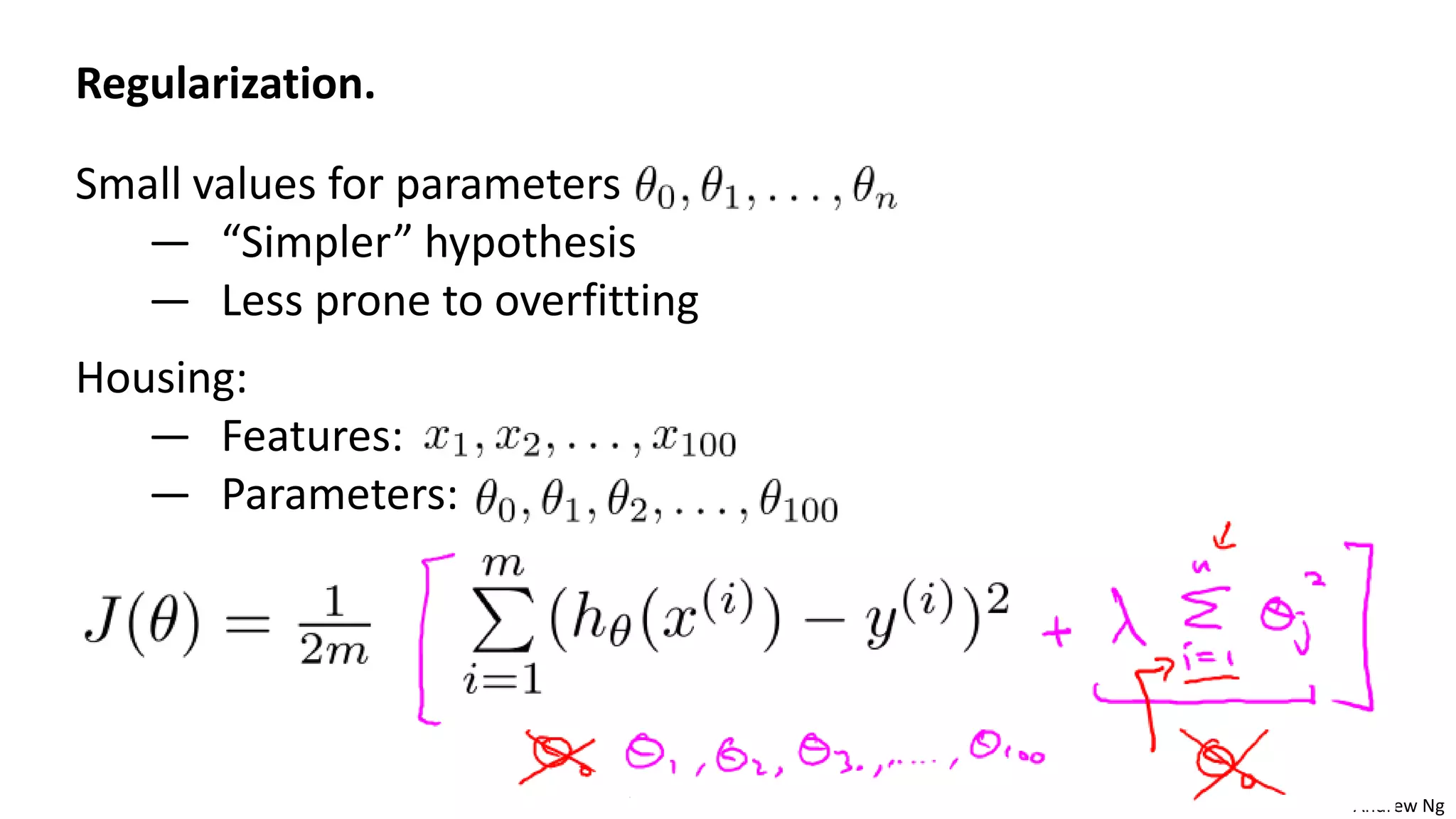

Mastering Linear Regression Regularization Techniques (Course Hero)

This section provides the lecture notes from the course. There are two main types of regularization used in linear regression: the lasso, or L1, penalty (see [1]) and the ridge, or L2, penalty (see [2]). Here we focus on the latter, despite the growing trend in machine learning in favor of the former.

‣ Learning problems can be formulated as optimization problems of the form: loss + regularization.
‣ Linear, large-margin classification, along with many other learning problems, can be solved with stochastic gradient descent algorithms.
‣ A large-margin linear classifier can also be obtained by solving a quadratic program (support vector ...).

• Logistic regression is the default classification decoder (e.g. it is the last layer of neural network classifiers).
• Linear regression is used to explain data or predict continuous variables in a wide range of applications.
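As a minimal sketch of the loss + regularization form with the ridge (L2) penalty, the following Python compares a closed-form ridge solution with plain stochastic gradient descent on the same objective. The scaling of the objective (mean squared error plus `lam` times the squared norm), the function names, the step-size schedule, and the synthetic data are all assumptions made for illustration; none of them come from the lecture notes.

```python
import numpy as np


def ridge_objective(X, y, w, lam):
    """Loss + regularization: mean squared error plus an L2 (ridge) penalty."""
    return np.mean((X @ w - y) ** 2) + lam * np.dot(w, w)


def ridge_closed_form(X, y, lam):
    """Exact minimizer of ridge_objective, from setting its gradient to zero."""
    n, d = X.shape
    return np.linalg.solve(X.T @ X / n + lam * np.eye(d), X.T @ y / n)


def ridge_sgd(X, y, lam, epochs=50, lr=0.01, seed=0):
    """Approximate minimizer via SGD, one example per update."""
    rng = np.random.default_rng(seed)
    n, d = X.shape
    w = np.zeros(d)
    for epoch in range(epochs):
        step = lr / (1.0 + 0.1 * epoch)  # assumed decaying step size
        for i in rng.permutation(n):
            # Gradient of (x_i . w - y_i)^2 + lam * ||w||^2 for one example.
            grad = 2.0 * (X[i] @ w - y[i]) * X[i] + 2.0 * lam * w
            w -= step * grad
    return w


def make_data(n=200, seed=1):
    """Synthetic regression data (hypothetical weights and noise level)."""
    rng = np.random.default_rng(seed)
    w_true = np.array([1.0, -2.0, 0.5, 0.0, 3.0])
    X = rng.normal(size=(n, w_true.size))
    y = X @ w_true + 0.1 * rng.normal(size=n)
    return X, y


if __name__ == "__main__":
    X, y = make_data()
    for lam in (0.0, 0.1, 1.0):
        w = ridge_closed_form(X, y, lam)
        print(f"lam={lam}: ||w||={np.linalg.norm(w):.3f}, "
              f"objective={ridge_objective(X, y, w, lam):.3f}")
    w_sgd = ridge_sgd(X, y, lam=0.1)
    print("SGD objective at lam=0.1:", ridge_objective(X, y, w_sgd, 0.1))
```

Increasing `lam` shrinks the norm of the closed-form solution, which is the intended effect of the ridge penalty. Replacing the squared norm with the sum of absolute values of the weights would give the lasso (L1) penalty, which has no closed-form solution in general.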

Understanding Regularization in Linear Regression (Course Hero)

Artificial Intelligence II (CS4442 & CS9542): Overfitting, Cross-Validation, and Regularization. Boyu Wang, Department of Computer Science, University of Western Ontario.

Lecture 4: Regularization and Bayesian Statistics. Feng Li, Shandong University, September 20, 2023.

A linear h is the non-realizable case (restricted h or missing inputs) and suffers additional structural error; a high-degree polynomial h is realizable but redundant, and learns slowly. CMU School of Computer Science.
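The contrast between a linear h and a high-degree polynomial h can be illustrated with a small experiment. This is only a sketch under stated assumptions: the quadratic target, the noise level, the degrees 1 and 9, and the helper name `heldout_error` are all hypothetical choices, not taken from the source slides.

```python
import numpy as np


def target(x):
    """Assumed true function: a quadratic, so any degree >= 2 can represent it."""
    return x ** 2


def heldout_error(degree, n_train, noise=0.1, seed=0):
    """Fit a polynomial by least squares; return MSE against the noiseless target."""
    rng = np.random.default_rng(seed)
    x = rng.uniform(-1.0, 1.0, size=n_train)
    y = target(x) + noise * rng.normal(size=n_train)
    coeffs = np.polyfit(x, y, degree)  # least-squares polynomial fit
    grid = np.linspace(-1.0, 1.0, 2001)  # dense evaluation grid
    return np.mean((np.polyval(coeffs, grid) - target(grid)) ** 2)


if __name__ == "__main__":
    for n in (15, 100, 1000):
        print(f"n={n}: degree 1 error={heldout_error(1, n):.4f}, "
              f"degree 9 error={heldout_error(9, n):.4f}")
```

Under these assumptions, the degree-1 fit keeps a structural error no matter how much data it sees, because no line matches a quadratic. The degree-9 fit can represent the target but has many redundant coefficients, so its error is typically large on small samples and only falls as the training set grows.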

Notes on Regularization for Linear Regression (PDF)

Understanding Regularization in Linear Models

Machine Learning, Lecture 6: Regularization (PPTX)
