Lecture 3 Optimization For Machine Learning

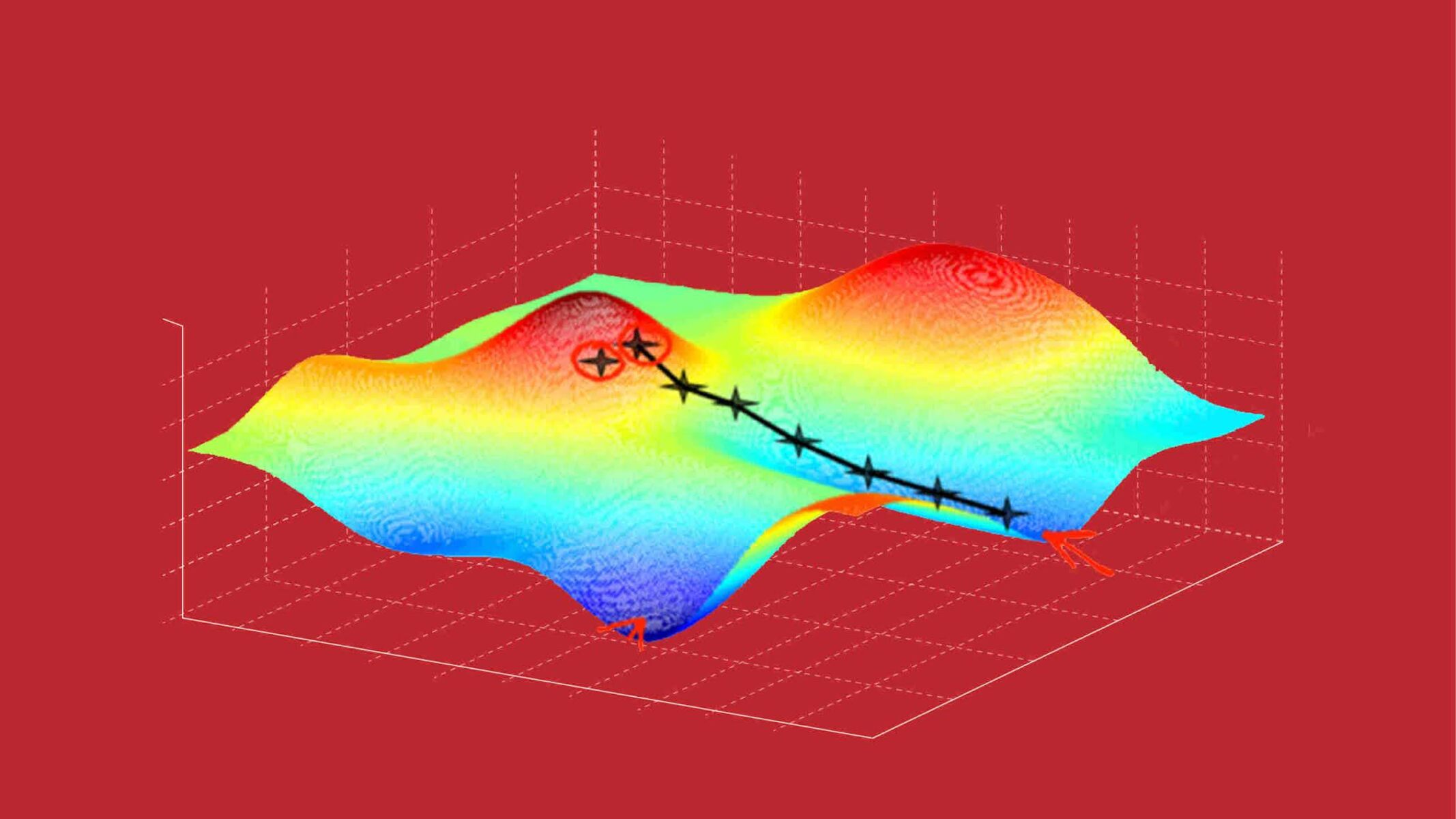

Below you can find slides and lecture notes. For a convex function, optimality can be characterised locally: any local minimiser is also a global minimiser. The theory and algorithms for convex optimisation have been developed since the 1950s. The methods that matter most for machine learning are those that can be implemented at scale.
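For a differentiable convex function, a point where the gradient vanishes is a global minimiser, so a purely local test certifies optimality. The following is a minimal sketch that assumes a hand-picked convex quadratic and a fixed step size of 0.1; both are illustrative choices and do not come from the lecture. Gradient descent, which only ever uses local information, reaches the same minimiser from every starting point.

```python
def f(x, y):
    """Illustrative convex quadratic with unique minimiser (1, -3)."""
    return (x - 1.0) ** 2 + 2.0 * (y + 3.0) ** 2


def grad_f(x, y):
    """Gradient of f; it is zero only at the global minimiser."""
    return 2.0 * (x - 1.0), 4.0 * (y + 3.0)


def gradient_descent(x, y, step=0.1, iters=200):
    """Plain gradient descent with a fixed (assumed) step size."""
    for _ in range(iters):
        gx, gy = grad_f(x, y)
        x, y = x - step * gx, y - step * gy
    return x, y


# Different starting points all end at the same point, where the gradient vanishes.
starts = [(10.0, 10.0), (-5.0, 0.0), (0.0, -20.0)]
solutions = [gradient_descent(x0, y0) for x0, y0 in starts]
for x, y in solutions:
    gx, gy = grad_f(x, y)
    print(f"x={x:.6f}, y={y:.6f}, |grad|={abs(gx) + abs(gy):.2e}, f={f(x, y):.2e}")
```

For a non-convex function, the same procedure could stop at different stationary points depending on where it starts. For this reason, the local test is only a certificate of global optimality in the convex case.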

Lecture 1: introduction to optimization for machine learning. Lecture notes: the text above; notes: chapters 1 and 2 of the text. Lecture 2: basic concepts in analysis and optimization, including the gradient.

This course gives an overview of modern mathematical optimization methods for applications in machine learning and data science. In particular, it discusses the scalability of algorithms to large datasets, both in theory and in implementation. The aim of these courses is to provide mathematical optimization concepts that are useful in the design and analysis of methods for learning from (large sets of) data.

Boosting refers to general methods for converting rough rules of thumb (weak classifiers) into a highly accurate prediction rule. Each classifier depends on the previous one and focuses on the errors the previous one made.
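AdaBoost is one standard boosting algorithm and is used here to make the boosting idea concrete; the text itself does not name a specific method. This is a minimal sketch that assumes one-dimensional decision stumps as the weak classifiers and a hand-made toy dataset. In each round, a stump is fitted to the current point weights. The points that stump misclassifies are then up-weighted, so the next stump concentrates on the previous one's errors.

```python
import math


def stump_predict(x, threshold, polarity):
    """Weak classifier: predict `polarity` right of the threshold, -polarity left of it."""
    return polarity if x > threshold else -polarity


def best_stump(xs, ys, weights):
    """Return (weighted_error, threshold, polarity) of the lowest-error stump."""
    thresholds = [x - 0.5 for x in sorted(set(xs))] + [max(xs) + 0.5]
    best = None
    for t in thresholds:
        for p in (1, -1):
            err = sum(w for w, x, y in zip(weights, xs, ys) if stump_predict(x, t, p) != y)
            if best is None or err < best[0]:
                best = (err, t, p)
    return best


def adaboost(xs, ys, n_rounds):
    """Return a list of (alpha, threshold, polarity) triples."""
    n = len(xs)
    weights = [1.0 / n] * n
    ensemble = []
    for _ in range(n_rounds):
        err, t, p = best_stump(xs, ys, weights)
        err = max(err, 1e-12)  # guard against division by zero for a perfect stump
        alpha = 0.5 * math.log((1.0 - err) / err)
        ensemble.append((alpha, t, p))
        # Misclassified points (y * h = -1) gain weight; correct ones lose weight.
        weights = [w * math.exp(-alpha * y * stump_predict(x, t, p))
                   for w, x, y in zip(weights, xs, ys)]
        total = sum(weights)
        weights = [w / total for w in weights]
    return ensemble


def predict(ensemble, x):
    """Sign of the alpha-weighted vote of all stumps."""
    score = sum(alpha * stump_predict(x, t, p) for alpha, t, p in ensemble)
    return 1 if score > 0 else -1


# Toy 1-D data: the positive class is an interval, so no single stump can fit it.
xs = list(range(10))
ys = [-1, -1, -1, 1, 1, 1, 1, -1, -1, -1]

single_stump_error = best_stump(xs, ys, [0.1] * 10)[0]
ensemble = adaboost(xs, ys, n_rounds=3)
train_errors = sum(predict(ensemble, x) != y for x, y in zip(xs, ys))
print(single_stump_error, train_errors)
```

On this toy data, the best single stump misclassifies 30% of the points. The three-stump weighted vote classifies all of them correctly: the third round picks a constant stump that corrects the points the first two stumps disagreed on.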

Lecture 3: optimization. Notes, slides, source code, and video.

A stochastic gradient method with an exponential convergence rate for strongly convex optimization with finite training sets. In Advances in Neural Information Processing Systems (NIPS), 2012.

Lecture notes on optimization for machine learning, derived from a course at Princeton University and tutorials given at MLSS, Buenos Aires, as well as at the Simons Foundation, Berkeley.

L. N. Vicente, S. Gratton, R. Garmanjani, and T. Giovannelli, Concise Lecture Notes on Optimization Methods for Machine Learning and Data Science, ISE Department, Lehigh University, April 2024.
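The 2012 NIPS reference above describes the method known as the stochastic average gradient (SAG). SAG stores the most recent gradient of every training example and steps along their average. Each iteration evaluates only one example's gradient, yet the search direction uses information from the whole finite training set. This is a minimal sketch under several assumptions: a scalar least-squares problem, uniform random sampling, stored gradients initialised to zero, and a conservative step size of 1/(16 L). The data, step size, and iteration count are illustrative choices and are not taken from the paper.

```python
import random


def sag_least_squares(a, b, step, iters, seed=0):
    """SAG for f(w) = (1/n) * sum_i 0.5 * (a[i] * w - b[i])**2 with a scalar w."""
    rng = random.Random(seed)
    n = len(a)
    w = 0.0
    memory = [0.0] * n  # last gradient computed for each example
    grad_sum = 0.0      # running sum of the stored gradients
    for _ in range(iters):
        i = rng.randrange(n)
        g_i = a[i] * (a[i] * w - b[i])  # fresh gradient of example i only
        grad_sum += g_i - memory[i]     # swap out example i's stale gradient
        memory[i] = g_i
        w -= step * grad_sum / n        # step along the average stored gradient
    return w


a = [1.0, 2.0, 3.0, 4.0]
b = [2.1, 3.9, 6.2, 7.8]
L = max(ai * ai for ai in a)  # largest per-example smoothness constant
w_sag = sag_least_squares(a, b, step=1.0 / (16 * L), iters=5000)
# Closed-form least-squares solution, for comparison.
w_star = sum(ai * bi for ai, bi in zip(a, b)) / sum(ai * ai for ai in a)
print(w_sag, w_star)
```

Plain stochastic gradient descent would need a decaying step size to converge, because a single example's gradient does not vanish at the optimum. SAG's averaged direction does vanish there, which is what allows a constant step size and a linear ("exponential") convergence rate on strongly convex finite sums.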

