Lecture 1: Introduction to Task-Based Parallelism

This is the first lecture from the course "Task-based parallelism in scientific computing", which was given on 2021-05-10 by HPC2N and SNIC. The purpose of the course is to learn when a code could benefit from task-based parallelism and how to apply it. A task-based algorithm comprises a set of self-contained tasks that have well-defined inputs and outputs.
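To make "self-contained tasks with well-defined inputs and outputs" concrete, here is a minimal sketch using Python's standard `concurrent.futures` module. The functions `square`, `add`, and `run_tasks` are hypothetical names chosen for illustration and do not come from the course material. Each task is a plain function that touches only its arguments, and dependencies are expressed by passing one task's output to another.

```python
from concurrent.futures import ThreadPoolExecutor


def square(x):
    """Self-contained task: one input, one output, no shared state."""
    return x * x


def add(a, b):
    """Task whose inputs are the outputs of two earlier tasks."""
    return a + b


def run_tasks():
    with ThreadPoolExecutor(max_workers=2) as pool:
        # The two square tasks are independent, so the pool may run them
        # at the same time.
        fut_a = pool.submit(square, 3)
        fut_b = pool.submit(square, 4)
        # add depends on both outputs; result() waits for each dependency.
        fut_c = pool.submit(add, fut_a.result(), fut_b.result())
        return fut_c.result()


if __name__ == "__main__":
    print(run_tasks())
```

Because the tasks share no state, the scheduler is free to run them in any order that respects the data dependencies. In standard CPython, threads illustrate the structure rather than deliver a speedup for CPU-bound work like this.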

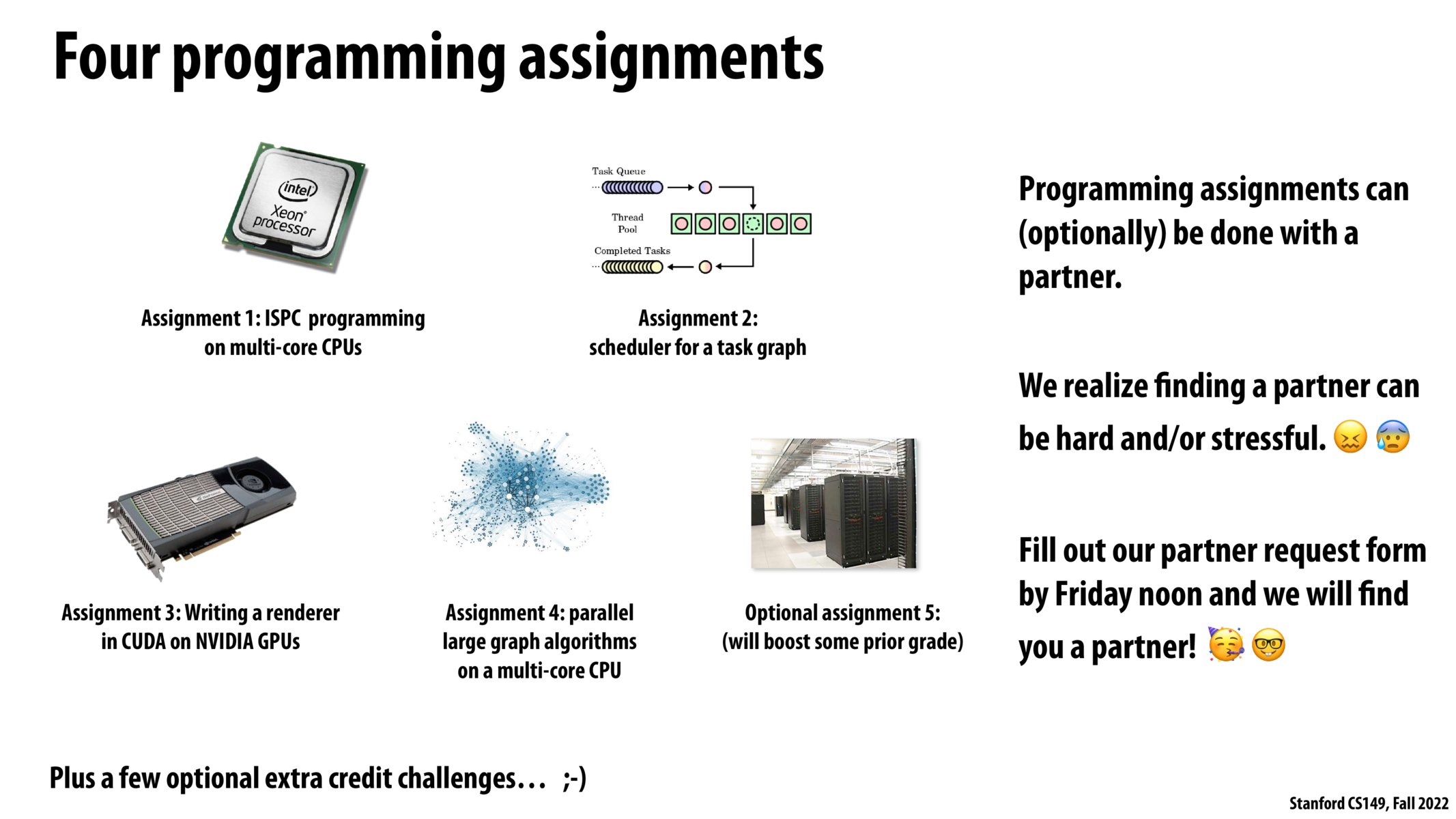

Lecture #1 covers the fundamentals of parallel processing, emphasizing its importance in enhancing computational efficiency through simultaneous task execution. In the next set of slides, I will attempt to place you in the context of the broader computation space called task-level parallelism. Of course, a proper treatment of parallel or distributed computing is worthy of an entire semester (or two) of study.

Goals: learn how to program heterogeneous parallel computing systems and achieve high performance and energy efficiency, functionality and maintainability, scalability across future generations, and portability across vendor devices.

The tutorial begins with a discussion of parallel computing, what it is and how it is used, followed by the concepts and terminology associated with it. The topics of parallel memory architectures and programming models are then explored.

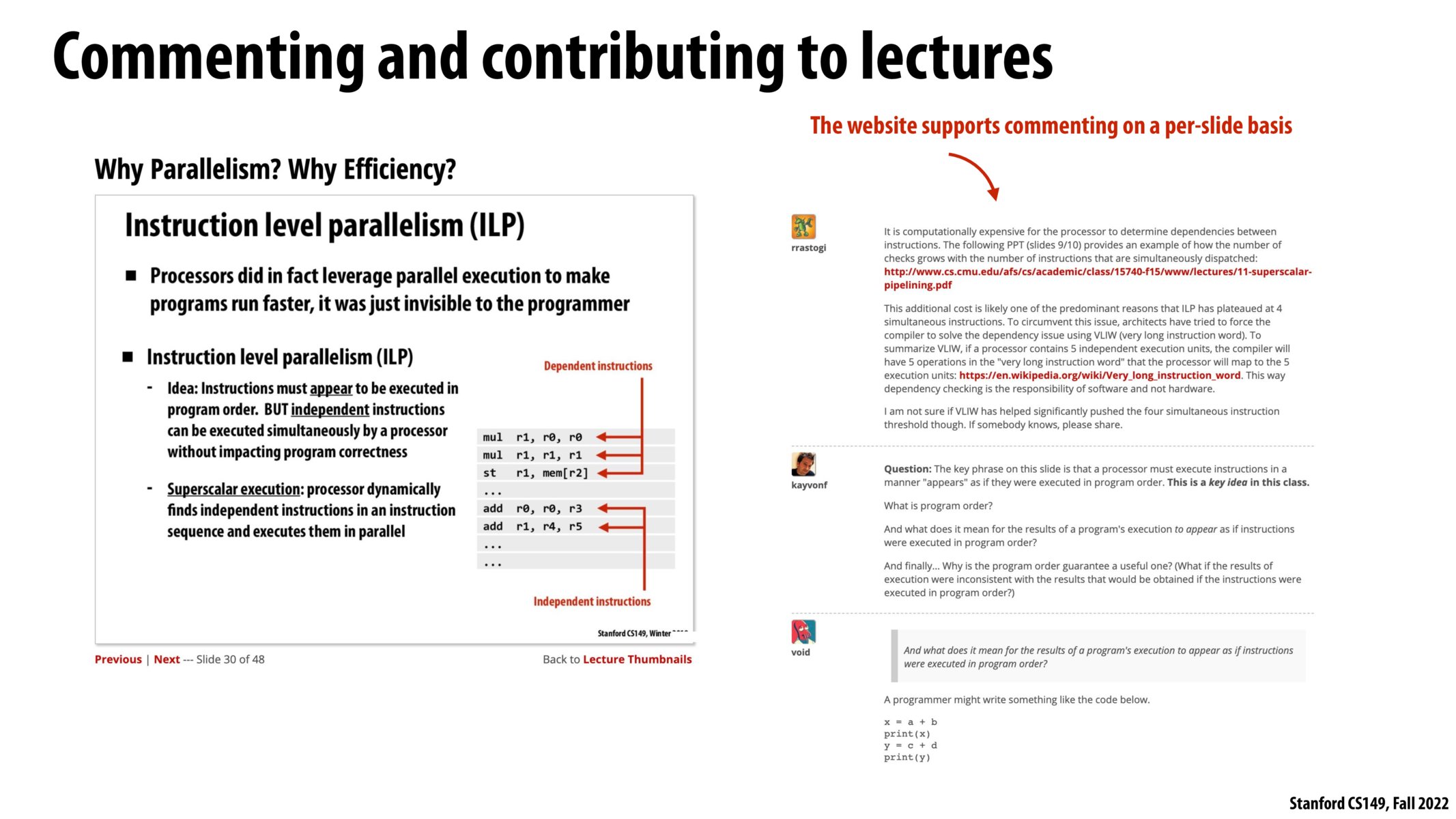

Assume for simplicity that each task takes 1 unit of time. Identify the longest chain of dependences in the task graph and give a good lower bound for the parallel execution time.

Parallel clusters can be built from cheap, commodity components. They also provide concurrency: a single compute resource can only do one thing at a time, whereas multiple computing resources can be doing many things simultaneously. Embarrassingly parallel problems consist of many similar but independent tasks that are solved simultaneously and require very little communication.

Distinguish between parallelism (improving performance by exploiting multiple processors) and concurrency (managing simultaneous access to shared resources). Explain and justify the task-based (vs. thread-based) approach to parallelism.

Idea #1: superscalar execution. The processor automatically finds independent instructions in an instruction sequence and executes them in parallel on multiple execution units.
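The exercise on the longest chain of dependences can be sketched in a few lines of Python. The task graph `DEPS` below is a hypothetical example, not the graph from the lecture slides, and the function names are likewise made up for illustration. With unit-time tasks, the length of the longest chain is a lower bound on the parallel execution time no matter how many workers are available. The sketch also combines it with the standard work bound (total tasks divided by the number of workers), which is an addition beyond what the exercise asks for.

```python
from functools import lru_cache
from math import ceil

# Hypothetical task graph: each key is a task, each value lists the
# tasks it depends on. Every task is assumed to take 1 unit of time.
DEPS = {
    "a": [],
    "b": ["a"],
    "c": ["a"],
    "d": ["b", "c"],
    "e": ["d"],
    "f": [],
}


def critical_path_length(deps):
    """Number of tasks on the longest dependence chain."""

    @lru_cache(maxsize=None)
    def depth(task):
        # A task finishes one time unit after its slowest dependency.
        return 1 + max((depth(d) for d in deps[task]), default=0)

    return max(depth(t) for t in deps)


def lower_bound(deps, workers):
    """Lower bound on run time: max(longest chain, ceil(work / workers))."""
    work = len(deps)
    span = critical_path_length(deps)
    return max(span, ceil(work / workers))


if __name__ == "__main__":
    print(critical_path_length(DEPS))
    print(lower_bound(DEPS, workers=2))
```

In this example graph the longest chain is a, b, d, e, so adding workers beyond the point where the work bound drops below the chain length cannot shorten the run.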



