Least Squares Temporal Difference

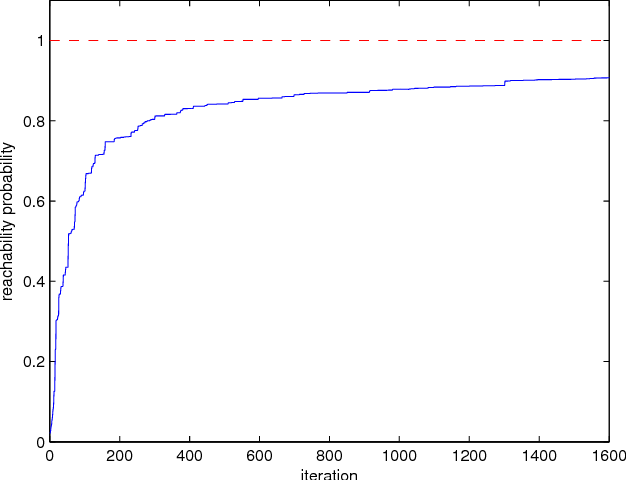

Least Squares Temporal Difference Actor Critic Methods With

We investigate the sample complexities required to guarantee a predefined estimation error of the best linear coefficients for two widely used policy evaluation algorithms: the temporal …

In this paper, we have presented a least squares temporal difference (LSTD) based method called "multi-trajectory greedy LSTD" (MG-LSTD). It is an exploration-enhanced recursive LSTD algorithm with the policy improvement embedded within the LSTD algorithm iterations.

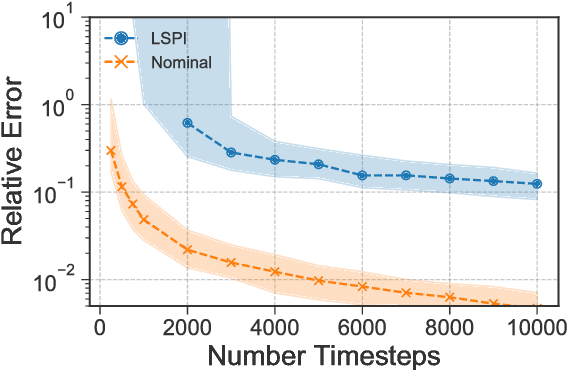

Least Squares Temporal Difference Learning For The Linear Quadratic

For the case of linear value function approximations and λ = 0, the least-squares TD (LSTD) algorithm of Bradtke and Barto (1996, Machine Learning, 22:1–3, 33–57) eliminates all stepsize parameters and improves data efficiency. This paper updates Bradtke and Barto's work in three significant ways.

Section 4 below presents experimental results comparing the data efficiency of gradient-based and least-squares-based TD learning.

In this paper, we shed light on this question by focusing on the classic least squares temporal difference (LSTD) estimator (Boyan, 1999; Bradtke & Barto, 1996).

In this study, we propose a novel policy evaluation (PE) algorithm called least squares truncated temporal difference learning (LST2D), which utilises linear TD and LSTD to approximate the value function of a given policy with an adaptive truncation mechanism.
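As a rough illustration of the classic LSTD estimator with λ = 0 mentioned above, the following minimal sketch accumulates the usual LSTD statistics from a batch of transitions and solves one linear system, so no stepsize appears anywhere. The function name `lstd`, the small `ridge` term, and the three-state toy chain are assumptions made for this example; none of them comes from the papers quoted here.

```python
import numpy as np

def lstd(phi, rewards, phi_next, gamma=0.9, ridge=1e-8):
    """Batch LSTD(0) sketch (hypothetical helper, not from the quoted papers).

    phi, phi_next: (T, d) feature matrices for s_t and s_{t+1}.
    rewards:       (T,) rewards r_t.
    Returns the weight vector theta solving A theta = b.
    """
    phi = np.asarray(phi, dtype=float)
    phi_next = np.asarray(phi_next, dtype=float)
    rewards = np.asarray(rewards, dtype=float)
    # A = sum_t phi_t (phi_t - gamma * phi_{t+1})^T,  b = sum_t phi_t r_t
    A = phi.T @ (phi - gamma * phi_next)
    b = phi.T @ rewards
    # Assumption: a tiny ridge term keeps A invertible on sparse data.
    A += ridge * np.eye(A.shape[0])
    return np.linalg.solve(A, b)

# Toy deterministic cycle 0 -> 1 -> 2 -> 0 with one-hot (tabular) features.
# Only the transition out of state 0 is rewarded.
features = np.eye(3)
phi = features[[0, 1, 2]]        # features of the visited states
phi_next = features[[1, 2, 0]]   # features of their successors
rewards = np.array([1.0, 0.0, 0.0])

theta = lstd(phi, rewards, phi_next, gamma=0.5)
print(theta)  # with one-hot features, theta approximates the state values
```

With tabular features and one sample per state, the system reduces to the Bellman equation of the chain, which makes the result easy to check by hand.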

Temporal difference (TD) and least squares temporal difference (LSTD) are related methods to estimate the value function of a Markov decision process (MDP). While TD is a direct method using local data to update the value function estimate, LSTD is a Bellman projected equation method using full data to compute a one-time estimate.

In this paper, we present a novel kernel-based least squares temporal difference (TD) learning algorithm called KLS-TD(λ), which can be viewed as the kernel version or nonlinear form of the previous linear LS-TD(λ) algorithms.
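The contrast between TD as a local, incremental update and LSTD as a one-time solve can be written out for the λ = 0 case with linear features. The notation here is an assumption chosen for illustration: φ(s) is a feature vector, γ the discount factor, α_t a stepsize, and r_t the reward observed on the transition from s_t to s_{t+1}.

```latex
% TD(0): one stochastic update per observed transition; needs a stepsize \alpha_t
\theta_{t+1} = \theta_t + \alpha_t \,\bigl(r_t + \gamma\,\phi(s_{t+1})^\top \theta_t - \phi(s_t)^\top \theta_t\bigr)\,\phi(s_t)

% LSTD(0): accumulate statistics over all T transitions, then solve once
A = \sum_{t=1}^{T} \phi(s_t)\,\bigl(\phi(s_t) - \gamma\,\phi(s_{t+1})\bigr)^\top, \qquad
b = \sum_{t=1}^{T} \phi(s_t)\, r_t, \qquad
\hat\theta = A^{-1} b
```

Setting the summed TD(0) update direction to zero gives b − Aθ = 0, so the LSTD solution is the point at which the TD updates over the batch cancel out.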

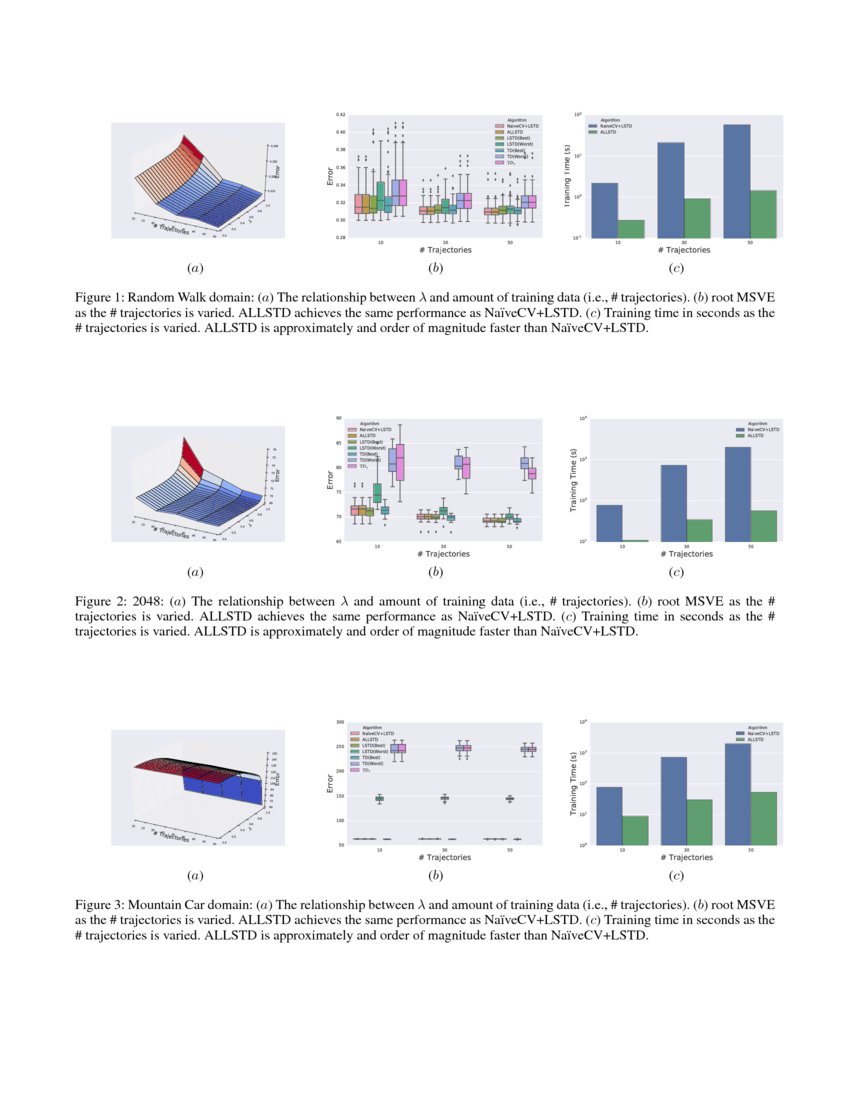

Adaptive Lambda Least Squares Temporal Difference Learning (DeepAI)

… Validation (LOTO-CV) to search the space of λ values. Unfortunately, this approach is too computationally expensive for most practical applications. For least squares TD (LSTD) we show that LOTO-CV can be implemented efficiently to automatically tune λ and apply function optimization …

Incremental Least Squares Temporal Difference Learning

ELM (extreme learning machine) works by randomly assigning the weights of the hidden layer and optimizing only the output-layer weights through least squares. This procedure can be seen as a mapping of the inputs to a feature space defined by the hidden nodes, followed by computing the weights that linearly combine the features.
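The ELM procedure described above (random hidden layer, least-squares output layer) can be sketched in a few lines of NumPy. This is only an illustrative regression example under stated assumptions: the names `fit_elm` and `predict_elm`, the tanh activation, the 50 hidden units, and the sine target are all choices made here, not details taken from the quoted paper.

```python
import numpy as np

def fit_elm(X, y, n_hidden=50, seed=0):
    """Fit an ELM-style regressor (hypothetical helper for illustration)."""
    rng = np.random.default_rng(seed)
    # Hidden-layer weights and biases are drawn at random and never trained.
    W = rng.normal(size=(X.shape[1], n_hidden))
    bias = rng.normal(size=n_hidden)
    # Map the inputs into the feature space defined by the hidden nodes.
    H = np.tanh(X @ W + bias)
    # Only the output weights are optimized, via ordinary least squares.
    beta, *_ = np.linalg.lstsq(H, y, rcond=None)
    return W, bias, beta

def predict_elm(X, W, bias, beta):
    """Linear combination of the random hidden features."""
    return np.tanh(X @ W + bias) @ beta

# Toy 1-D regression problem (assumed for the example).
X = np.linspace(-1.0, 1.0, 200).reshape(-1, 1)
y = np.sin(3.0 * X[:, 0])

W, bias, beta = fit_elm(X, y)
mse = np.mean((predict_elm(X, W, bias, beta) - y) ** 2)
print(f"training MSE: {mse:.2e}")
```

In an LSTD setting the same random hidden layer would play the role of the feature map, with the least-squares step replaced by the LSTD solve; that combination is not shown here.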
