Learning Vector Quantization Geeksforgeeks

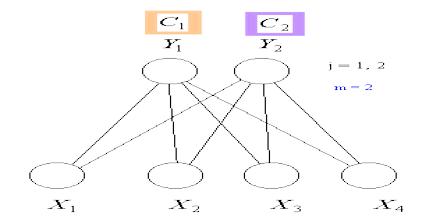

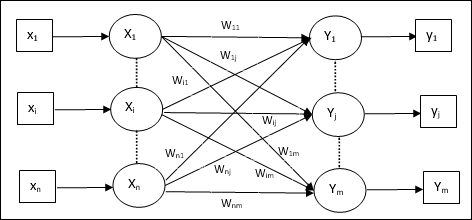

Learning vector quantization (LVQ) is a type of artificial neural network inspired by how the brain processes information. It is a supervised classification algorithm that uses a prototype-based approach. Unlike vector quantization (VQ) and Kohonen self-organizing maps (KSOM), which are unsupervised, LVQ is a competitive network trained with supervised learning. It can be described as a process of classifying patterns in which each output unit represents a class.
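As a minimal sketch of the idea that each output unit represents a class, the Python below classifies an input by finding the nearest prototype (the winner of the competition) and returning that prototype's class label. The prototype coordinates and labels are hypothetical, hand-picked values, and Euclidean distance is an assumption; LVQ variants may use other metrics.

```python
import math

def classify(x, prototypes, labels):
    """Return the class label of the prototype closest to x.

    The closest prototype 'wins' the competition, and its class label
    becomes the prediction (Euclidean distance is assumed here).
    """
    winner = min(range(len(prototypes)), key=lambda i: math.dist(x, prototypes[i]))
    return labels[winner]

# Hypothetical, hand-picked prototypes: two for class "A", one for class "B".
prototypes = [[0.0, 0.0], [1.0, 0.0], [5.0, 5.0]]
labels = ["A", "A", "B"]

print(classify([0.2, 0.1], prototypes, labels))  # A
print(classify([4.0, 4.5], prototypes, labels))  # B
```

A class may own several prototypes, as class "A" does here; the decision regions are then the union of the Voronoi cells of that class's prototypes.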

In computer science, learning vector quantization is a prototype-based supervised classification algorithm; it is the supervised counterpart of vector quantization systems. By mapping input data points to prototype vectors that represent the various classes, LVQ produces an intuitive and interpretable representation of the data distribution.

To understand vector quantization, it helps to first grasp the basics of different data types, particularly how quantization reduces data size. Floating-point numbers represent real numbers in computing and can express an extensive range of values with varying precision.
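To illustrate how quantization reduces data size, the sketch below maps floating-point values to signed 8-bit integer codes plus a single scale factor. This is scalar (per-value) quantization rather than vector quantization, and the symmetric per-tensor scheme with codes in the range -127 to 127 is an assumption chosen for simplicity; the function names are hypothetical.

```python
from array import array

def quantize_int8(values):
    """Symmetric per-tensor quantization of floats to integers in [-127, 127].

    Returns the integer codes and the scale that maps them back to floats.
    """
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Round to the nearest quantization step and clip to the signed 8-bit range.
    codes = [max(-127, min(127, round(v / scale))) for v in values]
    return codes, scale

def dequantize_int8(codes, scale):
    """Map integer codes back to approximate floating-point values."""
    return [c * scale for c in codes]

weights = [0.5, -1.27, 0.003, 1.0]
codes, scale = quantize_int8(weights)
packed = array("b", codes)  # one signed byte per value
restored = dequantize_int8(codes, scale)
print(codes)     # [50, -127, 0, 100]
print(restored)  # approximately [0.5, -1.27, 0.0, 1.0]
```

Each code occupies one byte instead of the four bytes of a 32-bit float, at the cost of a rounding error of at most half the scale per value (0.003 collapses to 0 here).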

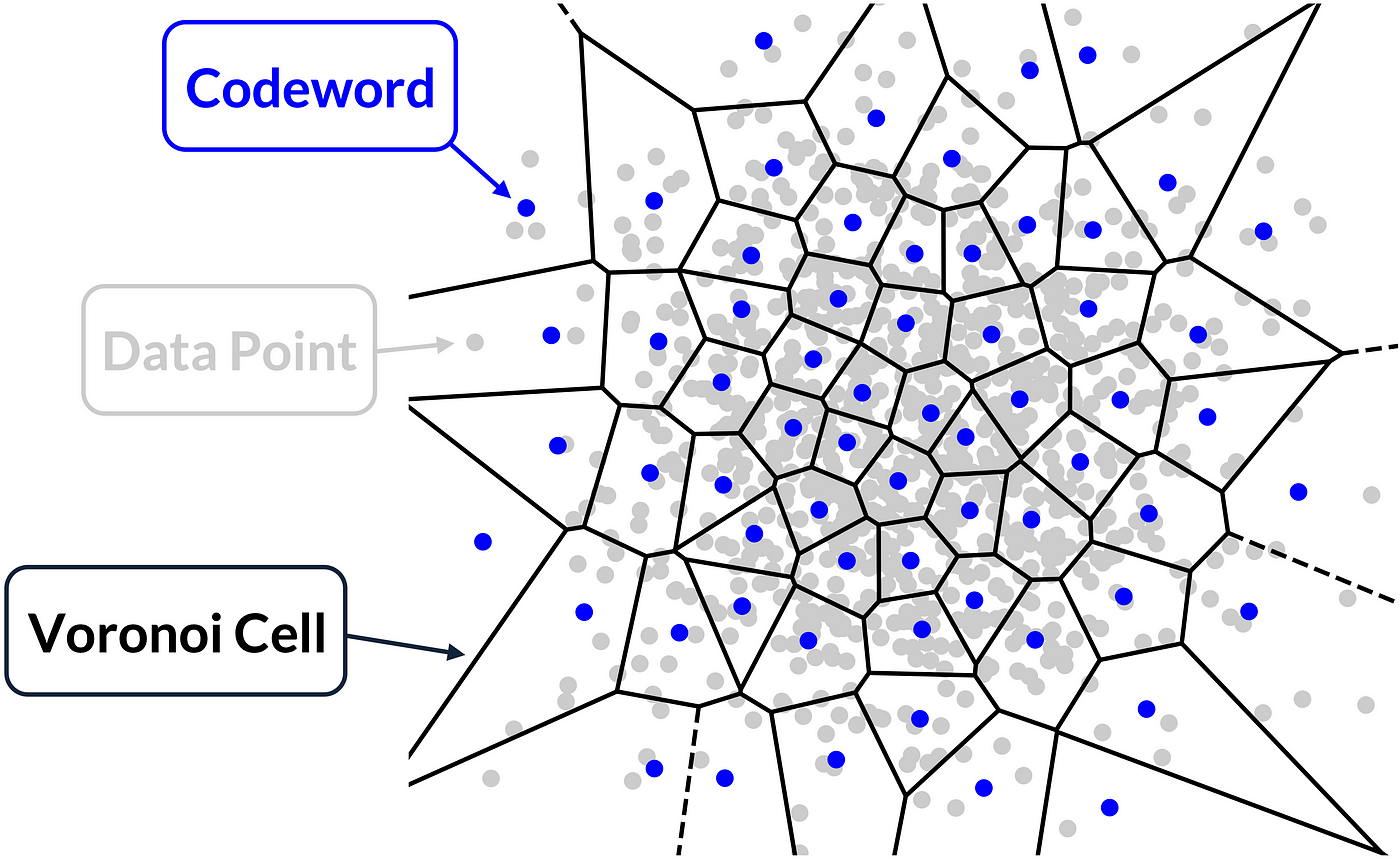

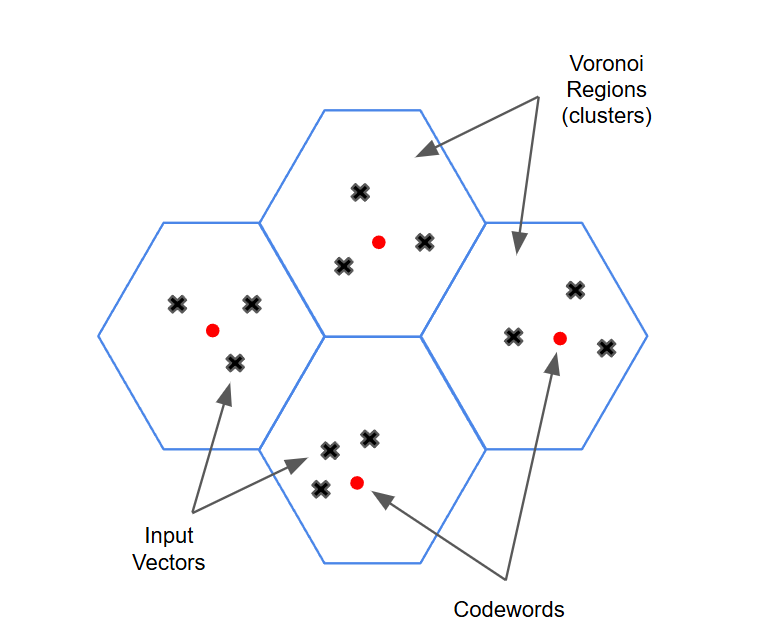

Learning vector quantization is a supervised version of vector quantization that can be used when the input data are labelled. It uses the class information to reposition the Voronoi vectors (the prototypes) slightly, so as to improve the quality of the classifier's decision regions. The algorithm is a supervised neural network that uses a competitive, winner-take-all learning strategy, and it is related to other supervised neural networks such as the perceptron and networks trained with the backpropagation algorithm.

Quantization in the broader, numerical sense is a model optimization technique that reduces the precision of numerical values such as weights and activations so that models run faster and more efficiently. It lowers memory usage, model size, and computational cost, usually with only a small loss in accuracy.
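The class-aware repositioning of prototypes can be sketched with Kohonen's LVQ1 rule, chosen here as an assumption because the text does not name a specific variant. For each labelled sample x, only the nearest prototype w (the winner) is updated: w becomes w + a(x - w) when its label matches the sample's class and w - a(x - w) otherwise. The learning rate a, its linear decay, the epoch count, and the toy data are hypothetical choices for illustration.

```python
import math
import random

def nearest(x, prototypes):
    """Index of the prototype closest to x (Euclidean distance)."""
    return min(range(len(prototypes)), key=lambda j: math.dist(x, prototypes[j]))

def train_lvq1(data, labels, prototypes, proto_labels, lr=0.1, epochs=20, seed=0):
    """Update prototypes in place with the LVQ1 rule and return them.

    The winning prototype moves toward a sample of its own class and away
    from a sample of a different class. The learning rate decays linearly.
    """
    rng = random.Random(seed)
    order = list(range(len(data)))
    for epoch in range(epochs):
        rate = lr * (1 - epoch / epochs)
        rng.shuffle(order)  # present samples in a different order each epoch
        for i in order:
            x = data[i]
            w = nearest(x, prototypes)
            sign = 1.0 if proto_labels[w] == labels[i] else -1.0
            prototypes[w] = [p + sign * rate * (xi - p) for p, xi in zip(prototypes[w], x)]
    return prototypes

def predict(x, prototypes, proto_labels):
    """Winner-take-all classification: label of the nearest prototype."""
    return proto_labels[nearest(x, prototypes)]

# Toy, hypothetical data: two well-separated classes in 2-D.
data = [[0.0, 0.2], [0.3, 0.0], [0.1, 0.1], [4.8, 5.1], [5.2, 4.9], [5.0, 5.3]]
labels = ["A", "A", "A", "B", "B", "B"]
protos = train_lvq1(data, labels, [[1.0, 1.0], [4.0, 4.0]], ["A", "B"])
print(predict([0.5, 0.4], protos, ["A", "B"]))  # A
```

Because only the winner is updated for each sample, learning is competitive; when the winner carries the wrong label, the update pushes it away from the sample, which is what sharpens the boundaries between classes.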
