Large Scale Data Processing With Python And Apache Spark

PySpark combines Python's learnability and ease of use with the power of Apache Spark, so that anyone familiar with Python can process and analyze data at any size. In this tutorial, we will explore the combination of Python and PySpark for processing large datasets. PySpark is a Python library that provides an interface for Apache Spark, a fast, general-purpose cluster computing system.

PySpark, the Python API for Apache Spark, provides a scalable, distributed framework capable of handling datasets ranging from 100 GB to 1 TB and beyond. It is designed for big data processing and analytics: it lets Python developers use Spark's distributed computing to process large datasets efficiently across clusters, and it is widely used in data analysis, machine learning, and real-time processing. In short, PySpark combines the simplicity of Python with the capabilities of Apache Spark, which makes it well suited to large-scale data processing and analysis.

With a focus on fundamentals, this extensively class-tested textbook walks students through key principles and paradigms for working with large-scale data, covers frameworks for large-scale data analytics (Hadoop, Spark), and explains how to implement machine learning to exploit big data.

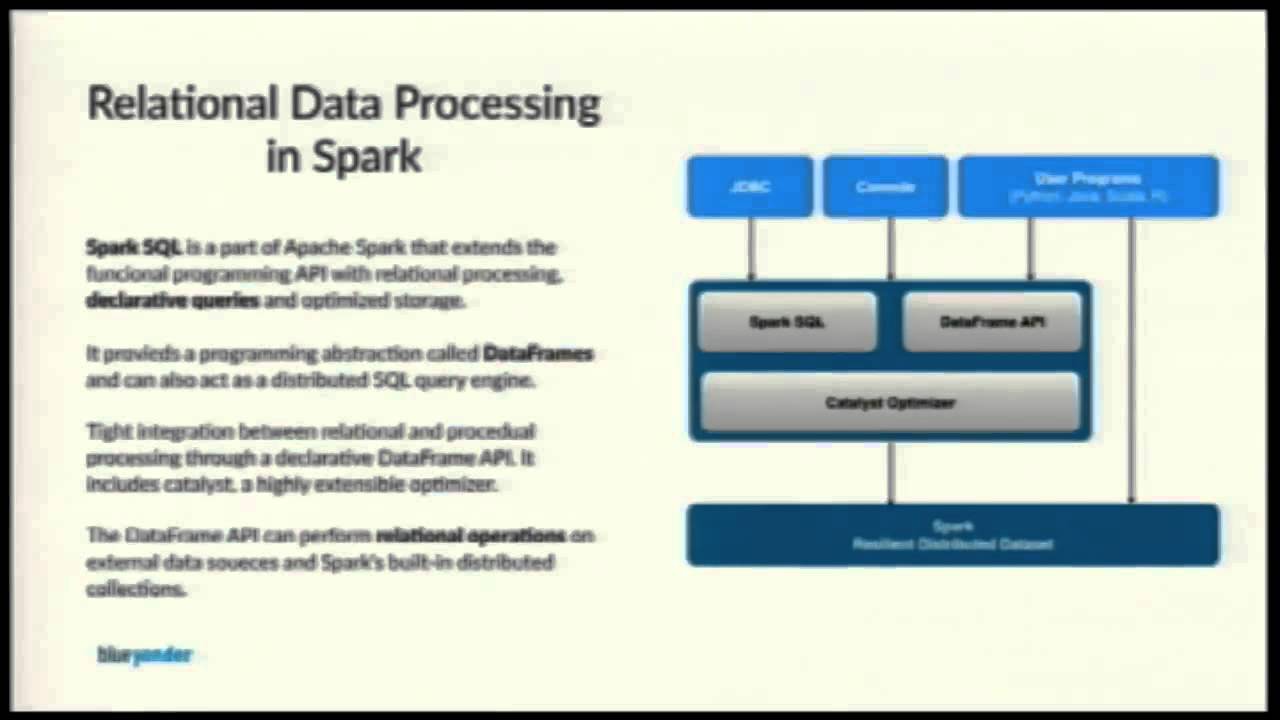

In this tutorial for Python developers, you'll take your first steps with Spark, PySpark, and big data processing using intermediate Python concepts. Apache Spark with PySpark provides a scalable, accessible framework for big data analytics. Beginners should focus on mastering DataFrames and SQL queries on moderately sized datasets before progressing to advanced topics.

Processing big data in real time is challenging because of scalability, information consistency, and fault tolerance. Big Data Processing with Apache Spark teaches you how to use Spark to make your overall analytical workflow faster and more efficient. In this article, we will explore how to use PySpark for big data processing, so that organizations can put their data to work in support of business goals.

