Large Language Models Can Learn Temporal Reasoning: AI Research Paper

Temporal reasoning (TR) presents a significant challenge for large language models (LLMs) due to its reliance on diverse temporal concepts and intricate temporal logic. In this paper, we propose TG-LLM, a novel framework towards language-based TR.

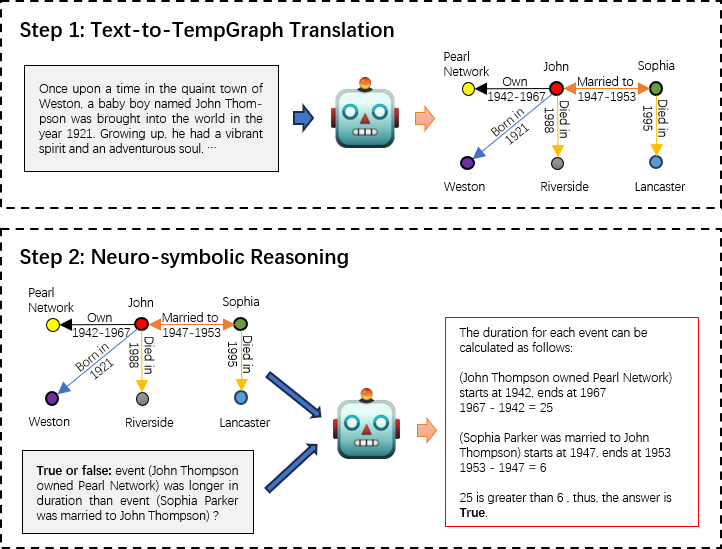

Instead of reasoning over the original context, we adopt a latent representation, the temporal graph (TG), that enhances the learning of TR. Put differently, the paper explores how LLMs can learn to reason about temporal information and represent it in a structured format called a "tempgraph".

While LLMs have demonstrated remarkable reasoning capabilities, they are not without their flaws and inaccuracies, and recent studies have introduced various methods to mitigate these limitations. In this paper, we build the connection between language-based and temporal knowledge graph (KG)-based temporal symbolic reasoning. This connection brings the potential for extending KG-based methods to language-based tasks. A synthetic dataset (TGQA), which is fully controllable and requires minimal supervision, is constructed for fine-tuning LLMs on the task of translating text into a temporal graph (TG).
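To make the idea of a temporal graph more concrete, here is a minimal Python sketch. It is not the paper's implementation or data format: the `TemporalEdge` fields, the helper functions, and the example facts (names and years) are all hypothetical, chosen only to illustrate how events with time spans can be ordered and compared once they are in a structured form rather than in free text.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TemporalEdge:
    """One fact in a toy temporal graph (hypothetical schema, not from the paper)."""
    subject: str
    relation: str
    obj: str
    start: int  # year the fact begins to hold
    end: int    # year the fact stops holding


def order_events(edges):
    """Return the edges sorted chronologically by start year, then end year."""
    return sorted(edges, key=lambda e: (e.start, e.end))


def holds_before(a, b):
    """True if fact `a` has ended by the time fact `b` starts."""
    return a.end <= b.start


def overlaps(a, b):
    """True if the time spans of `a` and `b` share some interval."""
    return a.start < b.end and b.start < a.end


# Invented example story; every name and year here is an assumption.
tg = [
    TemporalEdge("Alice", "worked at", "Acme", 2005, 2010),
    TemporalEdge("Alice", "studied at", "State University", 2000, 2004),
    TemporalEdge("Alice", "lived in", "Springfield", 2003, 2008),
]

timeline = [f"{e.relation} {e.obj}" for e in order_events(tg)]
print(timeline)
print(holds_before(tg[1], tg[0]))  # did studying end before the job began?
print(overlaps(tg[0], tg[2]))      # did the job overlap with living in Springfield?
```

Once the facts are in this form, questions about order or overlap reduce to simple comparisons on the start and end values, which is one way to see why a graph-like intermediate representation could be easier to reason over than the original text.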

