Large Language Models: ALBERT, A Lite BERT for Self-Supervised Learning of Language Representations

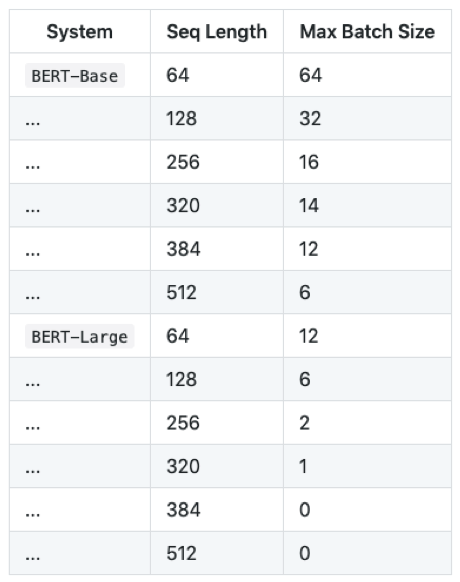

As BERT models grow, GPU/TPU memory limits and longer training times make further scaling harder. To address these problems, ALBERT presents two parameter-reduction techniques that lower memory consumption and increase the training speed of BERT. Comprehensive empirical evidence shows that the proposed methods lead to models that scale much better than the original BERT. ALBERT is "a lite" version of BERT, a popular unsupervised language representation learning algorithm. Its parameter-reduction techniques allow for large-scale configurations, overcome previous memory limitations, and suffer less model degradation as the model is made larger.
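The two techniques in the ALBERT paper are factorized embedding parameterization and cross-layer parameter sharing. Their effect is easiest to see as parameter arithmetic. The sketch below is a minimal illustration, assuming BERT-base-like sizes (vocabulary V = 30,000, hidden size H = 768, 12 layers, feed-forward size 4H) and an embedding size E = 128; the helper names are hypothetical, and the counts cover only the word embeddings and encoder layers (no position or token-type embeddings, no pooler).

```python
from typing import Optional


def embedding_params(vocab_size: int, hidden: int, embed: Optional[int] = None) -> int:
    """Word-embedding parameters.

    BERT maps each token straight to the hidden size (V x H). ALBERT factorizes
    this into a V x E lookup table followed by an E x H projection.
    """
    if embed is None:
        return vocab_size * hidden
    return vocab_size * embed + embed * hidden


def encoder_layer_params(hidden: int, ffn_mult: int = 4) -> int:
    """Parameters in one standard transformer encoder layer (weights + biases)."""
    ffn = ffn_mult * hidden
    attention = 4 * (hidden * hidden + hidden)  # Q, K, V and output projections
    feed_forward = (hidden * ffn + ffn) + (ffn * hidden + hidden)  # two dense layers
    layer_norms = 2 * (2 * hidden)  # two LayerNorms, each with gain and bias
    return attention + feed_forward + layer_norms


def encoder_params(hidden: int, num_layers: int, share_all: bool = False) -> int:
    """Encoder parameters; with full cross-layer sharing, one layer's weights are reused."""
    per_layer = encoder_layer_params(hidden)
    return per_layer if share_all else per_layer * num_layers


if __name__ == "__main__":
    V, H, E, L = 30_000, 768, 128, 12
    print("BERT-style embeddings:  ", embedding_params(V, H))
    print("ALBERT-style embeddings:", embedding_params(V, H, E))
    print("Unshared encoder:       ", encoder_params(H, L))
    print("Fully shared encoder:   ", encoder_params(H, L, share_all=True))
```

Under these assumptions, factorization shrinks the word-embedding table from about 23.0M to about 3.9M parameters, and sharing all layers cuts the encoder from about 85.1M to about 7.1M. The ALBERT paper reports roughly 108M parameters for BERT-base versus 12M for ALBERT-base; the remaining gap comes from the components this sketch leaves out.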

In this article, we discuss ALBERT, which was introduced in 2019 (and published at ICLR 2020) with the objective of significantly reducing the number of BERT parameters. To understand its underlying mechanisms, we refer to the official paper. For the most part, ALBERT inherits its architecture from BERT. Since the advent of BERT, natural language research has embraced a new paradigm: leveraging large amounts of existing text to pretrain a model's parameters using self-supervision, with no data annotation required. In addition to its two parameter-reduction techniques, ALBERT uses a self-supervised loss, sentence-order prediction (SOP), that focuses on modeling inter-sentence coherence.
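In sentence-order prediction, a positive example is two consecutive segments from the same document in their original order, and a negative example is the same two segments with the order swapped. The sketch below shows how such training pairs might be built; it is a hypothetical illustration, the function names are invented, and the 0/1 label convention is an assumption of this sketch rather than something taken from the paper.

```python
import random
from typing import List, Tuple

# Label convention chosen for this sketch (an assumption, not taken from the paper):
ORIGINAL_ORDER = 0  # segments appear in their original document order
SWAPPED_ORDER = 1   # the same two segments with their order reversed

Example = Tuple[Tuple[str, str], int]


def make_sop_example(segment_a: str, segment_b: str, swap: bool) -> Example:
    """Build one sentence-order-prediction example from two consecutive segments."""
    if swap:
        return (segment_b, segment_a), SWAPPED_ORDER
    return (segment_a, segment_b), ORIGINAL_ORDER


def sop_examples_from_document(segments: List[str], seed: int = 0) -> List[Example]:
    """Pair each segment with its successor and swap roughly half of the pairs.

    Negatives never come from another document, so topic cues alone cannot
    separate positive examples from negative ones.
    """
    rng = random.Random(seed)
    return [
        make_sop_example(a, b, swap=rng.random() < 0.5)
        for a, b in zip(segments, segments[1:])
    ]


if __name__ == "__main__":
    doc = ["First segment.", "Second segment.", "Third segment."]
    for pair, label in sop_examples_from_document(doc):
        print(label, pair)
```

The design contrast with BERT's next-sentence prediction is the point: NSP draws its negative segment from a different document, which the ALBERT authors argue lets a model succeed through topic prediction, whereas SOP keeps the topic fixed and leaves only coherence and ordering to learn.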

The ALBERT model was proposed in "ALBERT: A Lite BERT for Self-supervised Learning of Language Representations" by Zhenzhong Lan, Mingda Chen, Sebastian Goodman, Kevin Gimpel, Piyush Sharma, and Radu Soricut. Keywords: memory, NLP, representation learning, self-supervised learning, transformer. ALBERT is a streamlined version of Google's BERT, designed to reduce memory consumption and training cost while maintaining high performance. It improves parameter efficiency through two techniques: factorized embedding parameterization, which decouples the vocabulary embedding size from the hidden size, and cross-layer parameter sharing, which reuses one set of weights across all transformer layers.
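Both mechanisms can be seen side by side in a deliberately tiny toy encoder. This is a hypothetical pure-Python sketch, not ALBERT's implementation: the class `ToyAlbertEncoder`, its sizes, and the single linear-plus-ReLU "layer" (which stands in for a full attention and feed-forward block) are illustrative assumptions. What it does reflect is the structure: token ids index a small V x E table, an E x H projection lifts the result to the hidden size, and one set of layer weights is applied at every depth.

```python
import random
from typing import List

Matrix = List[List[float]]


def rand_matrix(rows: int, cols: int, rng: random.Random) -> Matrix:
    return [[rng.uniform(-0.1, 0.1) for _ in range(cols)] for _ in range(rows)]


def vecmat(vec: List[float], mat: Matrix) -> List[float]:
    """Row vector (length = rows of mat) times matrix -> vector of length cols."""
    return [sum(vec[i] * mat[i][j] for i in range(len(vec))) for j in range(len(mat[0]))]


def size(mat: Matrix) -> int:
    return len(mat) * len(mat[0])


class ToyAlbertEncoder:
    def __init__(self, vocab_size: int, embed_dim: int, hidden_dim: int,
                 num_layers: int, seed: int = 0) -> None:
        rng = random.Random(seed)
        self.num_layers = num_layers
        # Factorized embedding: a V x E lookup table plus an E x H projection.
        self.word_embeddings = rand_matrix(vocab_size, embed_dim, rng)
        self.projection = rand_matrix(embed_dim, hidden_dim, rng)
        # Cross-layer sharing: ONE layer's weights, reused at every depth.
        self.shared_layer = rand_matrix(hidden_dim, hidden_dim, rng)

    def encode_token(self, token_id: int) -> List[float]:
        h = vecmat(self.word_embeddings[token_id], self.projection)
        for _ in range(self.num_layers):
            # Toy layer (stand-in for attention + FFN): residual plus ReLU(h @ W).
            h = [x + max(0.0, y) for x, y in zip(h, vecmat(h, self.shared_layer))]
        return h

    def num_parameters(self) -> int:
        return size(self.word_embeddings) + size(self.projection) + size(self.shared_layer)


if __name__ == "__main__":
    shallow = ToyAlbertEncoder(vocab_size=100, embed_dim=4, hidden_dim=8, num_layers=1)
    deep = ToyAlbertEncoder(vocab_size=100, embed_dim=4, hidden_dim=8, num_layers=12)
    print(shallow.num_parameters(), deep.num_parameters())
    print(len(deep.encode_token(5)))
```

Because the same `shared_layer` weights are reused, making the encoder deeper adds compute per token but no parameters; sharing saves memory, not arithmetic.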

