Knn Classification Pdf

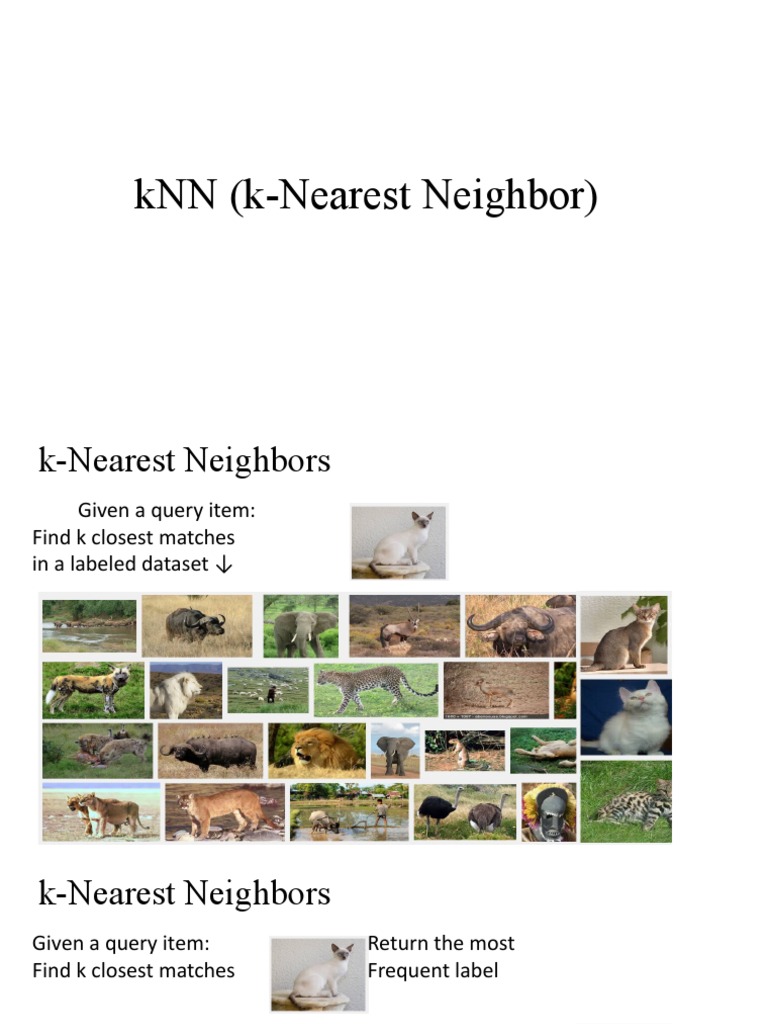

The k-nearest neighbours (kNN) classifier is a simple but effective method for classification. This article presents an overview of techniques for nearest neighbour classification, focusing on mechanisms for assessing similarity (distance), computational issues in identifying nearest neighbours, and mechanisms for reducing the dimension of the data.
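To make the role of the distance measure concrete, here is a minimal sketch of brute-force kNN classification by majority vote. The function names (`euclidean`, `manhattan`, `knn_classify`) and the toy training set are assumptions made for illustration; they do not come from the article being summarised.

```python
import math
from collections import Counter


def euclidean(a, b):
    """Straight-line (L2) distance between two equal-length vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def manhattan(a, b):
    """City-block (L1) distance between two equal-length vectors."""
    return sum(abs(x - y) for x, y in zip(a, b))


def knn_classify(query, examples, k, distance=euclidean):
    """Return the most common label among the k examples closest to query.

    examples is a list of (features, label) pairs. Every example is
    compared with the query, so one prediction costs O(n log n) here.
    """
    neighbors = sorted(examples, key=lambda ex: distance(query, ex[0]))[:k]
    votes = Counter(label for _, label in neighbors)
    return votes.most_common(1)[0][0]


# Hypothetical toy data: two well-separated clusters.
train = [
    ((1.0, 1.0), "a"), ((1.2, 0.8), "a"), ((0.9, 1.1), "a"),
    ((5.0, 5.0), "b"), ((5.2, 4.9), "b"), ((4.8, 5.1), "b"),
]

print(knn_classify((1.1, 1.0), train, k=3))                      # a
print(knn_classify((5.0, 4.8), train, k=3, distance=manhattan))  # b
```

Sorting the whole training set for every query is the simplest possible neighbour search. It is the computational cost that the overview refers to, and the reason index structures or dimension reduction are used on larger data sets.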

kNN classification vs regression: for classification, the prediction is usually the mode (the most common class) of the returned labels; for regression, the prediction is usually the arithmetic mean (informally, the average) of the returned values.

This review paper aims to provide a comprehensive overview of the latest developments in the k-NN algorithm, including its strengths and weaknesses, applications, benchmarks, and available software with corresponding publications.

The kNN classification algorithm: let k be the number of nearest neighbors and D be the set of training examples.

Consider kNN performance as dimensionality increases. Given 1000 points uniformly distributed in a unit hypercube: (a) in 2D, what is the expected distance to the nearest neighbor? (b) in 10D, how does this distance change? (c) why does kNN performance degrade in high dimensions? (d) what preprocessing steps can help mitigate this?
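The hypercube exercise can be explored empirically. The sketch below is a simulation under stated assumptions, not a closed-form answer: it draws 1000 uniform points, measures the Euclidean distance from random query points to their nearest neighbour, and averages the result. The function name, the number of queries, and the seed are choices made for illustration.

```python
import math
import random


def mean_nearest_neighbor_distance(n_points, dim, n_queries=200, seed=0):
    """Estimate the average distance from a random query to its nearest point.

    Points and queries are drawn uniformly from the unit hypercube [0, 1]^dim.
    """
    rng = random.Random(seed)
    points = [[rng.random() for _ in range(dim)] for _ in range(n_points)]
    total = 0.0
    for _ in range(n_queries):
        query = [rng.random() for _ in range(dim)]
        total += min(math.dist(query, p) for p in points)
    return total / n_queries


d2 = mean_nearest_neighbor_distance(1000, dim=2)
d10 = mean_nearest_neighbor_distance(1000, dim=10)
print(f"2D:  mean nearest-neighbor distance ~ {d2:.3f}")
print(f"10D: mean nearest-neighbor distance ~ {d10:.3f}")
print(f"ratio 10D / 2D ~ {d10 / d2:.1f}")
```

With the same 1000 points, the nearest neighbour in 10D is many times farther away than in 2D, so the "nearest" neighbours are no longer local and carry less information about the query. This is one way to see why scaling features and reducing the dimension of the data before running kNN can help.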

Abstract: an instance-based learning method called the k-nearest neighbor (k-NN) algorithm has been used in many applications in areas such as data mining, statistical pattern recognition, and image processing. Successful applications include recognition of handwriting, satellite images, and EKG patterns. Here an instance is denoted x = (x1, x2, ..., xn).

• In HW1, you will implement cross-validation (CV) and use it to select k for a kNN classifier.
• You can use the "one standard error" rule, where we pick the simplest model whose error is no more than 1 standard error (SE) above the best.

In this post, we have investigated the theory behind the k-nearest neighbor algorithm for classification. We observed its pros and cons and described how it works in practice.
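Selecting k by cross-validation with the one standard error rule can be sketched as follows. This is a minimal illustration and not the homework solution: the synthetic data, the candidate values of k, the fold count, and all function names are assumptions. It also assumes that a larger k counts as the "simpler" model, because it gives a smoother decision boundary.

```python
import math
import random
from collections import Counter
from statistics import mean, stdev


def knn_classify(query, examples, k):
    """Majority vote among the k training examples closest to query."""
    neighbors = sorted(examples, key=lambda ex: math.dist(query, ex[0]))[:k]
    return Counter(label for _, label in neighbors).most_common(1)[0][0]


def cv_errors(data, k, n_folds=5):
    """Return the misclassification rate on each held-out fold."""
    folds = [data[i::n_folds] for i in range(n_folds)]
    errors = []
    for i, held_out in enumerate(folds):
        train = [ex for j, fold in enumerate(folds) if j != i for ex in fold]
        wrong = sum(knn_classify(x, train, k) != y for x, y in held_out)
        errors.append(wrong / len(held_out))
    return errors


def select_k(data, candidate_ks, n_folds=5):
    """Return (k with lowest mean CV error, k chosen by the one-SE rule, stats)."""
    stats = {}
    for k in candidate_ks:
        errs = cv_errors(data, k, n_folds)
        stats[k] = (mean(errs), stdev(errs) / math.sqrt(n_folds))
    best_k = min(stats, key=lambda k: stats[k][0])
    threshold = stats[best_k][0] + stats[best_k][1]
    # Assumption: larger k is the simpler model, so take the largest
    # k whose mean error is within one standard error of the best.
    one_se_k = max(k for k in candidate_ks if stats[k][0] <= threshold)
    return best_k, one_se_k, stats


# Hypothetical synthetic data: two overlapping Gaussian classes in 2D.
rng = random.Random(0)
data = [((rng.gauss(0.0, 1.0), rng.gauss(0.0, 1.0)), 0) for _ in range(50)]
data += [((rng.gauss(1.5, 1.0), rng.gauss(1.5, 1.0)), 1) for _ in range(50)]
rng.shuffle(data)  # shuffle so each fold contains both classes

ks = [1, 3, 5, 9, 15, 25]
best_k, one_se_k, stats = select_k(data, ks)
print("lowest CV error at k =", best_k)
print("one-standard-error choice k =", one_se_k)
```

The one-SE choice is never smaller than the error-minimising k under this definition of "simplest". If simplicity were defined differently, only the line that computes `one_se_k` would need to change.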


