KNN Classification Ailephant

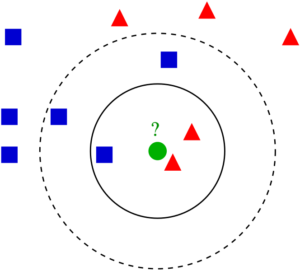

KNN is a simple, supervised machine learning (ML) algorithm that can be used for classification or regression tasks and is also frequently used for missing-value imputation. It is based on the idea that the observations closest to a given data point are the most "similar" observations in a data set, so a previously unseen point can be classified based on the values of its closest observations.
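The idea described above can be sketched in a few lines of plain Python. This is a minimal illustration, not a reference implementation: the function name `knn_predict`, the toy points, and the labels `"low"` and `"high"` are all hypothetical, and Euclidean distance is assumed as the measure of closeness.

```python
import math
from collections import Counter


def knn_predict(train_points, train_labels, query, k=3):
    """Classify `query` by majority vote among its k nearest training points."""
    # Pair each training point's Euclidean distance to the query with its label.
    distances = [
        (math.dist(point, query), label)
        for point, label in zip(train_points, train_labels)
    ]
    # Keep the k pairs with the smallest distances.
    nearest = sorted(distances, key=lambda pair: pair[0])[:k]
    # Majority vote over the labels of those neighbors.
    votes = Counter(label for _, label in nearest)
    return votes.most_common(1)[0][0]


# Hypothetical toy data: two well-separated clusters.
train_points = [(1.0, 1.0), (1.2, 0.8), (0.9, 1.1), (5.0, 5.0), (5.2, 4.9), (4.8, 5.1)]
train_labels = ["low", "low", "low", "high", "high", "high"]

print(knn_predict(train_points, train_labels, (1.1, 1.0), k=3))  # low
print(knn_predict(train_points, train_labels, (4.9, 5.0), k=3))  # high
```

There is no training step beyond storing the data; all the work happens at prediction time, when distances to every stored point are computed.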

The KNN algorithm is one of the classification methods in supervised learning. Supervised and unsupervised learning differ in whether the training data come with known labels.

A typical exercise is implementing a k-nearest neighbor (KNN) classifier. The file cities.zip contains a dataset of weather, climate and pollution measurements from five Chinese cities. The target variable is called "pm high" and represents the event that the pollution level, measured as the concentration of particles called PM2.5, is higher than 100 µg/m³. The classifier is then applied to the Chinese city pollution data.

K-nearest neighbor (KNN) is a simple and widely used machine learning technique for classification and regression tasks. It works by identifying the k closest data points to a given input and making a prediction from those neighbors: the majority class for classification, or the average value for regression.

In scikit-learn, the classifier implementing the k-nearest neighbors vote is `sklearn.neighbors.KNeighborsClassifier(n_neighbors=5, *, weights='uniform', algorithm='auto', leaf_size=30, p=2, metric='minkowski', metric_params=None, n_jobs=None)`. The `n_neighbors` parameter (int, default=5) is the number of neighbors to use by default for `kneighbors` queries.
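As a rough usage sketch of the scikit-learn classifier named above, the snippet below fits `KNeighborsClassifier` on a tiny made-up data set. The feature values and the 0/1 labels are hypothetical and are not taken from the cities.zip data; the constructor arguments shown are the ones listed in the signature, with `n_neighbors` lowered to 3 to suit six training points.

```python
from sklearn.neighbors import KNeighborsClassifier

# Hypothetical toy data: two features per sample, binary labels.
X_train = [[1.0, 1.0], [1.2, 0.8], [0.9, 1.1], [5.0, 5.0], [5.2, 4.9], [4.8, 5.1]]
y_train = [0, 0, 0, 1, 1, 1]

# p=2 with the Minkowski metric corresponds to Euclidean distance.
clf = KNeighborsClassifier(n_neighbors=3, weights="uniform", p=2, metric="minkowski")
clf.fit(X_train, y_train)

# Predict the class of two new points by majority vote of their 3 nearest neighbors.
predictions = clf.predict([[1.1, 1.0], [4.9, 5.0]])
print(predictions)

# kneighbors returns the distances to, and the training-set indices of, the neighbors.
distances, indices = clf.kneighbors([[1.1, 1.0]])
print(indices)
```

Setting `weights="distance"` instead of `"uniform"` would let closer neighbors count more in the vote, which can help when the k neighbors are at very different distances.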

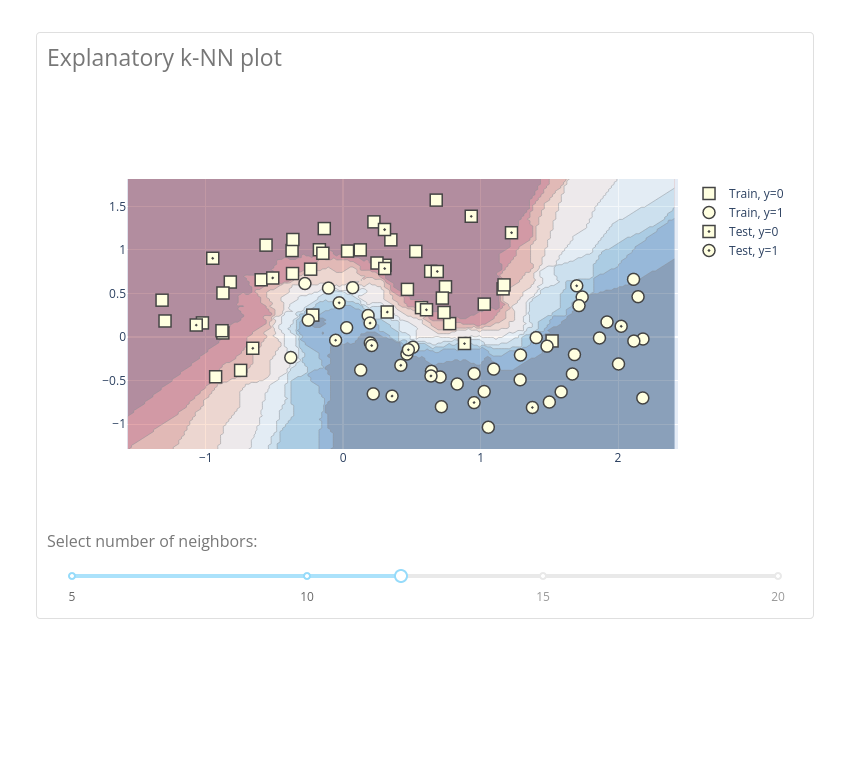

Supervised learning and nearest neighbours are also covered in the CSC311 (Introduction to Machine Learning) lecture slides by Alice Gao and Marina Tawfik at the University of Toronto.

The k-nearest neighbors (KNN) classifier stands out as a fundamental algorithm in machine learning, offering an intuitive and effective approach to classification tasks. It is a non-parametric, supervised learning classifier that uses proximity to make classifications or predictions about the grouping of an individual data point, and it is one of the most popular and simplest classification and regression methods used in machine learning today.
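The proximity-based rule can be written compactly. The notation here is an assumption introduced for illustration and does not come from the sources quoted above: $x$ is the query point with $m$ features, $(x_i, y_i)$ are the training pairs, and $N_k(x)$ is the set of indices of the $k$ training points closest to $x$. Closeness is measured with the Minkowski distance, which reduces to Euclidean distance for $p = 2$:

$$
d_p(x, x') = \left( \sum_{j=1}^{m} \lvert x_j - x'_j \rvert^{p} \right)^{1/p}
$$

For classification, the prediction is the class that receives the most votes among the neighbors:

$$
\hat{y}(x) = \arg\max_{c} \sum_{i \in N_k(x)} \mathbb{1}[\, y_i = c \,]
$$

For regression, the prediction is the average of the neighbors' target values:

$$
\hat{y}(x) = \frac{1}{k} \sum_{i \in N_k(x)} y_i
$$

No parameters are fitted in either case, which is what "non-parametric" refers to: the stored training data themselves define the prediction.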

