KL Divergence (Relative Entropy)

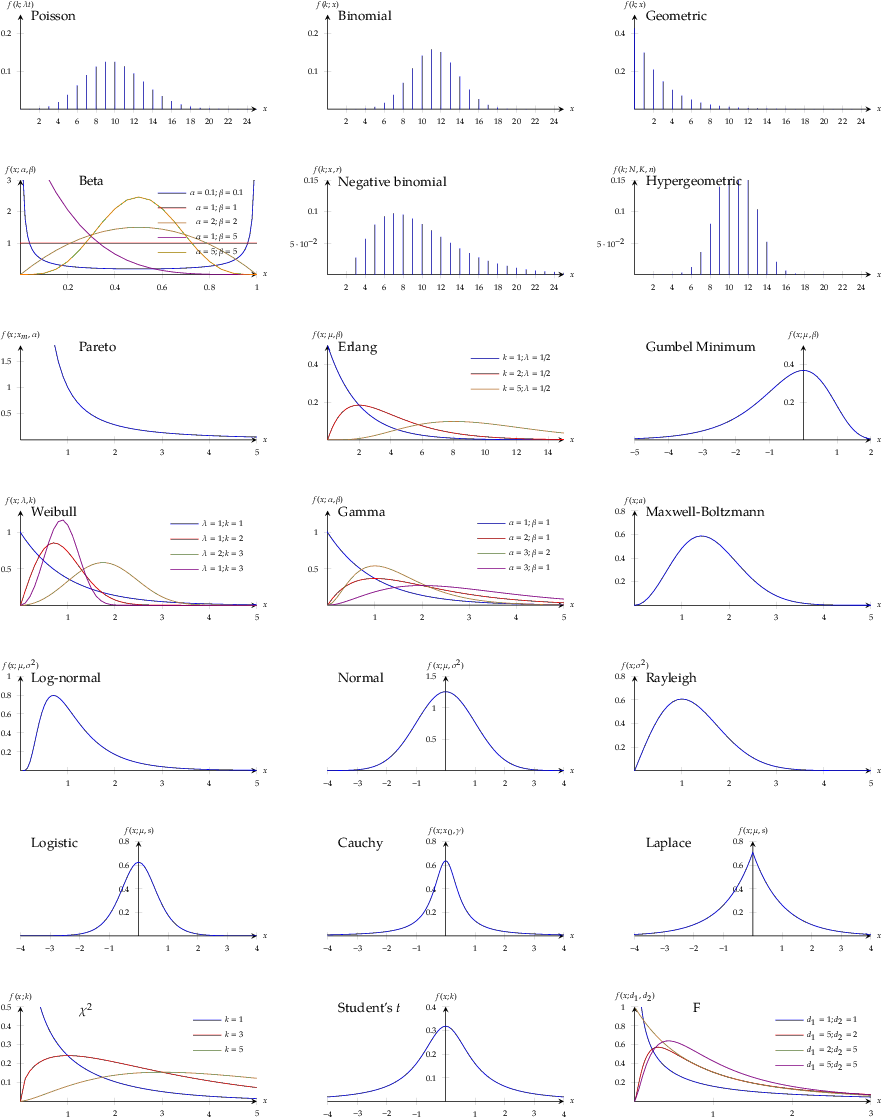

KL Divergence or Relative Entropy (The Stanford NLP)

Kullback-Leibler (KL) divergence is a measure from information theory that quantifies the difference between two probability distributions. It tells us how much information is lost when we approximate a true distribution p with another distribution q. In particular, relative entropy is the natural extension of the principle of maximum entropy from discrete to continuous distributions, for which Shannon entropy ceases to be so useful (see differential entropy), while the relative entropy continues to be just as relevant.
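To make "information lost when approximating p with q" concrete, here is a minimal sketch for the discrete case. The function name `kl_divergence`, the use of natural logarithms (so the result is in nats), and the two coin distributions are assumptions chosen for illustration; they do not come from any of the quoted sources.

```python
import math

def kl_divergence(p, q):
    """Discrete KL divergence D(p || q) in nats (illustrative helper)."""
    if len(p) != len(q):
        raise ValueError("p and q must have the same length")
    total = 0.0
    for pi, qi in zip(p, q):
        if pi == 0.0:
            continue            # convention: 0 * log(0 / q) contributes 0
        if qi == 0.0:
            return math.inf     # p puts mass where q puts none
        total += pi * math.log(pi / qi)
    return total

p = [0.5, 0.5]   # assumed "true" distribution: a fair coin
q = [0.9, 0.1]   # assumed approximation: a heavily biased coin

print(kl_divergence(p, q))   # positive: q is a poor stand-in for p
print(kl_divergence(p, p))   # zero: nothing is lost when q equals p
```

The divergence is zero only when the two distributions agree, and it grows as q assigns low probability to outcomes that p considers likely.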

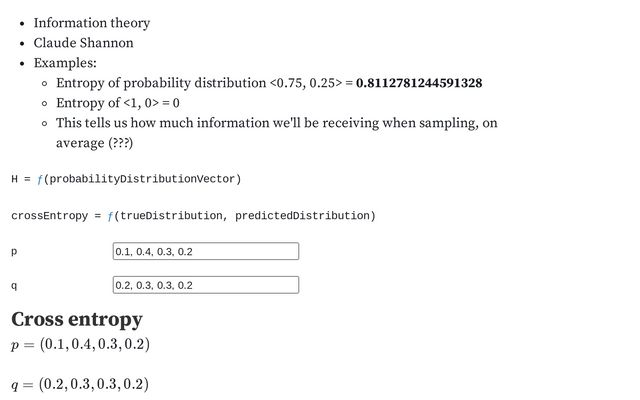

Entropy, Cross Entropy, and KL Divergence (Daniel Manning, Observable)

This brings us to an important point: KL divergence is often called relative entropy. Therefore, we are in fact calculating the KL divergence, since the entropy module is calculating the relative entropy between the two distributions.

KL divergence can be seen as the difference between the cross entropy between p and q and the entropy of p, that is, $D(p \parallel q) = H(p, q) - H(p)$. Thus, KL divergence measures the extra uncertainty introduced by using q instead of p.

The Kullback-Leibler (KL) divergence is a distance-like measure between the distributions of two random variables. It is defined as

$$D(p \parallel q) = \int_{x} p(x) \log\left(\frac{p(x)}{q(x)}\right) dx.$$

The similarity to the EM algorithm is not incidental; see, for example, Neal and Hinton, "A New View of the EM Algorithm," for a view of the expectation-maximization algorithm that emphasizes the alternating minimization of KL divergences.
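The relationship between entropy, cross entropy, and KL divergence can be checked numerically. The sketch below assumes discrete distributions, natural logarithms, and that q is nonzero wherever p is nonzero; the helper names and the example probabilities are illustrative, not taken from the quoted sources.

```python
import math

def entropy(p):
    """Shannon entropy H(p) in nats."""
    return -sum(pi * math.log(pi) for pi in p if pi > 0)

def cross_entropy(p, q):
    """Cross entropy H(p, q) in nats; assumes q > 0 wherever p > 0."""
    return -sum(pi * math.log(qi) for pi, qi in zip(p, q) if pi > 0)

def kl_divergence(p, q):
    """KL divergence D(p || q) in nats; assumes q > 0 wherever p > 0."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)

p = [0.7, 0.2, 0.1]   # assumed true distribution
q = [0.5, 0.3, 0.2]   # assumed model distribution

# Extra uncertainty paid for using q instead of p.
gap = cross_entropy(p, q) - entropy(p)

print(gap)
print(kl_divergence(p, q))
print(math.isclose(gap, kl_divergence(p, q)))
```

Because H(p) does not depend on q, minimizing cross entropy over q and minimizing D(p || q) over q select the same q.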

Cross Entropy and KL Divergence

Kullback-Leibler (KL) divergence, also known as relative entropy, is a fundamental concept in statistics and information theory with extensive practical applications across various domains. It measures how one probability distribution diverges from another, or equivalently the information lost when one distribution is used in place of the other.

The KL divergence, also referred to as information divergence and information for discrimination, is a non-symmetric measure of the difference between two probability distributions p(x) and q(x). Denoted $D_{KL}(p \parallel q)$, it is a type of statistical distance: a measure of how much a model probability distribution q differs from a true probability distribution p.
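The non-symmetry is easy to see on a small example. The following is a sketch under stated assumptions: discrete distributions with strictly positive probabilities, natural logarithms, and example values picked only for illustration.

```python
import math

def kl_divergence(p, q):
    """KL divergence D(p || q) in nats; assumes q > 0 wherever p > 0."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)

p = [0.8, 0.1, 0.1]          # assumed peaked distribution
q = [1 / 3, 1 / 3, 1 / 3]    # assumed uniform distribution

forward = kl_divergence(p, q)   # D(p || q)
reverse = kl_divergence(q, p)   # D(q || p)

print(forward)
print(reverse)
print(forward == reverse)       # the two directions do not agree
```

Since swapping the arguments changes the value, KL divergence is not a metric in the mathematical sense, which is why it is described as "distance-like" rather than as a distance.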
