Jacobi Forcing: Faster Parallel LLM Decoding

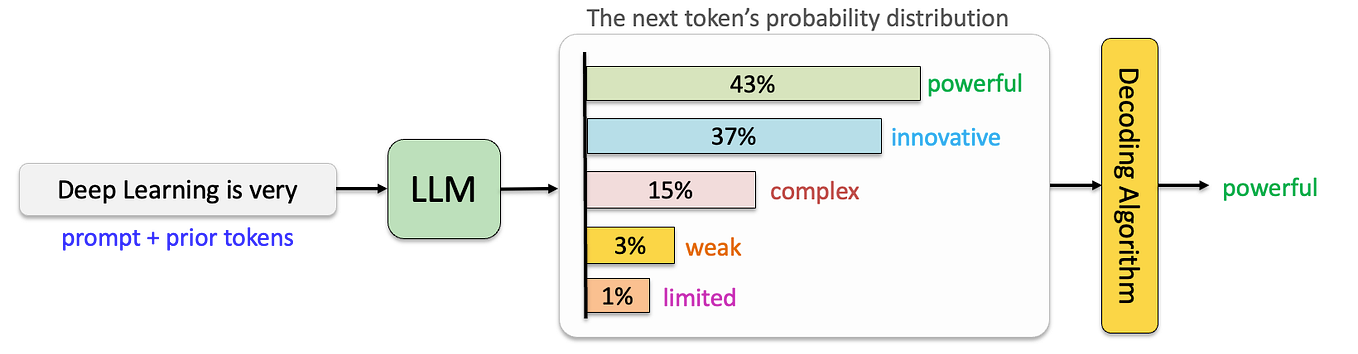

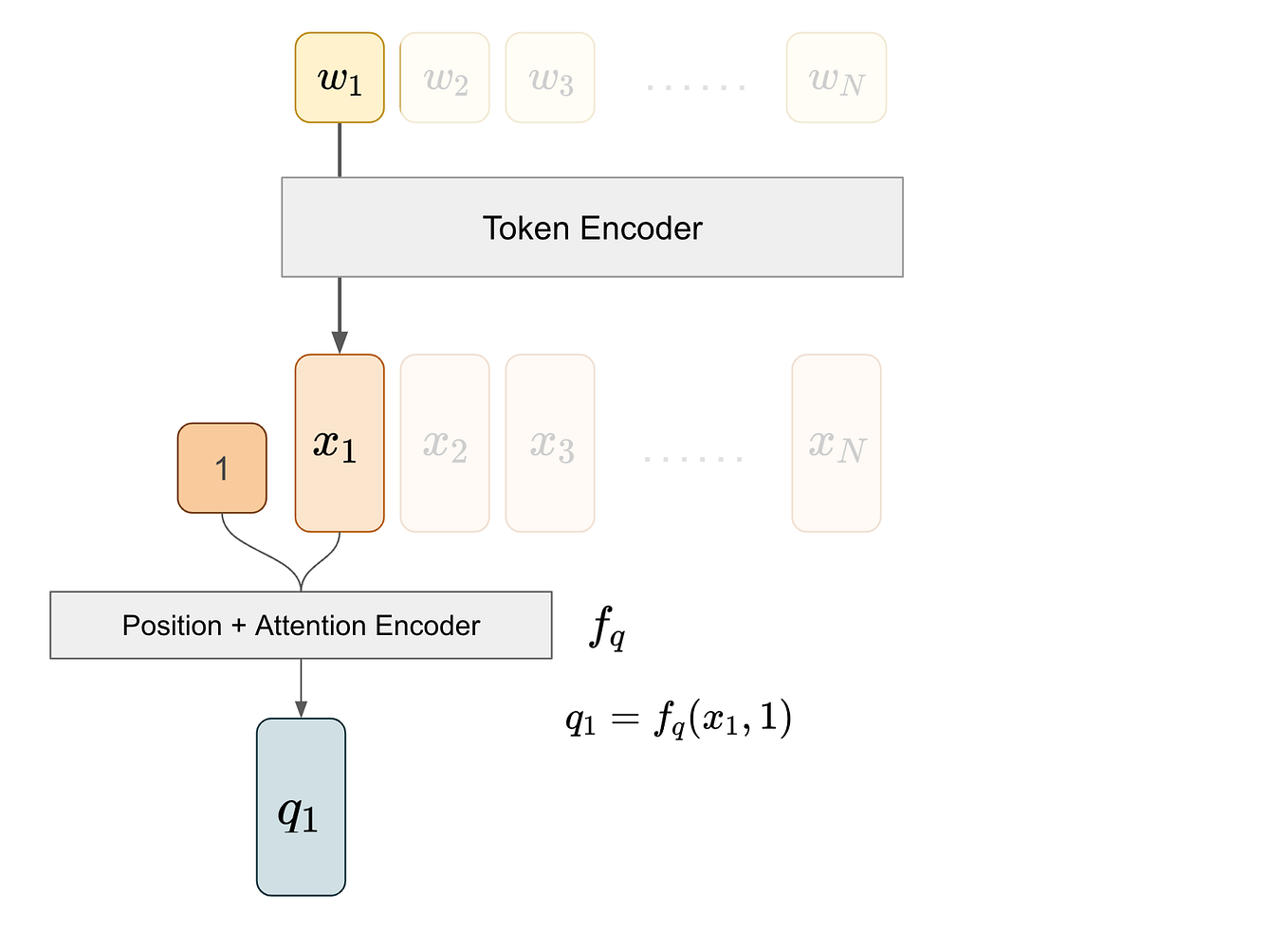

This blog introduces Jacobi Forcing, a new training technique that converts LLMs into native causal parallel decoders. Jacobi Forcing is a progressive distillation paradigm in which models are trained on their own generated parallel decoding trajectories, smoothly shifting autoregressive (AR) models into efficient parallel decoders while preserving their pretrained causal inference property.

Jacobi Forcing keeps the causal AR backbone and fixes the AR-to-diffusion mismatch by training the model to handle noisy future blocks along its own Jacobi decoding trajectories. The result is fast and more accurate causal parallel decoding for autoregressive transformers, with near-AR quality and improved token throughput. The paper presents the method as a progressive distillation framework that turns standard causal LLMs into efficient causal parallel decoders while preserving the autoregressive model structure.

What is Jacobi Forcing and how does it speed decoding? Jacobi Forcing is a progressive distillation regime that teaches an autoregressive decoder to behave like a fast parallel sampler by distilling on its own parallel generation trajectories. This page describes the core Jacobi Forcing technique and methodology, including the fundamental problem it solves (the AR-to-diffusion mismatch), the concept of Jacobi decoding trajectories, and how noise-conditioned training enables native causal parallel decoding. Jacobi Forcing was introduced by Hao AI Lab.
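To make the idea of a Jacobi decoding trajectory concrete, here is a minimal sketch of plain Jacobi (fixed-point) decoding, the inference procedure whose trajectories the training method builds on. It is not the Jacobi Forcing training code, and it is not taken from the authors' implementation. The function `toy_next_token` is a hypothetical deterministic stand-in for the greedy argmax of a causal LLM, and the names `greedy_decode`, `jacobi_decode`, and `init_token` are assumptions made for illustration. In a real model, all positions of the block would be refreshed in a single causal forward pass; the list comprehension below only simulates that.

```python
from typing import Callable, List, Tuple

Token = int
NextToken = Callable[[List[Token]], Token]


def toy_next_token(prefix: List[Token]) -> Token:
    """Hypothetical stand-in for the greedy argmax of a causal LM."""
    return (sum(prefix) * 31 + len(prefix)) % 50


def greedy_decode(next_token: NextToken, prompt: List[Token], n: int) -> List[Token]:
    """Standard autoregressive decoding: one token per step."""
    seq = list(prompt)
    for _ in range(n):
        seq.append(next_token(seq))
    return seq[len(prompt):]


def jacobi_decode(
    next_token: NextToken,
    prompt: List[Token],
    n: int,
    init_token: Token = 0,
) -> Tuple[List[Token], List[List[Token]]]:
    """Jacobi decoding of an n-token block.

    Start from an arbitrary guess for the whole block, then repeatedly
    refresh every position from the previous iterate until nothing changes.
    Returns the converged block and the list of intermediate blocks
    (the Jacobi trajectory).
    """
    block = [init_token] * n
    trajectory = [list(block)]
    # After k iterations the first k tokens match greedy decoding,
    # so at most n iterations are needed.
    for _ in range(n):
        # Position i is predicted from the prompt plus the *previous*
        # iterate's tokens before i; all positions are independent of
        # each other within one iteration, hence parallelizable.
        new_block = [next_token(list(prompt) + block[:i]) for i in range(n)]
        trajectory.append(new_block)
        if new_block == block:  # fixed point reached
            break
        block = new_block
    return block, trajectory


if __name__ == "__main__":
    prompt = [3, 7]
    block, trajectory = jacobi_decode(toy_next_token, prompt, n=6)
    print("converged block:", block)
    print("iterations used:", len(trajectory) - 1)
```

The fixed point of this iteration is exactly the greedy autoregressive output, so the speedup comes only from converging in fewer than `n` iterations. The intermediate blocks in `trajectory` are the partially wrong ("noisy") drafts that, per the description above, the model is trained to handle.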

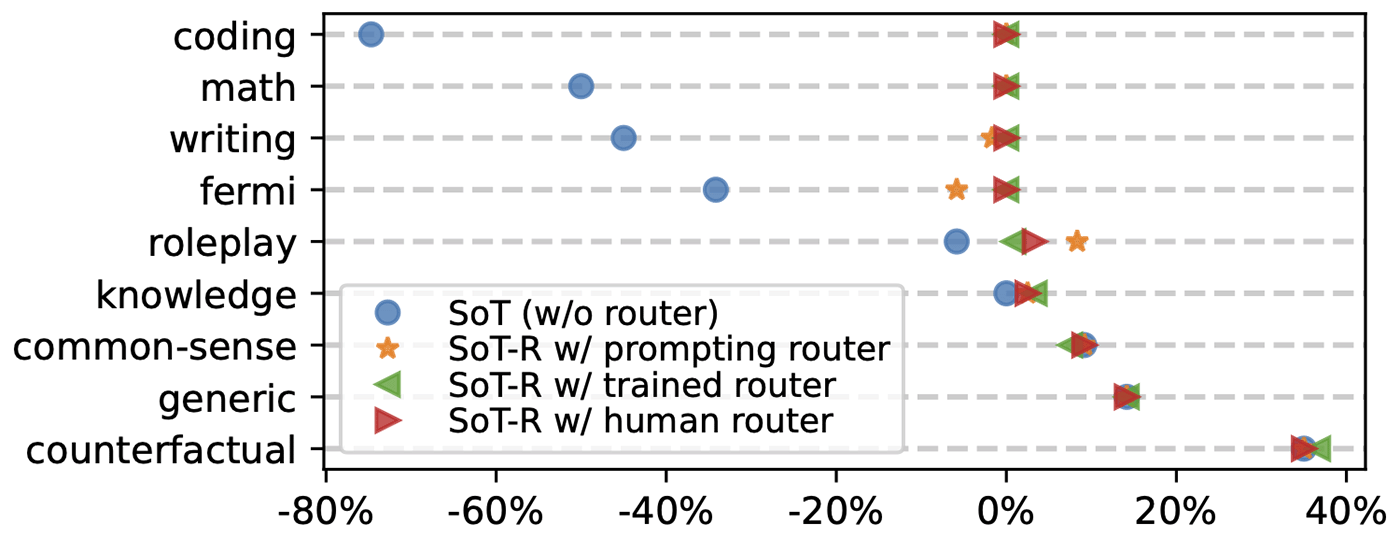
