Interpretable Deep Gaussian Processes

We propose interpretable deep Gaussian processes (GPs) that combine the expressiveness of deep neural networks (NNs) with the quantified uncertainty of deep GPs. Our approach approximates a deep GP (DGP) as a GP, which allows explicit, analytic forms for compositions of a wide variety of kernels. The approximation is obtained by calculating the exact moments, which additionally identify the heavy-tailed nature of some DGP distributions.
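To make the idea of an analytic effective kernel concrete, here is a minimal sketch rather than the authors' implementation. It assumes a two-layer, zero-mean DGP with scalar inputs and a squared-exponential (SE) kernel in both layers. Under those assumptions the second moment of the composition is a Gaussian integral with a closed form, which the code compares against a Monte Carlo estimate. All function and parameter names (`se_kernel`, `effective_kernel_se_se`, `monte_carlo_kernel`, `var1`, `len1`, `var2`, `len2`) are hypothetical and chosen for illustration.

```python
import numpy as np


def se_kernel(a, b, variance=1.0, lengthscale=1.0):
    """Squared-exponential kernel between scalar (or array) inputs a and b."""
    return variance * np.exp(-0.5 * (a - b) ** 2 / lengthscale ** 2)


def effective_kernel_se_se(x, xp, var1=1.0, len1=1.0, var2=1.0, len2=1.0):
    """Closed-form second moment E[f2(f1(x)) f2(f1(x'))] for SE over SE.

    Assumption: f1 ~ GP(0, k1) and f2 | f1 ~ GP(0, k2), both SE kernels.
    Then d = f1(x) - f1(x') is Gaussian with variance s2, and
    E[exp(-d^2 / (2 len2^2))] = 1 / sqrt(1 + s2 / len2^2).
    """
    s2 = 2.0 * (var1 - se_kernel(x, xp, var1, len1))
    return var2 / np.sqrt(1.0 + s2 / len2 ** 2)


def monte_carlo_kernel(x, xp, var1=1.0, len1=1.0, var2=1.0, len2=1.0,
                       n_samples=200_000, seed=0):
    """Monte Carlo estimate of the same moment, sampling the first layer."""
    rng = np.random.default_rng(seed)
    k1 = se_kernel(x, xp, var1, len1)
    cov = np.array([[var1, k1], [k1, var1]])
    # Joint samples of the hidden values (f1(x), f1(x')).
    h = rng.multivariate_normal(np.zeros(2), cov, size=n_samples)
    # Average the second-layer kernel over the hidden-layer samples.
    return se_kernel(h[:, 0], h[:, 1], var2, len2).mean()


if __name__ == "__main__":
    x, xp = 0.0, 1.0
    print("closed form :", effective_kernel_se_se(x, xp))
    print("Monte Carlo :", monte_carlo_kernel(x, xp))
```

Matching the second moment fixes only the covariance of the approximating GP. The heavy-tailed behaviour mentioned above concerns higher moments, which this sketch does not compute.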

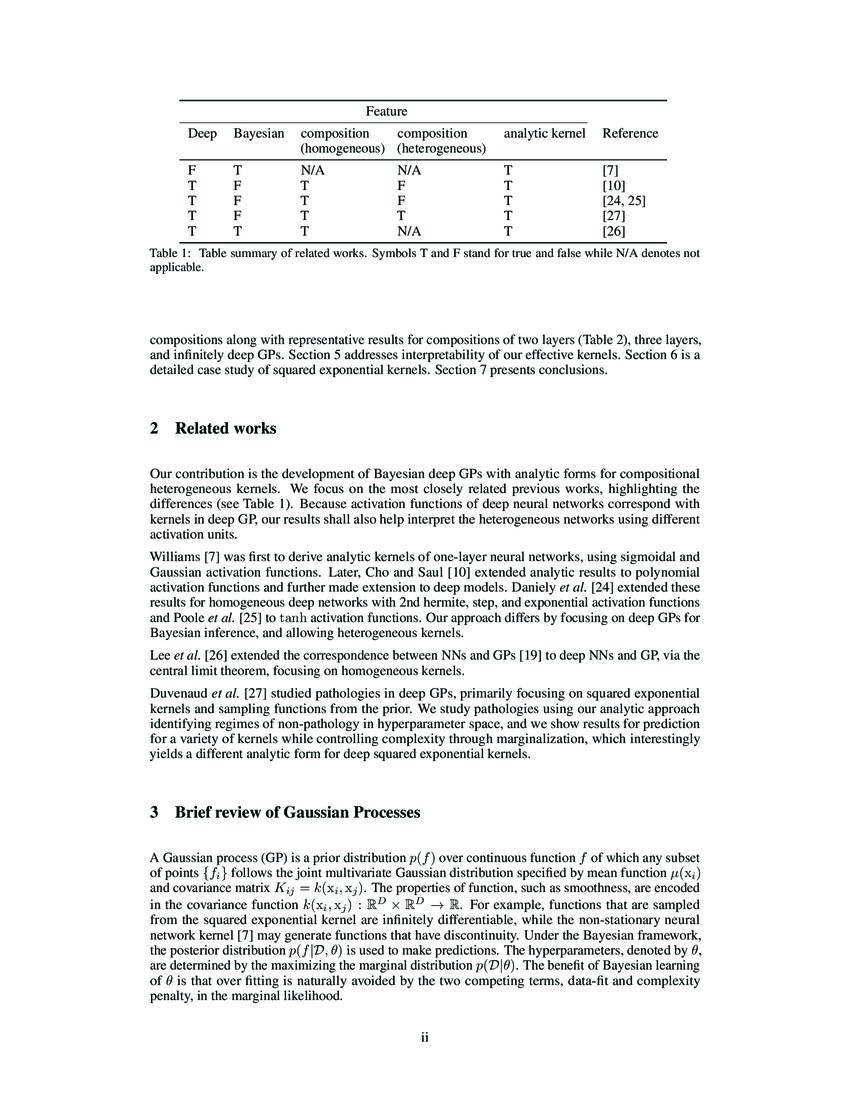

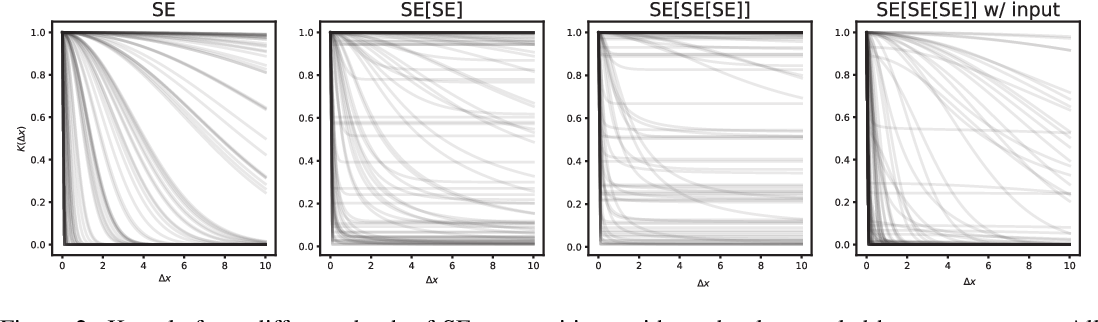

This work also provides general recipes for deriving the effective kernels. Deep Gaussian processes (DGPs) combine the expressiveness of deep neural networks (DNNs) with the quantified uncertainty of Gaussian processes (GPs). Both the expressive power and the intractable inference result from the non-Gaussian distribution over composition functions.

In this paper, we introduce GPLaSDI, a novel LaSDI-based framework that relies on Gaussian processes (GPs) for latent-space ODE interpolations. Using GPs offers two significant advantages.

Chi-Ken Lu, Scott Cheng-Hsin Yang, Xiaoran Hao, Patrick Shafto

Unfortunately, both methods are susceptible to particular pathologies which may hinder fitting and limit their interpretability. This work proposes a novel synthesis of both previous approaches: thin and deep GP (TDGP). This paper introduces a novel structure for deep Gaussian processes (DGPs) and a method for determining their depths. The proposed framework enables faster convergence of their parameters and reduces the computational cost of optimizing them while maintaining performance comparable to that of conventional DGP models.

