Install Onnx Runtime Onnxruntime Pdf Runtime System Computing

Unless stated otherwise, the installation instructions in this section refer to pre-built packages designed to perform on-device training. If the pre-built training package supports your model but is too large, you can create a custom training build. This document provides instructions for installing ONNX Runtime (ORT) for various platforms and languages. It discusses requirements, then provides separate sections for installing ORT for Python, C#/C/C++/WinML, web/mobile, and on-device training.
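After installing the Python package (on PyPI the CPU build is published as `onnxruntime`, typically installed with `pip install onnxruntime`), it can help to confirm what the interpreter actually sees. The following is a minimal sketch, not an official check: the helper name `onnxruntime_status` is hypothetical, and it only relies on `onnxruntime.__version__` and `onnxruntime.get_available_providers()`.

```python
import importlib.util


def onnxruntime_status():
    """Describe the local ONNX Runtime install (hypothetical helper)."""
    # find_spec returns None when the package cannot be located.
    if importlib.util.find_spec("onnxruntime") is None:
        return {"installed": False, "version": None, "providers": []}

    import onnxruntime as ort

    return {
        "installed": True,
        "version": ort.__version__,
        # Names of the execution providers compiled into this build.
        "providers": list(ort.get_available_providers()),
    }


if __name__ == "__main__":
    print(onnxruntime_status())
```

The function degrades to a "not installed" result instead of raising, so it can run in an environment where the package is absent.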

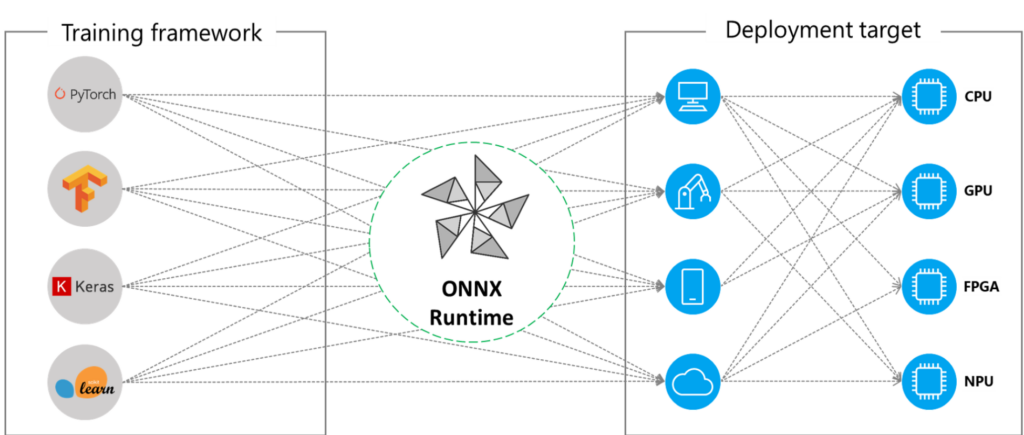

ONNX Runtime Overview

Unless stated otherwise, the installation instructions in this section refer to pre-built packages that include support for selected operators and ONNX opset versions based on the requirements of popular models. ONNX Runtime training can shorten model training time for transformer models on multi-node NVIDIA GPUs with a one-line addition to existing PyTorch training scripts.

ONNX Runtime goes through the list of execution providers in order, checks each provider's capability, and assigns nodes to a provider if it can run them. An execution provider is a hardware accelerator interface used to query its capability and get the corresponding executables.

ONNX Runtime is a performance-focused scoring engine for Open Neural Network Exchange (ONNX) models. For more information on ONNX Runtime, see aka.ms/onnxruntime or the GitHub project.
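The in-order provider check described above can be pictured with a toy model. This is only a sketch under simplifying assumptions, not ONNX Runtime's actual partitioning code: nodes are reduced to an operator type, each provider's capability is a plain set of operator types, and the graph, the capability sets, and the function `assign_nodes` are all hypothetical.

```python
def assign_nodes(nodes, providers):
    """Assign each node to the first provider in the list that can run it.

    nodes: list of (node_name, op_type) tuples.
    providers: ordered list of (provider_name, supported_op_types) tuples.
    """
    assignment = {name: None for name, _ in nodes}
    # Walk the provider list in priority order.
    for provider_name, supported_ops in providers:
        for name, op_type in nodes:
            # A provider only claims nodes that are still unassigned.
            if assignment[name] is None and op_type in supported_ops:
                assignment[name] = provider_name
    return assignment


# Hypothetical graph and capability sets, for illustration only.
nodes = [("conv1", "Conv"), ("relu1", "Relu"), ("nms1", "NonMaxSuppression")]
providers = [
    ("CUDAExecutionProvider", {"Conv", "Relu"}),
    ("CPUExecutionProvider", {"Conv", "Relu", "NonMaxSuppression"}),
]
result = assign_nodes(nodes, providers)
print(result)
```

In this toy run the first provider takes `conv1` and `relu1`, and `nms1` falls through to the CPU provider. In the Python API, the priority order is the order of the `providers` list passed to `onnxruntime.InferenceSession`.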

ONNX Runtime Pynomial

ONNX Runtime is a high-performance inference engine for ONNX (Open Neural Network Exchange) models. This page covers the architecture, deployment options, and usage of ONNX Runtime for running machine learning models in various environments.

Refer to this section to install ONNX Runtime via the pip installation method. Before installing ONNX Runtime for use with ROCm™ on Radeon™ GPUs, ensure that the following prerequisites are installed successfully: Radeon Software for Linux (with ROCm) and MIGraphX.

To use ONNX Runtime training in a custom environment, such as on-premises NVIDIA DGX-2 clusters, you can use these build instructions to generate the Python package to integrate into existing trainer code.

The ONNX Go Live tool is also available. It automates the process of shipping ONNX models by combining model conversion, correctness tests, and performance tuning into a single pipeline delivered as a series of Docker images.
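Once the ROCm and MIGraphX prerequisites are in place, one way to check that the installed build exposes them is to filter the available providers against a preference list. The sketch below is an assumption-laden illustration: the preference order and the helper `choose_providers` are hypothetical, and whether `MIGraphXExecutionProvider` or `ROCMExecutionProvider` appears depends on the package that was installed.

```python
# Assumed preference order for a Radeon setup; adjust to your build.
PREFERRED = (
    "MIGraphXExecutionProvider",
    "ROCMExecutionProvider",
    "CPUExecutionProvider",
)


def choose_providers(available, preferred=PREFERRED):
    """Keep the preferred providers that this build offers, in priority order."""
    return [name for name in preferred if name in available]


if __name__ == "__main__":
    try:
        import onnxruntime as ort

        available = ort.get_available_providers()
    except ImportError:
        # ONNX Runtime is not installed in this environment.
        available = []
    print(choose_providers(available))
```

The resulting list can be passed as the `providers` argument when creating an `onnxruntime.InferenceSession`. An empty list means none of the preferred providers is present.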

