Input Embeddings Positional Encoding The Forgotten Foundations Of

We’ve come a long way in this article, starting from how raw text is tokenized, to how those tokens are mapped into vectors through embeddings, and finally how transformers preserve word order through positional encoding. While studying this, I realized positional encoding is what makes transformers unique: instead of relying on recurrence, they encode position mathematically, which lets them scale to long sequences.
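To make "encode position mathematically" concrete, here is a minimal NumPy sketch. The article does not spell out the formulas at this point, so this assumes the standard sinusoidal scheme from "Attention Is All You Need": even dimensions use sine and odd dimensions use cosine, at frequencies that shrink geometrically across the dimensions. The function name `sinusoidal_positional_encoding` and the default `base=10000.0` are illustrative choices, not taken from the article.

```python
import numpy as np

def sinusoidal_positional_encoding(seq_len: int, d_model: int, base: float = 10000.0) -> np.ndarray:
    """Return a (seq_len, d_model) matrix of sinusoidal positional encodings.

    Assumed scheme (from "Attention Is All You Need"):
      PE[pos, 2i]   = sin(pos / base**(2i / d_model))
      PE[pos, 2i+1] = cos(pos / base**(2i / d_model))
    """
    if d_model % 2 != 0:
        raise ValueError("d_model must be even for this sketch")
    positions = np.arange(seq_len)[:, np.newaxis]           # shape (seq_len, 1)
    i = np.arange(d_model // 2)[np.newaxis, :]              # shape (1, d_model / 2)
    angles = positions / np.power(base, 2 * i / d_model)    # shape (seq_len, d_model / 2)
    pe = np.zeros((seq_len, d_model))
    pe[:, 0::2] = np.sin(angles)   # even dimensions use sine
    pe[:, 1::2] = np.cos(angles)   # odd dimensions use cosine
    return pe

pe = sinusoidal_positional_encoding(seq_len=50, d_model=16)
print(pe.shape)  # (50, 16)
```

Each row is the encoding for one position. Row 0 alternates 0 and 1 because sin(0) = 0 and cos(0) = 1, and no learned parameters are involved, so the same function covers any sequence length.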

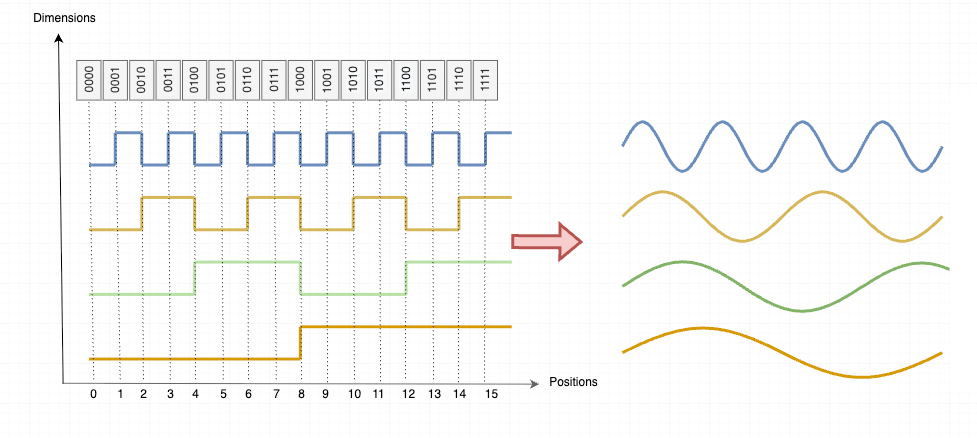

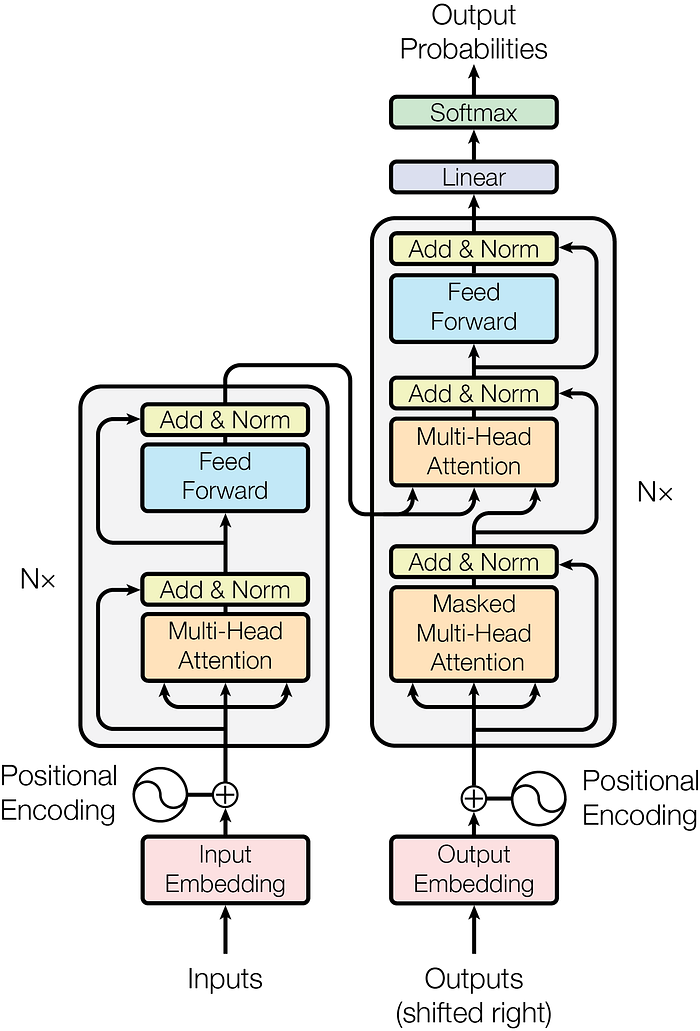

This document describes the embedding and positional encoding components of the transformer model. These components are critical: they convert input tokens into continuous vector representations and incorporate sequence-order information into the model. A rigorous mathematical exploration of transformer positional encodings reveals how sinusoidal functions encode sequence order through linear transformations, inner-product properties, and asymptotic decay behaviors that balance local and global attention.

Positional encoding is a technique that adds information about the position of each token in the sequence to the input embeddings. This helps transformers understand the relative or absolute position of tokens, which is important for differentiating between words in different positions and for capturing the structure of a sentence.

Third, the best achievable approximation to an information-optimal encoding is constructed via classical multidimensional scaling (MDS) on the Hellinger distance between positional distributions; the quality of any encoding is measured by a single number, the stress (Proposition 5, Algorithm 1).
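The linear-transformation and inner-product properties mentioned above can be checked numerically. This minimal sketch again assumes the standard sinusoidal scheme (sine on even dimensions, cosine on odd). It verifies that (1) for a fixed offset $k$ a single matrix $M_k$, block-diagonal with $2 \times 2$ rotations, satisfies $PE_{pos+k} = M_k\,PE_{pos}$ for every position, and (2) the inner product $PE_{pos} \cdot PE_{pos+k} = \sum_i \cos(\omega_i k)$ depends only on $k$, not on $pos$. The helper names `sinusoidal_pe` and `shift_matrix` are hypothetical.

```python
import numpy as np

def sinusoidal_pe(seq_len, d_model, base=10000.0):
    """Sinusoidal encodings (assumed scheme): sin on even dims, cos on odd dims."""
    pos = np.arange(seq_len)[:, None]
    freqs = 1.0 / np.power(base, np.arange(0, d_model, 2) / d_model)  # omega_i
    pe = np.zeros((seq_len, d_model))
    pe[:, 0::2] = np.sin(pos * freqs)
    pe[:, 1::2] = np.cos(pos * freqs)
    return pe, freqs

def shift_matrix(k, freqs):
    """Hypothetical helper: block-diagonal M_k with PE[pos + k] = M_k @ PE[pos] for all pos."""
    d_model = 2 * len(freqs)
    m = np.zeros((d_model, d_model))
    for i, w in enumerate(freqs):
        c, s = np.cos(w * k), np.sin(w * k)
        # Rotate the (sin, cos) pair of frequency w by the angle w * k.
        m[2 * i:2 * i + 2, 2 * i:2 * i + 2] = [[c, s], [-s, c]]
    return m

d_model, seq_len, k = 16, 64, 5
pe, freqs = sinusoidal_pe(seq_len, d_model)
M = shift_matrix(k, freqs)

# (1) One fixed linear map sends every position p to p + k (rows are vectors, hence M.T).
print(np.allclose(pe[k:], pe[:-k] @ M.T))  # True

# (2) PE[p] . PE[p + k] is the same for every p: it equals sum_i cos(omega_i * k).
dots = np.einsum("pd,pd->p", pe[:-k], pe[k:])
print(np.allclose(dots, np.cos(freqs * k).sum()))  # True

# (3) Similarity to position 10 peaks at offset 0 (value d_model / 2)
#     and is strictly smaller at every other integer offset checked.
sims = np.array([pe[10] @ pe[10 + j] for j in range(40)])
print(float(round(sims[0], 6)), bool(sims[1:].max() < sims[0]))  # 8.0 True
```

The third check hints at the decay behavior: similarity is largest at offset 0 and lower elsewhere, although it oscillates rather than falling monotonically, because the high-frequency dimensions decorrelate quickly while the low-frequency ones change slowly.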
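The MDS construction described above can be sketched generically. The article does not define the positional distributions or reproduce Algorithm 1, so everything below is an assumption for illustration. Each position gets a hypothetical probability distribution (a discretized Gaussian over 64 bins). Distances use the Hellinger convention $H(P, Q) = \tfrac{1}{\sqrt{2}}\lVert\sqrt{P} - \sqrt{Q}\rVert_2$. The embedding comes from classical (Torgerson) MDS, and "stress" is taken to be Kruskal's stress-1. The referenced proposition may define stress differently, and all function names are hypothetical.

```python
import numpy as np

def hellinger_matrix(P):
    """Pairwise Hellinger distances between the rows of P (each row sums to 1)."""
    R = np.sqrt(P)
    # H(p, q) = ||sqrt(p) - sqrt(q)||_2 / sqrt(2), which lies in [0, 1].
    return np.linalg.norm(R[:, None, :] - R[None, :, :], axis=-1) / np.sqrt(2)

def classical_mds(D, dim):
    """Classical (Torgerson) MDS: embed the distance matrix D in `dim` dimensions."""
    n = D.shape[0]
    J = np.eye(n) - np.ones((n, n)) / n                 # centering matrix
    B = -0.5 * J @ (D ** 2) @ J                          # double-centered Gram matrix
    eigvals, eigvecs = np.linalg.eigh(B)                 # eigenvalues in ascending order
    top = np.argsort(eigvals)[::-1][:dim]                # indices of the `dim` largest
    scale = np.sqrt(np.clip(eigvals[top], 0.0, None))    # clip round-off negatives
    return eigvecs[:, top] * scale

def stress(D, X):
    """Kruskal stress-1 (an assumed choice) between D and the distances of embedding X."""
    D_hat = np.linalg.norm(X[:, None, :] - X[None, :, :], axis=-1)
    return np.sqrt(((D - D_hat) ** 2).sum() / (D ** 2).sum())

# Hypothetical positional distributions: position p is a discretized Gaussian
# over 64 bins centered at bin 2p (illustration only).
n_pos, n_bins, width = 32, 64, 3.0
bins = np.arange(n_bins)
centers = 2 * np.arange(n_pos)
P = np.exp(-0.5 * ((bins[None, :] - centers[:, None]) / width) ** 2)
P /= P.sum(axis=1, keepdims=True)

D = hellinger_matrix(P)
for dim in (2, 4, 8, 16):
    print(dim, round(float(stress(D, classical_mds(D, dim))), 4))
```

Because the Hellinger distance is itself a Euclidean distance (between square-root vectors), classical MDS reproduces it exactly once enough dimensions are kept, so in this setup the stress can only shrink as `dim` grows. A low stress at a small dimension means a compact encoding preserves the distributional geometry well.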

The transformation from raw text to model input flows through several key layers: tokenized text, token IDs, token embeddings, positional embeddings, and the final input embeddings. Let’s explore each layer.

In the animation above, we create the positional encoding vector for the token “chased” from its index and add it to its token embedding. The embedding values shown are a subset of the real values from Llama 3.2 1B.

We will first look at the input embedding layer, which is responsible for converting input tokens into continuous vector representations. The focus will then shift to techniques for encoding positional information:

- Input embeddings: converting input tokens (such as words or subwords) into dense vectors.
- Positional encoding: adding information about the position of each token in the sequence.
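The layers listed above can be traced end to end in a toy example. This is a minimal sketch under several assumptions. A whitespace split stands in for a real subword tokenizer. The vocabulary and the randomly initialized embedding table are hypothetical and are not Llama 3.2 1B's values. The positional vectors use the standard sinusoidal scheme and are added elementwise to the token embeddings.

```python
import numpy as np

rng = np.random.default_rng(0)
d_model = 8

# 1) Tokenized text: a toy whitespace split (real models use subword tokenizers).
tokens = "the cat chased the mouse".split()

# 2) Token IDs from a hypothetical toy vocabulary.
vocab = {tok: idx for idx, tok in enumerate(sorted(set(tokens)))}
token_ids = np.array([vocab[t] for t in tokens])

# 3) Token embeddings: one row per vocabulary entry (random here, not trained values).
embedding_table = rng.normal(size=(len(vocab), d_model))
token_embeddings = embedding_table[token_ids]          # (seq_len, d_model)

# 4) Positional embeddings for indices 0..seq_len-1 (sinusoidal scheme assumed).
def sinusoidal_pe(seq_len, d_model, base=10000.0):
    pos = np.arange(seq_len)[:, None]
    freqs = 1.0 / np.power(base, np.arange(0, d_model, 2) / d_model)
    pe = np.zeros((seq_len, d_model))
    pe[:, 0::2] = np.sin(pos * freqs)
    pe[:, 1::2] = np.cos(pos * freqs)
    return pe

positional = sinusoidal_pe(len(tokens), d_model)

# 5) Final input embeddings: token embedding + positional embedding, elementwise.
input_embeddings = token_embeddings + positional

# "chased" sits at index 2, so its input vector uses positional row 2.
i = tokens.index("chased")
print(i, np.allclose(input_embeddings[i], embedding_table[vocab["chased"]] + positional[i]))  # 2 True

# The two occurrences of "the" share a token embedding but not an input embedding.
print(np.allclose(token_embeddings[0], token_embeddings[3]),
      np.allclose(input_embeddings[0], input_embeddings[3]))  # True False
```

The last line shows what positional encoding contributes. Without it, both occurrences of "the" would reach the model as identical vectors.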
