Ingest PostgreSQL Metadata on DataHub

The DataHub integration for Postgres covers core metadata entities such as datasets (tables and views), schema fields, and containers. Depending on module capabilities, it can also capture features such as lineage, usage, profiling, ownership, tags, and stateful deletion detection. DataHub is an open source metadata platform for the modern data stack. SQLFlow has also been integrated into DataHub, so that SQLFlow data lineage is available in the DataHub UI.

In this blog, we'll take a closer look at how you can ingest a PostgreSQL database into DataHub, explore its key features, and see how it can help you manage and visualize your data with ease. There is also a hands-on tutorial on building dbt models on a Postgres database and ingesting the resulting metadata into DataHub.

Pull-based integrations allow you to "crawl" or "ingest" metadata from data systems by connecting to them and extracting metadata in a batch or incremental-batch manner.

One implementation detail of the Postgres source is worth knowing: it imports GeoAlchemy's geometry type even though the type itself is never used. The import matters only for its side effects, because importing the package is what lets it hook into SQLAlchemy.
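As a rough illustration of a pull-based run, the sketch below builds a recipe (one source, one sink) as a Python dictionary and hands it to DataHub's `Pipeline` class. The helper names `build_postgres_recipe` and `run_ingestion` are hypothetical, the connection values are placeholders, and the sink assumes a DataHub instance listening at `http://localhost:8080`. Treat it as a minimal sketch under those assumptions, not a complete configuration.

```python
from typing import Any, Dict


def build_postgres_recipe(
    host_port: str,
    database: str,
    username: str,
    password: str,
    datahub_server: str = "http://localhost:8080",  # assumed local DataHub endpoint
) -> Dict[str, Any]:
    """Return a recipe dict with a Postgres source and a DataHub REST sink."""
    return {
        "source": {
            "type": "postgres",
            "config": {
                "host_port": host_port,
                "database": database,
                "username": username,
                "password": password,
            },
        },
        "sink": {
            "type": "datahub-rest",
            "config": {"server": datahub_server},
        },
    }


def run_ingestion(recipe: Dict[str, Any]) -> None:
    """Run one batch ingestion. Requires `pip install 'acryl-datahub[postgres]'`."""
    # Imported lazily so the recipe can be built and inspected
    # on a machine where the DataHub package is not installed.
    from datahub.ingestion.run.pipeline import Pipeline

    pipeline = Pipeline.create(recipe)
    pipeline.run()
    # Raise an exception if the run reported failures.
    pipeline.raise_from_status()
```

Calling `run_ingestion(build_postgres_recipe("localhost:5432", "mydb", "datahub_reader", "..."))` would then connect to the database, extract its metadata in one batch, and push it to the sink. The same recipe can be written as YAML and run with the `datahub ingest -c <recipe file>` command instead.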

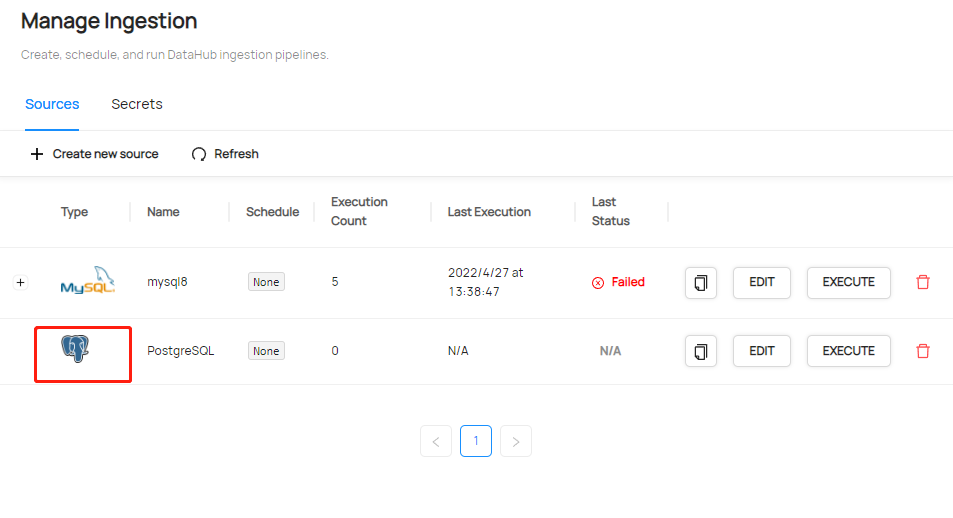

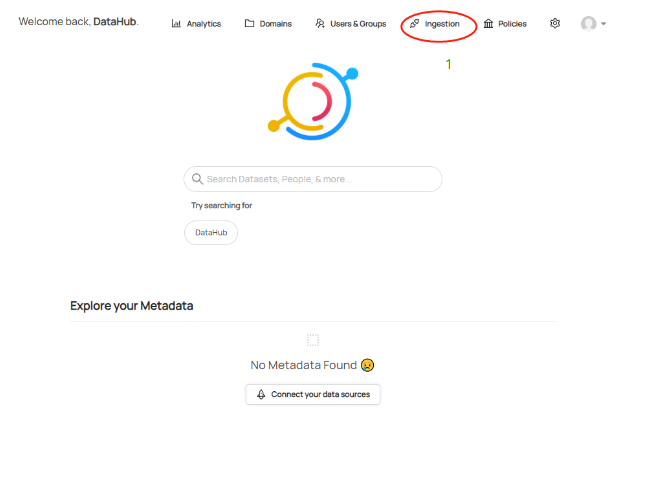

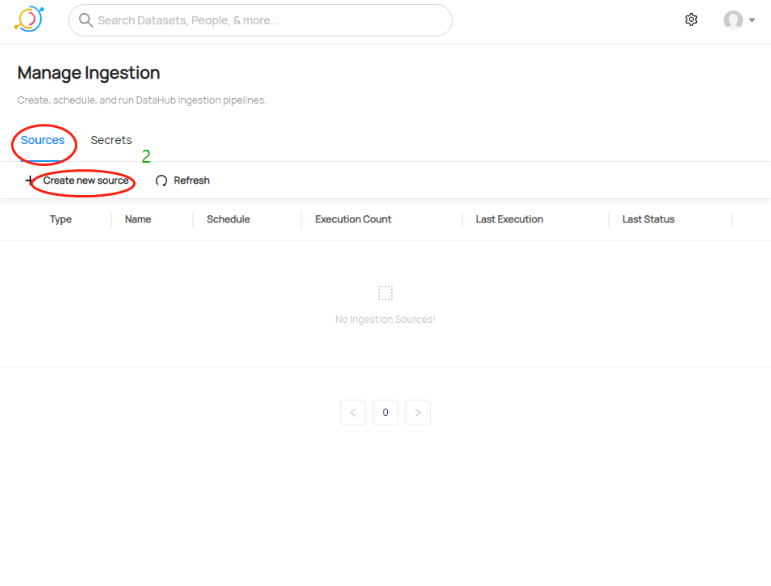

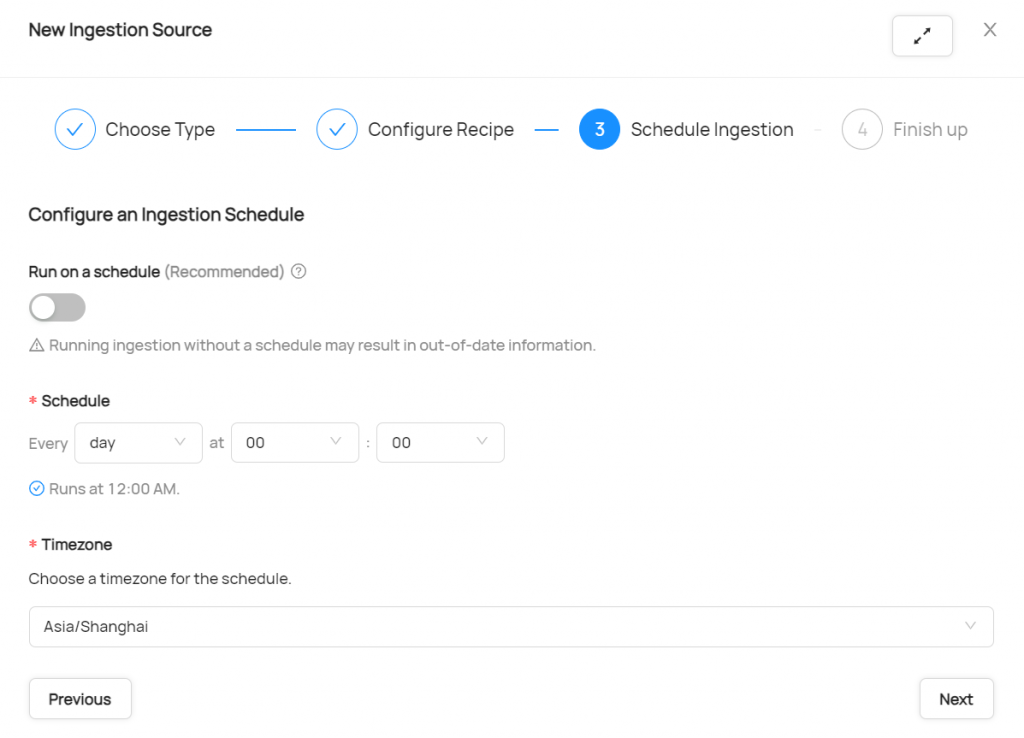

Starter recipe: the DataHub documentation provides a starter recipe to get started with ingestion, together with the full list of configuration options. For general pointers on writing and running a recipe, see the main recipe guide.

If ingestion fails with similar connection errors in more than one tool (for example, both DataHub and OpenMetadata), the cause may be a Docker network problem: the Postgres container may not be visible to the other containers.

Ingestion settings: configure ingestion behavior, including profiling, stale metadata handling, and other operational settings. The defaults represent best practices for most use cases.

Starting in version 0.8.25, DataHub supports creating, configuring, scheduling, and executing batch metadata ingestion from the DataHub user interface. This makes getting metadata into DataHub easier by minimizing the overhead required to operate custom integration pipelines.
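One quick way to test the Docker networking suspicion is to check, from inside the container that runs ingestion, whether the Postgres host and port accept TCP connections at all. The sketch below uses only the Python standard library. The function name `can_reach` is hypothetical, and the host name `postgres` in the usage note is an assumed Docker service name, not something taken from a real setup.

```python
import socket


def can_reach(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        # create_connection resolves the host name and opens a TCP connection.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers DNS failures, refused connections, and timeouts.
        return False
```

Running `can_reach("postgres", 5432)` inside the ingestion container and getting `False` would suggest that the two containers do not share a network or that the host name does not resolve there. A `True` result only shows that the port is open, so authentication or database name problems would still need to be checked separately.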
