Inference Optimization: Envoy AI Gateway

Envoy AI Gateway offers ways to improve the speed and reliability of AI and LLM workloads. This section explains how it uses intelligent routing and load balancing to distribute inference requests efficiently across different backend endpoints. Envoy AI Gateway is an open source project for using Envoy Gateway to handle request traffic from application clients to generative AI services. Deployments of Envoy AI Gateway typically follow a two-tier gateway pattern.

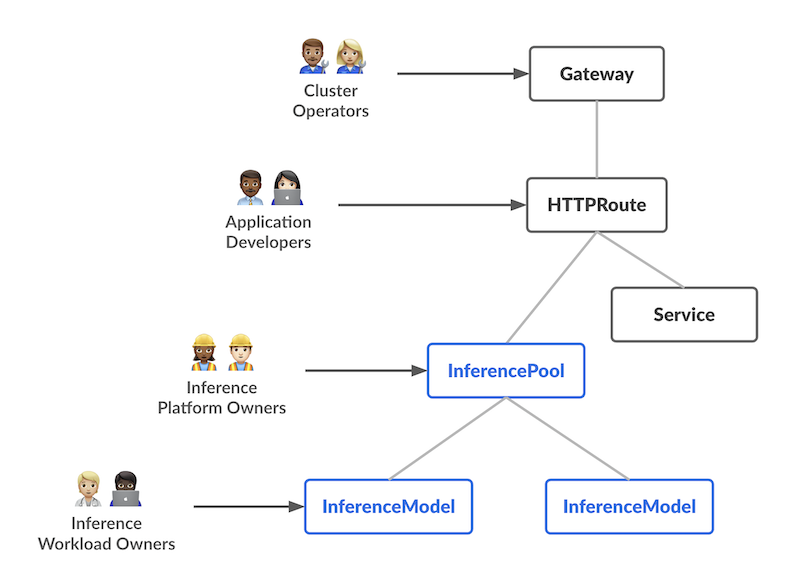

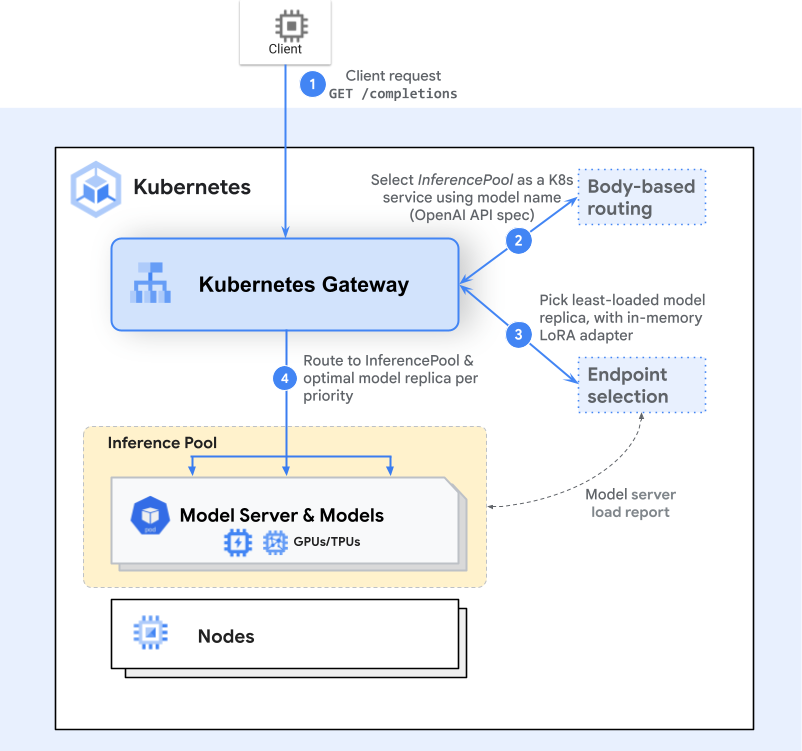

In this tutorial you'll deploy an LLMInferenceService that creates a router and an inference pool, and configure AI Gateway to route OpenAI-compatible requests to it while tracking token usage. The inference pool provides optimized load balancing for self-hosted generative AI models on Kubernetes, as part of a project whose goal is to improve and standardize routing to inference workloads across the ecosystem.

- Two-tier architecture: a reference architecture with a centralized entry gateway (tier 1) for auth and global routing, and per-cluster gateways (tier 2) for inference optimization.
- CNCF ecosystem native: runs on Kubernetes and composes with existing Envoy filters, Wasm plugins, and standard Kubernetes Gateway API resources.

This solution combines Envoy Gateway, Envoy AI Gateway, and InferencePool to address the key challenges enterprises face when deploying generative AI services in production: vendor lock-in, weak security controls, limited cost visibility, and complex operations and maintenance (O&M).
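To make the OpenAI-compatible request flow and token tracking concrete, here is a minimal Python sketch. It assumes the gateway exposes the standard OpenAI chat completions path (`/v1/chat/completions`). The gateway URL and model name are hypothetical placeholders, and the sample response is hand-written for illustration rather than captured from a real deployment. No network call is made.

```python
import json

# Hypothetical values for illustration; substitute your own gateway address and model.
GATEWAY_URL = "http://localhost:8080"
MODEL_NAME = "example-model"


def build_chat_request(model: str, prompt: str) -> dict:
    """Build an OpenAI-compatible chat completions request body."""
    return {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }


def extract_token_usage(response_body: dict) -> dict:
    """Read token counts from the `usage` object of an OpenAI-compatible response.

    Missing fields default to 0 so callers can always sum the result.
    """
    usage = response_body.get("usage", {})
    return {
        "prompt_tokens": usage.get("prompt_tokens", 0),
        "completion_tokens": usage.get("completion_tokens", 0),
        "total_tokens": usage.get("total_tokens", 0),
    }


# Hand-written sample response, not output from a real model.
sample_response = {
    "choices": [{"message": {"role": "assistant", "content": "Hello!"}}],
    "usage": {"prompt_tokens": 9, "completion_tokens": 3, "total_tokens": 12},
}

request_body = build_chat_request(MODEL_NAME, "Say hello")
endpoint = f"{GATEWAY_URL}/v1/chat/completions"
usage = extract_token_usage(sample_response)

print(endpoint)
print(json.dumps(request_body))
print(usage)
```

The `model` field in the request body is what a gateway can use to pick a backend, and the `usage` object in the response is the natural source for per-request token accounting.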

This article is a hands-on guide to installing Envoy AI Gateway (v0.3.0) on Kubernetes with Terraform, step by step. An AI gateway is the layer between your applications and your AI model. Envoy AI Gateway is engineered to address the particular challenges of managing AI inference traffic, and it builds directly on the Envoy Gateway framework.

This guide demonstrates how to use InferencePool with AIGatewayRoute for AI-specific inference routing. This approach provides features such as model-based routing, token rate limiting, and advanced observability.
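The ideas of model-based routing and token rate limiting can be illustrated with a small, self-contained Python sketch. This is a conceptual model only, not Envoy AI Gateway's implementation or API: the `ModelRouter` class, the pool and model names, and the budget size are all hypothetical, and the budget here has no time window, whereas a real rate limiter would normally reset it periodically.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass
class ModelRouter:
    """Toy router: maps a model name to a backend pool and enforces a per-user token budget."""

    routes: Dict[str, str]  # model name -> backend pool name (hypothetical names)
    token_limit: int        # tokens each user may spend (no time window in this sketch)
    used: Dict[str, int] = field(default_factory=dict)

    def select_pool(self, user: str, model: str) -> Optional[str]:
        """Return the pool for `model`, or None if the model is unknown
        or the user's token budget is already spent."""
        if self.used.get(user, 0) >= self.token_limit:
            return None
        return self.routes.get(model)

    def record_usage(self, user: str, total_tokens: int) -> None:
        """Charge the tokens reported in a response against the user's budget."""
        self.used[user] = self.used.get(user, 0) + total_tokens


router = ModelRouter(
    routes={"model-a": "pool-a", "model-b": "pool-b"},
    token_limit=100,
)

first = router.select_pool("alice", "model-a")   # routed by model name
router.record_usage("alice", 120)                # the response consumed 120 tokens
second = router.select_pool("alice", "model-a")  # budget exhausted, request rejected
other = router.select_pool("bob", "model-b")     # budgets are tracked per user

print(first, second, other)
```

The sketch charges tokens after a response is seen, because the token count is only known once the model has answered; a request that pushes a user over the limit still completes, and later requests are the ones rejected.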

