Improving Stream Data Quality With Protobuf Schema Validation Confluent

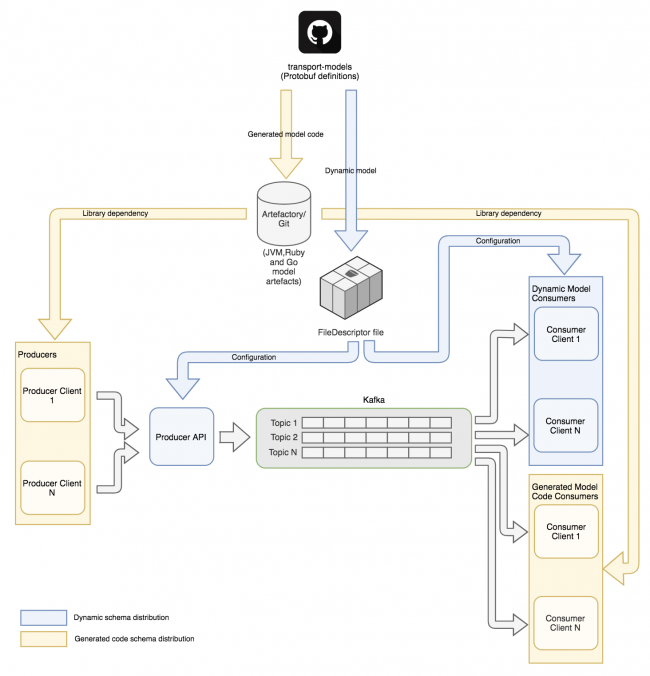

This article describes how we came to implement a flexible, managed repository for the Protobuf schemas flowing on Franz, and how we designed a reliable schema contract between producer and consumer applications. Consume streaming Protobuf messages with schemas managed by the Confluent Schema Registry, handling schema evolution gracefully. Demultiplex (demux) messages into multiple game-specific, append-only silver streaming tables.
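Before a consumer can decode or demux such messages, it has to separate the Schema Registry framing from the Protobuf payload. The following is a minimal sketch, assuming records were written by Confluent's Protobuf serializer, which prefixes each payload with a magic byte, a 4-byte big-endian schema ID, and a zigzag-varint list of message indexes. The function names are illustrative and do not come from any of the articles quoted here.

```python
import struct

MAGIC_BYTE = 0  # first byte of every Schema Registry framed record


def read_zigzag_varint(buf, pos):
    """Decode one zigzag-encoded varint at buf[pos]; return (value, next_pos)."""
    shift = 0
    raw = 0
    while True:
        byte = buf[pos]
        pos += 1
        raw |= (byte & 0x7F) << shift
        if not byte & 0x80:  # high bit clear: last byte of this varint
            break
        shift += 7
    return (raw >> 1) ^ -(raw & 1), pos


def split_confluent_protobuf(record):
    """Split a framed record into (schema_id, message_indexes, payload)."""
    if len(record) < 6 or record[0] != MAGIC_BYTE:
        raise ValueError("not a Schema Registry framed Protobuf record")
    schema_id = struct.unpack(">I", record[1:5])[0]
    count, pos = read_zigzag_varint(record, 5)
    indexes = []
    for _ in range(count):
        index, pos = read_zigzag_varint(record, pos)
        indexes.append(index)
    if not indexes:
        # A single 0 byte is shorthand for [0]: the first message in the file.
        indexes = [0]
    return schema_id, indexes, record[pos:]
```

The returned schema ID tells the consumer which registered schema to fetch, and the message indexes identify which message type in that `.proto` file the payload holds. A demux step could then route the decoded message to a per-game table.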

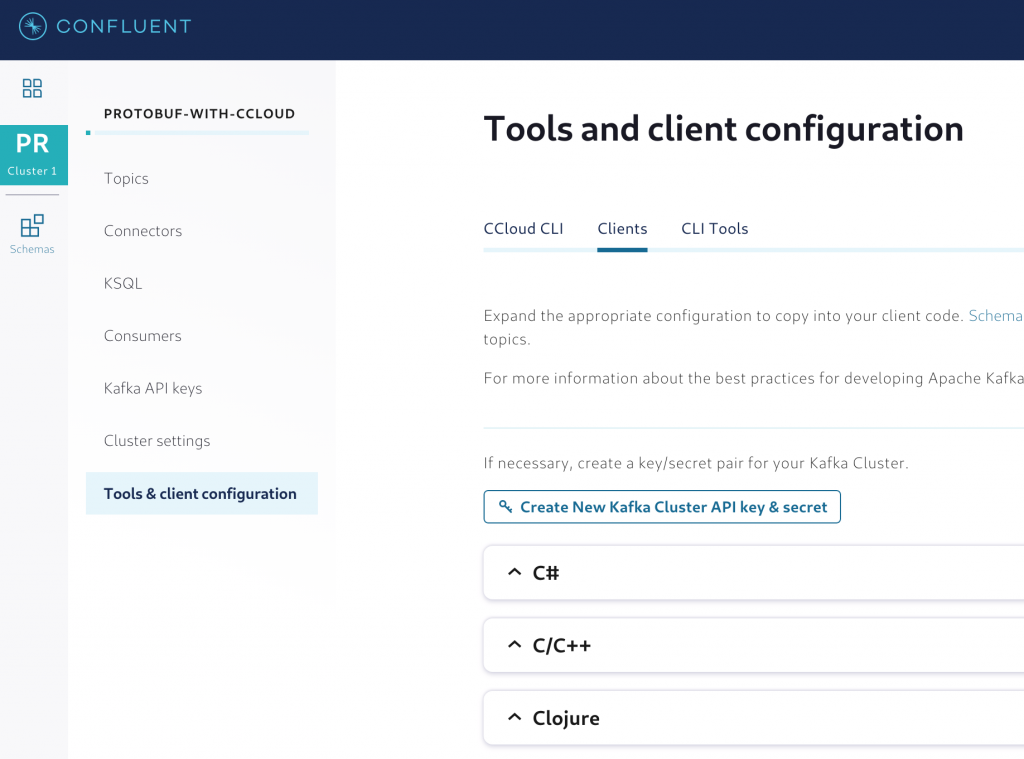

Schema Validation With Confluent Platform 5.4

The compact and efficient serialization of Protobuf, together with the schema management capabilities of the Confluent Schema Registry, makes it a great choice for handling structured data in a Kafka environment. This page details how Protocol Buffers (Protobuf) schemas are supported in Confluent's Schema Registry. It covers the architecture, implementation, and usage of Protobuf schemas within the Schema Registry ecosystem. For information about other schema formats, see Avro schemas and JSON schemas. The purpose of this intro is to demonstrate how a producer can register schemas with an instance of the Confluent Schema Registry running locally in a Docker container, together with the Kafka message broker.
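Registering a Protobuf schema with a local registry can be sketched with the Schema Registry REST API, which accepts a `POST` to `/subjects/{subject}/versions` with `schemaType` set to `PROTOBUF`. The sketch below uses only the Python standard library. The registry address `http://localhost:8081`, the subject `orders-value`, and the `OrderCreated` message are assumptions made for illustration and are not taken from the articles above.

```python
import json
import urllib.parse
import urllib.request

# Hypothetical schema used only for this example.
ORDER_PROTO = """
syntax = "proto3";

message OrderCreated {
  string order_id = 1;
  int64 created_at_ms = 2;
}
"""


def build_register_request(registry_url, subject, proto_source):
    """Build the HTTP request that registers a Protobuf schema under a subject."""
    body = json.dumps({"schemaType": "PROTOBUF", "schema": proto_source})
    quoted_subject = urllib.parse.quote(subject, safe="")
    return urllib.request.Request(
        url=f"{registry_url.rstrip('/')}/subjects/{quoted_subject}/versions",
        data=body.encode("utf-8"),
        headers={"Content-Type": "application/vnd.schemaregistry.v1+json"},
        method="POST",
    )


def register_schema(request):
    """Send the request and return the schema ID assigned by the registry."""
    with urllib.request.urlopen(request) as response:
        return json.load(response)["id"]
```

With a registry running in Docker, `register_schema(build_register_request("http://localhost:8081", "orders-value", ORDER_PROTO))` would return the numeric ID that serializers later embed in each message. In practice a producer using Confluent's serializers can register the schema automatically, so an explicit call like this is mostly useful for CI checks or manual setup.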

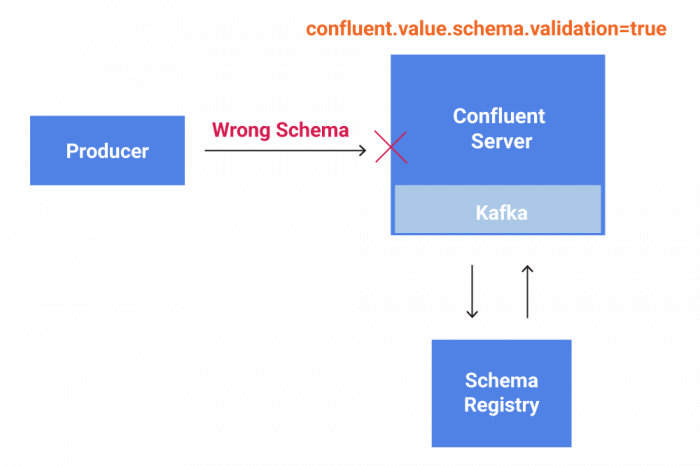

Using Data Contracts To Ensure Data Quality And Reliability

Practical strategies to manage schema changes in streaming systems using Avro, Protobuf, and Confluent Schema Registry. This article demonstrates how to use Apache Spark Structured Streaming to process data streams stored in Kafka topics, even when the data is serialized in Protobuf format. All in all, combining a comprehensive schema generator with a mutation engine and consistently getting the same response from Confluent’s and WarpStream’s schema registries over hundreds of thousands of tests gave us exceptional confidence in the correctness of our Protobuf schema registry. Now, with the introduction of our schema-aware brokers for Bufstream, we've simplified the streaming data quality problem by focusing on Protobuf-driven semantic validation at the broker rather than pushing the problem onto the producers and consumers.
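To make the idea of managing schema changes concrete, here is a deliberately simplified sketch of one check a data contract might enforce: a field number must never be reused with a different type. It models a message as a plain dictionary from field number to `(name, type)`, which is an assumption made for illustration. Real registries apply a richer rule set and accept some type changes that this sketch rejects, such as `int32` to `int64`.

```python
def breaking_changes(old_fields, new_fields):
    """Report field numbers whose type differs between two schema versions.

    Both arguments map a Protobuf field number to a (name, type) tuple.
    """
    problems = []
    for number, (old_name, old_type) in old_fields.items():
        if number not in new_fields:
            # Removing a field keeps old data readable, provided the
            # number is reserved and never reused.
            continue
        _new_name, new_type = new_fields[number]
        if new_type != old_type:
            problems.append(
                f"field {number} ({old_name}): type changed "
                f"from {old_type} to {new_type}"
            )
    return problems
```

Adding a new field with a fresh number passes this check, while changing an existing field from `int32` to `string` is reported. Renames pass as well, because the Protobuf wire format identifies fields by number rather than by name.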

