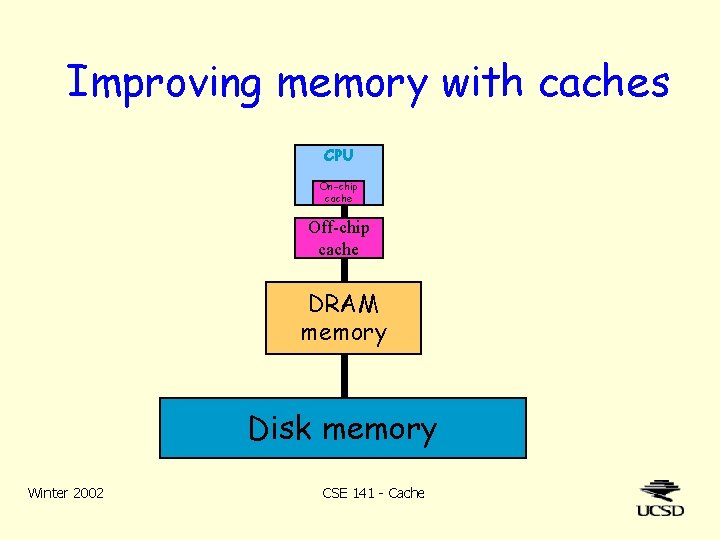

Improving Memory with Caches (CPU, On-Chip Cache, Off-Chip)

Caches exploit two kinds of locality:

- Temporal locality: bring data into the cache whenever it is referenced, and evict something that has not been used recently.
- Spatial locality: bring in a block of contiguous data (a cache line), not just the requested data.

The novelty of this study lies in its adaptive approach to cache memory implementation, which integrates advanced cache replacement policies, predictive memory access techniques, and …
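The two locality principles above can be illustrated with a toy cache model. This is a minimal sketch, assuming a set-associative cache with least-recently-used (LRU) replacement; the class `LRUCache` and its parameters are hypothetical and are not the adaptive design the study describes. A miss fills a whole line (spatial locality), and a hit refreshes that line's recency so the least recently used line is the one evicted (temporal locality).

```python
from collections import OrderedDict


class LRUCache:
    """Toy set-associative cache that tracks which lines are present (no data)."""

    def __init__(self, num_sets: int, ways: int, line_size: int) -> None:
        self.num_sets = num_sets
        self.ways = ways
        self.line_size = line_size
        # One OrderedDict per set: keys are tags, order is recency (last = most recent).
        self.sets = [OrderedDict() for _ in range(num_sets)]
        self.hits = 0
        self.misses = 0

    def access(self, addr: int) -> bool:
        """Return True on a hit; on a miss, fill the whole line and return False."""
        line_number = addr // self.line_size  # spatial locality: work in whole lines
        index = line_number % self.num_sets
        tag = line_number // self.num_sets
        cache_set = self.sets[index]
        if tag in cache_set:
            cache_set.move_to_end(tag)  # temporal locality: mark as most recently used
            self.hits += 1
            return True
        self.misses += 1
        if len(cache_set) >= self.ways:
            cache_set.popitem(last=False)  # evict the least recently used line
        cache_set[tag] = True
        return False


# Scan 256 bytes twice with 64-byte lines; 8 lines of capacity hold all 4 lines.
cache = LRUCache(num_sets=4, ways=2, line_size=64)
for _ in range(2):
    for addr in range(256):
        cache.access(addr)
print(cache.hits, cache.misses)  # 508 4
```

The first pass misses only once per 64-byte line, and the second pass hits every time because the whole working set still fits.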

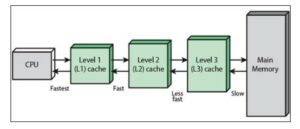

Types of Cache Memory in a CPU

The advantage of storing data in a cache rather than in RAM is faster retrieval, at the cost of on-chip energy. The research evaluates cache performance using three variables: miss rate, miss penalty, and hit time (the time to access the cache). In this paper, we revisit the cache line size trade-offs with a focus on off-chip caches, to understand whether commonly used line sizes, typically in the range of 64–256 bytes, are good trade-offs in light of energy dissipation.

A common organisation is the two-level cache design: the first-level (L1), or primary, cache is internal to the CPU chip (on-chip), and the second-level (L2), or secondary, cache is on the CPU board (off-chip). Rather than treating the cache as a single monolithic block, it can be divided into independent banks to support simultaneous accesses; the ARM Cortex-A8 supports one to four banks in its L2 cache.
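The three metrics combine into the standard average memory access time (AMAT) formula: AMAT = hit time + miss rate × miss penalty. In a two-level design, the L1 miss penalty is itself the AMAT of the L2. A minimal sketch follows; the function names and the example latencies (in cycles) are illustrative assumptions, not figures from the research cited above.

```python
def amat(hit_time: float, miss_rate: float, miss_penalty: float) -> float:
    """Average memory access time = hit time + miss rate * miss penalty."""
    return hit_time + miss_rate * miss_penalty


def amat_two_level(l1_hit: float, l1_miss_rate: float,
                   l2_hit: float, l2_local_miss_rate: float,
                   memory_penalty: float) -> float:
    """Two-level AMAT: an L1 miss costs the AMAT of the (off-chip) L2."""
    # l2_local_miss_rate is the fraction of L1 misses that also miss in L2.
    l1_miss_penalty = amat(l2_hit, l2_local_miss_rate, memory_penalty)
    return amat(l1_hit, l1_miss_rate, l1_miss_penalty)


# Hypothetical latencies: L1 hit 1 cycle, L2 hit 10 cycles, main memory 100 cycles.
print(amat(1, 0.05, 100))                       # 6.0 (L1 only)
print(amat_two_level(1, 0.05, 10, 0.2, 100))    # 2.5 (L1 + L2)
```

With these made-up numbers, adding the L2 cuts the effective L1 miss penalty from 100 to 30 cycles.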
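Banking can be sketched under the assumption of simple line-interleaved mapping, where consecutive cache lines go to consecutive banks, so accesses to different banks can be served in the same cycle. The source does not say how the Cortex-A8 maps addresses to its L2 banks; `bank_of`, `can_proceed_in_parallel`, and the 64-byte line / 4-bank defaults are hypothetical.

```python
def bank_of(addr: int, line_size: int = 64, num_banks: int = 4) -> int:
    """Line-interleaved banking: consecutive cache lines map to consecutive banks."""
    return (addr // line_size) % num_banks


def can_proceed_in_parallel(addrs, line_size: int = 64, num_banks: int = 4) -> bool:
    """True if every access in this cycle targets a distinct bank.

    Simplification: two accesses to the same bank always count as a conflict,
    even if they hit the same line.
    """
    banks = [bank_of(a, line_size, num_banks) for a in addrs]
    return len(banks) == len(set(banks))


print(can_proceed_in_parallel([0, 64]))   # True: banks 0 and 1
print(can_proceed_in_parallel([0, 256]))  # False: both map to bank 0
```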

On-Chip vs Off-Chip Memory: Good to Know of Another Form Factor (Ki Wook)

The cache is implemented in FPGA registers rather than in on-chip memory. By pulling memory accesses preferentially from the register cache, the loop-carried dependency is broken.

This paper investigates the system-level impact of soft errors occurring in cache memory and proposes a novel cache memory design approach for improving soft-error resilience.

We present a technique for efficiently exploiting on-chip scratch-pad memory by partitioning the application's scalar and array variables between off-chip DRAM and on-chip scratch-pad SRAM, with the goal of minimizing the total execution time of embedded applications.

When the CPU asks for a value from memory and that value is already in the cache, it can get it quickly; this is called a cache hit. When the CPU asks for a value that is not already in the cache, it has to go off the chip to get it; this is called a cache miss. … transferred in much smaller quantities, each called …
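The hit/miss check can be illustrated with the simplest organisation, a direct-mapped cache, in which each address maps to exactly one line slot. This is a hypothetical sketch (`split_address` and `DirectMappedCache` are illustrative names) that tracks tags only, not data; the last access in the example is a conflict miss, where two addresses compete for the same slot.

```python
def split_address(addr: int, line_size: int, num_lines: int) -> tuple[int, int, int]:
    """Split a byte address into (tag, index, offset) for a direct-mapped cache."""
    offset = addr % line_size                # byte position within the cache line
    index = (addr // line_size) % num_lines  # the single slot this address can use
    tag = addr // (line_size * num_lines)    # identifies which memory block is stored
    return tag, index, offset


class DirectMappedCache:
    def __init__(self, num_lines: int, line_size: int) -> None:
        self.num_lines = num_lines
        self.line_size = line_size
        self.tags = [None] * num_lines  # None marks an empty (invalid) slot

    def lookup(self, addr: int) -> str:
        tag, index, _ = split_address(addr, self.line_size, self.num_lines)
        if self.tags[index] == tag:
            return "hit"        # value is already on-chip
        self.tags[index] = tag  # miss: fetch the line from off-chip memory
        return "miss"


cache = DirectMappedCache(num_lines=4, line_size=16)
print([cache.lookup(a) for a in (0, 4, 64, 0)])  # ['miss', 'hit', 'miss', 'miss']
```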
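The scratch-pad partitioning problem can be approximated as a 0/1 knapsack: each variable has a size and a profiled access count, and placing it in SRAM saves (DRAM latency − SRAM latency) cycles per access. This is a simplified stand-in, not the cited technique: it assumes fixed latencies, ignores cache effects, and treats execution time as total memory-access cycles. `Variable`, `partition`, and the example numbers are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Variable:
    name: str
    size: int      # bytes
    accesses: int  # profiled access count (assumed known)


def partition(variables: list[Variable], spm_size: int,
              dram_latency: int, spm_latency: int) -> tuple[set[str], int]:
    """Choose variables for the scratch pad to minimize total access cycles."""
    saving_per_access = dram_latency - spm_latency
    # best[cap] = (max cycles saved within capacity cap, names placed in SRAM)
    best = [(0, frozenset())] * (spm_size + 1)
    for v in variables:
        gain = v.accesses * saving_per_access
        # Iterate capacity downwards so each variable is placed at most once.
        for cap in range(spm_size, v.size - 1, -1):
            candidate = best[cap - v.size][0] + gain
            if candidate > best[cap][0]:
                best[cap] = (candidate, best[cap - v.size][1] | {v.name})
    saved, chosen = best[spm_size]
    all_in_dram = sum(v.accesses for v in variables) * dram_latency
    return set(chosen), all_in_dram - saved


vars_ = [Variable("a", 512, 1000), Variable("b", 512, 800), Variable("c", 1024, 1500)]
print(partition(vars_, spm_size=1024, dram_latency=10, spm_latency=1))
```

Here placing `a` and `b` (1,800 accesses together) beats placing the single larger `c` (1,500 accesses), even though `c` is the most frequently accessed variable.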


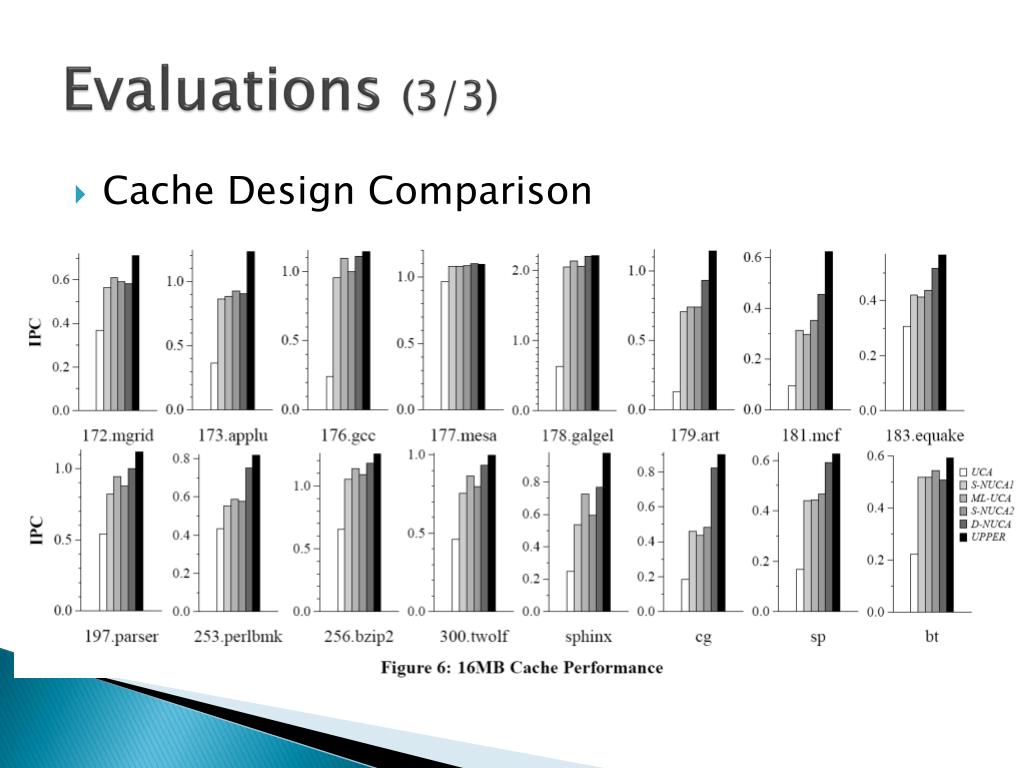

PPT: An Adaptive Non-Uniform Cache Structure for Wire-Delay Dominated
