Improving LLM Code Generation With Grammar Augmentation

SynCode is a novel framework for grammar-guided generation with large language models (LLMs). It addresses the challenge of getting LLMs to adhere to a specified syntax by providing efficient and general syntactic decoding that scales to general-purpose programming languages. SynCode guarantees soundness and completeness with respect to the context-free grammar (CFG) of a formal language: it retains syntactically valid tokens and filters out invalid ones.

The submission presents SynCode as a technically sound and empirically validated framework for improving syntactic correctness in LLM generation via grammar-constrained decoding. Very recently, researchers have proposed techniques for grammar-guided generation that improve the syntactic accuracy of LLMs by modifying the decoding algorithm. SynCode follows this line of work: it leverages the grammar of a programming language through an efficient lookup table, the DFA mask store, which is constructed offline from the terminals of the language grammar. The work demonstrates a substantial improvement in the syntactic precision of LLM generation.
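The idea of an offline lookup table that filters tokens at decoding time can be illustrated with a toy sketch. This is not SynCode's implementation: the single-terminal DFA (a signed integer), the seven-token vocabulary, and the names `step`, `run`, `build_mask_store`, and `decode` are all assumptions made for illustration, and the real DFA mask store handles full grammars rather than one terminal.

```python
from typing import Dict, List, Optional

# Hypothetical DFA for a single terminal: a signed integer such as "-42".
# States: 0 = start, 1 = saw "-", 2 = saw at least one digit (accepting).
STATES = (0, 1, 2)
ACCEPTING = {2}
DIGITS = "0123456789"

def step(state: int, ch: str) -> Optional[int]:
    """Return the next DFA state, or None if the character is rejected."""
    if ch == "-" and state == 0:
        return 1
    if ch in DIGITS:
        return 2
    return None

def run(state: Optional[int], text: str) -> Optional[int]:
    """Feed a whole string through the DFA starting from `state`."""
    for ch in text:
        if state is None:
            return None
        state = step(state, ch)
    return state

# Toy vocabulary; real LLM tokens also span several characters.
VOCAB = ["-", "1", "42", "-7", "x", "4-", "00"]

def build_mask_store(vocab: List[str]) -> Dict[int, List[bool]]:
    """Offline step: for each DFA state, mark the tokens that keep the DFA alive."""
    return {s: [run(s, tok) is not None for tok in vocab] for s in STATES}

MASK_STORE = build_mask_store(VOCAB)

def decode(logits_per_step: List[List[float]]) -> str:
    """Greedy decoding that only considers tokens allowed by the mask store."""
    state, out = 0, ""
    for logits in logits_per_step:
        allowed = [i for i, ok in enumerate(MASK_STORE[state]) if ok]
        best = max(allowed, key=lambda i: logits[i])
        out += VOCAB[best]
        state = run(state, VOCAB[best])
    return out

# Made-up logits: the unconstrained argmax would pick "x" at both steps.
fake_logits = [
    [0.1, 0.2, 0.3, 0.9, 2.0, 1.5, 0.0],
    [3.0, 0.1, 0.5, 2.0, 4.0, 1.0, 0.2],
]
result = decode(fake_logits)
print(result, run(0, result) in ACCEPTING)
```

Because the mask for each state is computed once, the per-step cost at decoding time is a table lookup plus a masked argmax, which is the property the offline construction is meant to provide.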

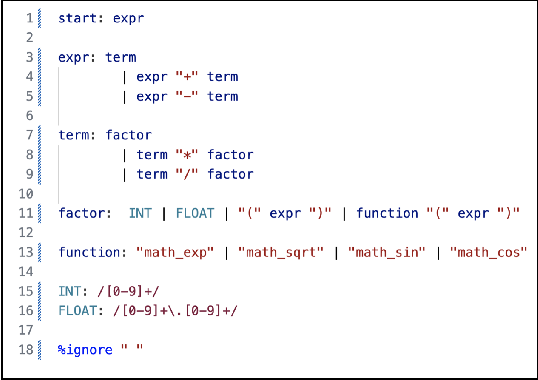

Figure 3 from "SynCode: LLM Generation with Grammar Augmentation"

Experiments on JSON generation show that SynCode eliminates all syntax errors and significantly outperforms state-of-the-art baselines. The goal is to make grammar-guided generation precise and efficient by imposing formal grammar constraints on LLM generations, so that the output adheres strictly to the predefined syntax. The core idea is to give the LLM additional information about the structure of code, in the form of a context-free grammar (CFG), during decoding. By integrating the programming-language grammar, SynCode cuts syntax errors by 96% in Python and Go tests.
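A syntax-error rate of the kind reported above can be measured with standard-library parsers. The following is a minimal sketch, not the paper's evaluation harness: the helper names and the sample outputs are hypothetical, and only JSON and Python are covered because their parsers ship with Python.

```python
import ast
import json
from typing import Callable, List

def is_valid_json(text: str) -> bool:
    """True if the text parses as JSON."""
    try:
        json.loads(text)
    except ValueError:  # json.JSONDecodeError is a subclass of ValueError
        return False
    return True

def is_valid_python(source: str) -> bool:
    """True if the source parses as Python (syntax only, nothing is executed)."""
    try:
        ast.parse(source)
    except (SyntaxError, ValueError):
        return False
    return True

def syntax_error_rate(outputs: List[str], is_valid: Callable[[str], bool]) -> float:
    """Fraction of outputs that fail to parse."""
    if not outputs:
        return 0.0
    failures = sum(1 for out in outputs if not is_valid(out))
    return failures / len(outputs)

# Hypothetical model outputs, two valid and two invalid per language.
json_outputs = ['{"a": 1}', '{"a": 1', '[1, 2,]', 'null']
python_outputs = ["x = 1", "def f(:\n    pass", "print('hi')", "return ="]

json_rate = syntax_error_rate(json_outputs, is_valid_json)
python_rate = syntax_error_rate(python_outputs, is_valid_python)
print(json_rate, python_rate)
```

Comparing this rate for constrained and unconstrained generations on the same prompts gives a relative reduction figure comparable in spirit to the one quoted above.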

