Implementing Text Summarisation Using Language Models With Hallucination Detection

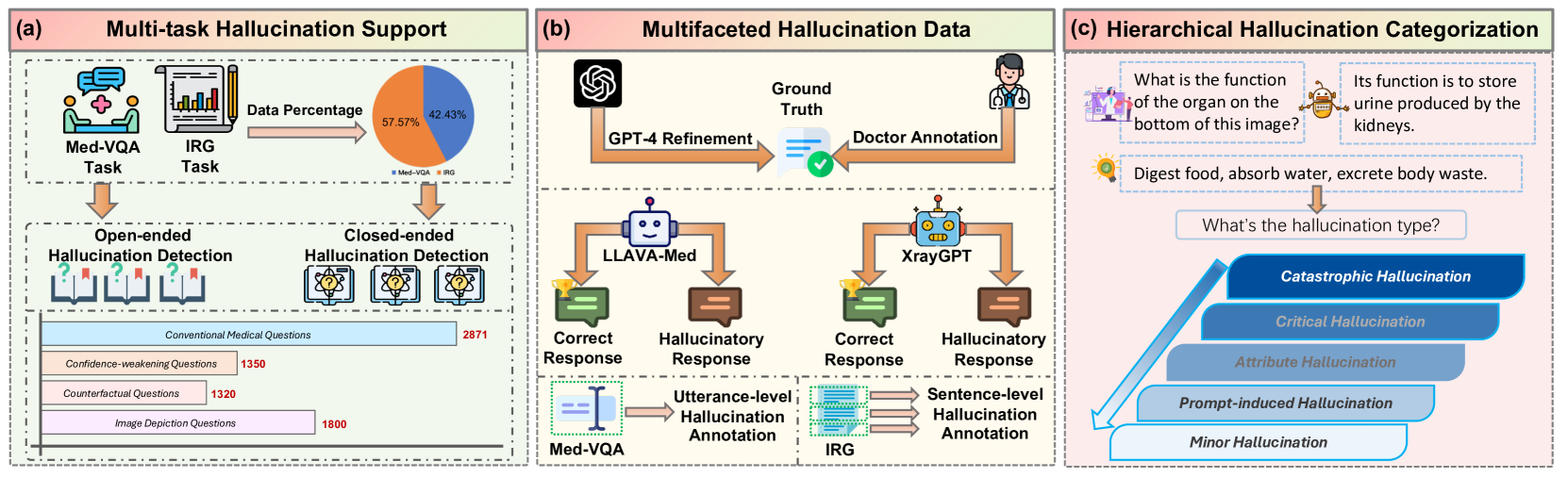

Unified Hallucination Detection For Multimodal Large Language Models

Recent advancements in large language models (LLMs) have significantly propelled the field of automatic text summarization. Nevertheless, the domain continues to face substantial challenges, most notably hallucination. The proposed framework establishes quantitative measures for hallucination detection and uses the iterative optimization capabilities of LLMs to improve the factual consistency of summaries and to make them more interpretable and reliable.
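The iterative detect-then-revise idea in this paragraph can be sketched as a simple loop. This is a minimal illustration under stated assumptions, not the framework's actual procedure. The `detect` and `revise` callables are hypothetical stand-ins. In a real system, `detect` would be a hallucination detector and `revise` an LLM prompted to rewrite the flagged spans. Here both are toy functions that flag and drop summary words absent from the source, included only so the loop runs.

```python
from typing import Callable, List

# Hypothetical interfaces (assumptions, not a real library API):
#   detect(source, summary) -> list of flagged spans
#   revise(source, summary, flagged) -> revised summary
Detector = Callable[[str, str], List[str]]
Reviser = Callable[[str, str, List[str]], str]


def refine_summary(source: str, summary: str, detect: Detector,
                   revise: Reviser, max_rounds: int = 3) -> str:
    """Alternate detection and revision until nothing is flagged or rounds run out."""
    for _ in range(max_rounds):
        flagged = detect(source, summary)
        if not flagged:
            break  # no unsupported content detected; stop early
        summary = revise(source, summary, flagged)
    return summary


def toy_detect(source: str, summary: str) -> List[str]:
    """Toy detector: flag summary words that never occur in the source."""
    source_words = set(source.lower().split())
    return [w for w in summary.lower().split() if w not in source_words]


def toy_revise(source: str, summary: str, flagged: List[str]) -> str:
    """Toy reviser: drop flagged words (a real system would ask an LLM to rewrite)."""
    bad = set(flagged)
    return " ".join(w for w in summary.lower().split() if w not in bad)


if __name__ == "__main__":
    src = "the cat sat on the mat"
    print(refine_summary(src, "The cat sat on the sofa", toy_detect, toy_revise))
    # prints: the cat sat on the
```

The `max_rounds` cap keeps the loop bounded. A reviser can introduce new unsupported content, so an uncapped loop is not guaranteed to terminate.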

Fine-Grained Hallucination Detection And Editing For Language Models

This paper introduces a hallucination detection and mitigation framework that uses a question-answer generation, sorting, and evaluation (Q-S-E) methodology to detect hallucinations in summaries quantitatively. To address the broader challenge of hallucination, another paper introduces a structured, operational framework for the systematic management of hallucinations in LLMs and large reasoning models (LRMs). That framework is built on a continuous improvement cycle centered on two core activities: detection and mitigation. A further study systematically evaluates entity hallucination across state-of-the-art summarization models and addresses a gap in existing evaluation metrics by proposing a new solution. Huang et al. proposed CL2Sum, a method that integrates contextual learning with in-context learning (ICL) to mitigate hallucination in text summarization with LLMs.
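The Q-S-E methodology can be pictured as a QA-based consistency check. Question-answer pairs are generated from the summary and then sorted. Each question is re-answered against the source, and any disagreement counts as a potential hallucination. The sketch below is an illustration under stated assumptions, not the paper's method. The following are all hypothetical:

- the `importance` field used for sorting,
- the exact-match comparison,
- the `answer_from_source` callable, which stands in for a QA model run over the source document.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class QAPair:
    question: str
    summary_answer: str  # answer implied by the summary
    importance: float    # hypothetical priority used in the sorting step


def normalize(text: str) -> str:
    """Lowercase, trim a trailing period, and collapse whitespace for exact matching."""
    return " ".join(text.lower().strip().rstrip(".").split())


def qa_hallucination_rate(pairs: List[QAPair],
                          answer_from_source: Callable[[str], str],
                          top_k: Optional[int] = None) -> float:
    """Fraction of evaluated questions whose source-based answer disagrees with the summary."""
    # Sort: most important questions first, optionally keeping only the top k.
    ranked = sorted(pairs, key=lambda p: p.importance, reverse=True)
    if top_k is not None:
        ranked = ranked[:top_k]
    if not ranked:
        return 0.0
    # Evaluate: re-answer each question from the source and compare answers.
    mismatches = sum(
        normalize(answer_from_source(p.question)) != normalize(p.summary_answer)
        for p in ranked
    )
    return mismatches / len(ranked)


if __name__ == "__main__":
    pairs = [
        QAPair("Who founded the company?", "Alice", 0.9),
        QAPair("When was it founded?", "1999", 0.7),
        QAPair("Where is it based?", "Paris", 0.4),
    ]
    # Hypothetical stand-in for a QA model answering over the source document.
    source_answers = {
        "Who founded the company?": "Alice",
        "When was it founded?": "2001",
        "Where is it based?": "Paris.",
    }
    print(qa_hallucination_rate(pairs, source_answers.__getitem__))           # one of three disagrees
    print(qa_hallucination_rate(pairs, source_answers.__getitem__, top_k=2))  # 0.5
```

Exact match is the simplest comparison. A practical system would more likely compare answers with token-level F1 or a learned equivalence model.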

Evaluation on the LLM-AggreFact benchmark demonstrates HalluTree's effectiveness. It achieves performance competitive with top-tier black-box models, including Bespoke-MiniCheck, while providing transparent and auditable reasoning programs for every inferential judgment. RACE demonstrates that effective hallucination detection for modern reasoning models must evaluate both what the model answers and how it reasons. It also pioneers black-box hallucination detection for LRMs.
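LLM-AggreFact results are usually reported as balanced accuracy. This metric averages the recall on supported and on unsupported claims, so a detector cannot score well simply by predicting the majority label. A small sketch of the metric follows. The label convention (1 = supported, 0 = hallucinated) is an assumption made for this example.

```python
from typing import Sequence


def balanced_accuracy(y_true: Sequence[int], y_pred: Sequence[int]) -> float:
    """Mean of the true-positive rate and the true-negative rate for 0/1 labels.

    Convention assumed here: 1 = claim supported by the source, 0 = hallucinated.
    """
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    pos = sum(1 for t in y_true if t == 1)
    neg = len(y_true) - pos
    if pos == 0 or neg == 0:
        raise ValueError("balanced accuracy needs both classes in y_true")
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    return (tp / pos + tn / neg) / 2


if __name__ == "__main__":
    labels = [1, 1, 1, 0]            # three supported claims, one hallucinated
    always_supported = [1, 1, 1, 1]  # trivial detector that never flags anything
    print(balanced_accuracy(labels, always_supported))  # 0.5, although plain accuracy is 0.75
    print(balanced_accuracy(labels, [1, 1, 0, 0]))      # about 0.83
```

The trivial detector illustrates the design choice. It reaches 75% plain accuracy on this imbalanced toy set, yet it scores only 0.5 balanced accuracy because it never catches the hallucinated claim.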


Reference-Free Hallucination Detection For Large Vision-Language Models
