Implementing Model Pruning Techniques For Android App Optimization

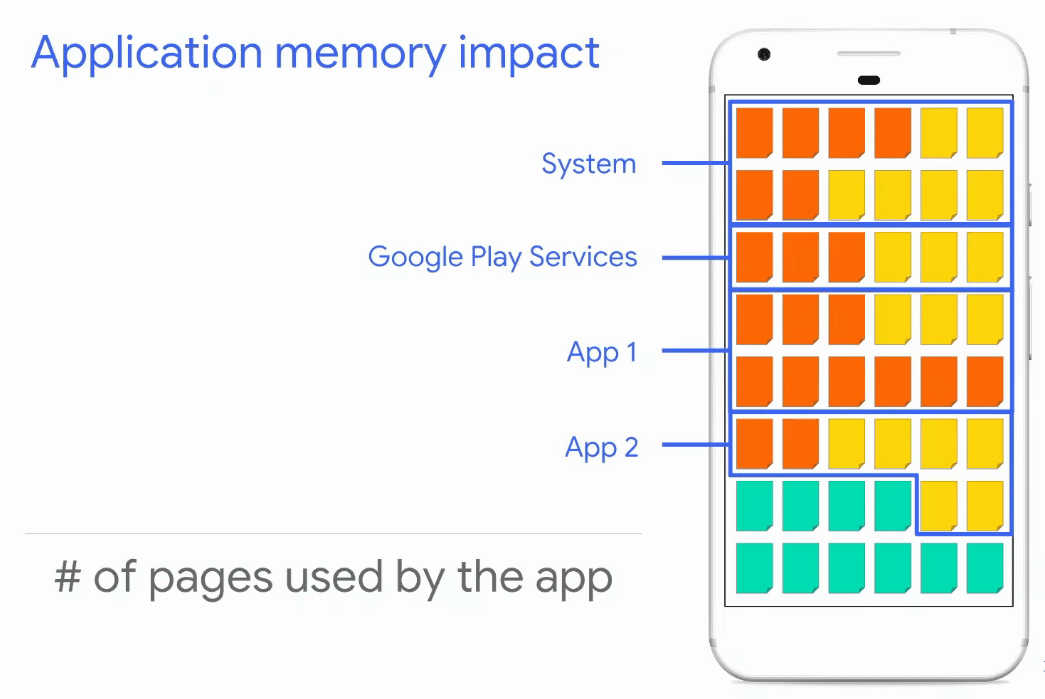

This article walks through implementing model pruning for Android app optimization, so that your applications run smoothly while keeping their functionality. Pruning can reduce LLM size by up to 90% while retaining 95% accuracy, making mobile deployment feasible without cloud dependency. Implementation requires balancing sparsity against fine-tuning complexity; unstructured pruning is simple but needs sparse kernels to deliver a speedup.
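To make the idea of unstructured pruning concrete, here is a minimal sketch in plain Python, assuming magnitude-based pruning of a small rectangular weight matrix stored as nested lists. The function names `magnitude_prune` and `sparsity_of` are illustrative, not part of any library, and a real pipeline would normally fine-tune the model after pruning to recover accuracy.

```python
def magnitude_prune(weights, sparsity):
    """Zero out the smallest-magnitude fraction of weights (unstructured pruning).

    `weights` is a rectangular list of lists; `sparsity` is the fraction
    of individual weights to set to zero, between 0 and 1.
    """
    if not 0.0 <= sparsity <= 1.0:
        raise ValueError("sparsity must be between 0 and 1")
    flat = [w for row in weights for w in row]
    k = int(len(flat) * sparsity)  # number of weights to remove
    # Positions of the k weights with the smallest absolute value.
    order = sorted(range(len(flat)), key=lambda i: abs(flat[i]))
    drop = set(order[:k])
    cols = len(weights[0])
    return [
        [0.0 if r * cols + c in drop else w for c, w in enumerate(row)]
        for r, row in enumerate(weights)
    ]


def sparsity_of(weights):
    """Return the fraction of weights that are exactly zero."""
    flat = [w for row in weights for w in row]
    return sum(1 for w in flat if w == 0.0) / len(flat)


# Hypothetical example weights, chosen only for illustration.
dense = [[0.9, -0.05, 0.3], [0.01, -0.7, 0.2]]
pruned = magnitude_prune(dense, 0.5)
```

The pruned matrix keeps its original shape, so it only runs faster if the runtime has kernels that skip the zeros; otherwise the main benefit is that the zeros compress well.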

Consider model optimization throughout your application development process, following best practices for optimizing TensorFlow models for deployment to edge hardware. Following the instructions for the TFLite model benchmarking tool, we build the tool, upload it to the Android device together with the dense and pruned TFLite models, and benchmark both models there.

Whether it is weight pruning, structured pruning, or dynamic pruning, each method offers distinct advantages and challenges. Pruning can yield faster, smaller, and more power-efficient models, but it must be done carefully to keep the loss in accuracy minimal.

The TensorFlow Model Optimization Toolkit is a suite of tools for optimizing ML models for deployment and execution. Among many uses, the toolkit supports techniques that reduce latency and inference cost for cloud and edge devices (e.g. mobile, IoT).
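For contrast with weight pruning, the following is a rough sketch of structured pruning in plain Python. It assumes that each row of the weight matrix corresponds to one output unit and that rows are ranked by L2 norm. Both are illustrative choices, and `prune_rows` is a hypothetical helper rather than a TensorFlow Model Optimization Toolkit API.

```python
import math


def prune_rows(weights, keep_ratio):
    """Structured pruning sketch: drop whole rows with the smallest L2 norm.

    Returns the smaller dense matrix and the indices of the rows kept,
    so that the following layer can be sliced to match.
    """
    norms = [math.sqrt(sum(w * w for w in row)) for row in weights]
    n_keep = max(1, int(len(weights) * keep_ratio))  # always keep one row
    ranked = sorted(range(len(weights)), key=lambda i: norms[i], reverse=True)
    keep = sorted(ranked[:n_keep])  # preserve the original row order
    return [list(weights[i]) for i in keep], keep


# Hypothetical 4x2 layer, chosen only for illustration.
layer = [[0.5, 0.5], [0.01, 0.02], [1.0, -1.0], [0.0, 0.1]]
smaller, kept = prune_rows(layer, 0.5)
```

Because entire rows are removed, the result is a smaller dense matrix that ordinary kernels can run directly. The trade-off is that removing whole units is a coarser cut than removing individual weights.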

Quantization is the single most impactful optimization you can apply when you run LLMs on mobile devices. It reduces the memory footprint and computational load of your model by representing weights with fewer bits, typically going from 32-bit floating point down to 8-bit or even 4-bit.

Beyond pruning and quantization, techniques for improving generative AI model performance include model compilation, speculative decoding, and artifact storage. Quantization in particular can significantly reduce model size and improve computational speed, which makes quantized models well suited to deployment on edge devices.
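As a small illustration of representing weights with fewer bits, here is a sketch of symmetric 8-bit quantization in plain Python. It assumes a single scale per tensor, which is one common scheme among several. The names `quantize_int8` and `dequantize` are illustrative, and production toolchains apply their own calibrated schemes.

```python
def quantize_int8(values):
    """Map floats to integers in [-127, 127] using one shared scale."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0  # avoid dividing by zero
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the 8-bit integers."""
    return [q * scale for q in quantized]


# Hypothetical weights, chosen only for illustration.
weights = [0.5, -1.0, 0.25, 0.0]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
```

Each weight then occupies 8 bits instead of 32, a 4x reduction in storage, and the rounding error per weight is bounded by about half the scale.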
