Image Classification (Computer Vision) with Hugging Face Transformers and Google ViT: Python ML Tutorial

Google ViT Base Image Classification: a Hugging Face Space by Mksaad

The Vision Transformer (ViT) is a transformer adapted for computer vision tasks. An image is split into smaller fixed-size patches, which are treated as a sequence of tokens, much like words in NLP tasks. In this tutorial, you will learn how to fine-tune the state-of-the-art Vision Transformer (ViT) on your custom image classification dataset using the Hugging Face Transformers library in Python.
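To make the patch idea concrete, here is a minimal pure-Python sketch of cutting an image into non-overlapping patches. It assumes a 224x224 input and 16x16 patches, which is the configuration suggested by the checkpoint name `google/vit-base-patch16-224` (an assumption; the text above only says "Google ViT Base"). The function names are illustrative and not part of any library, and the real model additionally projects each patch to an embedding and adds a class token and position embeddings, which this sketch leaves out.

```python
def split_into_patches(image, patch_size):
    """Split an H x W image (a list of rows) into non-overlapping square patches.

    Patches are returned in row-major order, each flattened into one list of pixels.
    """
    height, width = len(image), len(image[0])
    if height % patch_size or width % patch_size:
        raise ValueError("image dimensions must be divisible by patch_size")
    patches = []
    for top in range(0, height, patch_size):
        for left in range(0, width, patch_size):
            patch = [
                image[row][col]
                for row in range(top, top + patch_size)
                for col in range(left, left + patch_size)
            ]
            patches.append(patch)
    return patches


def num_patches(image_size, patch_size):
    """Number of patches (tokens before the class token) for a square image."""
    return (image_size // patch_size) ** 2


# Toy example: a 4x4 "image" whose pixel values are 0..15, cut into 2x2 patches.
toy_image = [[r * 4 + c for c in range(4)] for r in range(4)]
toy_patches = split_into_patches(toy_image, 2)
print(toy_patches[0])        # top-left patch
print(num_patches(224, 16))  # sequence length for the assumed 224/16 setup
```

Under the assumed 224/16 configuration the image becomes a sequence of 196 patch tokens, which is what the transformer layers then process much like a sentence of words.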

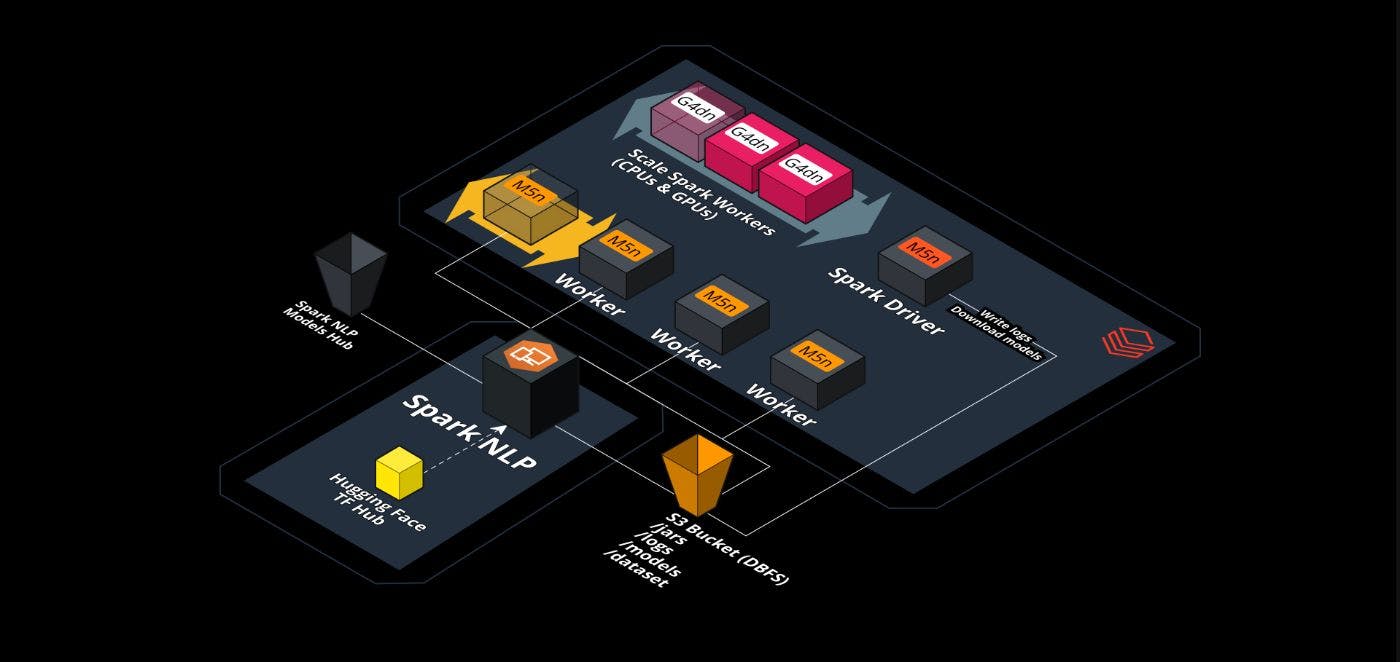

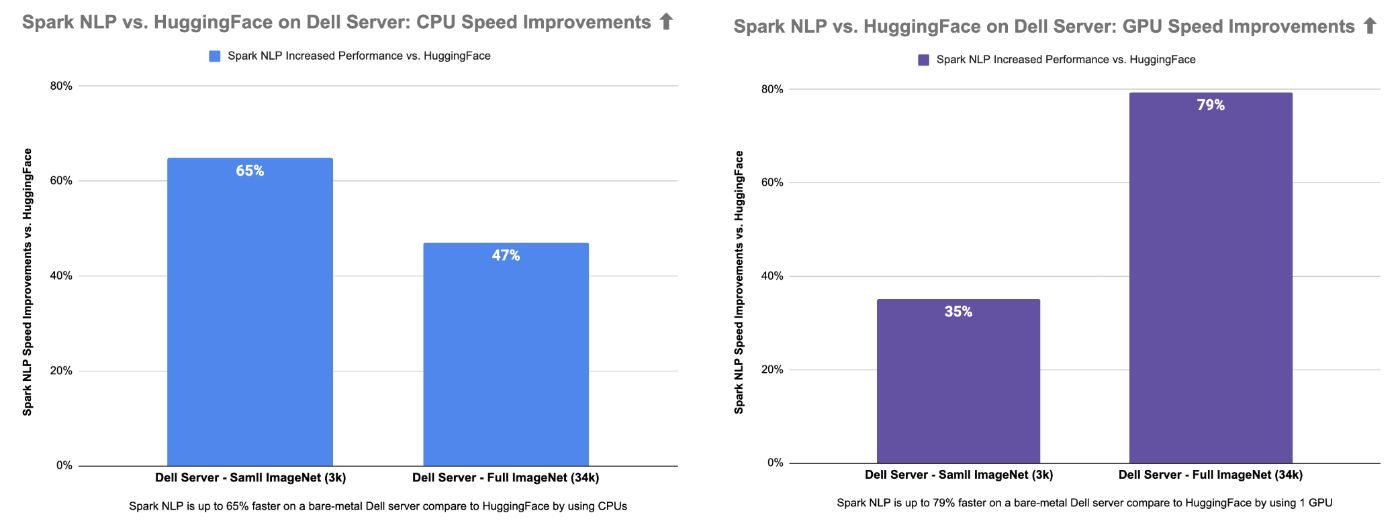

Scale Vision Transformers (ViT) Beyond Hugging Face (HackerNoon)

In this notebook, we'll walk through how to leverage 🤗 Datasets to download and process image classification datasets, and then use them to fine-tune a pre-trained ViT with 🤗 Transformers.

In this blog post we walked through a real Python script for Vision Transformer image classification using Hugging Face. We saw how to load an image, transform it properly, and feed it to the model.

Learn how Vision Transformers (ViTs) leverage patch embeddings and self-attention to compete with, and often beat, CNNs in modern image classification. This in-depth tutorial breaks down the ViT architecture, provides step-by-step Python code, and shows you when to choose ViTs for real-world computer vision projects.

Learn image classification using Hugging Face Transformers models such as ViT, Swin, ConvNeXt, and CLIP for efficient vision tasks with Python.
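The load, transform, and predict steps described above can be sketched with the `pipeline` helper from 🤗 Transformers, which bundles image preprocessing and the model call. This is a minimal sketch, not the script from any of the posts quoted here: the checkpoint `google/vit-base-patch16-224` and the file name `cat.jpg` are assumptions, the helper names are illustrative, and running `classify_image` requires `transformers`, `torch`, and `Pillow` plus a model download.

```python
def format_predictions(predictions, top_k=3):
    """Turn pipeline output (a list of {'label', 'score'} dicts) into readable lines."""
    ranked = sorted(predictions, key=lambda p: p["score"], reverse=True)[:top_k]
    return [f"{p['label']}: {p['score']:.1%}" for p in ranked]


def classify_image(image_path, model_name="google/vit-base-patch16-224"):
    """Classify one image with a pre-trained ViT checkpoint (assumed model name)."""
    # Imported lazily so the formatting helper works without the heavy dependencies.
    from transformers import pipeline

    classifier = pipeline("image-classification", model=model_name)
    # The pipeline resizes and normalizes the image, runs the model,
    # and returns a list of {'label': ..., 'score': ...} dicts.
    return classifier(image_path)


if __name__ == "__main__":
    # "cat.jpg" is a hypothetical local file; replace it with your own image.
    for line in format_predictions(classify_image("cat.jpg")):
        print(line)
```

Using the pipeline keeps the preprocessing consistent with what the checkpoint was trained on, which is the part that is easiest to get wrong when transforming images by hand.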

Image Classification With ViT: a Hugging Face Space by Mstftmk

This directory contains several notebooks that illustrate how to use Google's ViT for both fine-tuning on custom data and inference. There is also the official Hugging Face image classification notebook.

For this tutorial, we will use a model from the Hugging Face Model Hub. The Hub contains thousands of models covering dozens of different machine learning tasks. Expand the Tasks category on the left sidebar and select "Image Classification" as our task of interest.

Learn how to *train Vision Transformers (ViTs) on your custom dataset* using *Google Colab* in this step-by-step tutorial! 🚀 This video walks you through the entire process.

Specifically, we're going to talk about how a ViT model works and how we can fine-tune it on our own custom dataset with the help of the Hugging Face library for an image classification task. So, as the first step, let's get started with the dataset that we're going to use in this article.
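As a rough illustration of the first fine-tuning step, the sketch below builds the label mappings for a custom dataset and loads a pre-trained ViT with a classification head sized for those labels. The checkpoint `google/vit-base-patch16-224-in21k` and the class names are assumptions chosen for illustration, the helper names are not library functions, and this only prepares the model; the training loop and dataset handling are out of scope here.

```python
def build_label_maps(class_names):
    """Create the id-to-label and label-to-id dictionaries the model config expects."""
    id2label = {i: name for i, name in enumerate(class_names)}
    label2id = {name: i for i, name in id2label.items()}
    return id2label, label2id


def load_vit_for_finetuning(class_names, checkpoint="google/vit-base-patch16-224-in21k"):
    """Load a pre-trained ViT with a fresh classification head (assumed checkpoint)."""
    # Imported lazily so the label helper can be used without transformers installed.
    from transformers import ViTForImageClassification

    id2label, label2id = build_label_maps(class_names)
    return ViTForImageClassification.from_pretrained(
        checkpoint,
        num_labels=len(class_names),
        id2label=id2label,
        label2id=label2id,
        # Lets loading succeed if the checkpoint already has a head of another size.
        ignore_mismatched_sizes=True,
    )


# Hypothetical class names for a two-class custom dataset.
id2label, label2id = build_label_maps(["cat", "dog"])
print(id2label, label2id)
```

Passing the label maps at load time means predictions from the fine-tuned model come back with readable class names instead of bare indices.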
