Illustrating Reinforcement Learning From Human Feedback (RLHF)

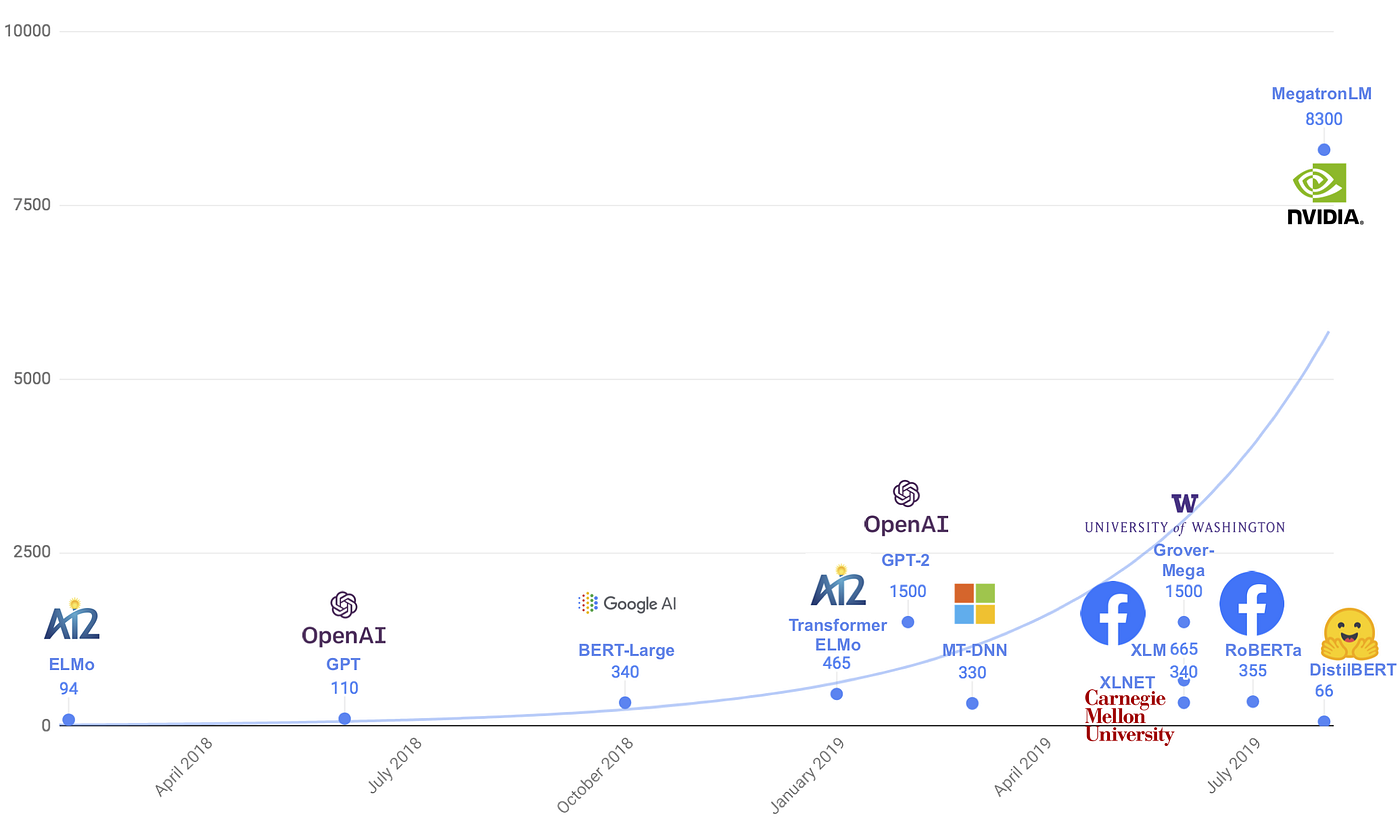

The idea of reinforcement learning from human feedback (RLHF) is to use methods from reinforcement learning to directly optimize a language model with human feedback. RLHF has made it possible to begin aligning a model trained on a general corpus of text data with complex human values. The core of the book details every optimization stage in using RLHF, from instruction tuning to training a reward model and, finally, rejection sampling, reinforcement learning, and direct alignment algorithms.
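The rejection sampling stage named above is often illustrated as best-of-n selection: draw several completions for a prompt, score each one, and keep the highest-scoring one. The sketch below is a minimal illustration under that assumption, not the method of any particular book or library. The names `best_of_n`, `toy_generate`, and `toy_reward` are hypothetical, and the toy functions only stand in for a real language model and a trained reward model.

```python
import random
from typing import Callable, List


def best_of_n(
    prompt: str,
    generate: Callable[[str], str],
    reward: Callable[[str, str], float],
    n: int = 4,
) -> str:
    """Sample n completions and return the one the reward function scores highest."""
    candidates: List[str] = [generate(prompt) for _ in range(n)]
    return max(candidates, key=lambda completion: reward(prompt, completion))


# Hypothetical stand-in for sampling from a language model.
def toy_generate(prompt: str) -> str:
    return random.choice(["ok", "no", "sure, here is a detailed answer"])


# Hypothetical stand-in for a reward model: longer completions score higher.
def toy_reward(prompt: str, completion: str) -> float:
    return float(len(completion))


if __name__ == "__main__":
    print(best_of_n("Explain RLHF briefly.", toy_generate, toy_reward, n=8))
```

In practice the selected completions would typically be used as fine-tuning targets; that step is outside the scope of this sketch.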

Reinforcement learning from human feedback (RLHF) trains models to match human values and expectations. This guide shows you how to implement RLHF from scratch, covering reward model creation, preference data collection, and policy optimization. In machine learning, RLHF is a technique to align an intelligent agent with human preferences. It involves training a reward model to represent preferences, which can then be used to train other models through reinforcement learning. It is a training approach used to align machine learning models, especially large language models, with human preferences and values.
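To make "training a reward model to represent preferences" concrete, a common formulation (assumed here, since the text does not name one) is the pairwise Bradley-Terry loss: for each pair of responses where annotators preferred one over the other, the loss is -log(sigmoid(r_chosen - r_rejected)). The sketch below computes that loss on plain floats; the function names are hypothetical, and a real reward model would produce the scores with a neural network and minimize this loss by gradient descent.

```python
import math
from typing import List, Tuple


def softplus(x: float) -> float:
    """Numerically stable log(1 + exp(x))."""
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


def pairwise_preference_loss(pairs: List[Tuple[float, float]]) -> float:
    """Mean of -log(sigmoid(r_chosen - r_rejected)) over (chosen, rejected) score pairs."""
    total = 0.0
    for r_chosen, r_rejected in pairs:
        margin = r_chosen - r_rejected
        # -log(sigmoid(m)) equals log(1 + exp(-m)), i.e. softplus(-m).
        total += softplus(-margin)
    return total / len(pairs)


if __name__ == "__main__":
    # Scores that agree with the human preference give a lower loss ...
    print(pairwise_preference_loss([(2.0, 0.0)]))
    # ... than scores that contradict it.
    print(pairwise_preference_loss([(0.0, 2.0)]))
```

The loss depends only on the difference between the two scores, so the reward model learns a relative ranking rather than an absolute scale.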

The methodologies for implementing LLMs with human feedback, such as advanced reward design and iterative model refinement, are explained, with a number of use cases. A technical guide to RLHF: this article covers its core concepts, training pipeline, key alignment algorithms, and 2025-2026 developments including direct preference optimization (DPO), group relative policy optimization (GRPO), and reinforcement learning from AI feedback (RLAIF). RLHF has become an important technical and storytelling tool to deploy the latest machine learning systems. In this book, we hope to give a gentle introduction to the core methods for people with some level of quantitative background. An in-depth guide to fine-tuning large language models with RLHF covers new RLHF algorithms (DPO, RLAIF), open datasets, tools like ...
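Of the algorithms listed above, DPO is the simplest to write down, because it skips the separate reward model and optimizes the policy directly on preference pairs. The sketch below computes the standard per-pair DPO loss from sequence log-probabilities under the policy and under a frozen reference model. The function and argument names are hypothetical, the default `beta=0.1` is only an illustrative value, and the log-probabilities are assumed to be supplied by the caller.

```python
import math


def softplus(x: float) -> float:
    """Numerically stable log(1 + exp(x))."""
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


def dpo_loss(
    policy_chosen_logp: float,
    policy_rejected_logp: float,
    ref_chosen_logp: float,
    ref_rejected_logp: float,
    beta: float = 0.1,
) -> float:
    """Per-pair DPO loss: -log(sigmoid(beta * (chosen log-ratio - rejected log-ratio)))."""
    # How much more likely each response is under the policy than under the reference.
    chosen_logratio = policy_chosen_logp - ref_chosen_logp
    rejected_logratio = policy_rejected_logp - ref_rejected_logp
    logits = beta * (chosen_logratio - rejected_logratio)
    # -log(sigmoid(z)) equals softplus(-z).
    return softplus(-logits)


if __name__ == "__main__":
    # Policy identical to the reference: the loss equals log(2), about 0.693.
    print(dpo_loss(-5.0, -7.0, -5.0, -7.0))
    # Policy raises the chosen response's log-probability: the loss decreases.
    print(dpo_loss(-4.0, -7.0, -5.0, -7.0))
```

The `beta` parameter controls how strongly the policy is kept close to the reference model: larger values penalize deviations from the reference more heavily.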

