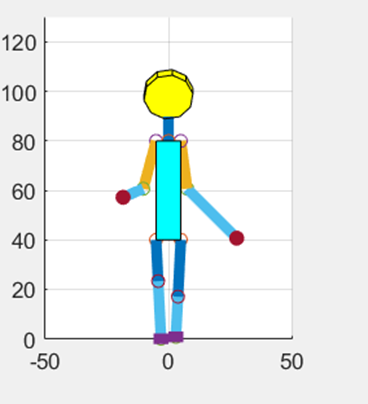

Humanoid Walking Simulation

Training a humanoid robot for locomotion using reinforcement learning (rohanpsingh learninghumanoidwalking). The goal of this example is to train a humanoid robot to walk, and various methods can be used to do so. The example shows two of them: a genetic algorithm and reinforcement learning.
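As a rough illustration of the genetic-algorithm route mentioned above, here is a minimal sketch in plain Python. It is not the code from the example: `toy_fitness`, `evolve`, and every parameter value are hypothetical, and the fitness function is a simple stand-in for the "distance walked" score that a real simulator rollout would return.

```python
import random


def toy_fitness(params):
    # Hypothetical stand-in for a simulator rollout score (e.g. distance walked).
    # It peaks at 0.0 when every controller parameter equals 0.5.
    return -sum((p - 0.5) ** 2 for p in params)


def evolve(fitness, n_params=8, pop_size=30, generations=40,
           elite=5, sigma=0.1, seed=0):
    """Elitist genetic algorithm over real-valued controller parameters."""
    rng = random.Random(seed)
    population = [[rng.uniform(-1.0, 1.0) for _ in range(n_params)]
                  for _ in range(pop_size)]
    history = []  # best fitness seen at the start of each generation
    for _ in range(generations):
        population.sort(key=fitness, reverse=True)
        history.append(fitness(population[0]))
        parents = population[:elite]  # the elite survive unchanged
        children = []
        while len(children) < pop_size - elite:
            a, b = rng.sample(parents, 2)
            # Uniform crossover followed by Gaussian mutation on every gene.
            child = [rng.choice(pair) + rng.gauss(0.0, sigma)
                     for pair in zip(a, b)]
            children.append(child)
        population = parents + children
    population.sort(key=fitness, reverse=True)
    history.append(fitness(population[0]))
    return population[0], history


best, history = evolve(toy_fitness)
print("best fitness:", history[-1])
```

Because the elite individuals are carried over unchanged, the best fitness never decreases from one generation to the next. In a walking setup, `toy_fitness` would be replaced by a function that loads the parameters into a gait controller, runs one simulated episode, and returns its score.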

We have presented a natural walking controller learned purely in simulation using end-to-end reinforcement learning. This enables the fleet of Figure robots to quickly learn robust, proprioceptive locomotion strategies and enables rapid engineering iteration cycles.

Although classical controllers for humanoid robots have shown impressive results in a number of settings, they are challenging to generalize and adapt to new environments. Here, we present a fully learning-based approach for real-world humanoid locomotion.

In this paper, we explore the application of sim-to-real deep reinforcement learning (RL) for the design of bipedal locomotion controllers for humanoid robots on compliant and uneven terrains.

In this blog, we will go over the biomechanics of walking, the limitations of classical control approaches such as model predictive controllers, how reinforcement learning addresses them, and how we apply our techniques to train a Unitree G1 bipedal robot in Isaac Gym with sim2sim evaluation in MuJoCo.

In this study, we have integrated a reinforcement learning algorithm and a musculoskeletal model including trunk, pelvis, and leg segments to develop control modes that drive the model to walk. Simulation results show that the proposed framework significantly enhances the maximum walking capability of the humanoid robot, demonstrating its feasibility and effectiveness.

The difficult task of creating reliable mobility for humanoid robots has been studied for decades. Even though several different walking strategies have been put forth and walking performance has substantially increased, stability still falls short of expectations.

