Human Developer Vs AI Agent Actual Test

Try out Replit Agent 3: dub.sh/0v9qw89i. I competed against an AI agent to build the exact same app: a dev portfolio generator. Same features, same tech.

AI agents vs. human developers: who really wins? A few months ago, I watched an AI agent spin up a REST API, write tests, fix its own bugs, and deploy to a staging environment, all…

The human developer focused on the hardest integration first, while the AI agent focused on design and user flow, highlighting the AI's tendency toward generic design. A recent benchmark comparison by CodeSignal provides insights into this debate, comparing the performance of AI models against human engineers in various coding tasks. By showcasing how AI models compare to each other as well as to real engineering candidates, this report provides actionable data to help businesses design more effective, AI-empowered engineering teams. We'll compare the capabilities of modern AI coding agents like Cursor, Bolt, Replit, Lovable, GoCodeo, Cline, and Tabnine to the nuanced craft of human developers.

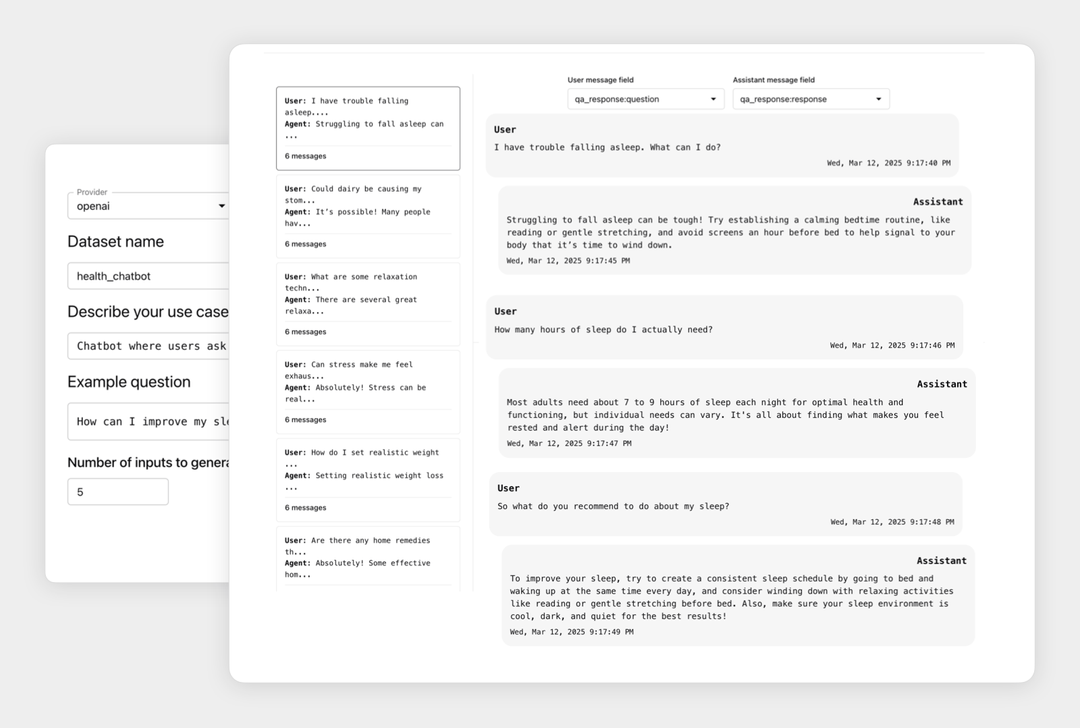

This report compares the characteristics of good code optimized for AI agents versus code optimized for human developers, focusing on design patterns, code readability, and performance optimizations.

An evaluation (“eval”) is a test for an AI system: give an AI an input, then apply grading logic to its output to measure success. In this post, we focus on automated evals that can be run during development without real users.

To find out, we designed 10 lab challenges modeled after real-world, high-value vulnerabilities. We tested Claude Sonnet 4.5, GPT-5, and Gemini 2.5 Pro on these challenges.
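The eval definition quoted above (give the system an input, then apply grading logic to its output) can be sketched in a few lines of Python. This is only an illustration under stated assumptions: `EvalCase`, `run_evals`, and `stub_system` are hypothetical names invented here, and `stub_system` is a hard-coded stand-in for a real AI system, not any vendor's API.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


@dataclass
class EvalCase:
    """One automated eval: an input plus grading logic for the output."""
    prompt: str
    grade: Callable[[str], bool]  # returns True when the output passes


def run_evals(system: Callable[[str], str], cases: Sequence[EvalCase]) -> float:
    """Run every case through the system and return the fraction that pass."""
    if not cases:
        raise ValueError("at least one eval case is required")
    passed = sum(1 for case in cases if case.grade(system(case.prompt)))
    return passed / len(cases)


def stub_system(prompt: str) -> str:
    # Hypothetical stand-in for a model call; a real eval would query the AI here.
    canned = {"2 + 2": "4", "capital of France": "Paris"}
    return canned.get(prompt, "")


cases = [
    EvalCase("2 + 2", lambda out: out.strip() == "4"),
    EvalCase("capital of France", lambda out: "paris" in out.lower()),
    EvalCase("reverse 'abc'", lambda out: out == "cba"),  # the stub fails this one
]

pass_rate = run_evals(stub_system, cases)
print(f"pass rate: {pass_rate:.2f}")
```

Because each grader is an ordinary function, a harness like this can run during development with no real users involved, which is the kind of automated eval the passage describes.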
