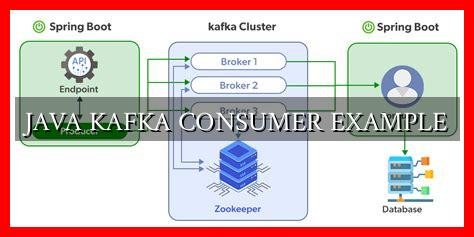

How To Write A Basic Consumer Java Application For Kafka

Kafka consumers read data in order within each partition, but there is no ordering guarantee across the partitions of a topic. In this article, we discuss, step by step, how to create an Apache Kafka consumer in Java, with instructions and supporting code. Step 1: create a new Apache Kafka project in IntelliJ.
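A minimal sketch of such a consumer follows. It assumes the `org.apache.kafka:kafka-clients` library is on the classpath and a broker is reachable; the class name `BasicConsumer`, the broker address `localhost:9092`, the group id `demo-group`, and the topic `demo-topic` are placeholders, not values from the original tutorial. It subscribes to one topic, polls in a loop, and prints each record's partition and offset, which makes the per-partition ordering visible.

```java
import java.time.Duration;
import java.util.List;
import java.util.Properties;

import org.apache.kafka.clients.consumer.ConsumerConfig;
import org.apache.kafka.clients.consumer.ConsumerRecord;
import org.apache.kafka.clients.consumer.ConsumerRecords;
import org.apache.kafka.clients.consumer.KafkaConsumer;
import org.apache.kafka.common.errors.WakeupException;
import org.apache.kafka.common.serialization.StringDeserializer;

class BasicConsumer {

    // Placeholder values (assumptions): point these at your own broker, group, and topic.
    static final String BOOTSTRAP_SERVERS = "localhost:9092";
    static final String GROUP_ID = "demo-group";
    static final String TOPIC = "demo-topic";

    // Minimal configuration: where the brokers are, which consumer group to join,
    // how to deserialize keys and values, and where to start if the group has no committed offset.
    static Properties consumerProps() {
        Properties props = new Properties();
        props.put(ConsumerConfig.BOOTSTRAP_SERVERS_CONFIG, BOOTSTRAP_SERVERS);
        props.put(ConsumerConfig.GROUP_ID_CONFIG, GROUP_ID);
        props.put(ConsumerConfig.KEY_DESERIALIZER_CLASS_CONFIG, StringDeserializer.class.getName());
        props.put(ConsumerConfig.VALUE_DESERIALIZER_CLASS_CONFIG, StringDeserializer.class.getName());
        props.put(ConsumerConfig.AUTO_OFFSET_RESET_CONFIG, "earliest");
        return props;
    }

    public static void main(String[] args) {
        KafkaConsumer<String, String> consumer = new KafkaConsumer<>(consumerProps());
        Thread mainThread = Thread.currentThread();

        // On shutdown (e.g. Ctrl+C), wakeup() makes a blocked poll() throw WakeupException,
        // and the hook waits for the main thread to finish closing the consumer.
        Runtime.getRuntime().addShutdownHook(new Thread(() -> {
            consumer.wakeup();
            try {
                mainThread.join();
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
            }
        }));

        try {
            consumer.subscribe(List.of(TOPIC));
            while (true) {
                // poll() returns the next batch (possibly empty) from the partitions assigned to this consumer.
                ConsumerRecords<String, String> records = consumer.poll(Duration.ofMillis(500));
                for (ConsumerRecord<String, String> record : records) {
                    // Within a single partition, records arrive in offset order.
                    System.out.printf("partition=%d offset=%d key=%s value=%s%n",
                            record.partition(), record.offset(), record.key(), record.value());
                }
            }
        } catch (WakeupException e) {
            // Expected during shutdown; fall through to close().
        } finally {
            // Leave the consumer group and release network resources.
            consumer.close();
        }
    }
}
```

Setting `auto.offset.reset` to `earliest` makes a brand-new group start from the beginning of each partition; with the default, `latest`, it would only see records produced after it joins.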

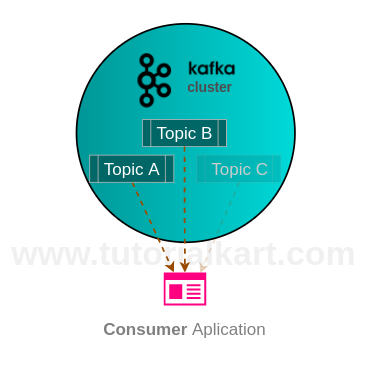

In this tutorial, we'll learn how to create a Kafka listener and consume messages from a topic using Kafka's Consumer API. After that, we'll test our implementation using the Producer API and Testcontainers. Kafka is often used to build consumers that handle high data throughput and are fault tolerant; this tutorial focuses on a basic Kafka consumer written in Java. We also show how to create a Kafka producer, describe the various configuration options, and explain how to tune them for a production setup.
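As an illustration of the tuning step, the sketch below layers a few common consumer settings over a base configuration. The keys are standard Kafka consumer configuration names, but the values are placeholders chosen for the example rather than recommendations, and the helpers `withTuning` and `heartbeatLooksSane` are hypothetical. It uses only `java.util.Properties`, so the result can be inspected without a broker and later passed to a `KafkaConsumer` constructor.

```java
import java.util.Properties;

class ConsumerTuning {

    // Hypothetical helper: returns a copy of `base` with illustrative tuning overrides applied.
    static Properties withTuning(Properties base) {
        Properties props = new Properties();
        props.putAll(base); // copy, so the caller's base config is left untouched
        // Commit offsets explicitly after processing instead of on a background timer.
        props.setProperty("enable.auto.commit", "false");
        // Upper bound on the number of records returned by a single poll() call.
        props.setProperty("max.poll.records", "200");
        // If poll() is not called within this interval, the consumer is considered failed and the group rebalances.
        props.setProperty("max.poll.interval.ms", "300000");
        // Liveness settings: the heartbeat interval should stay well below the session timeout.
        props.setProperty("session.timeout.ms", "45000");
        props.setProperty("heartbeat.interval.ms", "3000");
        // Let the broker wait for at least this much data (or fetch.max.wait.ms) before answering a fetch.
        props.setProperty("fetch.min.bytes", "1024");
        props.setProperty("fetch.max.wait.ms", "500");
        return props;
    }

    // True if heartbeat.interval.ms is no higher than one third of session.timeout.ms.
    static boolean heartbeatLooksSane(Properties props) {
        long session = Long.parseLong(props.getProperty("session.timeout.ms"));
        long heartbeat = Long.parseLong(props.getProperty("heartbeat.interval.ms"));
        return heartbeat * 3 <= session;
    }

    public static void main(String[] args) {
        Properties base = new Properties();
        base.setProperty("bootstrap.servers", "localhost:9092"); // placeholder broker address
        base.setProperty("group.id", "demo-group");              // placeholder group id
        Properties tuned = withTuning(base);
        System.out.println(tuned.getProperty("max.poll.records"));
        System.out.println(heartbeatLooksSane(tuned));
    }
}
```

The heartbeat check mirrors the guidance in Kafka's configuration documentation that `heartbeat.interval.ms` must be lower than `session.timeout.ms` and typically no higher than one third of it.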

The tutorial covers the Consumer API, subscribing to topics, and polling for messages, with basic code examples for both producers and consumers. Along the way, you will learn to send different types of messages, use custom serializers, and handle retries, and you will see how polling, offset management, automatic versus manual commits, and consumer groups work. We also cover constructing Kafka consumers, writing Java code that receives and processes records from topics, and setting up logging. In short, this post explores the essentials of building Kafka consumer applications, from handling offsets and errors to fine-tuning performance and minimizing the impact of rebalances.
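To make manual offset management concrete: Kafka expects the committed offset for a partition to be the offset of the next record the group should read, that is, the last processed offset plus one. The stdlib-only sketch below computes that value per partition from a batch of processed records; the `Partition` and `Processed` records and the `offsetsToCommit` helper are hypothetical stand-ins for Kafka's `TopicPartition` and `ConsumerRecord`.

```java
import java.util.HashMap;
import java.util.List;
import java.util.Map;

class OffsetBookkeeping {

    // Hypothetical stand-ins for Kafka's TopicPartition and for a processed ConsumerRecord.
    record Partition(String topic, int partition) {}
    record Processed(String topic, int partition, long offset) {}

    // For each partition, the offset to commit is the highest processed offset + 1,
    // i.e. the position the group should resume from after a restart or rebalance.
    static Map<Partition, Long> offsetsToCommit(List<Processed> processed) {
        Map<Partition, Long> next = new HashMap<>();
        for (Processed p : processed) {
            next.merge(new Partition(p.topic(), p.partition()), p.offset() + 1, Long::max);
        }
        return next;
    }

    public static void main(String[] args) {
        List<Processed> batch = List.of(
                new Processed("orders", 0, 41),
                new Processed("orders", 0, 42),
                new Processed("orders", 1, 7));
        // One line per partition: orders/0 -> 43 and orders/1 -> 8 (HashMap iteration order may vary).
        offsetsToCommit(batch).forEach((tp, offset) ->
                System.out.println(tp.topic() + "/" + tp.partition() + " -> " + offset));
    }
}
```

With `kafka-clients`, you would set `enable.auto.commit` to `false`, convert each entry to a `TopicPartition` and an `OffsetAndMetadata`, and pass the resulting map to `consumer.commitSync(...)` after processing each batch. Committing only after processing gives at-least-once delivery: if the consumer crashes before the commit, the batch is read again.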
