How to Run LLMs Locally: Full Guide

Easiest Way to Run LLMs Locally. A comprehensive guide covering the local LLM stack, from hardware requirements to production deployment. Compare Ollama, LM Studio, and llama.cpp, and build your first local AI application. Running LLMs locally offers several advantages, including privacy, offline access, and cost efficiency. This repository provides step-by-step guides for setting up and running LLMs using various frameworks, each with its own strengths and optimization techniques.

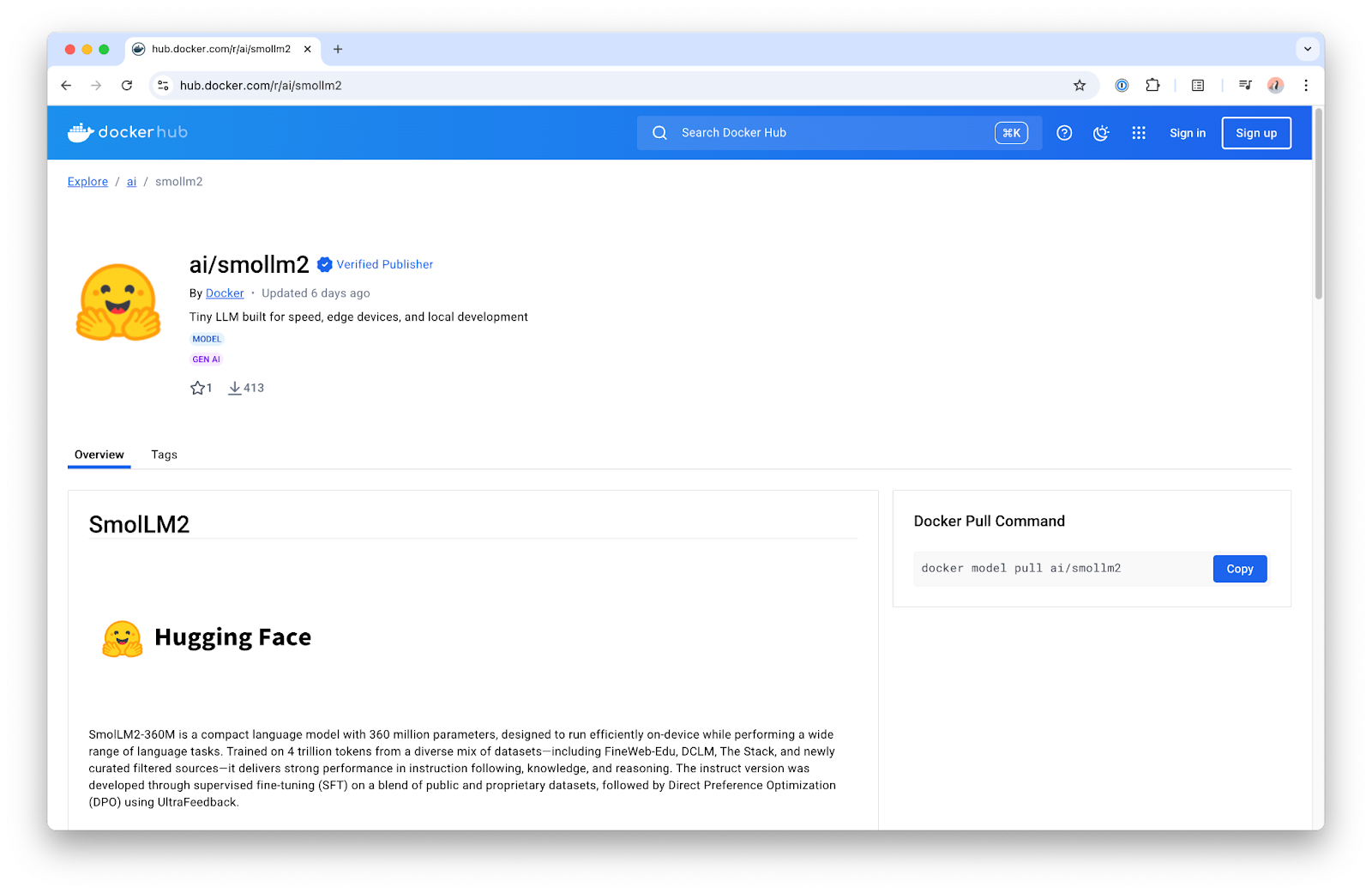

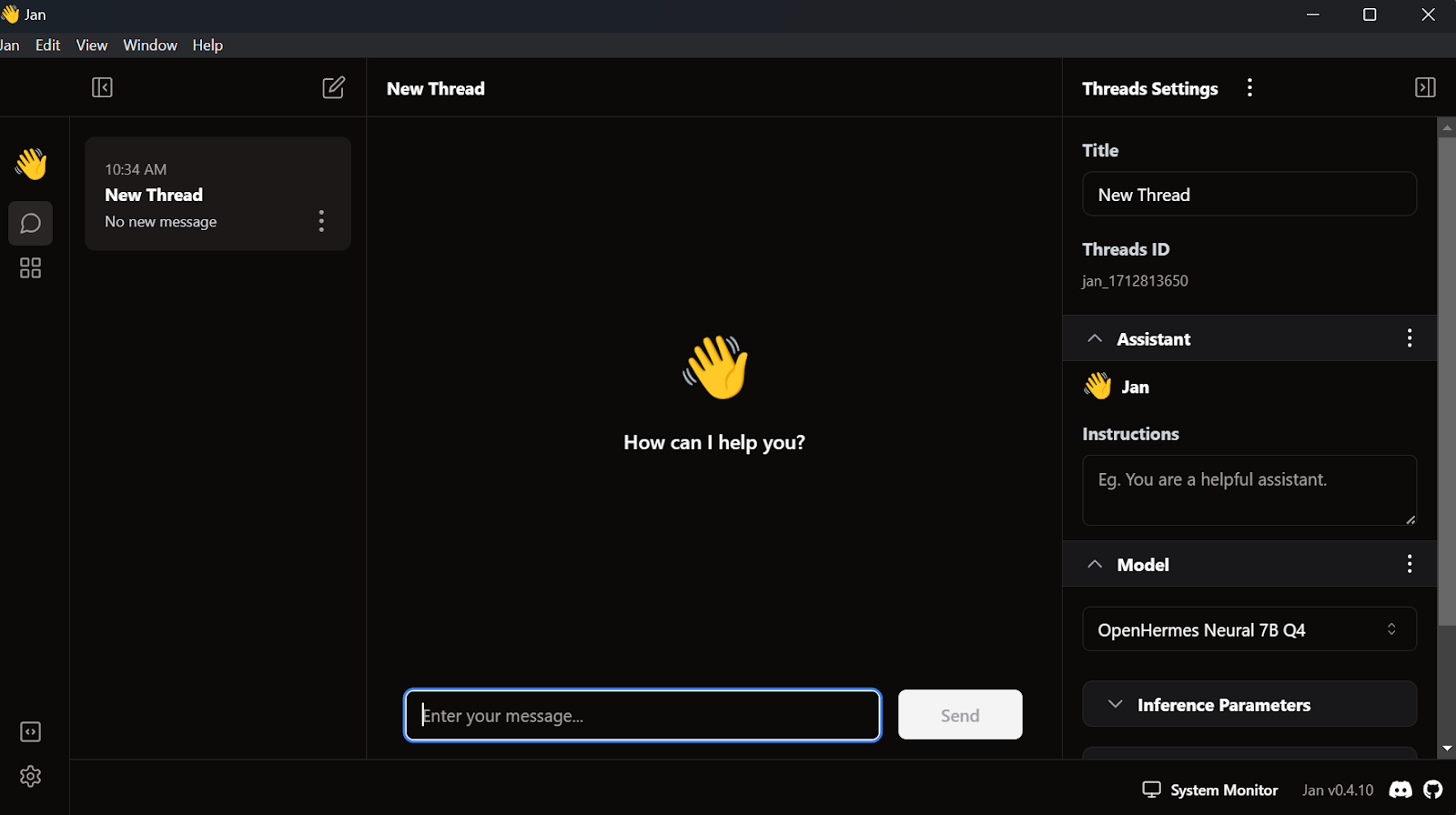

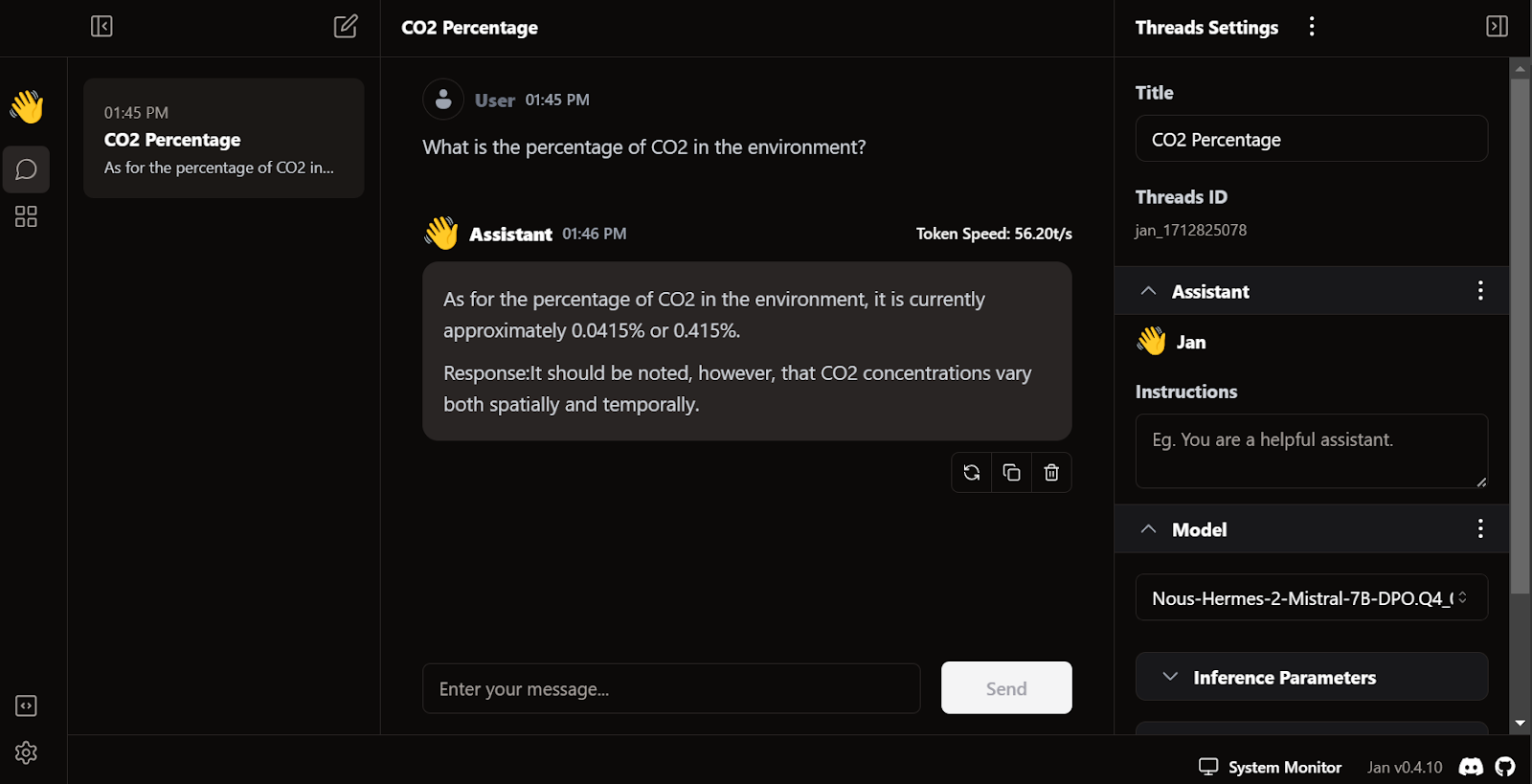

Run LLMs Locally with Docker: A Quickstart Guide to Model Runner (Docker). This comprehensive guide explores the latest methods, hardware requirements, and best practices for running local LLMs in 2025, incorporating the most recent developments in model optimization, quantization techniques, and deployment tools.

Unlock the power of AI on your own hardware with this comprehensive guide to running large language models (LLMs) locally. Learn about privacy, hardware requirements, quantization, and practical tools like LM Studio and Ollama for private, cost-effective, and customizable AI.

In this guide, you will learn the step-by-step process to run ChatGPT-style models, DeepSeek, and other LLMs locally. It covers three proven methods to install LLMs locally on Mac, Windows, or Linux.

Learn to install and run LLMs locally with LM Studio: built-in RAG, a local API server, model selection, and privacy control in one complete guide.
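The link between hardware requirements and quantization mentioned above comes down to simple arithmetic: the memory needed for the weights is roughly the parameter count times the bits per weight, divided by 8. The sketch below assumes a weights-only estimate and ignores the KV cache, activations, and runtime overhead. Real quantized formats also often store slightly more than their nominal bit width, so treat the numbers as a lower bound. The function name and the 7B example are illustrative, not taken from any of the guides.

```python
def estimate_weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate memory for model weights only, in gigabytes (1 GB = 1e9 bytes).

    Assumption: every parameter is stored at the same bit width. This ignores
    the KV cache, activations, and any per-block quantization metadata.
    """
    if num_params <= 0 or bits_per_weight <= 0:
        raise ValueError("num_params and bits_per_weight must be positive")
    total_bytes = num_params * bits_per_weight / 8  # 8 bits per byte
    return total_bytes / 1e9


if __name__ == "__main__":
    # Hypothetical 7-billion-parameter model at three common precisions.
    for bits in (16, 8, 4):
        gb = estimate_weight_memory_gb(7e9, bits)
        print(f"7B parameters at {bits}-bit: ~{gb:.1f} GB for weights")
```

As a rule of thumb, halving the bit width halves the weight footprint, which is why 4-bit quantization is a common way to fit a model into consumer GPU memory or system RAM.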

Run LLMs Locally: 6 Simple Methods (DataCamp). Learn how to run open-source LLMs locally using Ollama, vLLM, and other tools. Discover model selection strategies, deployment options, and how to save costs while maintaining complete privacy and control over your AI.

And that's where this whole journey began. Roadmap of this guide: I'll walk you through the exact steps I followed to get LLMs running locally on my PC. Here's the flow:

Your Own Private AI: The Complete 2026 Guide to Running a Local LLM on Your PC. Everything you need to run a capable, private, offline AI assistant or coding copilot on your own hardware, from picking your model to wiring it into VS Code, with zero cloud, zero API bills, and zero code leaving your machine.

You don't need an API key or a cloud subscription to use LLMs. Ollama lets you run models locally.
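To illustrate the point that no API key or cloud subscription is needed, here is a minimal sketch of calling a locally running Ollama server from Python using only the standard library. It assumes Ollama is installed and listening on its default address (`http://localhost:11434`), and that a model has already been pulled. The model name `llama3.2` and the helper names `build_payload` and `generate` are placeholders chosen for this example, not something the guides above prescribe.

```python
import json
import urllib.request

# Assumption: Ollama is running locally on its default port.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_payload(model: str, prompt: str) -> dict:
    """Build the JSON body for a single, non-streaming generation request."""
    return {"model": model, "prompt": prompt, "stream": False}


def generate(model: str, prompt: str, url: str = OLLAMA_URL, timeout: float = 120.0) -> str:
    """Send the prompt to the local server and return the generated text."""
    data = json.dumps(build_payload(model, prompt)).encode("utf-8")
    request = urllib.request.Request(
        url,
        data=data,
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=timeout) as reply:
        body = json.loads(reply.read().decode("utf-8"))
    return body["response"]


if __name__ == "__main__":
    try:
        # "llama3.2" is a placeholder; use any model you have pulled locally.
        print(generate("llama3.2", "Explain quantization in one sentence."))
    except OSError as err:
        # Covers connection refused, timeouts, and HTTP errors from the server.
        print(f"Could not reach Ollama at {OLLAMA_URL}: {err}")
```

The request never leaves the machine, so the prompt and the response stay private. Setting `"stream": False` keeps the example short by returning a single JSON object instead of a stream of partial results.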

