How To Make A Custom Activation Function With Only Python In TensorFlow

While TensorFlow provides many built-in activation functions such as ReLU, sigmoid and tanh, it also supports custom activations for advanced use cases. A custom activation function can be created as a plain Python function, or by subclassing `tf.keras.layers.Layer` if more control is needed. Creating a custom activation function in TensorFlow using only Python involves defining the activation as a Python function and then registering it in the dictionary returned by `tf.keras.utils.get_custom_objects()`. Here's how you can do it:
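As a minimal sketch of both routes, assuming TensorFlow 2.x with its bundled Keras, the code below defines a hypothetical `squared_relu` activation as a plain Python function, registers it through `tf.keras.utils.get_custom_objects()`, and wraps it in a `tf.keras.layers.Layer` subclass (`ScaledSquaredReLU`, with an illustrative `scale` parameter). The function is passed to `Dense` as an object. In tf.keras 2.x the registered string name (`activation="squared_relu"`) also resolves, but string lookup of custom activations may behave differently under Keras 3, so passing the function itself is the safer choice.

```python
import tensorflow as tf


def squared_relu(x):
    # Hypothetical custom activation: max(x, 0) ** 2.
    # Built from TensorFlow ops, so autodiff provides the gradient.
    return tf.square(tf.nn.relu(x))


# Register the function in Keras' global custom-object dictionary under a
# name, so Keras can resolve "squared_relu" when deserializing saved models.
tf.keras.utils.get_custom_objects()["squared_relu"] = squared_relu


class ScaledSquaredReLU(tf.keras.layers.Layer):
    """Layer-based variant for when the activation needs its own settings.

    `scale` is a hypothetical hyperparameter; get_config() stores it so the
    layer can be re-created from its config.
    """

    def __init__(self, scale=1.0, **kwargs):
        super().__init__(**kwargs)
        self.scale = scale

    def call(self, inputs):
        return self.scale * squared_relu(inputs)

    def get_config(self):
        config = super().get_config()
        config.update({"scale": self.scale})
        return config


# Usage: all-ones kernel and zero bias, so each unit's pre-activation is 1 + 2 = 3.
dense = tf.keras.layers.Dense(2, activation=squared_relu, kernel_initializer="ones")
x = tf.constant([[1.0, 2.0]])
print(dense(x).numpy())  # [[9. 9.]]

scaled = ScaledSquaredReLU(scale=0.5)
print(scaled(tf.constant([-1.0, 2.0])).numpy())  # [0. 2.]
```

The plain function is the lighter option; the `Layer` subclass is worth the extra code when the activation carries configuration or trainable weights that should be saved with the model.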

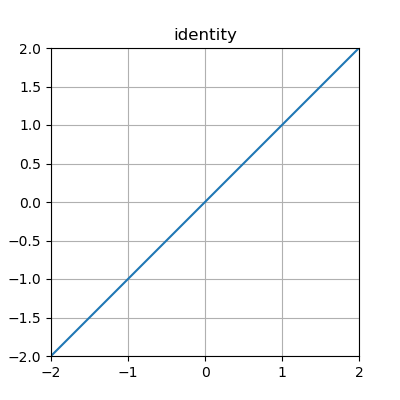

Suppose you need an activation function that cannot be built from TensorFlow's predefined building blocks. What can you do? TensorFlow makes it possible to define your own activation function. This tutorial shows, step by step, how to create a custom activation function in Python using the TensorFlow library. In this post, I introduce a combination of the ReLU6 and leaky ReLU activation functions, which is not available as a pre-implemented function in the TensorFlow library.
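One way to build this ReLU6 and leaky ReLU combination, under an assumed definition since the post does not give the formula, is to cap TensorFlow's leaky ReLU at 6: negative inputs keep a small slope `alpha` as in leaky ReLU, and large positive inputs saturate at 6 as in ReLU6. The name `leaky_relu6` and the default `alpha=0.1` are illustrative choices, not values from the post.

```python
import tensorflow as tf


def leaky_relu6(x, alpha=0.1):
    # ASSUMED definition (the post does not spell it out):
    #   alpha * x   for x < 0   (leaky part, as in leaky ReLU)
    #   x           for 0 <= x <= 6
    #   6           for x > 6   (upper cap, as in ReLU6)
    # tf.nn.leaky_relu handles the negative side; tf.minimum adds the cap.
    return tf.minimum(tf.nn.leaky_relu(x, alpha=alpha), 6.0)


x = tf.constant([-10.0, -1.0, 0.0, 3.0, 6.0, 10.0])
print(leaky_relu6(x).numpy())  # values -1, -0.1, 0, 3, 6, 6 (up to float32 rounding)

# The function can be passed directly as a layer activation.
model = tf.keras.Sequential([
    tf.keras.Input(shape=(4,)),
    tf.keras.layers.Dense(8, activation=leaky_relu6),
    tf.keras.layers.Dense(1),
])
print(model(tf.zeros((2, 4))).shape)
```

Because it is composed only of built-in ops, TensorFlow differentiates it automatically; to reload a saved model that uses it by name, register it with `tf.keras.utils.get_custom_objects()`.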
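For an activation that genuinely cannot be expressed with TensorFlow's predefined building blocks, a common technique (named here; the post itself does not describe it) is to compute the function in NumPy inside `tf.py_function` and supply the derivative by hand with `tf.custom_gradient`, because TensorFlow cannot differentiate through arbitrary Python code. The sketch assumes eager TensorFlow 2.x; `spiky`, which keeps the fractional part of its input when that part is at most 0.5 and outputs 0 otherwise, is a hypothetical example function.

```python
import numpy as np
import tensorflow as tf


def spiky_np(x):
    # Hypothetical activation in plain NumPy: keep the fractional part of x
    # when it is <= 0.5, otherwise output 0.
    x = np.asarray(x, dtype=np.float32)
    r = np.mod(x, 1.0)
    return np.where(r <= 0.5, r, 0.0).astype(np.float32)


def spiky_grad_np(x):
    # Derivative of spiky_np (ignoring the jump points): 1 where the
    # fractional part is passed through, 0 where the output is zeroed.
    x = np.asarray(x, dtype=np.float32)
    r = np.mod(x, 1.0)
    return np.where(r <= 0.5, 1.0, 0.0).astype(np.float32)


@tf.custom_gradient
def spiky(x):
    # Forward pass: run the NumPy code. py_function loses static shape
    # information, so restore it from the input.
    y = tf.py_function(spiky_np, [x], tf.float32)
    y.set_shape(x.shape)

    def grad(dy):
        # Backward pass: chain rule with the hand-written derivative.
        dydx = tf.py_function(spiky_grad_np, [x], tf.float32)
        dydx.set_shape(x.shape)
        return dy * dydx

    return y, grad


x = tf.constant([0.2, 0.7, 1.3, -0.4])
with tf.GradientTape() as tape:
    tape.watch(x)
    y = spiky(x)
g = tape.gradient(y, x)
print(y.numpy(), g.numpy())

# Used as a Keras activation like any other callable.
layer = tf.keras.layers.Dense(3, activation=spiky)
print(layer(tf.ones((2, 4))).shape)
```

Expect this to be slower than native ops, since every call leaves TensorFlow to run Python, and TensorFlow does not serialize the Python body of a `py_function` into a saved graph.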

In the following notebooks I show how easy (or difficult) it is to port an activation function using custom layers in Keras and TensorFlow. Link to the main notebook: activations.ipynb.

I'm using Keras and I wanted to add my own activation function, `myf`, to the TensorFlow backend. How do I define the new function and make it operational, so that instead of the original line of code I can write `model.add(layers.Conv2D(64, (3, 3), activation='myf'))`?

I will explain two ways to use a custom activation function here. The first one is to use a `Lambda` layer, which defines the function right in the layer. For example, a `Lambda` layer placed after `SimpleLinear` can take the absolute value of its output, so the model does not produce any negative values.
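A minimal sketch covering both parts of this passage, assuming TensorFlow 2.x: `myf` is registered as a custom object and attached to a `Conv2D` layer (its body, `tf.abs`, is a hypothetical placeholder because the question never defines it), and a hypothetical `SimpleLinear` layer, a bare linear map standing in for the one mentioned above, is followed by a `Lambda` layer that takes the absolute value of its output. Keras 3 may not resolve activation strings from the custom-object dictionary, so the sketch falls back to passing the function object when the string is rejected.

```python
import tensorflow as tf


# Hypothetical placeholder for `myf`: the question never gives its body.
def myf(x):
    return tf.abs(x)


tf.keras.utils.get_custom_objects()["myf"] = myf

# tf.keras 2.x resolves the registered name; if this Keras version does not
# look up custom objects for activation strings, fall back to the function.
try:
    conv = tf.keras.layers.Conv2D(64, (3, 3), activation="myf")
except ValueError:
    conv = tf.keras.layers.Conv2D(64, (3, 3), activation=myf)


class SimpleLinear(tf.keras.layers.Layer):
    # Hypothetical stand-in for SimpleLinear: y = x @ W + b, no activation.
    def __init__(self, units=32, **kwargs):
        super().__init__(**kwargs)
        self.units = units

    def build(self, input_shape):
        self.w = self.add_weight(
            shape=(input_shape[-1], self.units),
            initializer="random_normal",
            trainable=True,
        )
        self.b = self.add_weight(
            shape=(self.units,), initializer="zeros", trainable=True
        )

    def call(self, inputs):
        return tf.matmul(inputs, self.w) + self.b


model = tf.keras.Sequential([
    tf.keras.Input(shape=(3,)),
    SimpleLinear(4),
    # The activation is defined inline in the Lambda layer: |x| removes negatives.
    tf.keras.layers.Lambda(lambda t: tf.abs(t)),
])

out = model(tf.random.normal((5, 3)))
print(out.shape, float(tf.reduce_min(out)) >= 0.0)
```

`Lambda` layers are convenient for quick experiments, but Keras cannot reliably serialize arbitrary Python lambdas, so for models you intend to save, a named function or a `Layer` subclass is usually the safer choice.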

In this article, we explored how to create a custom activation function in TensorFlow using Python 3. We learned that activation functions are essential for introducing non-linearity into neural networks and walked through the process of creating a custom activation function.
