How To Get Started With Mistral 7b Tutorial Automate Bard

Once you have the full power of a large language model like Mistral 7B on your personal computer, there are many ways to harness it to create new programs and automation. This article contains a step-by-step procedure for running Mistral 7B on a personal computer. We will use two frameworks to run Mistral 7B: Hugging Face Transformers and LangChain.
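As a rough illustration of the Hugging Face Transformers route, the sketch below wraps model loading and a single generation call in one function. The function name `run_mistral`, the model id `mistralai/Mistral-7B-Instruct-v0.2`, the half-precision setting, and the token limit are assumptions chosen for the example, not settings taken from this article. It also assumes `torch`, `transformers`, and `accelerate` are installed, and that you have access to the model on the Hugging Face Hub.

```python
def run_mistral(
    prompt: str,
    model_id: str = "mistralai/Mistral-7B-Instruct-v0.2",  # assumed model id
    max_new_tokens: int = 128,
) -> str:
    """Load a Mistral 7B checkpoint and return the reply to a single prompt."""
    # Heavy imports are kept inside the function so that merely defining it
    # does not require the libraries or trigger a model download.
    import torch
    from transformers import AutoModelForCausalLM, AutoTokenizer

    tokenizer = AutoTokenizer.from_pretrained(model_id)
    model = AutoModelForCausalLM.from_pretrained(
        model_id,
        torch_dtype=torch.float16,  # half precision to reduce memory use
        device_map="auto",          # needs the `accelerate` package
    )

    # Render the chat template to text first, then tokenize it. The template
    # already contains the BOS token, so no extra special tokens are added.
    messages = [{"role": "user", "content": prompt}]
    text = tokenizer.apply_chat_template(
        messages, tokenize=False, add_generation_prompt=True
    )
    inputs = tokenizer(text, return_tensors="pt", add_special_tokens=False)
    inputs = inputs.to(model.device)

    with torch.no_grad():
        output_ids = model.generate(
            **inputs,
            max_new_tokens=max_new_tokens,
            pad_token_id=tokenizer.eos_token_id,
        )

    # Keep only the newly generated tokens, dropping the echoed prompt.
    new_tokens = output_ids[0][inputs["input_ids"].shape[1]:]
    return tokenizer.decode(new_tokens, skip_special_tokens=True)
```

Calling `run_mistral("Explain what a tokenizer does.")` downloads the weights on first use. At 16-bit precision, 7 billion parameters come to roughly 14 GB, so a smaller quantized variant may be a better fit for modest hardware.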

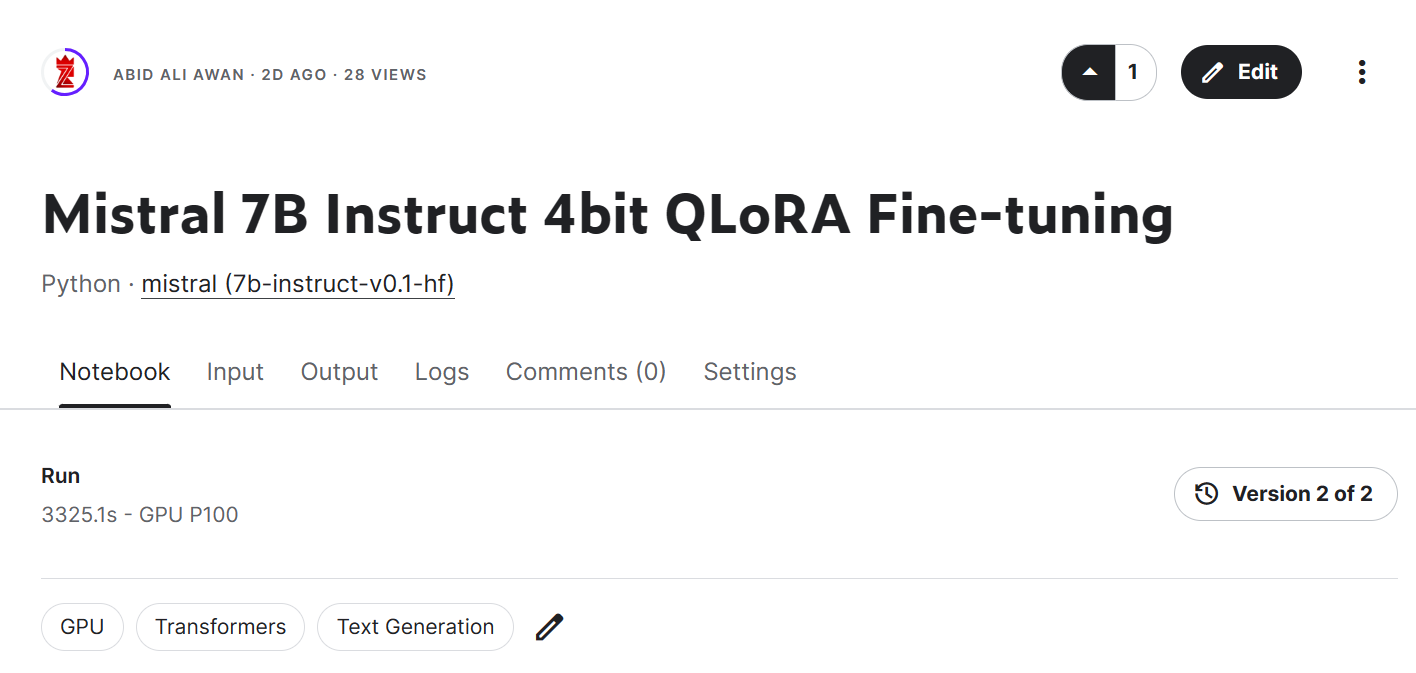

How To Get Started With Mistral 7B Instruct v0.2 Tutorial Automate Bard

In this tutorial, you will get an overview of how to use and fine-tune the Mistral 7B model to enhance your natural language processing projects. You will learn how to load the model in Kaggle, run inference, quantize it, fine-tune it, merge it, and push the model to the Hugging Face Hub.

Learn how to deploy and use Mistral AI's large language models with our comprehensive documentation, guides, and tutorials.

In this guide, we provide a step-by-step process to effectively use Mistral 7B, a powerful open-source AI model. Learn how to set it up, integrate it into your workflow, and optimize it for tasks like content creation and coding.

Now we can encode the message with our tokenizer using `MistralTokenizer` and run `generate` to get a response. Don't forget to pass the EOS id! Finally, we can decode the generated tokens.
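The encode, generate, decode flow described above can be illustrated with a toy stand-in that runs without any model weights. Everything in this sketch is hypothetical: `ToyTokenizer`, `generate`, and `scripted_model` are illustration-only names and do not mirror the real `MistralTokenizer` or Mistral inference API. The aim is only to show why the EOS id matters: it is the signal that tells the generation loop where the answer ends.

```python
from typing import Callable, Dict, List


class ToyTokenizer:
    """Hypothetical word-level tokenizer standing in for a real one."""

    def __init__(self, words: List[str]) -> None:
        self.vocab: Dict[str, int] = {"<eos>": 0}
        for word in words:
            self.vocab.setdefault(word, len(self.vocab))
        self.inverse = {idx: word for word, idx in self.vocab.items()}
        self.eos_id = self.vocab["<eos>"]

    def encode(self, text: str) -> List[int]:
        return [self.vocab[word] for word in text.split()]

    def decode(self, ids: List[int]) -> str:
        # Skip the EOS marker so it does not leak into the final text.
        return " ".join(self.inverse[i] for i in ids if i != self.eos_id)


def generate(
    prompt_ids: List[int],
    next_token: Callable[[List[int]], int],
    max_tokens: int,
    eos_id: int,
) -> List[int]:
    """Sample tokens one at a time, stopping once the EOS id is produced."""
    out: List[int] = []
    for _ in range(max_tokens):
        token = next_token(prompt_ids + out)
        out.append(token)
        if token == eos_id:
            break
    return out


tok = ToyTokenizer("hello there general reply".split())
prompt_ids = tok.encode("hello there")

# A scripted "model": two answer tokens, then EOS, then filler it would keep
# emitting if nothing told the loop to stop.
script = tok.encode("general reply") + [tok.eos_id] + tok.encode("hello hello")


def scripted_model(ids: List[int]) -> int:
    return script[len(ids) - len(prompt_ids)]


generated = generate(prompt_ids, scripted_model, max_tokens=5, eos_id=tok.eos_id)
answer = tok.decode(generated)
print(answer)
```

If the loop were given an id the model never produces instead of the real EOS id, it would run until `max_tokens` and append the filler tokens to the answer.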

How To Program A Conversational AI Chatbot Using Mistral 7B Instruct

Mistral AI has gained attention for producing highly efficient language models that punch above their weight class. This guide walks through setting up Mistral locally on your own hardware.

Getting Started with Mistral introduces learners to Mistral AI's open-source and commercial large language models (LLMs), including Mistral 7B, Mixtral 8x7B, and Mixtral 8x22B.

This video covers searching for Mistral 7B variants, downloading the model files, and using the model for text generation.

In this guide, we provide an overview of the Mistral 7B LLM and how to prompt with it. It also includes tips, applications, limitations, papers, and additional reading materials related to Mistral 7B and fine-tuned models. Mistral 7B is a 7-billion-parameter language model released by Mistral AI.
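To make the prompting discussion concrete, here is a minimal sketch of how a multi-turn chat prompt is commonly laid out for the Mistral 7B Instruct models, using `[INST]` and `[/INST]` markers around each user message. The helper name `build_instruct_prompt` is hypothetical, and the exact whitespace is an assumption that can differ between model versions. In real use, the tokenizer's own chat template is the safer source of truth.

```python
from typing import List, Tuple

BOS = "<s>"   # beginning-of-sequence marker
EOS = "</s>"  # end-of-sequence marker, closes each assistant reply


def build_instruct_prompt(history: List[Tuple[str, str]], new_message: str) -> str:
    """Format completed (user, assistant) turns plus a new user message."""
    prompt = BOS
    for user_text, assistant_text in history:
        prompt += f"[INST] {user_text} [/INST] {assistant_text}{EOS}"
    # The final user turn is left open so the model writes the next reply.
    prompt += f"[INST] {new_message} [/INST]"
    return prompt


history = [("Hi", "Hello!")]
prompt = build_instruct_prompt(history, "What is Mistral 7B?")
print(prompt)
# → <s>[INST] Hi [/INST] Hello!</s>[INST] What is Mistral 7B? [/INST]
```

For a chatbot, appending each new (user, assistant) pair to `history` and rebuilding the prompt on every turn is one simple way to keep the conversation context.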

