How to Build an API with Python: LLM Integration, FastAPI, Ollama, and More

Free video: How to Build an API with Python for LLM Integration Using …

Whether you're building a chatbot, a text generator, or a custom AI-powered API, FastAPI and Ollama work well together: Ollama runs the model locally and FastAPI exposes it over HTTP. Let's get started!

Learn how to build a production-ready API for local LLMs using FastAPI and Ollama. This is a complete guide covering streaming and conversation management.
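Conversation management can be sketched roughly as follows. This is a minimal illustration, not the guide's own code: the in-memory store, the `MAX_MESSAGES` cap, the helper names, and the `llama3` model name are all assumptions made for the example. The request shape (a `model`, a list of `messages` with `role` and `content`, and a `stream` flag) follows Ollama's `/api/chat` endpoint.

```python
from collections import defaultdict

MAX_MESSAGES = 10  # assumption: cap the history so prompts stay bounded

# Hypothetical in-memory store: session id -> list of chat messages.
# A real deployment would likely use a database or cache instead.
_conversations = defaultdict(list)


def add_message(session_id, role, content):
    """Append one message to a session and drop the oldest beyond the cap."""
    history = _conversations[session_id]
    history.append({"role": role, "content": content})
    del history[:-MAX_MESSAGES]  # keeps only the last MAX_MESSAGES entries
    return history


def build_chat_request(session_id, user_text, model="llama3"):
    """Record the user's turn and build a JSON body for Ollama's /api/chat."""
    messages = add_message(session_id, "user", user_text)
    return {"model": model, "messages": list(messages), "stream": False}
```

After the model replies, calling `add_message(session_id, "assistant", reply_text)` would keep both sides of the exchange in the history for the next turn.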

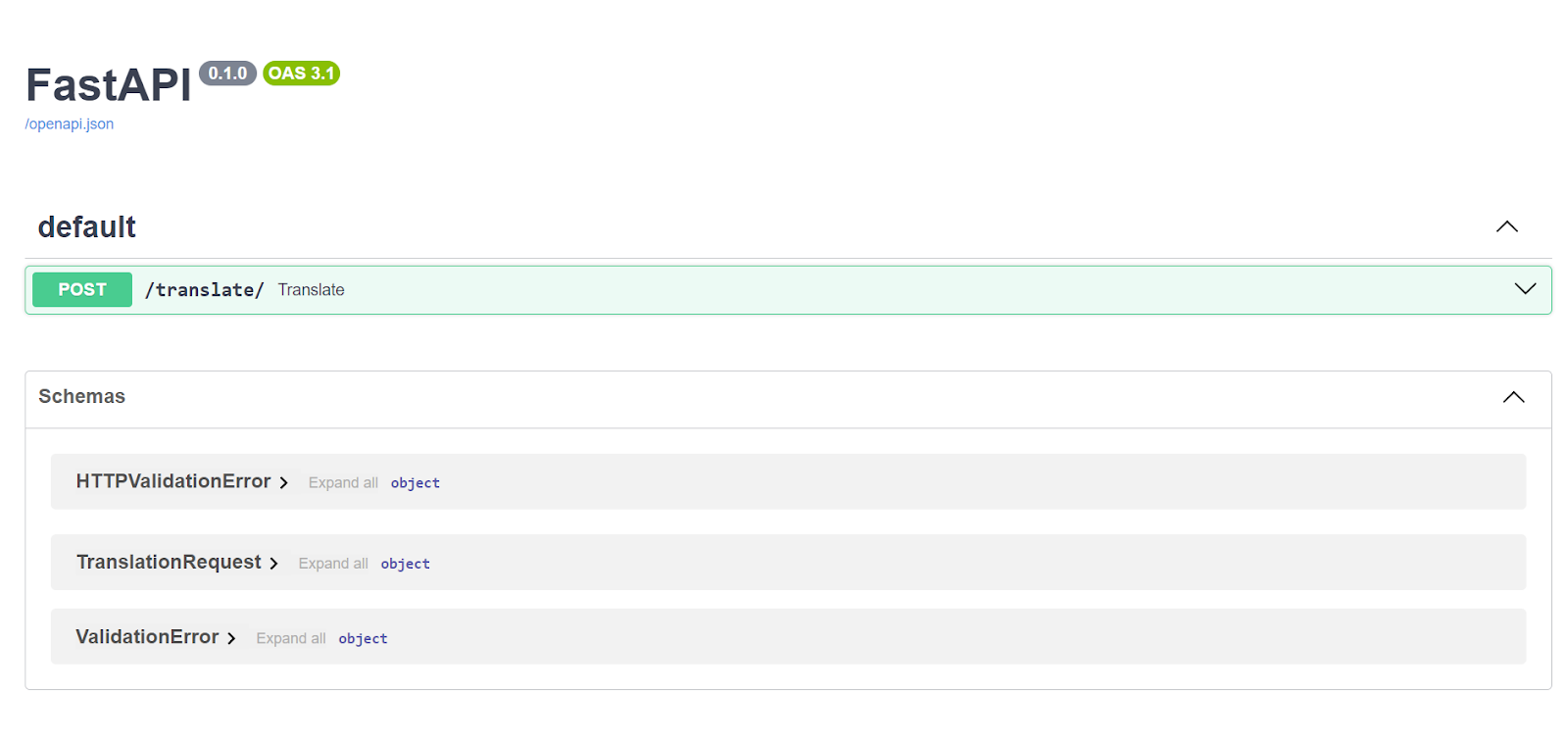

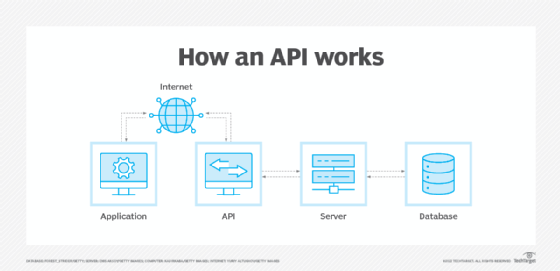

Serving an LLM Application as an API Endpoint Using FastAPI in Python

In this article, I'll explore how to integrate Ollama, a platform for running large language models locally, with FastAPI, a modern, fast web framework for building APIs with Python.

In this project, I built a custom AI API using Ollama and FastAPI on a Linux virtual machine. The API exposes LLM capabilities via REST endpoints, similar to how real-world AI microservices work.

A demonstration of integrating FastAPI with Ollama, featuring streaming, formatted, and complete JSON responses from AI models. Learn how to set up and use FastAPI with Ollama for building AI-driven applications.

In this tutorial, we will build a simple Python application using an OpenAI GPT model and serve it through an API endpoint developed with the FastAPI framework.
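The FastAPI and Ollama integration described above might look something like the sketch below, which shows both a complete JSON response and a streamed one. It assumes Ollama is running on its default local port (11434) and that a model has already been pulled; the `llama3` model name, the route paths, and the helper names are illustrative choices, not taken from any of the articles. FastAPI is imported inside the factory only so that the plain helpers stay usable without it.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local port
DEFAULT_MODEL = "llama3"  # assumption: a model already fetched with `ollama pull`


def build_request(prompt, model=DEFAULT_MODEL, stream=False):
    """Build the POST request for Ollama's /api/generate endpoint."""
    body = json.dumps({"model": model, "prompt": prompt, "stream": stream})
    return urllib.request.Request(
        OLLAMA_URL,
        data=body.encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )


def iter_stream_text(lines):
    """Yield text fragments from a streamed reply (one JSON object per line)."""
    for raw in lines:
        if not raw.strip():
            continue
        chunk = json.loads(raw)
        yield chunk.get("response", "")
        if chunk.get("done"):
            break


def ask_ollama(prompt, model=DEFAULT_MODEL):
    """Send one non-streaming request and return the generated text."""
    with urllib.request.urlopen(build_request(prompt, model), timeout=120) as reply:
        return json.loads(reply.read())["response"]


def create_app():
    """App factory: wires the helpers above into two hypothetical routes."""
    from fastapi import FastAPI
    from fastapi.responses import StreamingResponse
    from pydantic import BaseModel

    class GenerateRequest(BaseModel):
        prompt: str
        model: str = DEFAULT_MODEL

    app = FastAPI(title="Local LLM API")

    @app.post("/generate")
    def generate(body: GenerateRequest):
        # Complete JSON response: wait for the whole answer, then return it.
        return {"model": body.model, "response": ask_ollama(body.prompt, body.model)}

    @app.post("/generate/stream")
    def generate_stream(body: GenerateRequest):
        # Streaming response: forward text fragments as Ollama produces them.
        def fragments():
            request = build_request(body.prompt, body.model, stream=True)
            with urllib.request.urlopen(request, timeout=120) as reply:
                yield from iter_stream_text(reply)

        return StreamingResponse(fragments(), media_type="text/plain")

    return app
```

Saved as `main.py`, this could be served with `uvicorn main:create_app --factory`. The standard-library HTTP client keeps the sketch dependency-light; a production service would more likely use an async client.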

This lab provides practical experience in building and securing an API for LLM access, integrating DevOps practices like version control and optional cloud deployment.

Step-by-step guide to deploying LLMs with FastAPI in Python. Includes code samples, Docker setup, and scaling tips for production-ready APIs.

This article showed step by step how to set up and run your first local large language model API, using local models downloaded with Ollama and FastAPI for quick model inference through a REST-based interface.

I'm going to teach you how to write a very simple Python API to control access to an LLM or an AI model.
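One common way to control access to such an API is a shared API key checked on every request. The sketch below is an assumption about how that could be done, not the method used in the lab or video: the `LLM_API_KEY` environment variable, the `X-API-Key` header, and the `/health` route are hypothetical names chosen for illustration.

```python
import hmac
import os
from typing import Optional

API_KEY_ENV = "LLM_API_KEY"  # hypothetical environment variable holding the key


def is_authorized(presented_key: Optional[str], expected_key: Optional[str]) -> bool:
    """Return True only when both keys are present and match."""
    if not presented_key or not expected_key:
        return False
    # compare_digest avoids leaking information through comparison timing.
    return hmac.compare_digest(presented_key.encode(), expected_key.encode())


def create_secured_app():
    """App factory: every route requires a valid X-API-Key header."""
    from fastapi import Depends, FastAPI, Header, HTTPException

    def require_api_key(x_api_key: Optional[str] = Header(default=None)) -> None:
        # FastAPI maps the x_api_key parameter to the X-API-Key request header.
        if not is_authorized(x_api_key, os.environ.get(API_KEY_ENV)):
            raise HTTPException(status_code=401, detail="Invalid or missing API key")

    # A global dependency applies the check to all routes on the app.
    app = FastAPI(dependencies=[Depends(require_api_key)])

    @app.get("/health")
    def health():
        return {"status": "ok"}

    return app
```

Rejecting requests when no key is configured on the server is a deliberate choice here, so a missing environment variable fails closed rather than leaving the model open.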

