How Does CodeGPT's BPE Tokenizer Process Whitespaces in Code Completion?

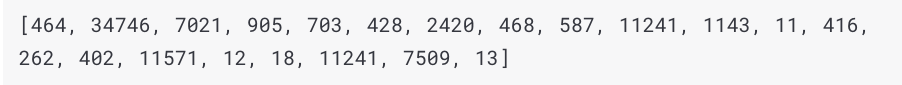

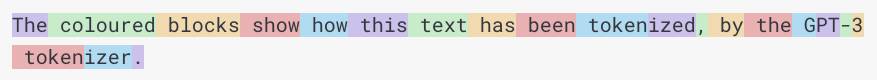

How BPE Works: The Tokenization Algorithm Used By Large Language

When we use a BPE tokenizer, e.g., a Hugging Face style tokenizer, we feed the whole code string into it rather than one token at a time. That is why you may notice the special sub-token Ġ in the output: it represents the whitespace (the separator) in front of a token. Byte pair encoding (BPE) was initially developed as an algorithm to compress text, and was later used by OpenAI for tokenization when pretraining the GPT model. It is used by many transformer models, including GPT, GPT-2, RoBERTa, BART, and DeBERTa.
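
To make the Ġ marker concrete, here is a minimal sketch of the byte-to-character table used by GPT-2-style byte-level BPE tokenizers. It assumes CodeGPT's tokenizer follows that scheme, which the text above suggests but does not state outright; the helper name `to_visible` is hypothetical and only for illustration.

```python
def bytes_to_unicode():
    """Map each of the 256 byte values to a printable Unicode character."""
    # Bytes that are already printable keep their own character.
    keep = (
        list(range(ord("!"), ord("~") + 1))
        + list(range(ord("¡"), ord("¬") + 1))
        + list(range(ord("®"), ord("ÿ") + 1))
    )
    byte_values = keep[:]
    code_points = keep[:]
    shift = 0
    # Every other byte (space, tab, newline, control bytes, ...) is shifted
    # to an unused code point starting at 256 so it becomes visible.
    for b in range(256):
        if b not in keep:
            byte_values.append(b)
            code_points.append(256 + shift)
            shift += 1
    return {b: chr(c) for b, c in zip(byte_values, code_points)}


BYTE_TO_CHAR = bytes_to_unicode()


def to_visible(text):
    """Hypothetical helper: show text as the byte-level alphabet sees it."""
    return "".join(BYTE_TO_CHAR[b] for b in text.encode("utf-8"))


snippet = "def f():\n    return 1"
print(to_visible(snippet))  # defĠf():ĊĠĠĠĠreturnĠ1
```

Under this mapping a space becomes Ġ, a newline becomes Ċ, and a tab becomes ĉ, so indentation in code is never discarded: it is carried through as ordinary symbols that BPE merges can later combine.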

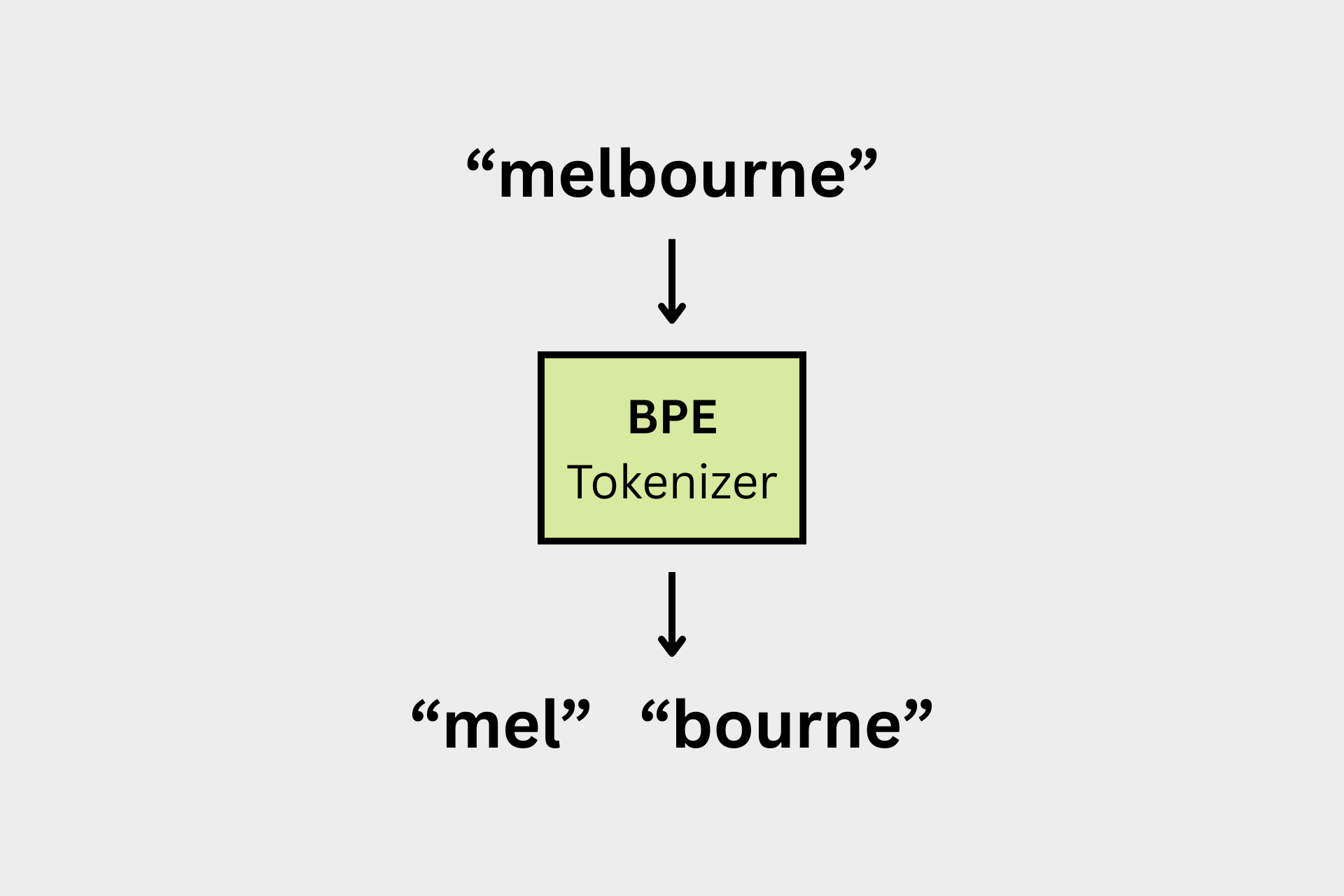

GPT-2 used a BPE tokenizer with a vocabulary of 50,257 tokens, and OpenAI's tiktoken is a fast Rust-backed implementation you can use today. Below I explain the why, the how (intuition and algorithm), and a short hands-on demo using tiktoken.

This is a standalone notebook implementing the popular byte pair encoding (BPE) tokenization algorithm, which is used in models like GPT-2 to GPT-4, Llama….

Every token that GPT processes, whether a word, a punctuation mark, or an emoji, was produced by a byte pair encoding (BPE) tokenizer. BPE is the algorithm that decides that a word such as "running" may become two tokens, ["run", "ning"], while "the" stays as one.

Byte pair encoding (BPE) is a text tokenization technique in natural language processing. It breaks words down into smaller pieces called subwords. It works by repeatedly finding the most frequent pair of adjacent symbols in the text and merging it into a new subword until the vocabulary reaches a desired size.
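
The merge loop described above can be sketched in a few lines. This is a toy, character-level version for illustration only, not the implementation used by any particular model; the function names (`train_bpe`, `merge_pair`, `encode_word`) and the tiny corpus are assumptions made for the example.

```python
from collections import Counter


def merge_pair(symbols, pair):
    """Replace every adjacent occurrence of `pair` with one merged symbol."""
    out = []
    i = 0
    while i < len(symbols):
        if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == pair:
            out.append(symbols[i] + symbols[i + 1])
            i += 2
        else:
            out.append(symbols[i])
            i += 1
    return tuple(out)


def train_bpe(corpus, num_merges):
    """Learn up to `num_merges` merges from a whitespace-split corpus."""
    # Each distinct word starts as a tuple of single characters.
    words = Counter(tuple(w) for w in corpus.split())
    merges = []
    for _ in range(num_merges):
        # Count adjacent symbol pairs, weighted by word frequency.
        pairs = Counter()
        for symbols, freq in words.items():
            for a, b in zip(symbols, symbols[1:]):
                pairs[(a, b)] += freq
        if not pairs:
            break
        best = max(pairs, key=pairs.get)  # most frequent pair
        merges.append(best)
        merged_words = Counter()
        for symbols, freq in words.items():
            merged_words[merge_pair(symbols, best)] += freq
        words = merged_words
    return merges


def encode_word(word, merges):
    """Tokenize an unseen word by replaying the learned merges in order."""
    symbols = tuple(word)
    for pair in merges:
        symbols = merge_pair(symbols, pair)
    return list(symbols)


merges = train_bpe("low low low lower lowest", num_merges=3)
print(merges)
print(encode_word("lowly", merges))
```

Note that new text is tokenized by replaying the merges in the order they were learned, not by recounting pair frequencies in the new text.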

GitHub Raj Pulapakura BPE: Implemented The Byte Pair Encoding Sub

When developing a simple tokenizer, whether we should encode whitespace as separate characters or just remove it depends on the application and its requirements.

I'll specifically try to cover the byte pair encoding (BPE) algorithm, which is at the core of modern tokenizers and hence a foundational layer of LLMs. What is a tokenizer and why does it matter?

GPT-2's pre-tokenization pattern has been refined in later models. GPT-4 uses a more sophisticated pattern that handles apostrophes, numbers, and whitespace more carefully, and also limits the length of digit sequences to avoid learning overly specific number tokens.

We'll embark on a deep dive, not only to grasp how BPE functions, from the initial training phase to the final tokenization step, but also to rectify a widespread misconception about how BPE is applied to new, unseen text.
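
To illustrate what a pre-tokenization pattern does with whitespace in code, here is a simplified, ASCII-only approximation of the GPT-2 style split, written with Python's standard `re` module. The published pattern uses Unicode letter and number classes, which need the third-party `regex` package, so treat this as a sketch under that simplification; `PRETOKENIZE` and `pre_tokenize` are hypothetical names.

```python
import re

# Simplified, ASCII-only approximation of a GPT-2 style pre-tokenizer.
# Assumption: [A-Za-z] and [0-9] stand in for the Unicode letter and
# number classes of the original pattern.
PRETOKENIZE = re.compile(
    r"'s|'t|'re|'ve|'m|'ll|'d"    # common English contractions
    r"| ?[A-Za-z]+"               # a word, with an optional leading space
    r"| ?[0-9]+"                  # a number, with an optional leading space
    r"| ?[^\sA-Za-z0-9]+"         # punctuation or operators
    r"|\s+(?!\S)"                 # whitespace run, leaving one char for the next word
    r"|\s+"                       # any remaining whitespace
)


def pre_tokenize(text):
    """Split text into chunks; BPE merges never cross chunk boundaries."""
    return PRETOKENIZE.findall(text)


code = "if x:\n    return 1"
chunks = pre_tokenize(code)
print(chunks)  # ['if', ' x', ':', '\n   ', ' return', ' 1']
```

The split is lossless, since joining the chunks gives back the original string. A single space attaches to the word that follows it, which is why tokens in the vocabulary so often begin with Ġ, while the rest of an indentation run becomes its own chunk.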

BPE Tokenizer Training and Tokenization Explained
