How Does AI Actually Work? Transformers Explained

Learn How AI Transformers Actually Work (IBM Research)

In this transformative era of AI, the significance of transformer models for aspiring data scientists and NLP practitioners cannot be overstated. Because transformers sit at the core of most recent technological leaps in the field, this article aims to explain how these models work. We’ll start with a brief chronology of some relevant natural language processing concepts, then go through the transformer step by step and uncover how it works.

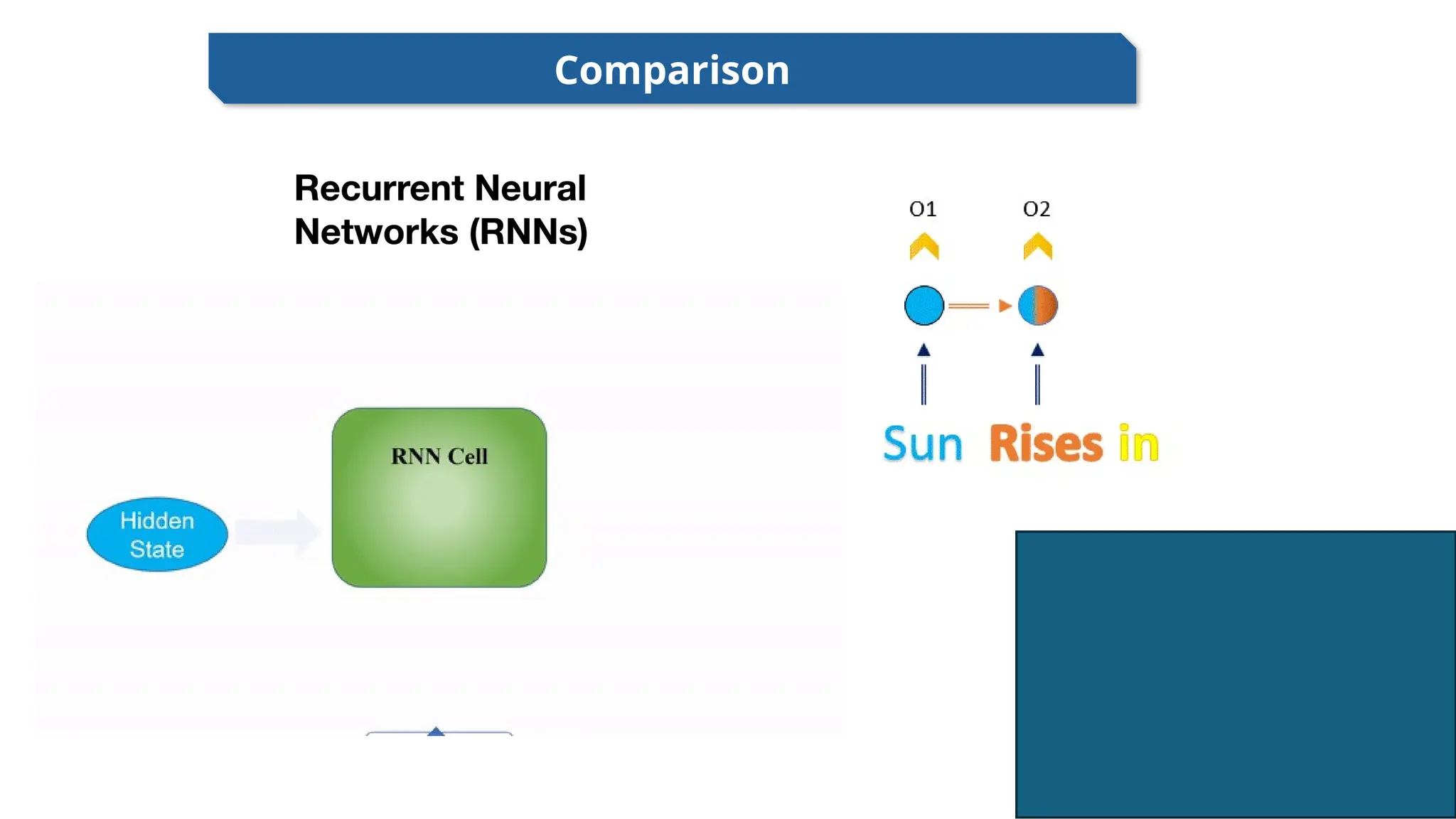

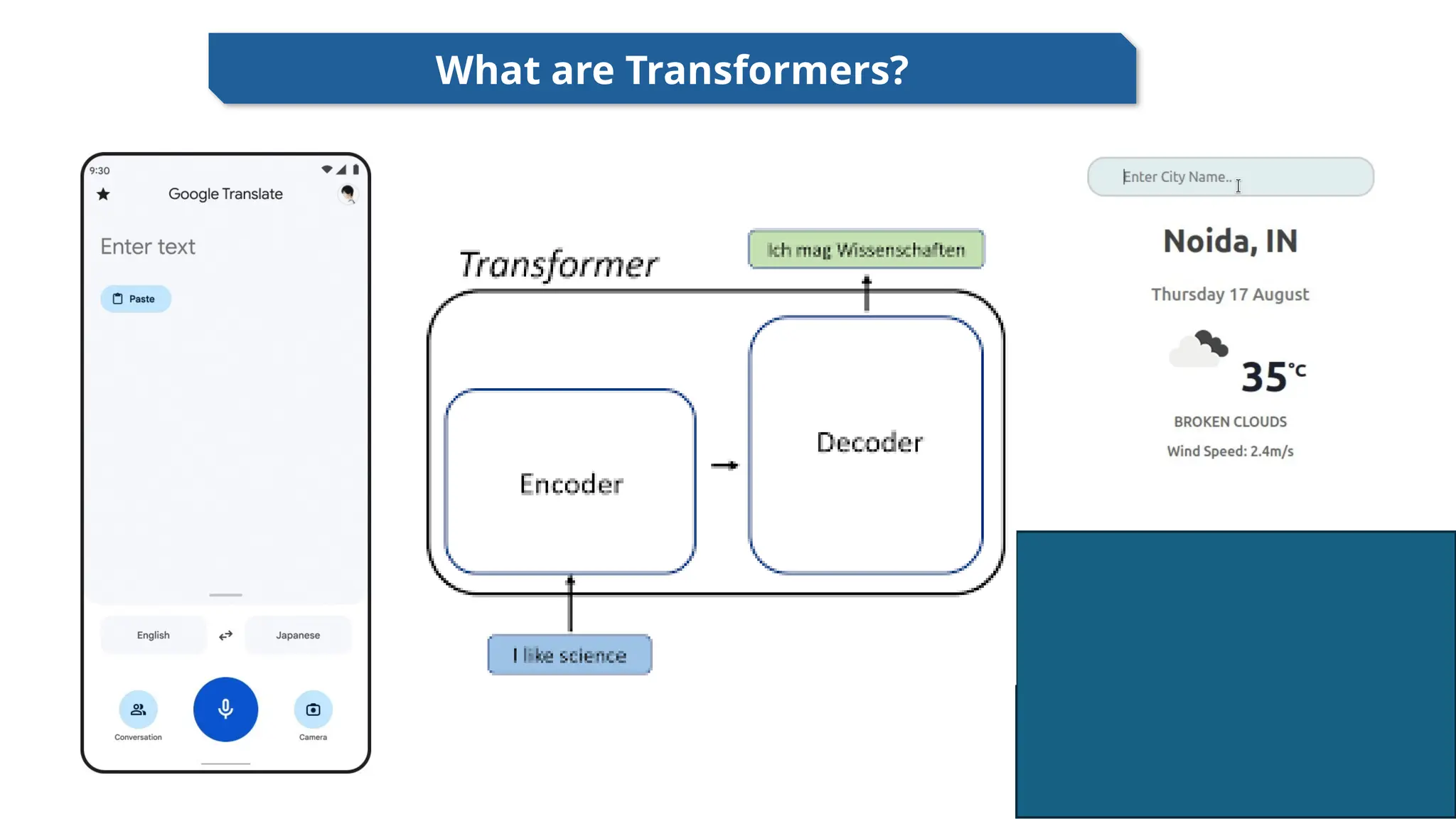

The transformer is a neural network architecture used for many machine learning tasks, especially in natural language processing and computer vision. It focuses on modeling relationships within data so that information can be processed more effectively. I want to offer a technically accurate but accessible look at how transformers function, from input to output, and at why their design marks such a radical leap from earlier AI systems.

A transformer turns an input sequence into an output sequence. It does this by learning context and tracking relationships between the components of the sequence. For example, consider the input sequence "What is the color of the sky?". To respond, the model has to relate "color" and "sky" to each other rather than treat each word in isolation.

Transformers are designed specifically for processing sequences of data, such as sentences, code, or DNA strands. Unlike older architectures that read text one word at a time, transformers look at every word simultaneously.
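
To make "looking at every word simultaneously" concrete, here is a minimal sketch of one common way this is done, scaled dot-product self-attention. It is an illustration under stated assumptions, not a full transformer: the word vectors are made up, and the learned query, key and value weight matrices of a real model are left out, so each word's vector plays all three roles.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))

def self_attention(vectors):
    """Simplified self-attention without learned weights (an assumption).

    Every vector is compared with every vector in the sequence, so each
    output is a weighted mix of the whole sequence.
    """
    dim = len(vectors[0])
    outputs, all_weights = [], []
    for query in vectors:
        # Similarity of this word to every word, scaled by sqrt(dim)
        scores = [dot(query, key) / math.sqrt(dim) for key in vectors]
        weights = softmax(scores)
        # Blend all vectors according to the attention weights
        mixed = [sum(w * v[i] for w, v in zip(weights, vectors))
                 for i in range(dim)]
        outputs.append(mixed)
        all_weights.append(weights)
    return outputs, all_weights

# Hypothetical 2-dimensional vectors, invented for this example
toy_vectors = {"color": [1.0, 0.0], "sky": [0.9, 0.1], "the": [0.0, 1.0]}
words = list(toy_vectors)
outputs, weights = self_attention([toy_vectors[w] for w in words])

for word, row in zip(words, weights):
    print(word, [round(w, 2) for w in row])
```

Each printed row shows how much one word attends to every word in the sequence. With these made-up vectors, "color" puts more weight on "sky" than on "the", because their vectors point in similar directions.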

Transformers Explained at August Wiest Blog

What is a transformer model? The transformer model is a type of neural network architecture that excels at processing sequential data and is most prominently associated with large language models (LLMs). Transformers have revolutionized the field of AI with their scalability and versatility, leading to the development of large-scale pretrained models, often referred to as foundation models.

The transformer is the core architecture behind modern AI, powering models like ChatGPT and Gemini. Introduced in 2017, it revolutionized how AI processes information. The same architecture is used both for training on massive datasets and for inference, when the model generates outputs.

In deep learning, the transformer is a family of artificial neural network architectures based on the multi-head attention mechanism, in which text is converted to numerical representations called tokens, and each token is converted into a vector via lookup from a word embedding table. [1]
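
The token and embedding steps in that definition can be sketched in a few lines. This is a toy illustration under loud assumptions: the whitespace tokenizer, the tiny vocabulary, and the randomly filled `embedding_table` are all hypothetical stand-ins. Real models use learned subword tokenizers and embedding tables whose values are learned during training.

```python
import random

sentence = "what is the color of the sky ?"

# Hypothetical toy vocabulary: one id per distinct word, in sorted order
vocab = {word: idx for idx, word in enumerate(sorted(set(sentence.split())))}

def tokenize(text):
    """Map each whitespace-separated word to its integer token id."""
    return [vocab[word] for word in text.split()]

EMBED_DIM = 4  # assumed vector size, tiny for readability
rng = random.Random(0)

# Random stand-in for a learned embedding table: one row per token id
embedding_table = [[rng.uniform(-1.0, 1.0) for _ in range(EMBED_DIM)]
                   for _ in vocab]

token_ids = tokenize(sentence)
# The "lookup" is plain indexing: token id -> row of the table
vectors = [embedding_table[token_id] for token_id in token_ids]

print(token_ids)
print(len(vectors), "vectors of size", len(vectors[0]))
```

Both occurrences of "the" map to the same token id and therefore to the same vector. Telling them apart by position and context is the job of later stages, such as the attention mechanism.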

