How Context Windows Actually Work

This is the story of context windows, the invisible limit that shapes every conversation you have with AI. It is Episode 4 of the "How AI Actually Works" course. For AI leaders, product managers, and engineers, understanding how context windows actually work, and why they can't scale indefinitely, is critical to building real-world AI systems. This article breaks down how context windows are defined by the math of attention, why scaling them hits hard limits, and which engineering innovations extend them.

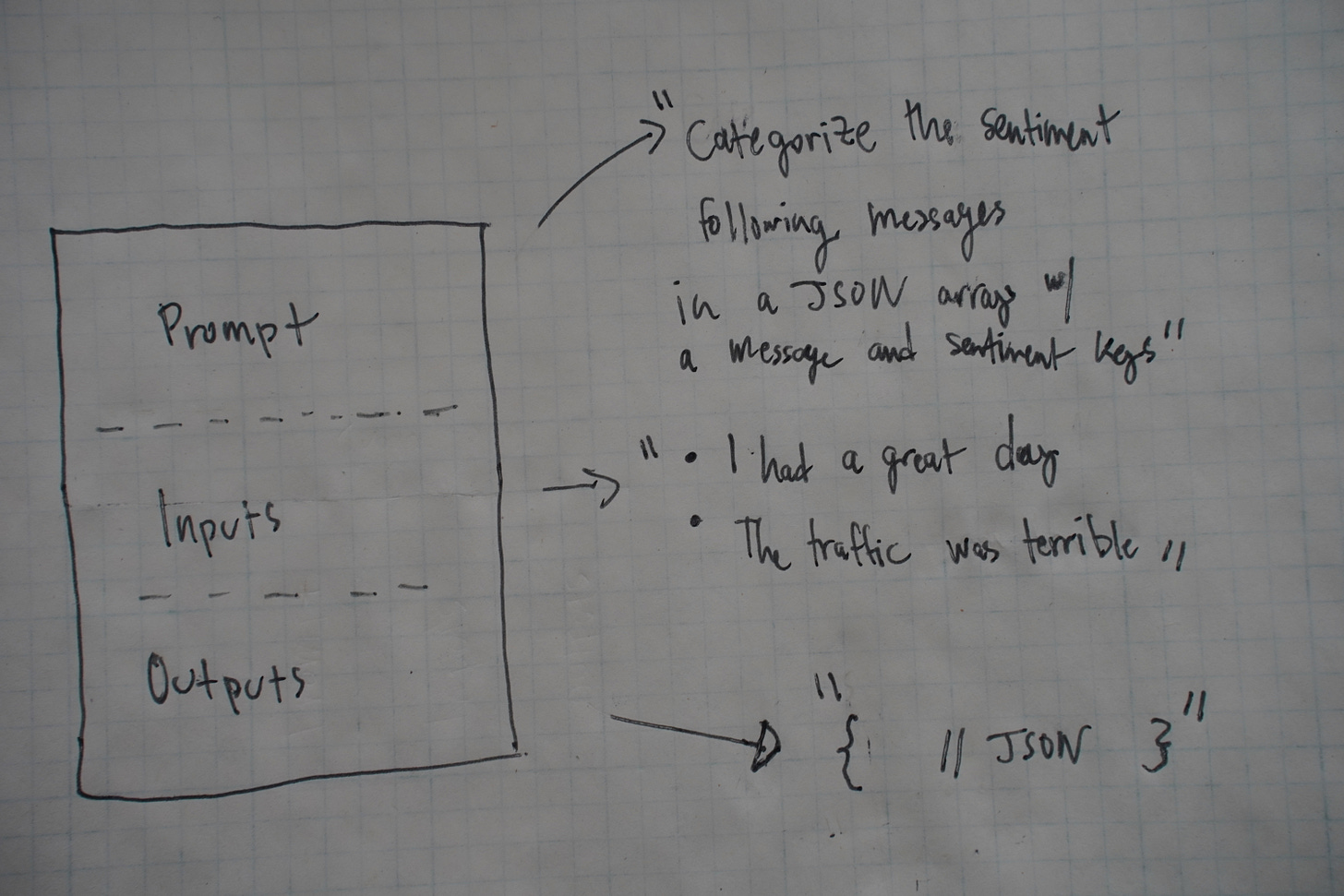

Larger context windows improve performance but come at a cost in compute, memory, and latency. In standard self-attention every token attends to every other token, so attention compute grows roughly quadratically with context length. Smaller windows are more efficient but limit functionality. The key is to design systems that work within these constraints.

An LLM's context window can be thought of as its working memory. It determines how long a conversation the model can carry on without forgetting details from earlier in the exchange, and it sets the maximum size of the documents or code samples the model can process at once. As models become stronger and context sizes increase, understanding how these windows work becomes key to building reliable and scalable AI systems. In this guide, we'll walk through the basics of context windows, the trade-offs of expanding them, and strategies for using them effectively.

The context window is the total number of tokens a model can see at once during a single forward pass. This includes everything: the system prompt, the conversation history, any documents you've retrieved, the current user message, and space for the model's response.
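Because every part of the request shares one token budget, it can help to do the arithmetic before calling a model. The following is a minimal sketch, not any library's API: `count_tokens` and `remaining_for_response` are hypothetical helpers, and splitting on whitespace is an assumed stand-in for a real subword tokenizer, which typically produces more tokens than there are words.

```python
# Minimal sketch of context-window budgeting.
# ASSUMPTION: count_tokens is a hypothetical whitespace tokenizer standing in
# for the model's real (subword) tokenizer.


def count_tokens(text: str) -> int:
    """Hypothetical token counter: one token per whitespace-separated word."""
    return len(text.split())


def remaining_for_response(
    window: int,
    system_prompt: str,
    history: list[str],
    retrieved_docs: list[str],
    user_message: str,
) -> int:
    """Return how many tokens are left for the model's reply.

    The system prompt, conversation history, retrieved documents, and the
    current user message all share the same window. A negative result means
    the input alone already overflows it.
    """
    used = count_tokens(system_prompt)
    used += sum(count_tokens(m) for m in history)
    used += sum(count_tokens(d) for d in retrieved_docs)
    used += count_tokens(user_message)
    return window - used


left = remaining_for_response(
    window=50,
    system_prompt="You are a helpful assistant.",  # 5 tokens
    history=["Hi there", "Hello! How can I help?"],  # 2 + 5 tokens
    retrieved_docs=["Context windows limit how much text a model sees."],  # 9 tokens
    user_message="Summarize the document.",  # 3 tokens
)
print(left)  # 26 tokens left for the response
```

If the result is small or negative, something has to give: fewer retrieved documents, a shorter history, or a lower cap on the response length.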

The guide also covers why context windows exist, why context length matters for AI agents, and how to make smart architectural decisions when choosing between models with different context capabilities.

Put simply, a context window is the maximum amount of information an AI model can process at one time, in a single prompt. Like human short-term memory, it limits how much an LLM can "look" at simultaneously. Everything you send to the model and everything it generates back counts against this limit: your prompt, the conversation history, the system instructions, and the model's own response all have to fit. Anything beyond the window is invisible to the model. It doesn't "remember" that text; it never sees it.
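One common way to keep a long conversation inside the window is to drop the oldest turns first. Here is a minimal sketch assuming a hypothetical `fit_history` helper and the same crude whitespace tokenizer as an assumed stand-in for a real one; production systems would count tokens with the model's own tokenizer.

```python
# Minimal sketch of a sliding-window history policy.
# ASSUMPTION: count_tokens and fit_history are hypothetical helpers; the
# whitespace tokenizer is a stand-in for the model's real tokenizer.


def count_tokens(text: str) -> int:
    """Hypothetical token counter: one token per whitespace-separated word."""
    return len(text.split())


def fit_history(history: list[str], budget: int) -> list[str]:
    """Keep the most recent messages whose total token count fits in budget.

    Messages are dropped from the oldest end. Anything dropped is absent
    from the next request, so the model never sees it.
    """
    kept: list[str] = []
    used = 0
    for message in reversed(history):  # walk from newest to oldest
        cost = count_tokens(message)
        if used + cost > budget:
            break  # stop here so the kept messages stay contiguous
        kept.append(message)
        used += cost
    kept.reverse()  # restore chronological order
    return kept


history = [
    "my name is Ada",                    # 4 tokens, oldest
    "nice to meet you Ada",              # 5 tokens
    "what is a context window",          # 5 tokens
    "it is the model's working memory",  # 6 tokens, newest
]
trimmed = fit_history(history, budget=12)
print(trimmed)  # only the last two messages survive; both mentions of "Ada" are gone
```

In this example, the turns that mention the name Ada fall outside the budget, so a model receiving the trimmed history could not recall it. Dropping from the oldest end is only one policy; summarizing older turns instead of discarding them is another option.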
