Higher Order Graph Convolutional Networks

GitHub Psychologyphd Hwgcn Higher Order Weighted Graph Convolutional

Current graph neural networks (GNNs) based on simplicial complexes (SCs) are burdened by high complexity and rigidity, and quantifying the strength of higher-order interactions remains challenging. One response is a higher-order flower-petals (FP) model that incorporates FP Laplacians into SCs. Separately, popular existing methods for semi-supervised node classification use high-order convolution to improve the learning ability of graph convolutional networks (GCNs).

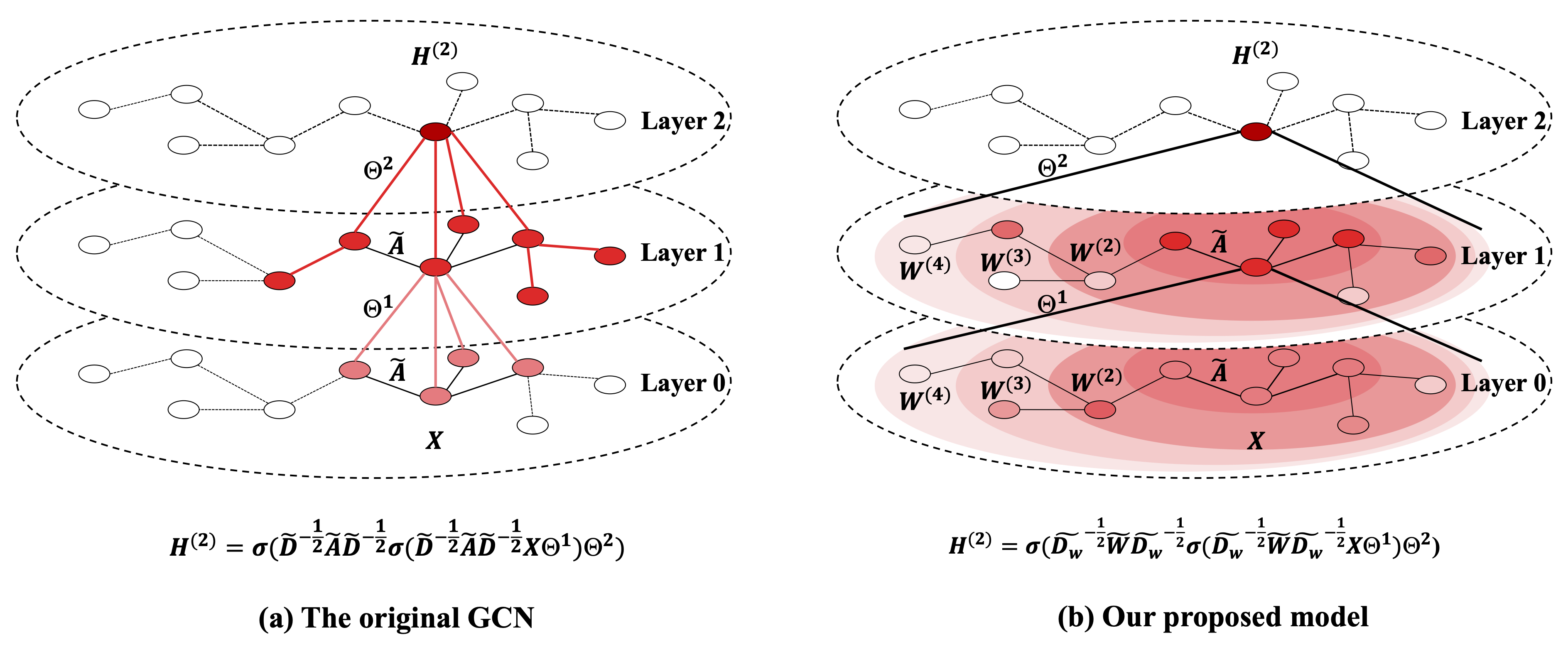

PDF Higher Order Graph Convolutional Networks

Grounded in flower-petals (FP) Laplacians, a higher-order graph convolutional network (HiGCN) is capable of discerning intrinsic features across varying topological scales. A related scalable approach to semi-supervised learning on graph-structured data is based on an efficient variant of convolutional neural networks that operates directly on graphs. Higher-order neighborhood information can significantly improve the accuracy of graph representation learning for classification; however, current higher-order graph convolutional networks have a large number of parameters and high computational complexity. To tackle these problems, a spatial-temporal higher-order graph convolutional network framework (ST-HN) has been proposed for hierarchical temporal network embedding (an overview of ST-HN is given in Fig. 1 of that paper).
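The text above does not spell out how a convolution "operates directly on graphs". As a minimal sketch, assuming the widely used propagation rule ReLU(Â H W) with the symmetrically normalized adjacency Â = D^(-1/2) (A + I) D^(-1/2), one layer might look like the following. The helper names `normalize_adjacency` and `gcn_layer`, the toy graph, and the random weights are illustrative assumptions, not taken from the source.

```python
import numpy as np

def normalize_adjacency(adj):
    """Return D^{-1/2} (A + I) D^{-1/2} for a dense adjacency matrix."""
    a_tilde = adj + np.eye(adj.shape[0])   # add self-loops
    deg = a_tilde.sum(axis=1)              # every degree is >= 1 because of self-loops
    d_inv_sqrt = 1.0 / np.sqrt(deg)
    return a_tilde * d_inv_sqrt[:, None] * d_inv_sqrt[None, :]

def gcn_layer(a_hat, features, weight):
    """One propagation step: ReLU(A_hat @ H @ W)."""
    return np.maximum(a_hat @ features @ weight, 0.0)

# Toy path graph 0 - 1 - 2 with 2-dimensional node features (illustrative values).
adj = np.array([[0.0, 1.0, 0.0],
                [1.0, 0.0, 1.0],
                [0.0, 1.0, 0.0]])
features = np.array([[1.0, 0.0],
                     [0.0, 1.0],
                     [1.0, 1.0]])
rng = np.random.default_rng(0)
weight = rng.normal(size=(2, 4))           # hypothetical, untrained weights

a_hat = normalize_adjacency(adj)
hidden = gcn_layer(a_hat, features, weight)
print(hidden.shape)
```

Each layer of this kind mixes information only between direct neighbors, which is why the higher-order variants discussed here look for ways to reach further in a single step.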

Higher Order Graph Convolutional Network With Flower Petals Laplacians

Another line of work introduces a general class of graph convolution networks that use weighted multi-hop motif adjacency matrices (Rossi, Ahmed, and Koh 2018) to capture higher-order neighborhoods in the graph.
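To make the idea of a weighted multi-hop motif adjacency matrix concrete, here is a small sketch that assumes the motif is a triangle and the graph is undirected and unweighted; the cited work covers more general motifs, and the function names below are hypothetical. For triangles, the weight of an edge (i, j) is the number of triangles containing it, which equals the number of common neighbors of i and j and can be read off from (A @ A) masked by A.

```python
import numpy as np

def triangle_motif_adjacency(adj):
    """Weight each existing edge by the number of triangles it belongs to."""
    common_neighbors = adj @ adj      # entry (i, j) counts common neighbors of i and j
    return common_neighbors * adj     # keep counts only where an edge actually exists

def multi_hop(motif_adj, hops):
    """k-hop version of the motif adjacency, taken here as a plain matrix power."""
    return np.linalg.matrix_power(motif_adj, hops)

# Toy graph: edge 0-1 is shared by triangles {0, 1, 2} and {0, 1, 3}.
adj = np.array([[0.0, 1.0, 1.0, 1.0],
                [1.0, 0.0, 1.0, 1.0],
                [1.0, 1.0, 0.0, 0.0],
                [1.0, 1.0, 0.0, 0.0]])

motif_adj = triangle_motif_adjacency(adj)
two_hop = multi_hop(motif_adj, 2)
print(motif_adj)
print(two_hop)
```

In this toy graph, nodes 2 and 3 are not adjacent, so their one-hop motif weight is zero, but they become connected in the two-hop matrix through the triangles they share with nodes 0 and 1.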

High Order Topology Enhanced Graph Convolutional Networks For Dynamic

A further proposal is a graph convolutional layer that mixes multiple powers of the adjacency matrix, allowing it to learn delta operators; the layer has the same memory footprint and computational complexity as a GCN. For dynamic graphs, a high-order improved graph convolutional network uses a two-branch method to model both high-order and pairwise relationships between nodes.
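A layer that mixes multiple powers of the adjacency matrix can be sketched as follows. This is an illustration under stated assumptions rather than the authors' implementation: it assumes the symmetric normalization with self-loops, one weight matrix per power, a ReLU nonlinearity, and column-wise concatenation of the per-power outputs. The names `mixed_power_layer` and `weights` are hypothetical.

```python
import numpy as np

def normalize_adjacency(adj):
    """Return D^{-1/2} (A + I) D^{-1/2} for a dense adjacency matrix."""
    a_tilde = adj + np.eye(adj.shape[0])
    d_inv_sqrt = 1.0 / np.sqrt(a_tilde.sum(axis=1))
    return a_tilde * d_inv_sqrt[:, None] * d_inv_sqrt[None, :]

def mixed_power_layer(a_hat, features, weights):
    """Concatenate ReLU(A_hat^j @ H @ W_j) over the powers j given as keys of `weights`."""
    outputs = []
    propagated = features                      # equals A_hat^0 @ H
    for power in range(max(weights) + 1):
        if power in weights:
            outputs.append(np.maximum(propagated @ weights[power], 0.0))
        propagated = a_hat @ propagated        # advance to the next power without forming A_hat^j
    return np.concatenate(outputs, axis=1)

# Toy path graph 0 - 1 - 2 (illustrative values).
adj = np.array([[0.0, 1.0, 0.0],
                [1.0, 0.0, 1.0],
                [0.0, 1.0, 0.0]])
features = np.array([[1.0, 0.0],
                     [0.0, 1.0],
                     [1.0, 1.0]])
rng = np.random.default_rng(0)
weights = {j: rng.normal(size=(2, 2)) for j in (0, 1, 2)}  # hypothetical, untrained

a_hat = normalize_adjacency(adj)
mixed = mixed_power_layer(a_hat, features, weights)
print(mixed.shape)
```

Because the powers are applied by repeated multiplication with the features, the sketch never materializes a dense power of the adjacency matrix. A following layer can then combine the per-power column blocks, for example by subtracting one from another, which is one way to read the "delta operators" mentioned above.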
