Hardware Acceleration for AI at the Edge

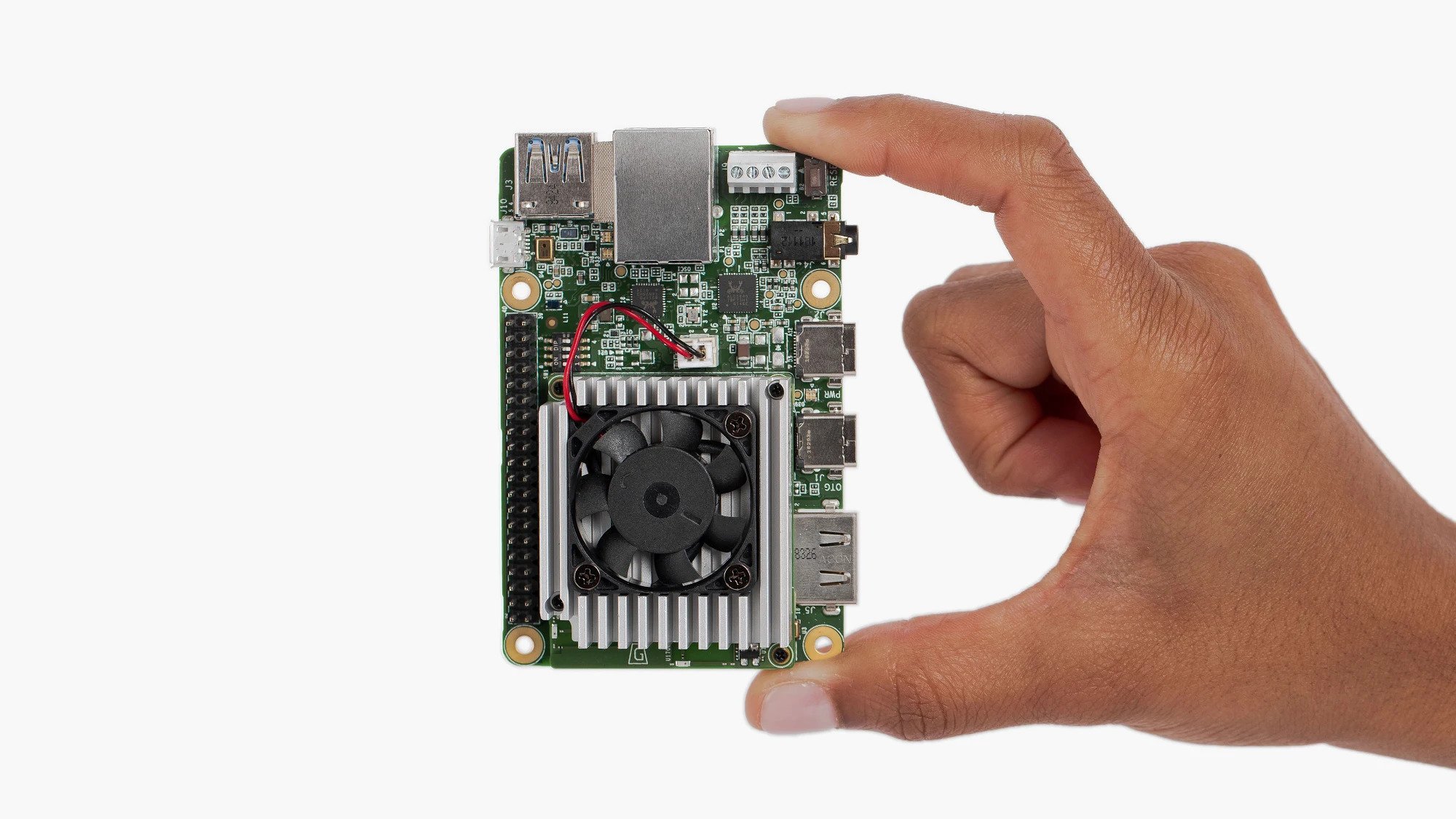

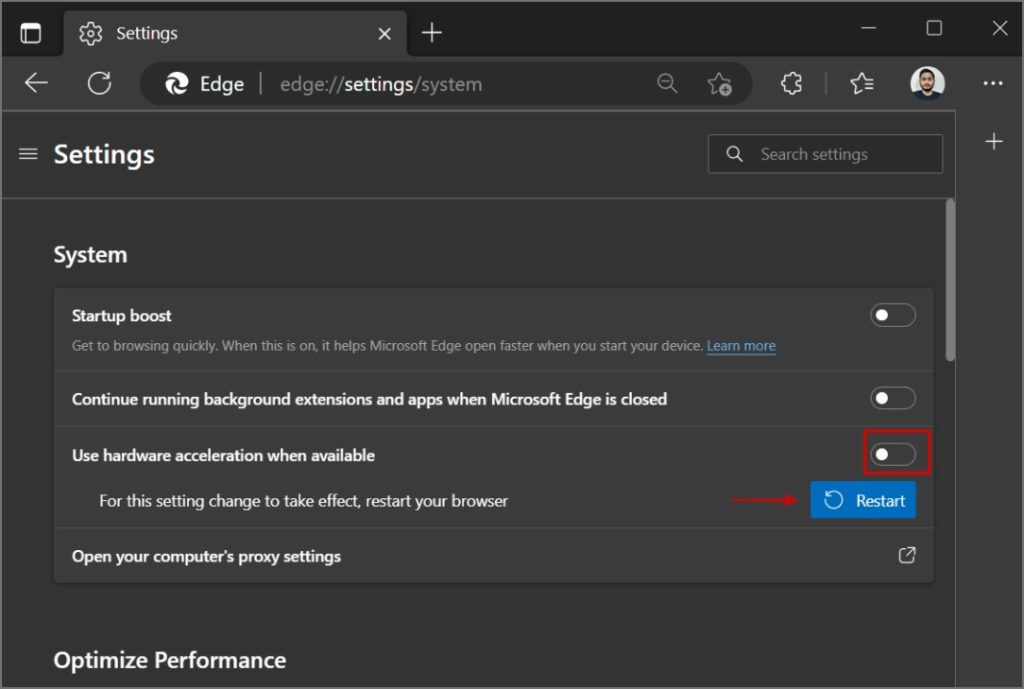

Edge AI Hardware: STMicroelectronics STM32 AI

Harnessing ML algorithms at the edge will require more computing power than current platforms offer. One paper addresses this with an FPGA system-on-chip (SoC) based architecture that supports the acceleration of ML algorithms in an edge environment.

What is hardware acceleration? The term refers to a device offloading some of its computing tasks from the CPU to hardware components that are specifically designed to run certain types of applications and workloads faster.

AI Hardware: Edge Machine Learning Inference (Viso AI)

One paper presents the design, implementation, and evaluation of a custom framework for hardware acceleration of AI inference at the edge, emphasizing energy efficiency and hardware-software co-design.

NPUs and DSPs accelerate processing on edge devices, which makes them essential for real-time applications. Hardware acceleration with NPUs and DSPs helps run AI tasks faster and more efficiently on edge devices such as smartphones, drones, and IoT sensors.

Another paper presents a comprehensive investigation into the deployment of customized digital hardware for accelerating AI inference on edge devices. It evaluates the performance trade-offs among ASICs, FPGAs, and NPUs through benchmarking experiments involving representative edge AI workloads. It also reviews case studies of hardware specially designed for fast and energy-efficient AI computation, covering different types of AI algorithms and hardware platforms.

Hardware Acceleration at the Edge (Jack Belser Blog)

One platform described in this context delivers a flexible software stack across a common high-performance hardware and software platform to accelerate data analysis and visualization workflows at the edge.

At its core, hardware acceleration for edge AI involves three complementary strategies: model optimization to reduce computational requirements, runtime optimization to maximize hardware utilization, and hardware-specific tuning to exploit architectural advantages.

One thing you really have to consider when bringing artificial intelligence to the edge is the hardware you will need to run these powerful algorithms. Ted Way from the Azure Machine Learning team joins Olivier on the IoT Show to discuss hardware acceleration at the edge for AI.

The motivation for developing specialized accelerators for AI is that specialized hardware (ASICs, FPGAs, etc.) can make AI faster and more energy efficient, for example at the edge.
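To make the "model optimization" strategy more concrete, here is a minimal sketch of symmetric per-tensor int8 quantization, one common way to reduce a model's computational and memory requirements before it runs on an accelerator. The source does not name a specific technique, so this choice is an assumption, and the function names `quantize_int8` and `dequantize` are hypothetical helpers written for illustration, not part of any library.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Map float weights to integers in [-127, 127] using a single scale.

    Illustrative helper (hypothetical name): symmetric, per-tensor quantization.
    """
    max_abs = max((abs(w) for w in weights), default=0.0)
    # Avoid division by zero for an all-zero (or empty) tensor.
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate float values from the integer representation."""
    return [v * scale for v in quantized]


# Example weights chosen only for illustration.
weights = [0.5, -1.27, 0.0, 1.0]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

# The rounding error per weight is bounded by half a quantization step.
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q, scale, max_error)
```

Each weight now fits in one byte instead of four, and integer arithmetic is what many NPUs and DSPs are built to execute efficiently. Production toolchains typically add calibration data and per-channel scales, which this sketch leaves out.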

