Apache Hadoop MapReduce

Hadoop MapReduce is a software framework for easily writing applications that process vast amounts of data (multi-terabyte data sets) in parallel on large clusters (thousands of nodes) of commodity hardware in a reliable, fault-tolerant manner. Among its more advanced features, counters allow the progress of a MapReduce job to be tracked in real time.
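To make the idea of counters concrete, here is a minimal in-memory sketch in plain Python. It does not use the Hadoop API; the function `map_with_counters` and the counter names (`records_read`, `blank_records`, `pairs_emitted`) are hypothetical and chosen only for illustration. The map step updates shared counters as it processes records, so progress can be inspected while the job is still running.

```python
from collections import Counter


def map_with_counters(lines, counters):
    """Emit (word, 1) pairs while recording progress in shared counters.

    The counter names are illustrative assumptions, not Hadoop built-ins.
    """
    for line in lines:
        counters["records_read"] += 1
        if not line.strip():
            # Track skipped input separately so data-quality issues are visible.
            counters["blank_records"] += 1
            continue
        for word in line.split():
            counters["pairs_emitted"] += 1
            yield word, 1


counters = Counter()
pairs = list(map_with_counters(["a b", "", "c"], counters))
print(pairs)
print(dict(counters))
```

Because the mapper is a generator, the counters grow as records are consumed, which loosely mirrors how a running job reports progress before it finishes.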

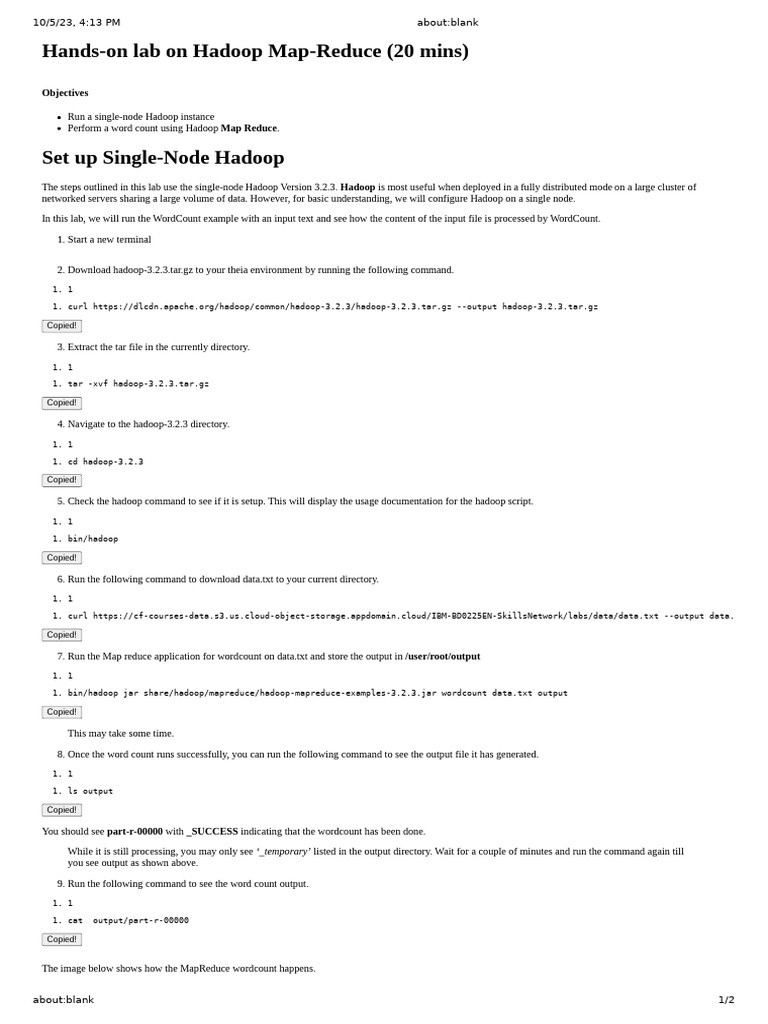

A brief on Big Data, Hadoop, and MapReduce (dated 31 07 2023) explains the workings of Hadoop MapReduce, detailing its components, such as InputFormat, InputSplits, Mapper, Combiner, Partitioner, and Reducer, along with their roles in processing data. Map is the first phase of processing and carries the complex logic; Reduce is the second phase and performs lightweight operations. If you can rewrite algorithms into maps and reduces, and your problem can be broken up into small pieces solvable in parallel, then Hadoop's MapReduce is the way to go for a distributed problem-solving approach to large datasets. "Running Hadoop on Ubuntu Linux (Single-Node Cluster)" describes how to set up a pseudo-distributed, single-node Hadoop cluster backed by the Hadoop Distributed File System (HDFS).
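The roles of the components named above can be sketched as a small in-memory word count in plain Python. This is a simplified illustration, not the Hadoop API: the function names (`make_splits`, `mapper`, `combiner`, `partitioner`, `reducer`, `run_job`) and the parameters `split_size` and `num_reducers` are assumptions made for this example, and the CRC32-based partitioner merely stands in for a hash partitioner.

```python
from collections import defaultdict
from zlib import crc32


def make_splits(lines, split_size):
    """Stand-in for InputFormat/InputSplits: chunk the input into fixed-size splits."""
    return [lines[i:i + split_size] for i in range(0, len(lines), split_size)]


def mapper(line):
    """Emit (word, 1) for every word in one input record."""
    for word in line.lower().split():
        yield word, 1


def combiner(pairs):
    """Pre-aggregate one map task's output locally to cut shuffle traffic."""
    local = defaultdict(int)
    for key, value in pairs:
        local[key] += value
    return list(local.items())


def partitioner(key, num_reducers):
    """Pick a reducer for a key; a deterministic hash keeps equal keys together."""
    return crc32(key.encode("utf-8")) % num_reducers


def reducer(key, values):
    """Sum all partial counts that arrived for one key."""
    return key, sum(values)


def run_job(lines, split_size=2, num_reducers=2):
    # One bucket per reducer, each mapping key -> list of values (the shuffle).
    buckets = [defaultdict(list) for _ in range(num_reducers)]
    for split in make_splits(lines, split_size):
        map_output = [pair for line in split for pair in mapper(line)]
        for key, value in combiner(map_output):
            buckets[partitioner(key, num_reducers)][key].append(value)
    result = {}
    for bucket in buckets:
        for key in sorted(bucket):  # each reducer processes its keys in sorted order
            word, total = reducer(key, bucket[key])
            result[word] = total
    return result


if __name__ == "__main__":
    print(run_job(["the quick brown fox", "the lazy dog", "the fox"]))
```

Each split is mapped and combined independently, which is what lets a real cluster run those steps in parallel; only the partitioned, pre-aggregated pairs need to move between nodes.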

In the initial MapReduce implementation, all keys and values were strings; users were expected to convert the types, if required, as part of the map and reduce functions. During a MapReduce job, Hadoop sends the map and reduce tasks to the appropriate servers in the cluster. The framework manages all the details of data passing, such as issuing tasks, verifying task completion, and copying data around the cluster between the nodes. MapReduce is a Hadoop framework for writing applications that can process vast amounts of data on large clusters. It can also be described as a programming model with which large datasets can be processed across computer clusters.
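The earlier point about string-only keys and values can be illustrated with a small plain-Python sketch. The record format (`city,temperature`) and the function names `map_temperature` and `reduce_max` are hypothetical; the sketch only shows where the type conversion would sit if everything crossing the map/reduce boundary is a string.

```python
def map_temperature(line):
    """Parse a 'city,temperature' record and emit key and value as strings."""
    city, temp = line.split(",")
    return city.strip(), temp.strip()


def reduce_max(key, values):
    """Convert string values to int, take the maximum, and emit a string again."""
    return key, str(max(int(v) for v in values))


key, value = map_temperature("Oslo, 12")
print(reduce_max(key, [value, "-3", "7"]))
```

Without the `int` conversion the reducer would compare the values as text, and `"7"` would sort above `"12"`, so the conversion inside the reduce function is what makes the result numerically correct.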

