Gradient Surgery for Multi-Task Learning

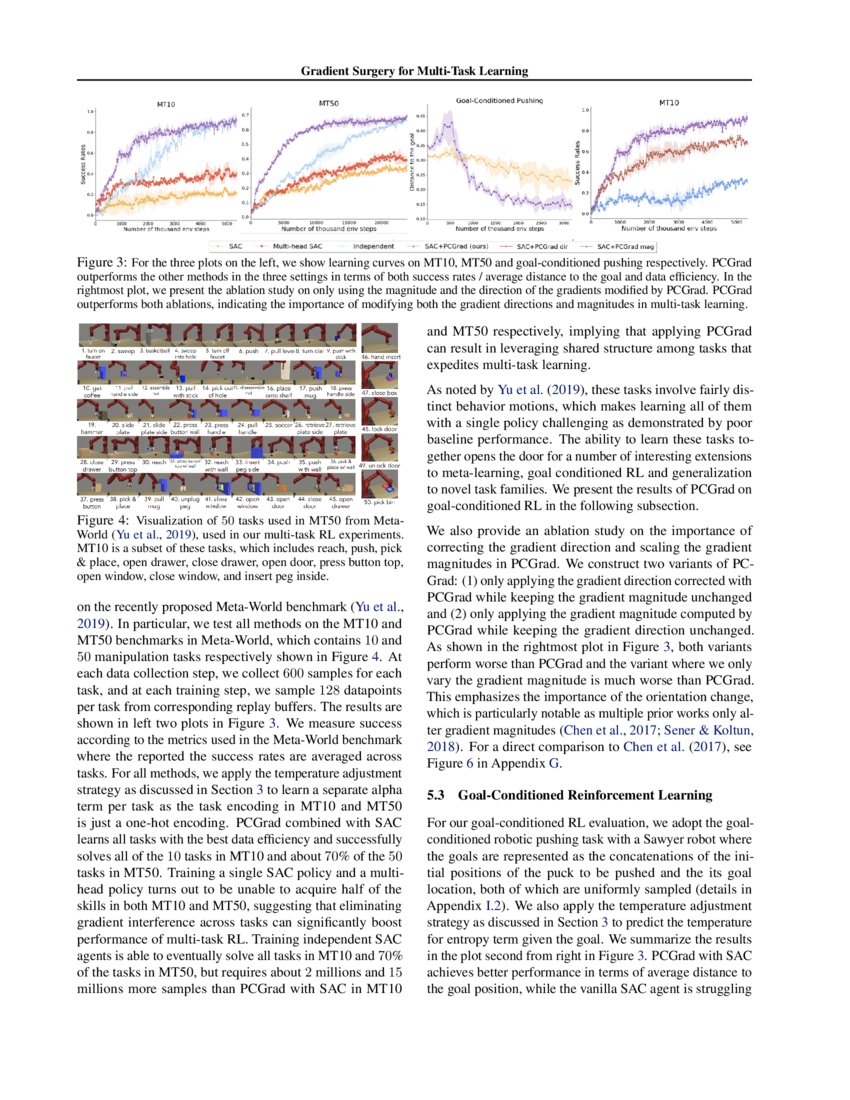

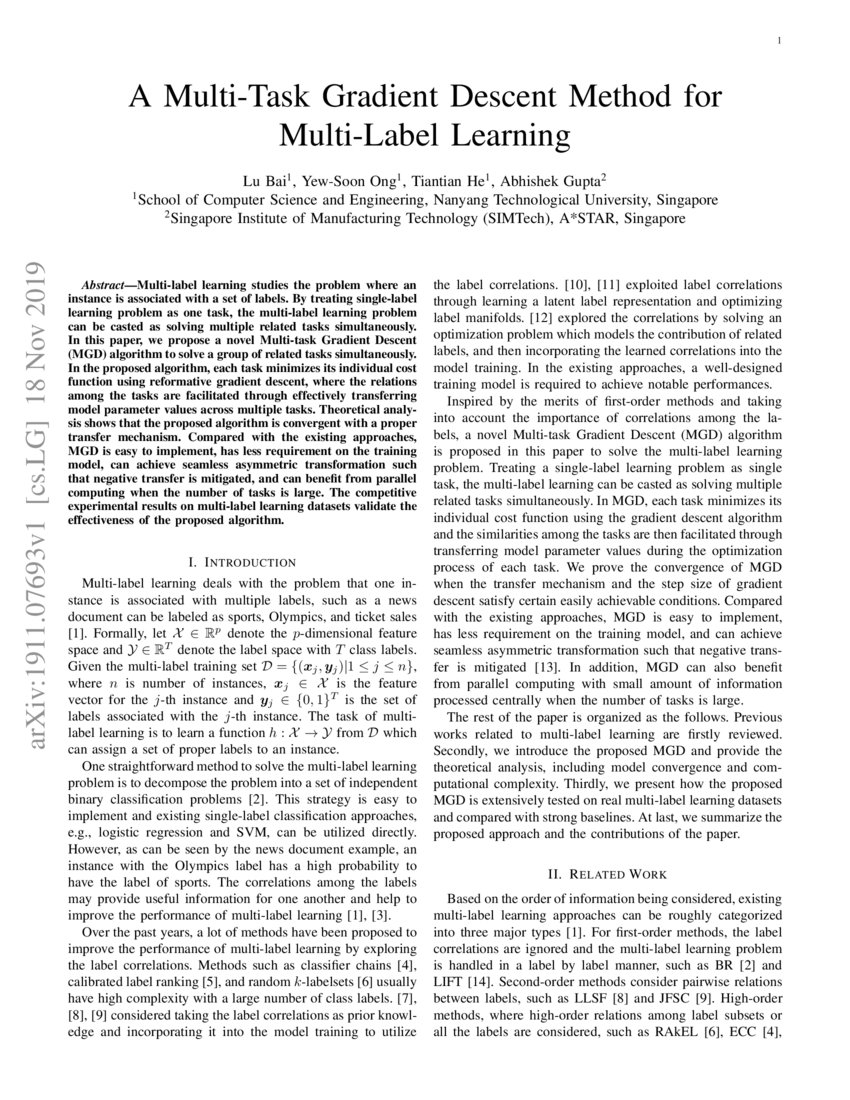

Yu et al. (2020), Gradient Surgery for Multi-Task Learning (PDF): In this work, we identify a set of three conditions of the multi-task optimization landscape that cause detrimental gradient interference, and develop a simple yet general approach for avoiding such interference between task gradients. We propose a form of gradient surgery that projects a task's gradient onto the normal plane of the gradient of any other task that has a conflicting gradient. On a series of challenging multi-task supervised and multi-task RL problems, this approach leads to substantial gains in efficiency and performance.
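The projection step described above can be sketched in a few lines of NumPy. This is a minimal illustration, not the authors' released code: the function name `pcgrad`, the flat-vector representation of each task gradient, and the choice to sum the projected gradients into one update are assumptions made for the example. Two gradients are treated as conflicting when their dot product is negative, and the conflicting component is removed by projecting onto the normal plane of the other task's gradient.

```python
import numpy as np


def pcgrad(task_grads, rng=None):
    """Combine per-task gradients after projecting away conflicting components.

    task_grads: sequence of 1-D arrays, one flattened gradient per task
    (hypothetical interface chosen for this sketch).
    Returns the sum of the projected task gradients.
    """
    rng = np.random.default_rng() if rng is None else rng
    grads = [np.asarray(g, dtype=float) for g in task_grads]

    projected = []
    for i, g_i in enumerate(grads):
        g_pc = g_i.copy()
        # Visit the other tasks in random order.
        others = [j for j in range(len(grads)) if j != i]
        for j in rng.permutation(others):
            g_j = grads[int(j)]
            dot = g_pc @ g_j
            # A negative dot product means the two gradients conflict.
            if dot < 0.0:
                # Project g_pc onto the normal plane of g_j.
                g_pc -= dot / (g_j @ g_j) * g_j
        projected.append(g_pc)

    return np.sum(projected, axis=0)


if __name__ == "__main__":
    g1 = np.array([1.0, 0.0])
    g2 = np.array([-1.0, 1.0])  # conflicts with g1 (dot product is -1)
    print(pcgrad([g1, g2]))
```

With only two tasks the random ordering has no effect, so the result is deterministic; with more tasks, the order in which projections are applied can change the final update, which is why the order is shuffled rather than fixed.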

Gradient Surgery for Multi-Task Learning (DeepAI): Summary and contributions: the paper proposes a gradient-based method for tackling the multi-task learning problem, in which "conflicting" gradients are detected and altered so that they do not harm the common objective. Motivated by the insight that gradient interference causes optimization challenges, the authors develop a simple and general approach for avoiding interference between gradients from different tasks, by altering the gradients through a technique they refer to as "gradient surgery".

Free video: Gradient Surgery for Multi-Task Learning, from Yannic. Related implementations and follow-up work: one repository contains code for Gradient Surgery for Multi-Task Learning in TensorFlow v1.0 (PyTorch implementation forthcoming). One issue with multi-task learning is that gradients from different tasks can destructively interfere; the major contribution of one related paper is a custom implementation of gradient surgery as described in Yu et al. (2020), which addresses the problem of interfering gradients. Another work introduces a novel gradient surgery method, Similarity-Aware Momentum Gradient Surgery (SAM-GS), which provides an effective and scalable approach based on a gradient magnitude similarity measure to guide the optimisation process. A novel gradient surgery method addresses challenges in multi-task learning by mitigating detrimental gradient interference, leading to improved efficiency and performance.

